In an earlier article, we tried out some of the tools that turbo-charge mainly CPU-bound Python programs without requiring much modification of the source code. Another way to boost run-time performance is to leverage the run-time parallelism provided by modern multi-core machines with SMP-enabled operating systems. This article will get you started with parallel computing in Python on multi-core/multi-processor systems and computing clusters.

If you can design your Python application so that independent parts of it run in parallel on multi-core machines, application performance will improve considerably. If your existing Python applications are multi-threaded, or you write new code to exploit concurrency, you would expect that code to run in parallel on multi-core machines. Unfortunately, the current CPython implementation can’t provide run-time parallelism for Python threads on multi-core machines.

This limitation is due to the Global Interpreter Lock (GIL); we discussed the details in an earlier article. However, I’ll present ways to conquer the GIL issue, so you can get started with parallel computing.

First, you need some understanding of parallel computing. A good starting point is this Wikipedia article. I used the Ubuntu 9.10 x86_64 desktop distribution, with Python 2.7, to test the code in this article and to generate the screenshots.

Overcoming the GIL to extract performance

The GIL allows only one thread at a time to execute Python bytecode in CPython, which prevents threads from running in parallel on multi-core machines. The way to overcome this limitation is to use sub-processes in place of threads in your Python code. Although the sub-process model has process-creation and inter-process communication (IPC) overheads, it frees you from the GIL issue, and is adequate most of the time.

If this seems complicated, don’t worry; most of the time, you won’t have to deal with the arcane lower-level details of sub-process creation, and the IPC between them. All the alternatives we discuss here are internally based upon this multi-process model; the only difference between them is the level of abstraction provided to parallelise your Python code.
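
To make the difference between the two models concrete, here is a minimal sketch that runs the same CPU-bound function first in two threads and then in two sub-processes, and prints the elapsed time for each. The function name burn, the helper timed and the workload size are illustrative choices, not from the original scripts, and the code is written to run on both Python 2.7 and Python 3. Actual timings depend on your machine, so none are shown.

```python
from __future__ import print_function

import time
from multiprocessing import Process
from threading import Thread


def burn(n):
    """CPU-bound busy work: sum of the squares of 0..n-1."""
    total = 0
    for i in range(n):
        total += i * i
    return total


def timed(worker_cls, jobs, n):
    """Run `jobs` workers of the given class and return elapsed seconds."""
    workers = [worker_cls(target=burn, args=(n,)) for _ in range(jobs)]
    start = time.time()
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    return time.time() - start


if __name__ == '__main__':
    n = 2000000  # illustrative workload size
    print('threads:   %.2f s' % timed(Thread, 2, n))
    print('processes: %.2f s' % timed(Process, 2, n))
```

On a multi-core machine under CPython you would typically expect the sub-process run to finish sooner, because the two threads take turns holding the GIL while the two processes each have their own interpreter.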

Multi-threading in the multi-processing way

The easiest way to turn Python code that’s designed with threading into an application that uses multi-core systems is the built-in multiprocessing module, which is included in Python 2.6 onwards. Its API is similar to that of the threading module, so you need to make very few changes. Though there’s some lower-level work involved, there is no need to install any additional Python components.

You use the multiprocessing module’s Process class to create a new sub-process object, passing it the function to run (as target) and a tuple of arguments (as args). The object’s start method then executes that function in the sub-process. There is also a join method, to wait for the completion of your sub-process. Save the code shown below into basic.py, and run it (python basic.py, or make the script executable with chmod u+x basic.py and run ./basic.py). You can clearly see the different outputs generated by the different sub-processes that are created.

    #! /usr/bin/env python2.7

    from multiprocessing import Process

    def test(name):
        print ' welcome ' + name + ' to multiprocessing!'

    if '__main__' == __name__:
        p1 = Process(target = test, args = ('Rich',))
        p2 = Process(target = test, args = ('Nus',))
        p3 = Process(target = test, args = ('Geeks',))

        p2.start()
        p1.start()
        p3.start()

        p1.join()
        p2.join()
        p3.join()

To verify that you have multiple sub-processes, run waste.py and counting.py (shown below) and see how different sub-processes print to the console in a jumbled manner. The counting.py script has a delay after printing, so you can see that the sub-processes’ output is slower.

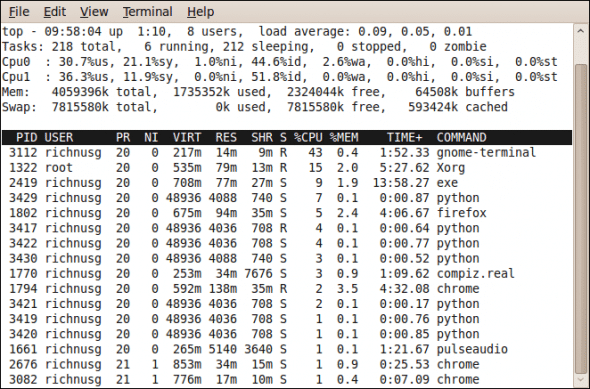

I also captured the top command’s output (Figure 1) while running waste.py on my dual-core machine; it clearly shows that multiple Python sub-processes are running. It also shows the balanced distribution of the sub-processes across multiple cores, in the form of the cores’ idle percentage.

    #! /usr/bin/env python2.7

    # === counting.py ===

    from time import sleep
    from multiprocessing import Process

    def count(number, label):
        for i in xrange(number):
            print ' ' + str(i) + ' ' + label
            sleep((number - 15)/10.0)

    if '__main__' == __name__:
        p1 = Process(target = count, args = (20, ' Rich',))
        p2 = Process(target = count, args = (25, ' Nus',))
        p3 = Process(target = count, args = (30, ' Geeks',))

        p1.start()
        p2.start()
        p3.start()

    #! /usr/bin/env python2.7

    # === waste.py ===

    from multiprocessing import Process

    def waste(id):
        while 1:
            print str(id) + ' Total waste of CPU cycles!'

    if '__main__' == __name__:
        for i in xrange(20):
            Process(target = waste, args = (i,)).start()

If you need thread-style locking, multiprocessing provides the Lock class too; you can use the acquire and release methods of the Lock object. There is also a Queue class and a Pipe function, for communication between sub-processes. The Queue is both thread- and process-safe, so you don’t have to worry about locking and unlocking while working with it.

The Pipe() function returns a pair of connection objects that are connected by a pipe, which is two-way by default. If you want a pool of worker sub-processes, then you can use the Pool class, which offloads work to created sub-processes in a few different ways. Run miscellaneous.py (shown below) to see some of these concepts in action.

    #! /usr/bin/env python2.7

    # === miscellaneous.py ===

    import os
    from multiprocessing import Process, Lock, Queue, Pipe, cpu_count

    def lockfunc(olck, uilaps):
        # hold the lock while printing, so that lines from different
        # sub-processes are not interleaved
        olck.acquire()
        for ui in xrange(4*uilaps):
            print ' ' + str(ui) + ' lock acquired by pid: ' + str(os.getpid())
        olck.release()

    def queuefunc(oque):
        oque.put(" message in Queue: LFY rockz!!!")

    def pipefunc(oc):
        oc.send(" message in Pipe: FOSS rulz!!!")
        oc.close()

    if '__main__' == __name__:
        uicores = cpu_count()
        olck = Lock()
        oque = Queue()
        op, oc = Pipe()

        # sub-processes that compete for the same lock
        for ui in xrange(1, 2*uicores):
            Process(target = lockfunc, args = (olck, ui,)).start()

        # receive one message through the queue
        opq = Process(target = queuefunc, args = (oque,))
        opq.start()
        print
        print oque.get()
        print
        opq.join()

        # receive one message through the pipe
        opp = Process(target = pipefunc, args = (oc,))
        opp.start()
        print op.recv()
        print
        opp.join()

The multiprocessing package provides a lot of other functionality too; please refer to this documentation.
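
The Pool class mentioned earlier is not exercised by miscellaneous.py, so here is a minimal sketch of it. The function cube and the pool size of 4 are illustrative assumptions; the code is written to run on both Python 2.7 and Python 3, which is why it closes the pool explicitly instead of using a with statement.

```python
from __future__ import print_function

from multiprocessing import Pool


def cube(x):
    """Work item executed inside a worker sub-process."""
    return x ** 3


if __name__ == '__main__':
    pool = Pool(processes=4)  # illustrative size; defaults to cpu_count()
    try:
        # map() splits the input across the workers and keeps the order
        print(pool.map(cube, range(10)))
        # apply_async() submits a single call and returns a result handle
        handle = pool.apply_async(cube, (7,))
        print(handle.get(timeout=10))
    finally:
        pool.close()
        pool.join()
```

The map call blocks until every result is available, while apply_async returns immediately and lets you collect the result later with get.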

Parallelism via the pprocess module

The external pprocess Python module is somewhat inspired by the thread Python module, and provides a simple API and different styles for parallel programming. It is very similar to the multiprocessing package, so I will only touch on important concepts; readers are encouraged to explore it further. The module provides lower-level constructs like threading- and forking-like parallelism, as well as higher-level constructs like pmap, channel, Exchange, MakeParallel, MakeReusable, etc.

To use pprocess, download the latest version. To install it in a terminal window, uncompress it with tar zxvf pprocess-version.tar.gz. Change to the extracted folder with cd pprocess-version (replace version with the version number). With root privileges (use sudo or su) run python setup.py install. Verify if the installation has been successful with python -m pprocess.

The easiest way to parallelise code that uses the map function to execute a function over a list is to use pmap, which does the same thing, but in parallel. There is an examples subdirectory in the pprocess sources that contains map-based simple.py, and the pmap version, simple_pmap.py. On execution (see Figure 2), the pmap version was roughly 10 times faster than the serial map version, clearly showing the performance boost from parallel execution on multiple cores.
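
As a rough sketch of that change (this is not the simple.py or simple_pmap.py from the pprocess sources), the code below swaps map for pprocess.pmap. The function slow_square, the helper squares and the limit of 4 processes are illustrative assumptions. Because pprocess is a third-party module, the sketch falls back to the serial built-in map when it is not installed.

```python
from __future__ import print_function

import time

try:
    # pprocess is a third-party module and may not be installed
    from pprocess import pmap
except ImportError:
    pmap = None


def slow_square(x):
    """Stand-in for an expensive, independent computation."""
    time.sleep(0.1)
    return x * x


def squares(values, limit=4):
    """Square each value, in parallel when pprocess is available."""
    if pmap is None:
        # serial fallback: the built-in map
        return list(map(slow_square, values))
    # parallel version: same call shape, plus a process limit
    return list(pmap(slow_square, values, limit=limit))


if __name__ == '__main__':
    print(squares(range(8)))
```

The point of the sketch is that the calling code barely changes: the function and the sequence stay the same, and only the mapping call differs.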

Channels are objects returned when sub-processes are created, and they are used to communicate with those processes. You can also create an Exchange object to know when to read from a channel. You can initialise the Exchange object with a list of channels, or add them to it later with its add method. You can use its active method to learn whether it is actually monitoring any channels, and its ready method to see whether any data is ready to be received.

This module also provides a MakeParallel class to create a wrapper around unmodified functions, which returns results via channels. Learn more about pprocess via the tutorial and references provided in the source’s docs subdirectory, and the code samples in the examples subdirectory.

Full-throttle multi-core/clustering with Parallel Python

Now we come to the most flexible, versatile and high-level tool in this article. The Parallel Python (PP) module is one of the easiest ways to write parallel applications in Python. A unique feature of this module is that you can also use it to parallelise your code over a computing cluster, through a very simple programming model: you start a PP execution server, submit jobs to it to be computed in parallel, and retrieve the computation results — that’s it!

To install PP, download the latest tarball. Extract the archive and do a (sudo or su) python setup.py install, just like for the pprocess module. Verify the installation with python -m pp.

Now get your hands dirty; run the Python script shown below:

    #! /usr/bin/env python

    # === parallel.py ===

    from pp import Server

    def coremsg(smsg, icore):
        return ' ' + smsg + ' from core: ' + str(icore)

    if '__main__' == __name__:
        osrvr = Server()
        ncpus = osrvr.get_ncpus()

        djobs = {}
        for i in xrange(0, ncpus):
            djobs[i] = osrvr.submit(coremsg, ("hello FLOSS", i))

        for i in xrange(0, ncpus):
            print djobs[i]()

In the above example, we first create the PP execution server object, Server. The constructor takes many parameters, but the defaults are good, so I won’t provide any. By default, the number of worker sub-processes created (on the local machine) equals the number of cores or processors in your machine, which is the value that get_ncpus returns; you can request a different number through the constructor’s ncpus parameter. Each submit call returns a job object, and calling that object waits for the job to finish and returns its result. Parallel programming can’t be simpler than this!
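
Jobs often call helper functions or use modules, and PP needs to be told about them when the job is submitted. The sketch below is a minimal illustration under stated assumptions: the functions is_prime and count_primes, the range and the worker count of 2 are invented for this example, and the code falls back to a plain serial call when the pp module is not installed.

```python
from __future__ import print_function

import math

try:
    # pp is a third-party module and may not be installed
    import pp
except ImportError:
    pp = None


def is_prime(n):
    """Trial division; uses the math module inside the worker."""
    if n < 2:
        return False
    for i in range(2, int(math.sqrt(n)) + 1):
        if n % i == 0:
            return False
    return True


def count_primes(lo, hi):
    """Count the primes in the inclusive range lo..hi."""
    return sum(1 for n in range(lo, hi + 1) if is_prime(n))


if __name__ == '__main__':
    if pp is None:
        print(count_primes(1, 1000))  # serial fallback
    else:
        server = pp.Server(ncpus=2)  # explicit worker count
        # submit(func, args, depfuncs, modules): helper functions and
        # module names the job needs are listed explicitly
        job = server.submit(count_primes, (1, 1000), (is_prime,), ("math",))
        print(job())
        server.print_stats()
```

Listing is_prime in the third argument and "math" in the fourth makes both available inside the worker sub-process, which does not share the parent’s namespace.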

PP shines mainly when you have to split a lot of CPU-bound stuff (say, intensive number-crunching code) into multiple parallel parts. It’s not as useful for I/O-bound code, of course. Run the following Python code to see PP’s parallel computation in action:

    #! /usr/bin/env python

    # === parcompute.py ===

    from pp import Server

    def compute(istart, iend):
        isum = 0
        for i in xrange(istart, iend+1):
            isum += i**3 + 123456789*i**10 + i*23456789
        return isum

    if '__main__' == __name__:
        osrvr = Server()
        ncpus = osrvr.get_ncpus()

        # total number of integers involved in the calculation
        uinum = 10000

        # number of integers per job (integer division)
        uinumperjob = uinum // ncpus

        # extra integers for the last job
        uiaddtlstjob = uinum % ncpus

        djobs = {}
        iend = 0
        istart = 0

        for i in xrange(0, ncpus):
            istart = i*uinumperjob + 1
            if ncpus-1 == i:
                iend = (i+1)*uinumperjob + uiaddtlstjob
            else:
                iend = (i+1)*uinumperjob
            djobs[i] = osrvr.submit(compute, (istart, iend))

        # calling a job object waits for it and returns its result
        ics = 0
        for i in djobs:
            ics += djobs[i]()

        print ' workers: ' + str(ncpus)
        print ' parallel computed sum: ' + str(ics)
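
The range-splitting arithmetic in parcompute.py is easy to get wrong by one, so it can help to check it serially, without PP. The sketch below pulls that arithmetic into a helper named split_ranges (a hypothetical name, not part of the original script) and confirms that the per-chunk sums add up to the sum over the whole range. It is written to run on both Python 2.7 and Python 3.

```python
from __future__ import print_function


def compute(istart, iend):
    """Same formula as parcompute.py, over the inclusive range."""
    isum = 0
    for i in range(istart, iend + 1):
        isum += i**3 + 123456789 * i**10 + i * 23456789
    return isum


def split_ranges(total, parts):
    """Return inclusive (start, end) pairs that together cover 1..total.

    Every part gets total // parts integers; the last part also takes
    the remainder, as in parcompute.py.
    """
    per_job = total // parts
    extra = total % parts
    ranges = []
    for i in range(parts):
        start = i * per_job + 1
        end = (i + 1) * per_job
        if i == parts - 1:
            end += extra
        ranges.append((start, end))
    return ranges


if __name__ == '__main__':
    ranges = split_ranges(10000, 3)
    print(ranges)
    chunked = sum(compute(s, e) for s, e in ranges)
    print(chunked == compute(1, 10000))
```

Because Python integers have arbitrary precision, the chunked and unchunked sums can be compared exactly.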

These PP examples just give you a basic feel for it; explore the more practical examples included in the examples subdirectory of the PP source. The source also includes documentation in the doc subdirectory. You have to run the ppserver.py utility, installed with the module, on remote computational nodes, if you want to parallelise your script on a computing cluster.

In cluster mode, you can even set a secret key, so that the computational nodes only accept remote connections from an “authenticated” source. There are no major differences between the multi-core/multi-processor and cluster modes of PP, except that you’d run ppserver.py instances on nodes, with various options, and pass a list of the ppserver nodes to the Server object. Explore more about the cluster mode in the PP documentation that’s included with it.

As we have seen, though the CPython GIL constrains multi-core utilisation in Python programs, the modules discussed here will let your programs run at full throttle on SMP machines to extract the highest possible performance.
