Google has announced the open source availability of its image captioning system “Show and Tell” in TensorFlow. This new development is a step ahead by the search giant to expand its presence in the world of artificial intelligence (AI).

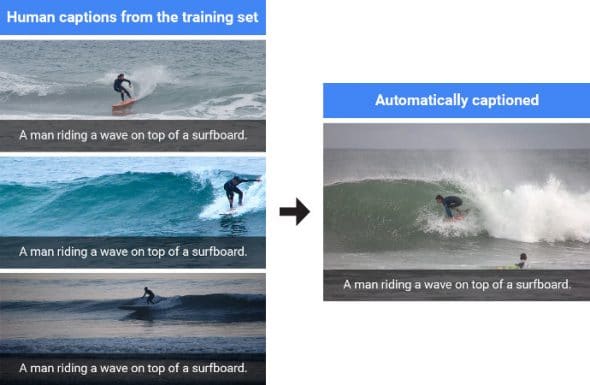

Captioning images sometimes become annoying. Thus, research scientists with the Google Brain team had developed a machine learning system back in 2014. This was aimed to automatically produce captions to describe various images.

The auto-captioning technology had proved its success at the very first level by winning the Microsoft COCO 2015 image captioning challenge. However, Google has this time made some significant improvements to its computer vision component to produce “more detailed and accurate descriptions” than the original system.

While the first image classification model in 2014 achieved 89.5 percent of accuracy, the present caption system is touted to be 93.9 percent accurate. “Initialising the image encoder with a better vision model gives the image captioning system a better ability to recognize different objects in the images, allowing it to generate more detailed and accurate descriptions,” wrote Chris Shallue, software engineer at Google Brain team, in a blog post.

Shallue and his fellow engineers have “fine-tuned” the original image model to improve the vision component of the caption system. Also, the model got evolved by jointly training its vision and language components on human generated captions.

“Importantly, the fine-tuning phase must occur after the language component has already learned to generate captions – otherwise, the noisiness of the randomly initialized language component causes irreversible corruption to the vision component,” Shallue added.

Developers can now deploy Google’s Show and Tell system on their projects and add automatic image captioning support by grabbing its code from the GitHub repository. Furthermore, the same repository includes a guide on the neural network architecture to enable custom training of the system.