Facebook AI team is also releasing the first published embeddings of the full Wikidata graph of 50 million Wikipedia concepts, created using the new tool.

The Facebook AI team is open-sourcing PyTorch-BigGraph (PBG), a tool that makes it faster and easier to produce graph embeddings for extremely large graphs.

According to the team, PBG is faster than commonly used embedding software and produces embeddings of comparable quality to state-of-the-art models on standard benchmarks.

With this new tool, anyone can take a large graph and quickly produce high-quality embeddings using a single machine or multiple machines in parallel.

Since PBG is written in PyTorch, researchers and engineers can easily swap in their own loss functions, models and other components. PBG then computes the gradients for those components and scales automatically.

Embeddings of massive graphs using PBG

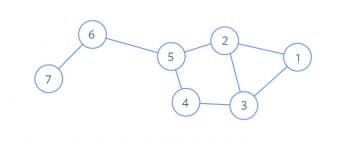

PBG uses a block partitioning of the graph to overcome the memory limitations of graph embeddings. Nodes are randomly divided into P partitions, sized so that two partitions can fit in memory at the same time. The edges are then divided into P² buckets based on the partitions of their source and destination nodes.

After the nodes and edges are partitioned, training can be performed on one bucket at a time.
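The partitioning scheme can be illustrated with a minimal sketch. This is not PBG's implementation; the function names `partition_nodes` and `bucket_edges`, the toy edge list and the value of `P` are assumptions chosen for illustration. It assigns each node to one of P partitions at random and places each edge in the bucket indexed by the partitions of its source and destination, so at most P² buckets exist and each bucket touches at most two partitions.

```python
import random
from collections import defaultdict


def partition_nodes(num_nodes, num_partitions, seed=0):
    """Randomly assign every node ID to one of `num_partitions` partitions."""
    rng = random.Random(seed)
    return [rng.randrange(num_partitions) for _ in range(num_nodes)]


def bucket_edges(edges, node_partition):
    """Group edges by (source partition, destination partition)."""
    buckets = defaultdict(list)
    for src, dst in edges:
        key = (node_partition[src], node_partition[dst])
        buckets[key].append((src, dst))
    return buckets


# Toy graph (hypothetical data): 6 nodes, 7 directed edges.
P = 3
edges = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5), (5, 0), (0, 3)]
node_partition = partition_nodes(num_nodes=6, num_partitions=P)
buckets = bucket_edges(edges, node_partition)

# Training would visit one bucket at a time; bucket (i, j) only needs the
# embeddings of partitions i and j to be in memory.
for (i, j), bucket in sorted(buckets.items()):
    print(f"bucket ({i}, {j}): {len(bucket)} edge(s)")
```

Because every edge lands in exactly one bucket, iterating over all buckets covers the whole graph once.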

PBG offers two ways to train embeddings of partitioned graph data: single-machine training and distributed training. In single-machine training, embeddings and edges are swapped out to disk when they are not being used. In distributed training, embeddings are distributed across the memory of multiple machines.
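The single-machine idea of swapping unused embeddings out to disk can be sketched as follows. This is an illustration under stated assumptions, not PBG's code: the `PartitionStore` class, the pickle file layout and the `updates` counter (a stand-in for a real training step) are all hypothetical. The point it shows is that, for each bucket, at most two partitions are resident in memory at once.

```python
import os
import pickle
import tempfile


class PartitionStore:
    """Hypothetical on-disk store holding one file per partition."""

    def __init__(self, directory):
        self.directory = directory

    def _path(self, partition):
        return os.path.join(self.directory, f"partition_{partition}.pkl")

    def save(self, partition, embeddings):
        with open(self._path(partition), "wb") as f:
            pickle.dump(embeddings, f)

    def load(self, partition):
        with open(self._path(partition), "rb") as f:
            return pickle.load(f)


P, dim = 3, 4
max_resident = 0

with tempfile.TemporaryDirectory() as tmp:
    store = PartitionStore(tmp)
    # Initialise every partition on disk with placeholder vectors.
    for p in range(P):
        store.save(p, {"vectors": [[0.0] * dim for _ in range(2)], "updates": 0})

    for i in range(P):
        for j in range(P):
            # Load only the partitions this bucket needs (one if i == j).
            resident = {p: store.load(p) for p in {i, j}}
            max_resident = max(max_resident, len(resident))
            for emb in resident.values():
                emb["updates"] += 1  # stand-in for training on bucket (i, j)
            # Swap the partitions back out to disk before the next bucket.
            for p, emb in resident.items():
                store.save(p, emb)

    final_updates = [store.load(p)["updates"] for p in range(P)]

print(max_resident, final_updates)
```

A real system would avoid reloading a partition that the next bucket also needs; the sketch reloads every time to keep the memory bound easy to see.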

The Facebook AI team also made several modifications to standard negative sampling that are necessary for large graphs.
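For readers unfamiliar with the baseline, the sketch below shows plain negative sampling as it is commonly done for graph embeddings: each true edge is paired with "negative" edges whose destination is replaced by a uniformly sampled node. It does not show PBG's modifications, which the announcement does not detail here; the function name `corrupt_edges` and its parameters are assumptions for illustration.

```python
import random


def corrupt_edges(edges, num_nodes, num_negatives, seed=0):
    """Return negatives made by replacing each edge's destination at random.

    A sampled negative may occasionally coincide with a true edge; this
    simple sketch does not filter those out.
    """
    rng = random.Random(seed)
    negatives = []
    for src, _dst in edges:
        for _ in range(num_negatives):
            negatives.append((src, rng.randrange(num_nodes)))
    return negatives


# Toy data (hypothetical): 3 true edges over 10 nodes, 2 negatives per edge.
true_edges = [(0, 1), (2, 3), (4, 5)]
negatives = corrupt_edges(true_edges, num_nodes=10, num_negatives=2)
print(len(negatives))
```

During training, a model is pushed to score each true edge higher than its negatives.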

As an example, the Facebook AI team is also releasing the first published embeddings of the full Wikidata graph of 50 million Wikipedia concepts, which serves as structured data for use in the AI research community.

The embeddings, which were created with PBG, can help other researchers perform machine learning tasks on Wikidata concepts.