The main difference between traditional lock-based synchronisation and software transactional memory is that lock-based synchronisation is based on pessimistic concurrency control, whereas software transactional memory is based on optimistic concurrency control.

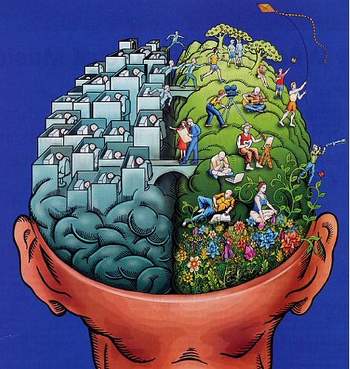

Optimistic vs pessimistic concurrency control

A pessimistic concurrency control implemented using lock-based programming is based on the premise that shared data that is accessed/updated within the critical section is expected to be non-disjointed across threads. Hence, it is necessary to obtain exclusive ownership of the data before executing the critical section, since conflicts are definitely expected during the data accesses.

Pessimistic Concurrency Control (PCC) and Optimistic Concurrency Control (OCC) are similar to “asking permission” (exclusive ownership before entering the atomic section) and “apologising if there is a conflict” (execute, assuming data is disjointed; and if there is conflict, apologise and retry). The choice of whether to ask permission first before executing, versus executing optimistically, hoping for no conflicts, and dealing with conflicts if they arise later, is not an easy decision from a performance viewpoint (for humans, as well).

Optimistic concurrency is driven by the assumption of disjoint access parallelism among concurrently executing transactions. It supposes that shared data that’s accessed/updated by concurrent transactions is expected to be disjointed, and hence, conflicts among threads when accessing shared data are typically infrequent.

Disjoint access parallelism is the disjointedness of data accesses between the concurrently executing transactions. An atomic section that exhibits disjoint access parallelism is executed by concurrently executing transactions as long as there are no conflicts among the transactions. On the other hand, if the concurrently executing transactions often access/update data that is not access-parallel, then the data accesses conflict with each other, leading to aborts for all conflicting transactions except one which commits, leading to wasted work due to aborting transactions.

Transactional semantics

Transactional memory semantics describe the expected or allowed outcomes of various memory operations on shared data accessed by the concurrent threads of a transactional memory application. Unlike database transactions, where shared data in the database is exclusively accessed through transactions, software transactional memory systems do not explicitly forbid the access of the shared data outside of transactions, i.e., in non-transactional code regions.

Therefore, any transactional semantics specification of the Software Transactional Memory (STM) also needs to cover the behaviour of the STM with respect to interaction of shared data accesses both inside transactional code, and outside transactional code. While clean and simple semantics facilitates easy adoption of the STM by the programmer, due to its ability to support simpler reasoning by the programmer, it is also important that the semantics supported should allow efficient implementation.

‘Serialisability’ criterion

Since software transactional memory has its roots in database transactions, many of the STM implementations simply adopt the correctness criteria from the database world — namely, “serialisability”.

This means that the result of executing a set of concurrent transactions must be identical and equivalent to an execution in which the transactions are executed serially, one after another. Guaranteeing the ACI (Atomicity, Consistency and Isolation) properties in a STM implementation ensures that the serialisability criterion is satisfied.

Conflict serialisability is a well-known concept from database implementations, which has been adopted by many of the STM implementations for specifying transactional semantics.

STMs guarantee conflict serialisability by enforcing two-phase locking (2PL). However, two-phase locking has been shown to be a strict model of serialisability for database transactions. That is, for a fixed number of actions performed by a fixed number of transactions, the set of serialisable schedules is a superset of the set of schedules that obey 2PL. Therefore, STM systems guarantee serialisability by using 2PL, yet 2PL is not necessary for serialisability.

The use of 2PL in STM systems results in lack of concurrency in certain cases, because some schedules that do not obey 2PL may very well be serialisable and thus produce the correct outcome. For applications that have abundant parallelism, and are without excessive data sharing, the use of 2PL is not likely to be a problem. However, for applications that involve excessive data sharing among transactions, 2PL is likely to limit concurrency and degrade performance.

Hence, there has been work on using conflict serialisability as the system’s safety property, instead of two-phase locking, in order to improve concurrency. Also note that while serialisability is a useful model for understanding the behaviour of transactional code, it falls short of completely specifying the complete STM semantics, since it does not specify the interaction of transactional code and non-transactional code.

Single Global Lock (SGL) semantics

One of the simplest and most intuitive STM semantics is to consider transactions as if executed under a single global lock. With this model, we can define the semantics of a transactional program to be equivalent to a corresponding non-transactional program, where every transactional region is converted such that it is protected by a single global program-wide lock, which needs to be acquired before the transactional region can be entered — and released at the end of the transactional region.

Therefore, from the Single Global Lock (SGL) perspective, it is as if only one atomic block can be executed transactionally, at a time. This means that the total number of concurrent transactions at any time is only one. For instance, consider an atomic block, as shown below in the following table, wherein statements s1 and s2 are enclosed inside an atomic block. Under SGL semantics, this atomic block appears as follows:

| Atomic section in terms of single global lock semantics | |||

| 1 | atomic |

–> |

lock(Tx_global_lock) |

| 2 | { |

s1 |

|

| 3 | s1; |

||

| 4 | s2; |

s2 |

|

| 5 | } |

||

| 6 | unlock(Tx_global_lock) |

||

Single Global Lock (SGL) semantics is appealing and intuitive, because it matches our natural understanding of transactions. It provides complete isolation and serialisability over all transactions. However, it does not explicitly capture the failure atomicity property of the transactions. This model cannot be directly employed as an STM implementation strategy, since it precludes any concurrency.

Under SGL, the single global lock enforces synchronisation among transactions. Non-transactional code does not acquire the global lock. Therefore, there can be data races between transactional and non-transactional code, if they contain conflicting data accesses. The behaviour of a program that uses transactions is well defined if the program does not exhibit data races when the transactions are expressed using a single global lock semantic model.

The behaviour of program containing data races is undefined. Data races in a transactional memory program can expose details of the transactional memory implementation, and so may produce different results on different systems. Hence, programmers should ensure that the application is data-race-free, in order to ensure clean interaction between transactional and non-transactional code.

Privatisation and publication safety

Since software transactional memory has been considered as a promising and less error-prone programming alternative to traditional lock-based synchronisation, STM implementations aim to provide semantic guarantees that are at least as strong as those provided by conventional lock-based implementations.

For interactions between non-transactional and transactional code regions, the behaviour should be similar to what happens with lock-based implementations, where shared data can be accessed both inside and outside critical sections. There are two well-known programming idioms used in traditional concurrent code — namely, privatisation and publication idioms. These programming idioms involve data conflicts between transactional and non-transactional code regions.

In order that STM implementations offer semantic guarantees that are equivalent to those of lock-based synchronisation for such idioms, they need to be privatisation- and publication-safe. Consider the code sample in the following table, which demonstrates the privatisation idiom.

| An example of privatisation | |

| Thread 1 | Thread 2 |

ListNode res;

atomic { //Tx1

res = lHead;

if (lHead != null) lhead = lhead.next;

}

|

atomic { //Tx2

ListNode n = lHead;

while (n != null)

{ n.val++; n = n.next;}

}

|

Thread 1 privatises the head of the linked list through the transaction Tx1, and subsequently uses it outside the transactional code through the local variable res.

Under the SGL semantics, the two transactions cannot execute concurrently. Either Tx1 executes first, or Tx2 does. If Tx1 executes, it first privatises the current head of the list, so that further accesses to it in Thread 1 do not require the accesses to be wrapped in a transaction.

When Tx2 executes next, it cannot access the previous head of the list (since it has already been privatised). On the other hand, if Tx2 executes first, it first updates all the linked-list elements, and commits. Since Tx1 and Tx2 cannot execute concurrently, the access of lHead by Thread 1 outside the transactional code region is safe, since no other thread can access lHead after it has been privatised.

Publication is just the opposite of the privatisation idiom. Consider the example code shown below

Initially data = 42, ready = false, val = 0

Thread 1: Thread 2:

atomic {

temp = data

data = 1;

atomic {

ready = true;

}

if (ready)

val = temp;

}

/*Can val be 42 at this point?*/

The variable data is initially private, and then initialised and published — hence the term “publication idiom”. This idiom is quite commonly used in concurrent code.

If the atomic blocks were implemented using traditional lock-based synchronisation, at Line 10, the variable val can either be 1 or 0, depending on which thread executed the critical section first. On the other hand, on an STM implementation, the value of val at Line 10 can end up being 42, since the initialisation of data by Thread 1 happens outside the transactional code, and can end up being read by the concurrent transaction on Thread 2 and stored into the local variable temp. Given the interleaving of Thread 1 and Thread 2, as shown in the code example, the value of val at Line 10 can end up being 42.

Publication problems can occur if a private location is modified non-transactionally, and then it is transitioned to a shared state. If a concurrent transaction reads the non-transactional data before the transition operation occurs (due to compiler reorderings or value speculation), then it may perform an invalid computation, due to its inability to detect conflicts with the non-transactional initialisation code.

Since there is a notion that transactions should offer as strong a semantic guarantee as lock-based critical regions, it is necessary that STM should be publication- and privatisation-safe. The single global lock semantics we discussed earlier are both publication- and privatisation-safe. However, ensuring efficient STM implementations which are publication- and privatisation-safe is not straight-forward, and there has been considerable research in providing scalable privatisation and publication techniques.

My ‘must-read book’ for this month

This month’s “must-read book” is also on software transactional memory. It is Software Transactional Memory by Larus, Rajwar and Harris, (second edition) from Morgan Claypool publishers. I like this book because it provides an excellent introduction to software transactional memory, detailing the various innovations and issues in this continuously evolving research area.

If you have a favourite programming book that you think is a must-read for every programmer, please do send me a note with the book’s name, and a short write-up on why you think it is useful so I can mention it in the column. This would help many readers who want to improve their coding skills.

If you have any favourite programming puzzles that you would like to discuss on this forum, please send them to me, along with your solutions and feedback, at sandyasm_AT_yahoo_DOT_com. Till we meet again next month, happy programming and here’s wishing you the very best!