With enterprises relying heavily on the Internet to run their businesses, cloud infrastructure has become an attractive proposition from an entrepreneur’s viewpoint. Thankfully, cloud infrastructure management tools come in various open source avatars.

Cloud computing provides dynamically scalable and virtualised resources as services over the Internet. With this technology, people can use a variety of devices, including PCs, laptops, smartphones and PDAs, to access programs, storage and application-development platforms over the Internet, via services offered by cloud computing providers.

In 2011, NIST (National Institute of Standards and Technology) defined cloud computing as “…a model for enabling ubiquitous, convenient, on-demand network access to a shared pool of configurable computing resources (e.g., networks, servers, storage, applications and services) that can be rapidly provisioned and released with minimal management effort or service provider interaction.” Cloud computing is characterised by five attributes: on-demand self-service, broad network access, resource pooling, rapid elasticity and measured service. It has three service delivery models: Software as a Service (SaaS), Platform as a Service (PaaS) and Infrastructure as a Service (IaaS). For deployment, the NIST definition lists four models: private, community, public and hybrid.

Cloud computing is highly cost-effective because of resource multiplexing. Application data is stored closer to the site where it is used, in a manner that is device and location independent; potentially, this data storage strategy increases reliability as well as security. All the maintenance and security aspects are managed by the service providers. Organisations using computer clouds no longer need to support large IT teams, acquire and maintain costly hardware and software, or pay large electricity bills. Cloud service providers can operate more efficiently due to economies of scale. Data analytics, data mining, computational finance, scientific and engineering applications, gaming, social media and other data intensive activities all benefit from cloud computing.

Cloud computing represents a dramatic shift in the design of systems capable of providing vast amounts of computing cycles and storage space. The architecture, the coordination mechanism, the design methodology, and the analysis techniques for large scale complex systems such as computing clouds will continue to evolve in response to changes in technology, the environment and the social impact of cloud computing.

Cloud infrastructure management

Considering the importance of cloud computing for enterprises and its increasing incorporation of technologies like machine learning, Big Data and artificial intelligence, managing the infrastructure of the cloud has become a top priority. According to Microsoft, the global hybrid cloud market grew 78 per cent year over year in 2017 to about US$ 155 billion. Public IT cloud services revenue will reach US$ 141.2 billion by 2019, and all the cloud partners are experiencing 50 per cent growth in revenue every year.

Cloud infrastructure management refers to on-demand services provided through the IaaS model that enable access to shared pools of configurable resources like servers, networks, storage, applications and services. With the help of cloud management services, enterprises can focus on their own organisational goals. Cloud infrastructure management enables efficient administration, automation and cloud governance from a single console. This helps to manage the health, performance and security of public cloud infrastructure such as Amazon Web Services, Microsoft Azure, Google Cloud, Rackspace and Joyent, as well as of any private cloud.

Many cloud vendors provide tools that enable enterprises to build, buy, manage, monitor, tweak and track cloud services. But before turning to commercial tools, one should consider the many open source tools that let enterprises build, monitor, manage and track their entire cloud infrastructure.

Let’s now look at the key open source cloud infrastructure management tools for enterprises.

OpenStack

OpenStack was founded in 2010 by two small groups of engineers from Rackspace and NASA, working in collaboration to build an open source cloud infrastructure management tool to run large computer systems in a transparent manner. In 2012, stewardship of the project passed to the non-profit OpenStack Foundation.

OpenStack is regarded as a combination of open source tools that can be used to create private and public clouds, and it is built and disseminated by a large and democratic community of developers, in collaboration with users. OpenStack controls large pools of compute, storage and networking resources throughout a data centre, managed through a dashboard that gives administrators control while empowering users to provision resources through a Web interface. It lets users deploy virtual machines and other instances that handle the different tasks of managing the cloud environment. It makes horizontal scaling easy, which means that tasks that benefit from running concurrently can serve more or fewer users on the fly, simply by spinning up or shutting down instances. Since the OpenStack source code is open, it can be modified as per the users’ needs.

Ecosystem: OpenStack comprises six core services and 13 optional services. Some of these are listed below.

- Keystone: This is the authorisation and authentication component of OpenStack. It handles the creation of the environment and is the first component installed.

- Nova: This manages the computing resources of the OpenStack cloud. It takes care of instance creation and sizing as well as location management.

- Neutron: This is the networking component of the OpenStack cloud. It creates virtual networks, routers, sub-nets, firewalls, load balancers, etc.

- Glance: This manages and maintains server images. It facilitates the uploading and storing of OpenStack images.

- Cinder: This provides block storage, as a service, to the OpenStack cloud. It virtualises pools of block storage devices and provides end users with a self-service API to request and consume resources without requiring any storage knowledge.

- Swift: This comprises storage systems for objects and files. It makes scaling easy, as developers don’t have to worry about the system’s capacity. It allows the system to handle the data backup in case of any sort of machine or network failure.

- Horizon: This is the dashboard of OpenStack. It provides a graphical interface through which users can access all components of OpenStack via the API, and administrators can manage the OpenStack cloud.

- Ceilometer: This provides telemetry services, collecting usage data that can be used to bill individual users of the cloud.

- Heat: This is the orchestration component of OpenStack, allowing developers to define and store the requirements of a cloud application in a file.
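To make the Heat entry above more concrete, here is a minimal sketch of what such a file might describe. Heat templates (HOT) are normally written in YAML; the sketch builds the same structure as a Python dictionary and prints it as JSON only because the standard library can do that without extra packages. The resource name, image, flavour and network values are hypothetical placeholders, and the template version string is an assumption that should be checked against the target OpenStack release.

```python
import json


def build_hot_template(server_name, image, flavor, network):
    """Return a minimal Heat-style template describing a single server."""
    return {
        # Assumed template version; newer releases accept later dates.
        "heat_template_version": "2015-04-30",
        "description": "Single-server stack (illustrative only)",
        "resources": {
            server_name: {
                "type": "OS::Nova::Server",
                "properties": {
                    "image": image,      # hypothetical Glance image name
                    "flavor": flavor,    # hypothetical Nova flavour name
                    "networks": [{"network": network}],
                },
            }
        },
    }


# All four values below are placeholders, not names from a real cloud.
template = build_hot_template("web_server", "cirros", "m1.small", "private")
template_text = json.dumps(template, indent=2)
print(template_text)
```

The point of the sketch is that the whole requirement (which image, what size, which network) lives in one declarative document that can be stored and versioned like any other file.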

OpenStack can be deployed using the following models.

- OpenStack-based public cloud: In this case, the vendor provides a public cloud computing system based on the OpenStack project.

- On-premise distribution: Here, the clients download and install the distribution within their customised network.

- Hosted OpenStack private cloud: In this case, the vendor hosts the OpenStack based private cloud, including the hardware and software.

- OpenStack-as-a-Service: Here, the vendor hosts OpenStack management Software as a Service.

- Appliance based OpenStack: This covers compatible appliances, such as those provided by Nebula, that can be added to the network.

Official website: www.openstack.org

Latest version: Rocky (2018.08.30)

Apache CloudStack

Apache CloudStack is open source software designed to deploy and manage large networks of virtual machines, in order to create highly scalable, available and secure IaaS based computing platforms. It is multi-hypervisor and multi-tenant, and provides an open, flexible cloud orchestration platform delivering reliable and scalable private and public clouds.

CloudStack provides a cloud orchestration layer that automates the creation, provisioning and configuration of IaaS components. It supports all the popular hypervisors: VMware, KVM, Citrix XenServer, Xen Cloud Platform, Oracle VM server and Microsoft Hyper-V.

CloudStack provides powerful features that enable a secure multi-tenant cloud computing environment. With one click, virtual servers can be deployed from a predefined template. Virtualised instances can be shut down, paused and restarted via the Web interface, the command line or by calling the extensive CloudStack API.
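Calls to the CloudStack API are authenticated by signing the query string with the caller's secret key. The following is a minimal sketch of that signing step as it is commonly described (URL-encode the values, sort the fields, lower-case the string, compute an HMAC-SHA1 digest and Base64-encode it). The API key, secret key and virtual machine ID are hypothetical placeholders, and the sketch only builds the query string; it does not contact a server.

```python
import base64
import hashlib
import hmac
from urllib.parse import quote


def sign_request(params, api_key, secret_key):
    """Return a signed CloudStack-style query string (no network access)."""
    full = dict(params, apiKey=api_key, response="json")
    # URL-encode every value; safe="" so '/' and spaces are encoded as well.
    encoded = {key: quote(str(value), safe="") for key, value in full.items()}
    # Sort by lower-cased field name, then lower-case the string to be signed.
    pairs = sorted(encoded.items(), key=lambda item: item[0].lower())
    to_sign = "&".join(f"{key}={value}" for key, value in pairs).lower()
    digest = hmac.new(secret_key.encode(), to_sign.encode(), hashlib.sha1).digest()
    signature = quote(base64.b64encode(digest).decode(), safe="")
    query = "&".join(f"{key}={value}" for key, value in pairs)
    return f"{query}&signature={signature}"


# Placeholder credentials and VM id, for illustration only.
signed_query = sign_request(
    {"command": "startVirtualMachine", "id": "vm-1"},
    api_key="demo-key",
    secret_key="demo-secret",
)
print(signed_query)
```

Because the fields are sorted before signing, the order in which the caller supplies the parameters does not change the signature.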

CloudStack can be used for the following purposes.

- Service providers can offer virtualised hosting in an elastic cloud computing configuration.

- Enterprises can stage development, testing and production in a consistent way, easing the development and publishing processes for applications.

- Web content providers can deploy scalable, elastic Web infrastructure that can adapt to meet the demands of their readers.

- Software as a Service providers can offer true multi-tenant software hosting services while securing each user’s environments.

Architecture: An Apache CloudStack deployment involves two parts: the management server and the resources to be managed. At least two machines are therefore required, one for the CloudStack management server and one for the cloud infrastructure server.

- The management server: This orchestrates and allocates the resources during cloud deployment. It runs on a dedicated machine, controls the allocation of virtual machines to hosts and assigns IP addresses to virtual machine instances. It runs in the Apache Tomcat container and requires a MySQL server as the database.

- The cloud infrastructure server: This manages the following:

  - Regions: One or more geographically proximate zones

  - Zones: A single data centre

  - Pods: A rack or row of racks that includes the Layer-2 switch

  - Clusters: One or more homogeneous hosts

  - Hosts: A single compute node within a cluster

  - Primary storage: Storage in a single cluster

  - Secondary storage: A zone-wide resource to store disk templates, ISO images and snapshots
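The containment hierarchy described above (regions hold zones, zones hold pods, pods hold clusters, clusters hold hosts) can be sketched as nested data classes. This is only an illustrative model with made-up names; it is not CloudStack's own data model or API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Host:
    name: str


@dataclass
class Cluster:
    name: str
    hosts: List[Host] = field(default_factory=list)
    primary_storage: List[str] = field(default_factory=list)  # per cluster


@dataclass
class Pod:
    name: str
    clusters: List[Cluster] = field(default_factory=list)


@dataclass
class Zone:
    name: str
    pods: List[Pod] = field(default_factory=list)
    secondary_storage: List[str] = field(default_factory=list)  # zone-wide


@dataclass
class Region:
    name: str
    zones: List[Zone] = field(default_factory=list)


def count_hosts(region):
    """Walk the hierarchy and count every compute node in the region."""
    return sum(
        len(cluster.hosts)
        for zone in region.zones
        for pod in zone.pods
        for cluster in pod.clusters
    )


# Hypothetical layout: one zone, one pod, one KVM cluster with two hosts.
cluster = Cluster("cluster-kvm", [Host("kvm-01"), Host("kvm-02")], ["nfs-primary"])
zone = Zone("zone-a", [Pod("pod-1", [cluster])], ["nfs-secondary"])
region = Region("region-1", [zone])
print(count_hosts(region))
```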

Official website: https://cloudstack.apache.org

Latest version: 4.11.0

Eucalyptus cloud platform

Eucalyptus stands for Elastic Utility Computing Architecture for Linking Your Programs To Useful Systems. It is a powerful open source framework that provides a platform for private cloud computing implementation on computer clusters. It implements IaaS based solutions in private and hybrid clouds. Eucalyptus provides a single interface through which users can assess both the resources available in private clouds and the resources available externally in public cloud services. It is designed around an extensible and modular Web services architecture. It also implements the industry standard Amazon Web Services (AWS) API, which lets users work with it through a wide range of existing AWS-compatible tools.

Architecture: The architectural components of Eucalyptus include the following.

- Images: Software bundles, including configuration and applications, that are deployed in the Eucalyptus cloud.

- Instances: A running image is called an instance. The controller decides how to allocate memory and provide all resources.

- Networking: This comprises three modes: the managed mode for the local network of instances including security groups and IP addresses, the system mode for MAC address allocation and attaching the instance network interface to the physical network via the NC’s bridge, and the static mode for IP address allocation to instances.

- Access control: Every user of Eucalyptus is assigned an identity and these identities are grouped together for access control.

- Elastic block storage: This provides block-level storage volumes that are attached to an instance.

- Auto scaling and load balancing: This creates and destroys instances automatically based on requirements.
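The auto scaling entry above can be illustrated with a simple threshold rule. The sketch below is a generic example of deciding how many instances should run based on average CPU load; the thresholds, limits and function name are assumptions chosen for illustration and do not reflect how Eucalyptus implements its scaling policies.

```python
def desired_instances(current, avg_cpu, scale_out_at=70.0, scale_in_at=30.0,
                      minimum=1, maximum=10):
    """Return the instance count a threshold-based policy would aim for."""
    if avg_cpu > scale_out_at:
        target = current + 1   # load is high: create one more instance
    elif avg_cpu < scale_in_at:
        target = current - 1   # load is low: destroy one instance
    else:
        target = current       # load is within bounds: leave as is
    # Never go below the minimum or above the maximum group size.
    return max(minimum, min(maximum, target))


print(desired_instances(current=2, avg_cpu=85.0))
print(desired_instances(current=2, avg_cpu=10.0))
```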

Components: The following components make up the Eucalyptus cloud.

- Cloud controller: This is the main controller that manages the entire platform and provides a Web and EC2 compatible interface. It performs scheduling, resource allocation and accounting.

- Walrus: Works like AWS S3 (Simple Storage Service). It offers persistent storage to all virtual machines in the cloud and can be used as a simple HTTP put/get storage-as-a-service solution.

- Cluster controller: Manages VM execution and SLAs. Communicates with the storage controller and node controller, and is the front end for the cluster.

- Storage controller: Communicates with the cluster controller and node controller, and manages the block volumes and snapshots for the instances within its cluster.

- VMware broker: Provides an AWS-compatible interface for VMware environments and physically runs on the cluster controller.

- Node controller: Hosts all virtual machine instances and manages the virtual network endpoints.

Official website: https://github.com/eucalyptus/eucalyptus/wiki

Latest version: 4.4.3

Synnefo

Synnefo is an open source cloud infrastructure stack that provides the network, image, volume and storage services and is written in Python. It manages multiple Google Ganeti clusters at the backend that handle low level VM operations, and it uses Archipelago to unify cloud storage. It also supports OpenStack APIs for end users.

Components: Synnefo comprises the following components.

- Astakos: This identity management component provides a common user base to Synnefo. It manages user creation, group creation, resource accounting, quotas and projects, and generates authentication tokens across the infrastructure.

- Pithos: This manages the file storage of Synnefo. It maps user files to content-addressable blocks that are stored at the backend. Pithos runs at the cloud layer and exposes the OpenStack Object Storage API to the outside world, with custom extensions for syncing. Pithos has drivers for two storage backends:

  - Files on a shared file system, e.g., NFS, Lustre, GPFS or GlusterFS

  - Objects on a Ceph/RADOS cluster

- Cyclades: This implements compute, network, image and volume services. It exposes the corresponding OpenStack REST APIs: Compute, Network, Glance and Cinder. The Cyclades UI is written in JavaScript/jQuery and runs entirely on the client side for maximum responsiveness. It is just another API client; all UI operations happen with asynchronous calls over the API.
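The idea behind Pithos, mapping user files to content-addressable blocks, can be sketched in a few lines. In this illustrative model a file is split into fixed-size blocks, each block is stored under the hash of its contents, and the file itself is just an ordered list of hashes. The class name, the use of SHA-256 and the tiny block size are assumptions made for the example, not details of the Pithos implementation.

```python
import hashlib


class BlockStore:
    """Toy content-addressable store: blocks are keyed by their own hash."""

    def __init__(self, block_size=4 * 1024 * 1024):
        self.block_size = block_size
        self.blocks = {}  # hash -> block bytes
        self.files = {}   # file name -> ordered list of block hashes

    def put(self, name, data):
        hashes = []
        for offset in range(0, len(data), self.block_size):
            block = data[offset:offset + self.block_size]
            digest = hashlib.sha256(block).hexdigest()
            # Identical blocks hash to the same key, so they are stored once.
            self.blocks.setdefault(digest, block)
            hashes.append(digest)
        self.files[name] = hashes
        return hashes

    def get(self, name):
        return b"".join(self.blocks[digest] for digest in self.files[name])


# A 4-byte block size keeps the demonstration readable.
store = BlockStore(block_size=4)
store.put("a.txt", b"aaaabbbbaaaa")
store.put("b.txt", b"aaaacccc")
print(len(store.blocks), store.get("a.txt"))
```

Two files that share content share blocks, which is what makes this layout convenient for syncing and deduplication: the five logical blocks above occupy only three stored blocks.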

Official website: https://github.com/grnet/synnefo

Latest version: 0.19.1

FOSS-Cloud

FOSS-Cloud is regarded as an integrated, open source and redundant server infrastructure to provide cloud services, Windows or Linux based SaaS, a terminal server, and VDI or virtual server environments. It supports server and PC access via local networks or via the Internet. It provides cloud computing solutions that include virtualisation, cloud based desktops, IaaS, PaaS and SaaS.

Architecture: Users receive a design regarding how the various components are best distributed over the physical servers and how to interconnect them with redundant stackable switches. In the FOSS-Cloud documentation, a user can find examples of mapping the required hardware. With virtualisation, the amount of memory is often the limiting factor. The physical servers must have at least two network interfaces, a lot of memory and powerful processors. FOSS-Cloud enables an environment with multiple physical servers that together offer a total solution, including backup servers, etc.

FOSS-Cloud also offers a single-server solution. It provides an opportunity to get acquainted with the possibilities without having to immediately submit a very demanding investment request to your boss.

A third option is to install a demo environment. This requires a single server with at least 4GB of memory and a processor that is suitable for KVM. During installation, the user can choose the demo environment and get started just 15 minutes later. Of course, the performance of the virtual machines depends on the power of the hardware on which the server runs.

Official website: http://www.foss-cloud.org/en/wiki/FOSS-Cloud

Latest version: 0.6.1

openQRM

openQRM is a free, open source cloud computing infrastructure management platform for heterogeneous data centre infrastructures. It provides a complete, automated workflow engine for VM deployment and provides professional management and monitoring for data centres.

It has a fully pluggable architecture focused on automatic, rapid and appliance-based deployment and monitoring, and especially on supporting multiple virtualisation technologies. openQRM is a single management console for the complete IT infrastructure and provides a well-defined API, which can be used to integrate third party tools as additional plugins. The openQRM platform provides an easy way of building a private cloud inside an organisation’s network.

openQRM provides a Web based, open source data centre management and cloud platform with the help of which various internal and external technologies can be abstracted and grouped within a common management tool. It is a framework that implements an open plugin architecture. For example, an existing hypervisor such as KVM or Xen can be easily integrated as one of the many possible resource providers.

Instead of providing individual tools for individual tasks, such as configuration management and system monitoring, openQRM integrates proven open source management tools such as Nagios and Zabbix.
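A plugin architecture of this kind can be sketched as a common interface plus a registry that the core platform talks to. The class and method names below are hypothetical and chosen purely for illustration; they are not openQRM's actual plugin API.

```python
from abc import ABC, abstractmethod


class ResourcePlugin(ABC):
    """Hypothetical interface that every resource provider implements."""

    name = "base"

    @abstractmethod
    def start_vm(self, vm_name):
        """Start a virtual machine and return a status message."""


class KvmPlugin(ResourcePlugin):
    name = "kvm"

    def start_vm(self, vm_name):
        return f"kvm: started {vm_name}"


class XenPlugin(ResourcePlugin):
    name = "xen"

    def start_vm(self, vm_name):
        return f"xen: started {vm_name}"


class PluginRegistry:
    """The core only knows the interface, never a concrete hypervisor."""

    def __init__(self):
        self._plugins = {}

    def register(self, plugin):
        self._plugins[plugin.name] = plugin

    def start_vm(self, provider, vm_name):
        return self._plugins[provider].start_vm(vm_name)


registry = PluginRegistry()
registry.register(KvmPlugin())
registry.register(XenPlugin())
print(registry.start_vm("kvm", "build-server"))
```

Adding support for another hypervisor or monitoring tool then means writing one more plugin class, with no change to the core.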

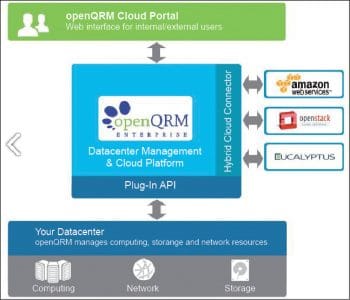

Architecture: The openQRM system architecture comprises three components.

- The data centre management and cloud platform

- The plugin API

- The hybrid cloud connector

The data centre management and cloud platform provides the basic functionality of openQRM; it uses the plugin API to communicate with the data centre resources that are also installed on the local network (hypervisor, storage and network).

In terms of storage, openQRM can handle LVM, iSCSI, NFS, ATA over Ethernet, SAN Boot, and Tmpfs storage. For the network configuration, openQRM integrates critical network services such as DNS, DHCP, TFTP, and Wake-on-LAN. The network manager included with the package helps administrators to configure the network bridges required for these services.

The hybrid cloud connector interfaces with external data centre resources, such as Amazon Web Services, Eucalyptus or an OpenStack cloud. To do so, it relies on the APIs provided by the individual vendors, which dock with openQRM via the plugin architecture. The openQRM cloud portal provides a Web interface that internal or external users can use to compile IT resources as needed. Figure 5 shows an overview of the openQRM system architecture.

Official website: http://www.openqrm.org/

Latest version: 5.3.8

Cloud Foundry

Cloud Foundry is an open source, multi-cloud PaaS that lets users deploy and run apps on their own computing infrastructure or on an IaaS such as AWS, vSphere or OpenStack. It helps to reduce both the cost and the complexity of deploying cloud infrastructure. Developers can deploy apps onto Cloud Foundry using their existing tools, without modifying their code.

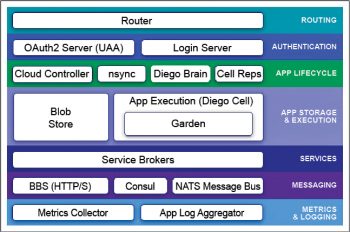

Cloud Foundry designates two types of VMs: the component VMs that constitute the platform’s infrastructure, and the host VMs that host apps for the outside world.

Components: The following components make up Cloud Foundry.

- Router: This routes incoming traffic to the cloud controller or application.

- OAuth2 server and login server: These work together to provide identity management.

- Cloud controller and Diego Brain: These direct the application deployment. Cloud controller directs Diego Brain via CC-bridge components to run applications.

- Nsync, BBS and Cell Reps: These work together to keep the apps up and running.

- Blobstore: This is a repository for large binary files. It contains application code packages, Buildpacks and Droplets.

- Diego Cell: This manages the life cycles of the containers and the processes running in them.

- Service brokers: These provide the service instance.

- Consul and BBS: The Consul server stores longer-lived control data like IP addresses and distributed locks. BBS stores frequently updated and disposable data in MySQL using the Go MySQL driver.

- Loggregator: This provides application logs to developers.

- Metrics collector: This collects metrics and statistical data from various components.

Official website: https://www.cloudfoundry.org/

SaltStack

SaltStack, or Salt, is open source configuration management software for cloud infrastructure. It is written in Python and supports the ‘Infrastructure as Code’ approach to cloud management. It also provides a powerful interface for interacting with cloud hosts.

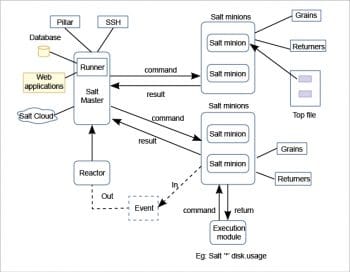

Salt is designed to allow users to explicitly target and issue commands to multiple machines directly. It is based around the idea of a master, which controls one or more minions. Commands are normally issued from the master to a target group of minions. These minions execute the tasks specified in the commands and return the resulting data back to the master. Communication between a master and minions occurs over the ZeroMQ message bus.
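The master and minion flow described above can be sketched as a small in-process simulation. This is not Salt's code and involves no ZeroMQ; the `Master` and `Minion` classes are hypothetical stand-ins that only show the pattern of targeting a group of minions with a glob, executing a function on each and collecting the returns. The function names `test.ping` and `test.echo` mirror real Salt execution functions, but their behaviour here is re-implemented for illustration.

```python
from fnmatch import fnmatchcase


class Minion:
    """Stand-in for a Salt minion that can run two simple functions."""

    def __init__(self, minion_id):
        self.minion_id = minion_id

    def execute(self, function, *args):
        if function == "test.ping":
            return True
        if function == "test.echo":
            return args[0]
        return f"unknown function: {function}"


class Master:
    """Stand-in for a Salt master that targets minions by glob pattern."""

    def __init__(self, minions):
        self.minions = minions

    def publish(self, target, function, *args):
        # Only minions whose id matches the target run the command;
        # each result is returned to the master keyed by minion id.
        return {
            minion.minion_id: minion.execute(function, *args)
            for minion in self.minions
            if fnmatchcase(minion.minion_id, target)
        }


master = Master([Minion("web1"), Minion("web2"), Minion("db1")])
print(master.publish("web*", "test.ping"))
print(master.publish("db1", "test.echo", "hello"))
```

With a real installation, the first call corresponds roughly to running `salt 'web*' test.ping` on the master.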

SaltStack modules communicate with the supported minion operating systems. The Salt Master runs on Linux by default, but any operating system can be a minion, and currently Windows, VMware vSphere and BSD UNIX variants are well supported. The Salt Master and the minions use keys to communicate. When a minion connects to a master for the first time, it sends its public key to the master, where the key is stored and must be accepted before the minion can be managed. SaltStack also offers Salt SSH, which provides agentless systems management.

Architecture: The components that make up SaltStack architecture are listed below.

- SaltMaster: This sends commands and configurations to Salt minions.

- SaltMinions: These receive commands and configurations from SaltMaster.

- Executions: These are modules and ad hoc commands.

- Formulas: These are used for installing a package, configuring and starting a service, and setting up users and permissions.

- Grains: These offer an interface to static information about each minion, such as its operating system, memory and other system properties.

- Pillar: This generates and stores sensitive information with regard to minions.

- Top file: This matches Salt states and pillar data to Salt minions.

- Runners: These perform tasks like job status and connection status, and read data from external APIs.

- Returners: These send the data returned by minions to another system, such as a database.

- SaltCloud: This is an interface to interact with cloud hosts.

- SaltSSH: This runs Salt commands over SSH on systems.
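The top file entry in the list above can be illustrated with a short matching routine. A real Salt top file is a YAML document; the sketch below represents the same structure as a Python dictionary and uses glob matching to work out which states apply to a given minion. The state names and minion IDs are hypothetical, and the function is a simplified model rather than Salt's own matcher.

```python
from fnmatch import fnmatchcase

# Equivalent in spirit to a top.sls with a single 'base' environment.
TOP = {
    "base": {
        "*": ["common"],       # every minion gets the common state
        "web*": ["nginx"],     # web servers also get nginx
        "db*": ["postgres"],   # database servers also get postgres
    }
}


def states_for(minion_id, top, env="base"):
    """Return the ordered list of states whose target matches the minion."""
    states = []
    for pattern, names in top.get(env, {}).items():
        if fnmatchcase(minion_id, pattern):
            states.extend(name for name in names if name not in states)
    return states


print(states_for("web1", TOP))
print(states_for("cache1", TOP))
```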

Official website: https://saltstack.com/

Latest version: 2018.3.2