This real-time grocery price comparison system aggregates prices from leading Indian grocery e-commerce platforms. Built on a microservices architecture, it uses FastAPI for backend services and Selenium for dynamic web scraping to deliver accurate, up-to-date pricing data.

Online grocery platforms have significantly reshaped consumer shopping habits in today’s digital economy. With numerous e-commerce grocery services now available, users often struggle to compare prices efficiently across different platforms and make cost-effective decisions. This paper addresses that challenge by developing a system capable of providing real-time grocery price comparisons, allowing users to make more informed and economical purchasing choices.

This work sits at the intersection of consumer-facing technology, web data extraction, and mobile application development. As grocery delivery platforms like Zepto, JioMart, Swiggy Instamart, and BigBasket continue to grow, prices for the same product frequently differ across these services. However, the Indian market still lacks a centralised solution that offers real-time, user-friendly price comparison in a mobile-first format.

Our goal is to design and implement an Android application that fetches, compares, and displays current grocery prices from various vendors. The application is built to deliver a seamless user experience, supported by a scalable and modular backend. The backend system, developed using FastAPI and Selenium, handles real-time data scraping and exposes this information through RESTful APIs. For development and testing, the API is made accessible to the Android frontend using ngrok tunnelling. The frontend asynchronously retrieves pricing data and presents it through a clean, intuitive interface aligned with modern Android UI standards.

Key outcomes of this research work include a working mobile app that supports real-time grocery price comparisons, a modular backend supporting scraping and API services, and a flexible proof-of-concept that could be extended into intelligent shopping tools or dynamic pricing analytics platforms in the future.

System architecture

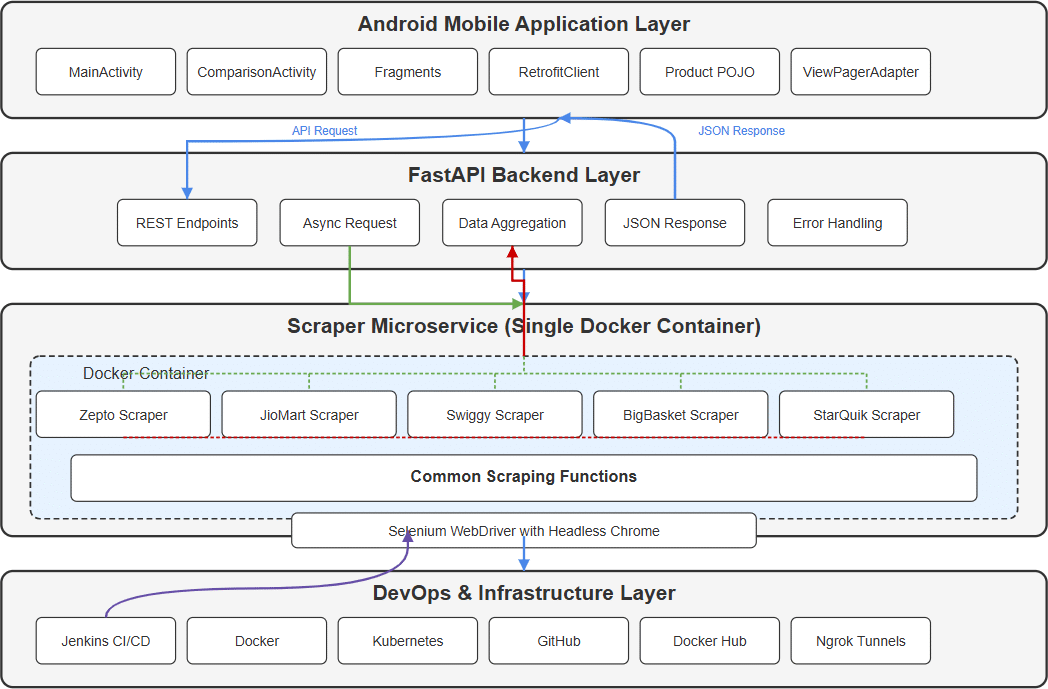

The proposed system follows a modular, microservices-based architecture designed to aggregate grocery pricing data from multiple Indian e-commerce platforms in real time. It emphasises scalability, low-latency API access, and smooth integration with mobile clients, while also supporting containerised deployment and CI/CD automation for streamlined development and updates. Figure 1 illustrates the high-level architecture.

Scraper microservices

Each grocery platform (Zepto, JioMart, Swiggy Instamart, BigBasket, and StarQuik) is associated with an independent scraping service. These services are implemented using Selenium WebDriver and run in headless Chrome instances. Each scraper is encapsulated within a Docker container for isolation, ease of deployment, and fault containment. Scrapers extract structured data, including product name, price, unit, availability, and image URL, either by parsing rendered DOM elements or by intercepting API calls using the Chrome DevTools Protocol.
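To make the extracted fields concrete, here is a minimal Python sketch of how a scraper might normalise one raw scraped item into a common record before handing it to the API. The ProductRecord class, the raw dictionary keys (name, price, unit, out_of_stock, image), and the parse_price helper are all hypothetical; the article does not publish its schema, and real keys and selectors differ per platform.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProductRecord:
    """Hypothetical common schema shared by all store scrapers."""
    name: str
    price: float             # rupees as a number, e.g. 1299.0
    unit: str                # pack size as scraped, e.g. "500 ml"
    available: bool
    image_url: Optional[str]
    store: str


# First number in the text, allowing Indian-style thousands separators and decimals.
_PRICE_RE = re.compile(r"\d[\d,]*(?:\.\d+)?")


def parse_price(text: str) -> float:
    """Extract the amount from strings such as '₹1,299.00' or 'MRP ₹45'."""
    match = _PRICE_RE.search(text)
    if match is None:
        raise ValueError(f"no price found in {text!r}")
    return float(match.group(0).replace(",", ""))


def normalise(raw: dict, store: str) -> ProductRecord:
    """Map one raw scraped item (hypothetical keys) onto ProductRecord."""
    return ProductRecord(
        name=raw["name"].strip(),
        price=parse_price(raw["price"]),
        unit=raw.get("unit", "").strip(),
        available=not raw.get("out_of_stock", False),
        image_url=raw.get("image") or None,
        store=store,
    )
```

Keeping one record type across all scrapers means the API layer can merge and sort results without per-store special cases; dataclasses.asdict(record) turns a record into a JSON-ready dict.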

FastAPI backend server

The FastAPI backend exposes RESTful endpoints that deliver scraped data to external consumers, particularly the Android mobile frontend. It is designed to handle asynchronous requests with fast response times. Endpoints such as /search?query={product} return JSON-formatted data. The server also handles request routing, response formatting, and basic input validation.

Mobile application layer

An Android-based mobile application built using Java serves as the user interface layer. It interacts with the FastAPI backend via HTTP APIs, rendering product listings and comparison data in a structured and user-friendly format. The app is designed for minimal data loading times and efficient rendering of image and price data.

CI/CD pipeline with Jenkins

A Jenkins-based CI/CD pipeline automates building, testing, and deploying all components. For the mobile application, Jenkins clones the GitHub repository, builds the APK using Gradle, and archives the artifact. For backend and scrapers, Jenkins builds Docker images and publishes them to Docker Hub. Deployment is performed using Kubernetes manifests via the Jenkins Kubernetes plugin and kubectl commands.

Containerisation and orchestration

All backend and scraper services are containerised using Docker and deployed to a Kubernetes cluster. Kubernetes is used to manage service discovery, load balancing, rolling updates, and fault tolerance. This setup ensures that each microservice can scale independently based on resource usage and demand.
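The article does not say which mechanism drives independent scaling. If a Kubernetes HorizontalPodAutoscaler on CPU utilisation were used (an assumption), the controller's core rule is desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric), with no change when the ratio is within a tolerance (0.1 by default) and the result clamped to the configured minimum and maximum. The sketch below reproduces only that rule; the min and max values are placeholders, and the real controller also applies stabilisation windows that are not modelled here.

```python
import math


def desired_replicas(current_replicas: int, current_util: float, target_util: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Core HPA rule: ceil(current * currentMetric / targetMetric), clamped."""
    ratio = current_util / target_util
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: leave the replica count unchanged.
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))
```

For example, two scraper pods averaging 90% CPU against a 60% target scale to three pods, while a reading of 63% (ratio 1.05) causes no change.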

Experimental setup

To validate the system's functionality and performance, a structured experimental setup was designed to simulate near-production conditions. The objective was to test real-time data retrieval, API responsiveness, Android integration, and deployment reliability across a modular microservices environment.

Environment configuration

The experimental environment consisted of local development machines running Linux and Windows 11, with the scraper and backend services containerised using Docker. The Android application was tested on physical devices (a Samsung Galaxy S23 and a OnePlus phone), which reached the FastAPI endpoints over the internet through ngrok tunnels.

Version control and source management

All code artifacts, including backend services, scraping scripts, and mobile source code, were hosted on GitHub. The repository (https://github.com/sakina27/price-scanner-app) enabled version control, issue tracking, and team collaboration. GitHub was also integrated with Jenkins to trigger automated build pipelines on every push to the main branch.

Scraper execution

Each scraping service was encapsulated in its own Docker container and configured to run in headless mode using ChromeDriver. Execution was managed via cron jobs or manual triggers. Selenium waits ensured dynamic elements were fully loaded, while Chrome DevTools Protocol was employed to intercept and extract clean JSON payloads from API calls.
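One common way to combine a Selenium wait with Chrome DevTools Protocol interception is to enable Chrome's performance log, wait for the product list to render, then pull matching JSON response bodies with Network.getResponseBody. The sketch below takes that approach; it is not necessarily how the article's scrapers are written. The page URL, the API URL fragment, and the CSS selector passed in are hypothetical, since each platform's endpoints and markup differ. Selenium is imported inside the function so the log-filtering helper can be tested without a browser.

```python
import json
from typing import Iterable


def json_response_ids(perf_entries: Iterable[dict], url_fragment: str) -> list[str]:
    """Return request IDs of JSON responses whose URL contains url_fragment.

    perf_entries has the shape returned by Selenium's driver.get_log("performance"):
    each entry's "message" is a JSON string wrapping one DevTools event.
    """
    ids = []
    for entry in perf_entries:
        event = json.loads(entry["message"])["message"]
        if event.get("method") != "Network.responseReceived":
            continue
        response = event["params"]["response"]
        if url_fragment in response.get("url", "") and "json" in response.get("mimeType", ""):
            ids.append(event["params"]["requestId"])
    return ids


def scrape_api_payloads(page_url: str, url_fragment: str, ready_css: str) -> list:
    """Load a page headlessly, wait for products to render, return intercepted JSON bodies."""
    from selenium import webdriver
    from selenium.webdriver.common.by import By
    from selenium.webdriver.support import expected_conditions as EC
    from selenium.webdriver.support.ui import WebDriverWait

    options = webdriver.ChromeOptions()
    options.add_argument("--headless=new")
    # Ask ChromeDriver to record DevTools network events in the performance log.
    options.set_capability("goog:loggingPrefs", {"performance": "ALL"})
    driver = webdriver.Chrome(options=options)
    try:
        driver.get(page_url)
        # Explicit wait: block until at least one product element is in the DOM.
        WebDriverWait(driver, 15).until(
            EC.presence_of_element_located((By.CSS_SELECTOR, ready_css))
        )
        payloads = []
        for rid in json_response_ids(driver.get_log("performance"), url_fragment):
            body = driver.execute_cdp_cmd("Network.getResponseBody", {"requestId": rid})
            if not body.get("base64Encoded"):
                payloads.append(json.loads(body["body"]))
        return payloads
    finally:
        driver.quit()
```

Reading the JSON the page itself fetched tends to be more robust than parsing rendered DOM, because API payloads usually change less often than CSS class names.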

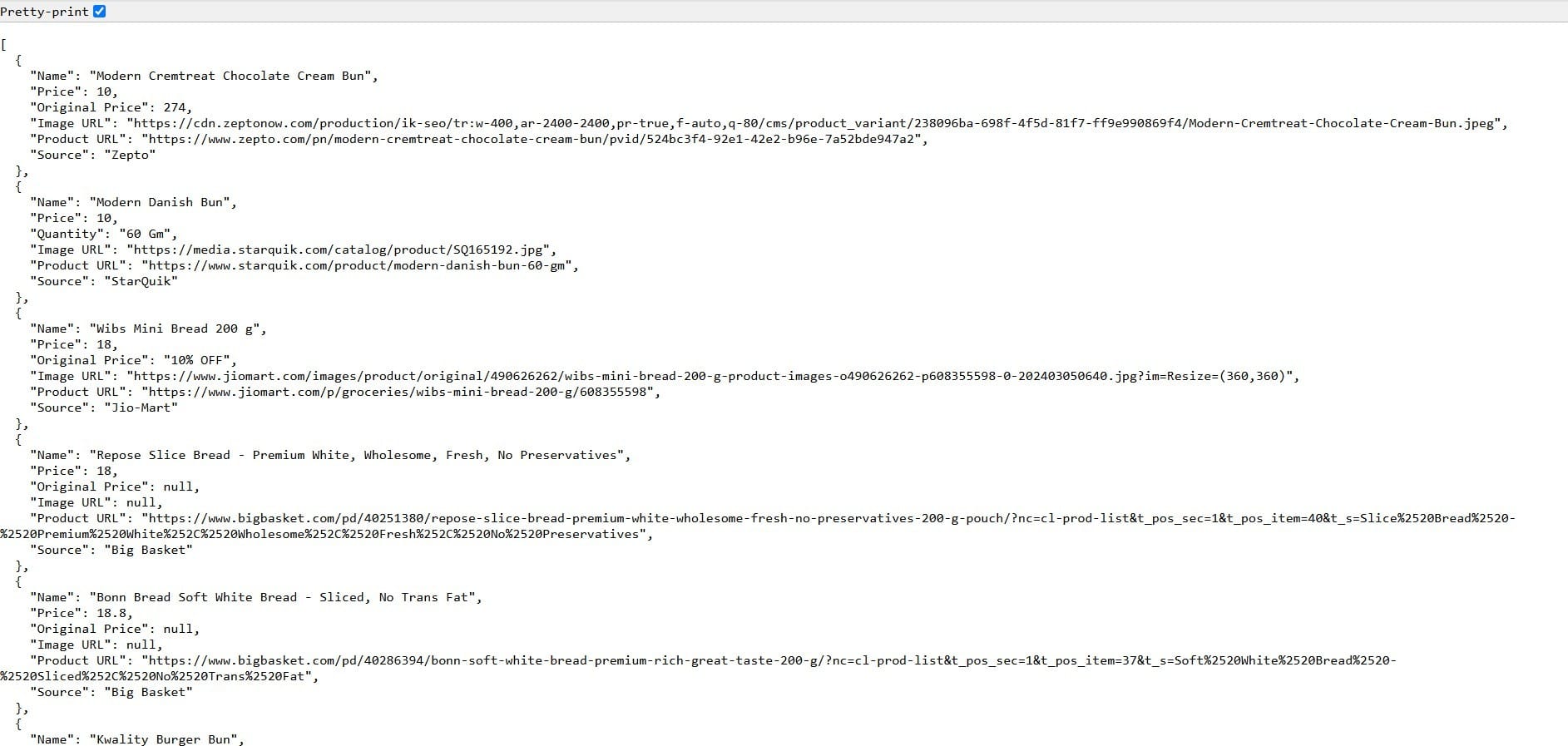

Figure 2 shows a formatted JSON output containing product data scraped from multiple online grocery platforms. Each entry includes the item name, price, source store, image URL, and product link, which the system uses for real-time price comparison.

Mobile integration testing

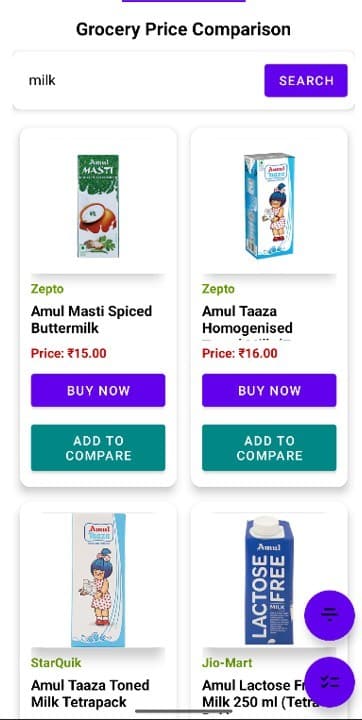

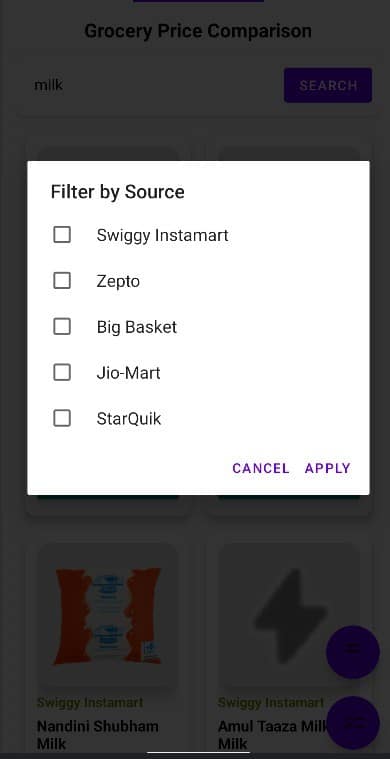

The Android app was tested with live data sourced from the backend via ngrok URLs. Functional tests covered UI rendering, JSON parsing, error handling, and image loading. The app successfully displayed over 100 unique products per store, with no significant lags or crashes. Figure 3 shows the mobile UI of the grocery price comparison app. Upon searching for ‘milk’, the app displays product listings from various online retailers like Zepto, StarQuik, and JioMart, along with prices, images, and actionable buttons for purchase or comparison.

CI/CD pipeline validation

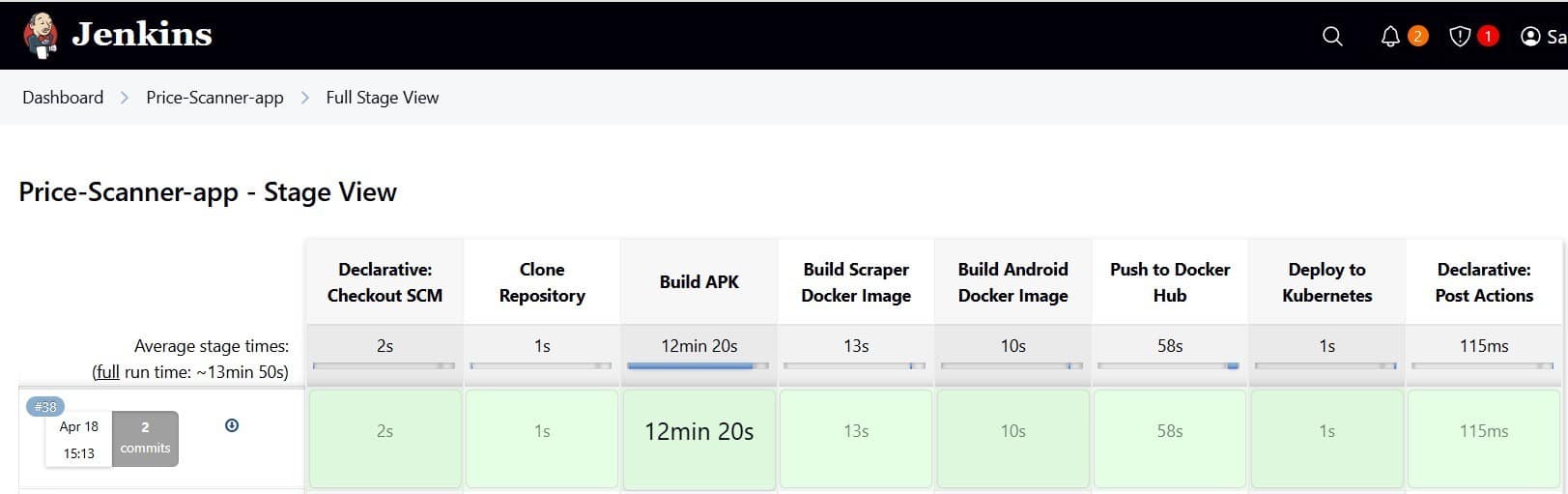

A multi-stage CI/CD pipeline was implemented using Jenkins, triggered automatically on each commit to the GitHub repository. The pipeline consisted of the following stages:

- Building the Android APK using Gradle.

- Creating Docker images for the scraper and API services.

- Pushing the images to Docker Hub.

- Deploying the services to a Kubernetes cluster using declarative YAML manifests.

To ensure safe and incremental rollouts, the pipeline was tested with canary deployments and rollback procedures. Figure 4 shows the Jenkins Stage View for the full pipeline. It visually represents each phase from source checkout and APK generation to image building, Docker Hub publishing, and Kubernetes deployment, along with the time taken for each step, offering insight into overall performance and pipeline efficiency.

Containerisation and orchestration

All services, including scraping bots, backend APIs, and auxiliary tools, were containerised using Docker. Docker is a platform that enables developers to package applications along with their dependencies and environment configurations into lightweight, portable containers. This ensures consistency across different stages of development and deployment, and eliminates the classic ‘it works on my machine’ problem.

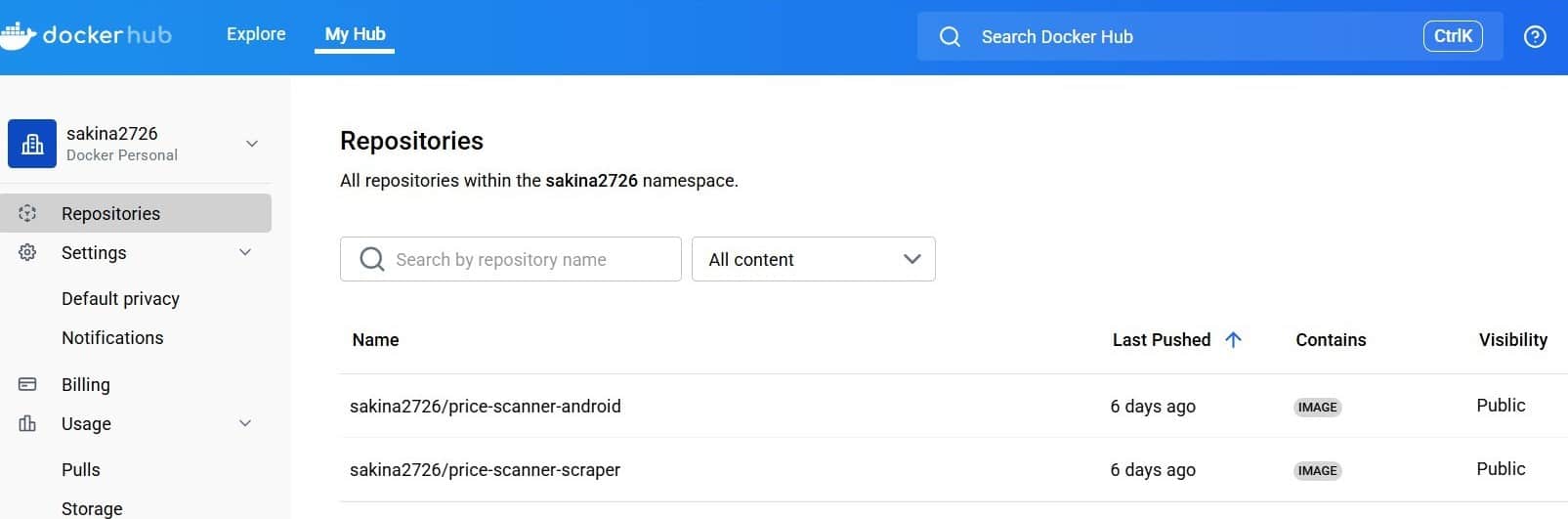

Each service was defined in a separate Dockerfile, specifying its runtime environment, dependencies, and execution commands. These containers were built as images and managed using Docker Compose during development for multi-container orchestration. Figure 5 displays the Docker Hub interface showing two public repositories: one for the Android frontend (price-scanner-android) and another for the scraping engine (price-scanner-scraper). These images are automatically built and pushed via the CI/CD pipeline and later deployed to the Kubernetes cluster.

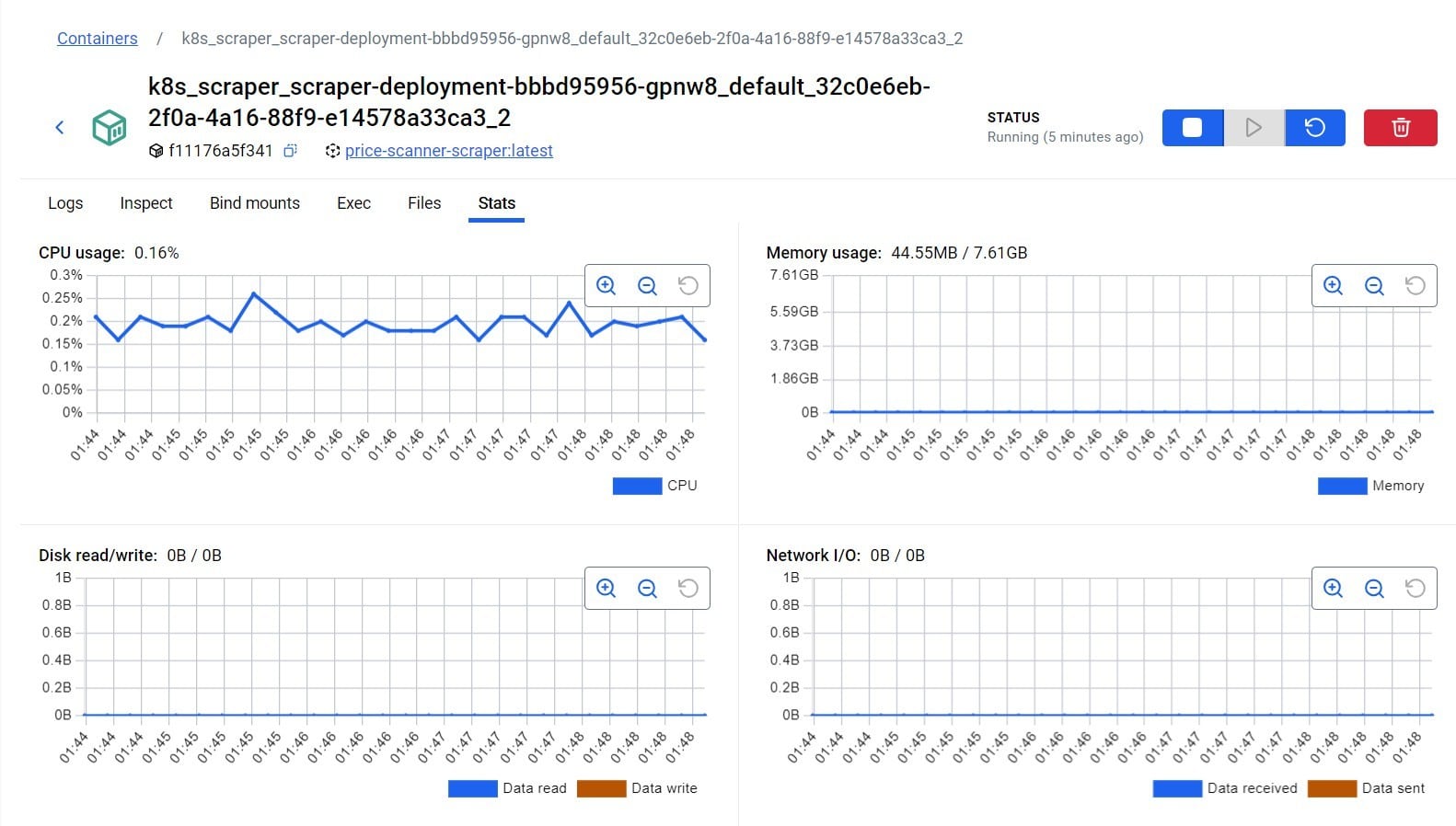

For deployment, the containerised services were orchestrated on a Kubernetes cluster. Kubernetes is cloud-provider-agnostic and offers load balancing, horizontal scaling, self-healing, and service discovery. Pods were configured with resource limits and health checks so that containers stayed within defined resource budgets and failing containers could be detected and restarted. Monitoring and debugging were carried out using the Kubernetes Dashboard and the ‘kubectl describe’ and ‘kubectl logs’ commands, which gave real-time visibility into container behaviour and resource usage.
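The article's YAML manifests are not reproduced here. As a hedged illustration of what "resource limits and health checks" means in a Deployment, the sketch below builds an equivalent manifest as a Python dict and prints it as JSON, a format kubectl apply -f also accepts. The /health path, port, CPU and memory values, replica count, and image name are placeholders, not values taken from the project.

```python
import json


def deployment_manifest(name: str, image: str, port: int = 8000, replicas: int = 2) -> dict:
    """Build a Deployment whose container has resource limits and HTTP probes."""
    # Same probe for readiness (gate traffic) and liveness (restart when failing).
    probe = {"httpGet": {"path": "/health", "port": port},
             "initialDelaySeconds": 10, "periodSeconds": 15}
    container = {
        "name": name,
        "image": image,
        "ports": [{"containerPort": port}],
        "resources": {
            "requests": {"cpu": "250m", "memory": "256Mi"},
            "limits": {"cpu": "1", "memory": "1Gi"},  # headless Chrome needs headroom
        },
        "readinessProbe": probe,
        "livenessProbe": probe,
    }
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": {"app": name}},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": {"app": name}},
            "template": {
                "metadata": {"labels": {"app": name}},
                "spec": {"containers": [container]},
            },
        },
    }


if __name__ == "__main__":
    # Usage: python manifest.py > scraper.json && kubectl apply -f scraper.json
    print(json.dumps(deployment_manifest(
        "price-scanner-scraper", "your-dockerhub-user/price-scanner-scraper:latest"), indent=2))
```

The selector labels must match the pod template labels, otherwise the API server rejects the Deployment.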

Monitoring and logging

FastAPI logging middleware and Docker stdout streams were used for runtime logging. Each scraper logged execution time, item count, and errors. Backend logs included HTTP status codes and timestamps. Kubernetes tools provided insights into pod states, CPU/memory usage, and deployment health (Figure 6).
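A minimal sketch of the per-run scraper logging described above, using only Python's standard logging module: a decorator records execution time, item count, and errors, and logs go to stdout so Docker and ‘kubectl logs’ can collect them. The decorator name, the key=value message format, and the scrape_zepto stand-in are hypothetical.

```python
import functools
import logging
import sys
import time

logger = logging.getLogger("scraper")


def log_run(store: str):
    """Decorator that logs execution time, item count, and errors for one scraper run."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            try:
                items = func(*args, **kwargs)
            except Exception:
                # Log the traceback with timing, then re-raise for the caller to handle.
                logger.exception("store=%s status=error elapsed=%.2fs",
                                 store, time.perf_counter() - start)
                raise
            logger.info("store=%s status=ok items=%d elapsed=%.2fs",
                        store, len(items), time.perf_counter() - start)
            return items
        return wrapper
    return decorator


@log_run("Zepto")
def scrape_zepto(query: str) -> list:
    """Hypothetical stand-in for a real scraper."""
    return [{"name": f"{query} 500 ml", "price": 28.0}]


if __name__ == "__main__":
    # Write to stdout so container log collection picks the lines up.
    logging.basicConfig(level=logging.INFO, stream=sys.stdout,
                        format="%(asctime)s %(name)s %(levelname)s %(message)s")
    scrape_zepto("milk")
```

Key=value messages are easy to grep in ‘kubectl logs’ output and easy to parse later if the logs are shipped to a central store.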

Ethical compliance

All scraping operations respected the robots.txt directives of the targeted platforms. Only publicly accessible pages were accessed, and no authentication-protected or rate-limited APIs were used. The data collection was conducted strictly for academic research, ensuring compliance with ethical and legal standards.
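The article does not show how robots.txt compliance was enforced. One straightforward way is Python's standard urllib.robotparser, sketched below: parse each store's robots.txt once, check every URL before fetching it, and honour Crawl-delay where present. The user-agent string and the two-second fallback delay are hypothetical choices.

```python
from urllib.robotparser import RobotFileParser

USER_AGENT = "price-scanner-bot"  # hypothetical crawler name


def load_policy(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text; in practice fetch it once per store and cache it."""
    policy = RobotFileParser()
    policy.parse(robots_txt.splitlines())
    return policy


def may_fetch(policy: RobotFileParser, url: str) -> bool:
    """True if robots.txt permits USER_AGENT to fetch url."""
    return policy.can_fetch(USER_AGENT, url)


def polite_delay(policy: RobotFileParser, default: float = 2.0) -> float:
    """Seconds to wait between requests: the site's Crawl-delay if set, else a default."""
    delay = policy.crawl_delay(USER_AGENT)
    return float(delay) if delay is not None else default
```

RobotFileParser can also fetch the file itself via set_url() and read(); parsing text directly, as here, makes caching and testing simpler.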

This comprehensive experimental setup validated the performance, reliability, and modularity of the proposed system under realistic deployment scenarios.

Results

The Android application was successfully developed using a microservices architecture. It includes the following key components:

- A scraping service using FastAPI and Selenium for extracting product data from Zepto, Swiggy Instamart, BigBasket, and JioMart.

- An Android frontend developed in Java that consumes the REST APIs exposed by the FastAPI backend and presents the comparison features described below.

- The user must first enter the name of any product and search for it. The application will then show products from all the configured websites and sort them from the lowest to the highest price as shown in Figure 3.

- Tapping a product redirects the user to the retailer's app (if available) or website for more details, as shown in Figure 7.

- There are two floating buttons. The first lets users filter products by source (Zepto, Swiggy, etc.), as shown in Figure 8.

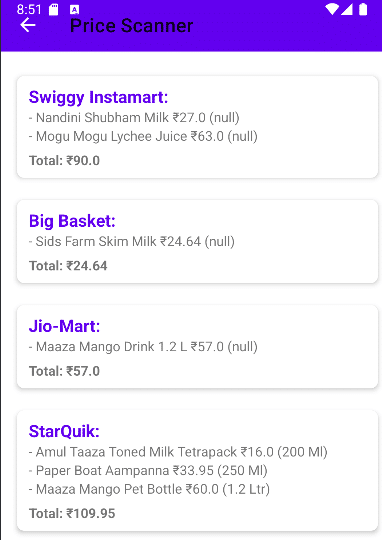

- The second floating button takes you to the ‘Comparison list’ screen, which gives the total value of products from each source. Users can choose the source as per the total value and shop from a particular website (Figure 9).
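The three user-facing behaviours above (sorting by price, filtering by source, and per-source totals on the comparison list) come down to simple list operations. The app implements them in Java; the sketch below expresses the same logic in Python for consistency with the other examples. The dictionary keys are hypothetical, and reading "total value" as the sum of listed item prices per store is an interpretation of the text.

```python
from collections import defaultdict


def sort_by_price(products: list[dict]) -> list[dict]:
    """Search results screen: cheapest product first."""
    return sorted(products, key=lambda p: p["price"])


def filter_by_source(products: list[dict], sources: set[str]) -> list[dict]:
    """First floating button: keep only products from the selected stores."""
    return [p for p in products if p["store"] in sources]


def totals_by_source(comparison_list: list[dict]) -> dict[str, float]:
    """Second floating button: total price of the listed items, per store."""
    totals: dict[str, float] = defaultdict(float)
    for p in comparison_list:
        totals[p["store"]] += p["price"]
    return dict(totals)
```

Python's sorted() is stable, so products with equal prices keep their original order, which avoids results jumping around between refreshes.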

The modular structure of this system makes it easy to integrate new retailers over time, without increasing maintenance complexity. Notably, the implementation follows ethical scraping guidelines, ensuring minimal server load and respectful adherence to each platform’s usage policies.

Beyond its technical merits, the system provides real-world value by helping users make quicker, better-informed shopping decisions. In a highly price-sensitive market, real-time comparisons save users both money and the effort of switching between multiple apps. The project shows how open source technologies, combined with thoughtful design, can address practical consumer challenges while maintaining flexibility, scalability, and ethical integrity.

What still needs to be done

Although the system achieves its core objectives, there are several areas where functionality can be expanded. These enhancements span technical scalability, user experience, and system performance.

Improved scalability

Asynchronous scraping using a task queue such as Celery, or a message broker such as Kafka, could handle requests to multiple platforms in parallel and lower response latency.
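Celery and Kafka distribute work across processes and machines. As a simpler, single-process illustration of the same idea (a swapped-in technique, not what the article proposes), the sketch below uses asyncio to query every store concurrently with a per-store timeout, so one slow platform cannot hold up the whole response. The latencies are hypothetical and fetch_store only simulates a scraper.

```python
import asyncio

# Hypothetical per-store latencies (seconds) standing in for real scraper run times.
STORE_LATENCY = {"Zepto": 0.05, "JioMart": 0.1, "BigBasket": 2.0}


async def fetch_store(store: str, query: str) -> list[dict]:
    """Placeholder for one scraper call; the sleep simulates page load and parsing."""
    await asyncio.sleep(STORE_LATENCY[store])
    return [{"store": store, "name": query}]


async def search_all(query: str, timeout: float = 0.5) -> list[dict]:
    """Query every store concurrently; a store that times out or fails is skipped."""
    tasks = [asyncio.wait_for(fetch_store(s, query), timeout) for s in STORE_LATENCY]
    results = await asyncio.gather(*tasks, return_exceptions=True)
    merged: list[dict] = []
    for store, result in zip(STORE_LATENCY, results):
        if isinstance(result, BaseException):
            # The real service would log this and return partial results.
            print(f"skipping {store}: {type(result).__name__}")
            continue
        merged.extend(result)
    return merged
```

Here the response arrives after about 0.5 s with two stores' results, instead of about 2.15 s if the stores were queried one after another. Selenium calls are blocking, so real scrapers would need to be wrapped with asyncio.to_thread (or handed to worker processes, as Celery does) to run concurrently.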

Historical pricing and insights

Adding backend support for logging historical price data would enable trend analysis and allow users to make more informed, data-driven decisions.
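A minimal sketch of historical price logging, assuming Python's built-in sqlite3 module and a hypothetical price_history table: each scrape appends one row per product and store, and a summary query returns the minimum, maximum, and average price per store. A production deployment would likely use a server database, but the schema idea carries over.

```python
import sqlite3
from datetime import datetime, timezone
from typing import Optional


def open_history(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the hypothetical price_history table."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS price_history (
               store TEXT NOT NULL,
               product TEXT NOT NULL,
               price REAL NOT NULL,
               observed_at TEXT NOT NULL
           )"""
    )
    return conn


def record_price(conn: sqlite3.Connection, store: str, product: str, price: float,
                 observed_at: Optional[str] = None) -> None:
    """Append one observation, timestamped in UTC ISO-8601 unless a time is given."""
    ts = observed_at or datetime.now(timezone.utc).isoformat()
    conn.execute("INSERT INTO price_history VALUES (?, ?, ?, ?)", (store, product, price, ts))
    conn.commit()


def price_summary(conn: sqlite3.Connection, product: str) -> dict:
    """Per-store minimum, maximum, and average observed price for one product."""
    rows = conn.execute(
        """SELECT store, MIN(price), MAX(price), AVG(price)
           FROM price_history WHERE product = ?
           GROUP BY store ORDER BY store""",
        (product,),
    ).fetchall()
    return {store: {"min": lo, "max": hi, "avg": avg} for store, lo, hi, avg in rows}
```

Storing ISO-8601 UTC timestamps as text keeps them sortable, so trend queries can simply ORDER BY observed_at.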

User personalisation

Features such as account creation, saved searches, price drop notifications, and delivery preferences could provide a more tailored experience.

ML-based recommendations

Machine learning can be introduced to suggest cost-effective alternatives, identify volume-based savings, or rank products by price per unit.
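Before any learned model, ranking by price per unit needs a reliable unit parser. The rule-based sketch below (a non-ML baseline, not the article's method) converts pack sizes such as "500 ml" or "1 kg" to a base unit and ranks products by price per 100 g or 100 ml. The regular expression covers only g, kg, ml, and l; other formats such as "pack of 6" are treated as unparseable and dropped from the ranking.

```python
import re
from typing import Optional

# Pack-size unit -> (base unit, multiplier to reach it).
_TO_BASE = {"g": ("g", 1), "kg": ("g", 1000), "ml": ("ml", 1), "l": ("ml", 1000)}
_SIZE_RE = re.compile(r"(\d+(?:\.\d+)?)\s*(kg|g|ml|l)\b", re.IGNORECASE)


def price_per_base_unit(price: float, size_text: str) -> Optional[tuple[float, str]]:
    """Return (rupees per 100 g or 100 ml, base unit), or None if the size is unparseable."""
    m = _SIZE_RE.search(size_text)
    if m is None:
        return None
    base, factor = _TO_BASE[m.group(2).lower()]
    quantity = float(m.group(1)) * factor
    return round(price / quantity * 100, 2), base


def rank_by_unit_price(products: list[dict]) -> list[dict]:
    """Rank products (assumed to share a base unit) by price per 100 g or 100 ml."""
    scored = []
    for p in products:
        ppu = price_per_base_unit(p["price"], p["unit"])
        if ppu is not None:
            scored.append((ppu[0], p))
    scored.sort(key=lambda pair: pair[0])
    return [p for _, p in scored]
```

Comparing a 1 L pack at ₹52 with a 500 ml pack at ₹27 this way shows the larger pack is cheaper per 100 ml (5.2 versus 5.4), which is exactly the kind of volume-based saving the text mentions.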

Enhanced accessibility

Improving support for screen readers, voice input, and keyboard navigation would make the app more inclusive for users with different accessibility needs.

Caching and scheduled updates

Integrating intelligent caching and background refresh schedules would reduce scraping overhead and improve app responsiveness.
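A minimal sketch of the caching idea: keep each query's results for a fixed time-to-live, so repeated searches within that window are served from memory instead of triggering a fresh scrape. The TTLCache class, the cached_search helper, and the 300-second TTL in the usage note are hypothetical; the injectable clock exists only to make expiry testable.

```python
import time
from typing import Callable


class TTLCache:
    """Cache search results per key for ttl seconds to avoid re-scraping."""

    def __init__(self, ttl: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl
        self.clock = clock
        self._store: dict = {}

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self.clock() >= expires_at:
            del self._store[key]  # stale: force a fresh scrape
            return None
        return value

    def set(self, key, value) -> None:
        self._store[key] = (value, self.clock() + self.ttl)


def cached_search(cache: TTLCache, query: str, scrape: Callable[[str], list]) -> list:
    """Normalise the query, serve from cache if fresh, otherwise scrape and store."""
    key = query.strip().lower()
    hit = cache.get(key)
    if hit is not None:
        return hit
    result = scrape(key)
    cache.set(key, result)
    return result
```

For example, with cache = TTLCache(ttl=300), searches for "Milk" and "milk " within five minutes share one scrape. A background job could refresh popular keys shortly before they expire, which is the "scheduled updates" half of this item.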

Domain expansion

The platform could be extended to support food delivery and cab fare comparison, reusing the existing modular microservices framework to deploy new scraping containers with minimal overhead.

These additions would not only improve performance and user engagement but also strengthen the system’s readiness for larger-scale deployment and broader use cases.