This article outlines the essential tools, platforms, and frameworks for each layer of the agentic AI stack, providing a structured blueprint for organisations seeking to adopt these systems at scale.

Agentic AI refers to AI systems capable of taking actions, using tools, coordinating workflows, and achieving goals with minimal human intervention. Unlike traditional generative AI, which focuses on producing text, images, or code, agentic AI systems can:

- Understand objectives

- Break them into tasks

- Select tools or APIs

- Execute multi-step workflows

- Monitor progress

- Adapt based on feedback

- Collaborate with other agents or humans

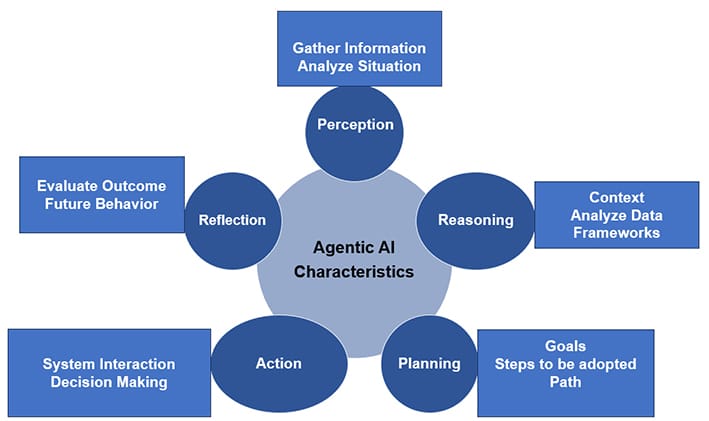

Key characteristics of agentic AI

Agentic AI systems plan, decide, act, and learn. This makes them fundamentally different from conversational or generative AI: they don’t just produce responses; they autonomously execute tasks and drive outcomes across systems. The process involves perception, reasoning, planning, action, and reflection.

Perception

Agentic AI gathers information from its surroundings and different sources like sensors, databases, and user/digital interfaces. This involves analysing text, images, or other forms of data to understand the situation.

Reasoning

It analyses the gathered data to understand the context, identify relevant information, and formulate potential solutions using large language models (LLMs).

Planning

The agent uses the information it gathered to develop a plan. This involves setting goals, breaking them down into smaller steps, and charting a path to achieve them.

Action

The agent executes the plan by interacting with systems to perform the tasks and make decisions.

Reflection

The agent evaluates outcomes and adjusts its future behaviour. Over time, this reflection loop can improve accuracy and reduce the need for manual review.

This continuous cycle of perception, reasoning, planning, action, and reflection is what allows agentic AI to improve with experience.
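The cycle described above can be sketched as a loop. The following is a minimal, dependency-free Python sketch under loud assumptions: the `Agent` class, its counter "environment", and the rule-based `reason` and `plan` methods are hypothetical stand-ins for LLM calls and real enterprise systems, chosen only to make the five stages and the reflection feedback visible.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    """Toy agent running perceive -> reason -> plan -> act -> reflect cycles.

    Hypothetical throughout: the 'environment' is a counter, and rule-based
    methods stand in for the LLM calls a real agent would make.
    """
    goal: int                                    # target value the agent must reach
    state: int = 0                               # environment the agent acts on
    step_size: int = 4                           # tuned by the reflection step
    history: list = field(default_factory=list)  # log of evaluated outcomes

    def perceive(self) -> dict:
        # Perception: read the current situation from the environment.
        return {"value": self.state, "remaining": self.goal - self.state}

    def reason(self, obs: dict) -> str:
        # Reasoning: interpret the observation relative to the goal.
        if obs["remaining"] == 0:
            return "done"
        return "increase" if obs["remaining"] > 0 else "decrease"

    def plan(self, assessment: str) -> list:
        # Planning: choose the next concrete step(s) towards the goal.
        return [self.step_size if assessment == "increase" else -self.step_size]

    def act(self, actions: list) -> None:
        # Action: apply each planned step to the environment.
        for delta in actions:
            self.state += delta

    def reflect(self, remaining_before: int) -> None:
        # Reflection: evaluate the outcome and adjust future behaviour.
        remaining_after = self.goal - self.state
        overshot = remaining_before * remaining_after < 0  # crossed the goal
        if overshot:
            self.step_size = max(1, self.step_size // 2)
        self.history.append({"before": remaining_before,
                             "after": remaining_after,
                             "overshot": overshot})

    def run(self, max_steps: int = 20) -> int:
        for _ in range(max_steps):
            obs = self.perceive()
            assessment = self.reason(obs)
            if assessment == "done":
                break
            self.act(self.plan(assessment))
            self.reflect(obs["remaining"])
        return self.state
```

Here the reflection step halves the step size after an overshoot, so `Agent(goal=10).run()` settles on 10 after four actions. In a real system, reflection would more likely adjust prompts, plans, or memory than a numeric parameter.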

Industry trends in agentic AI

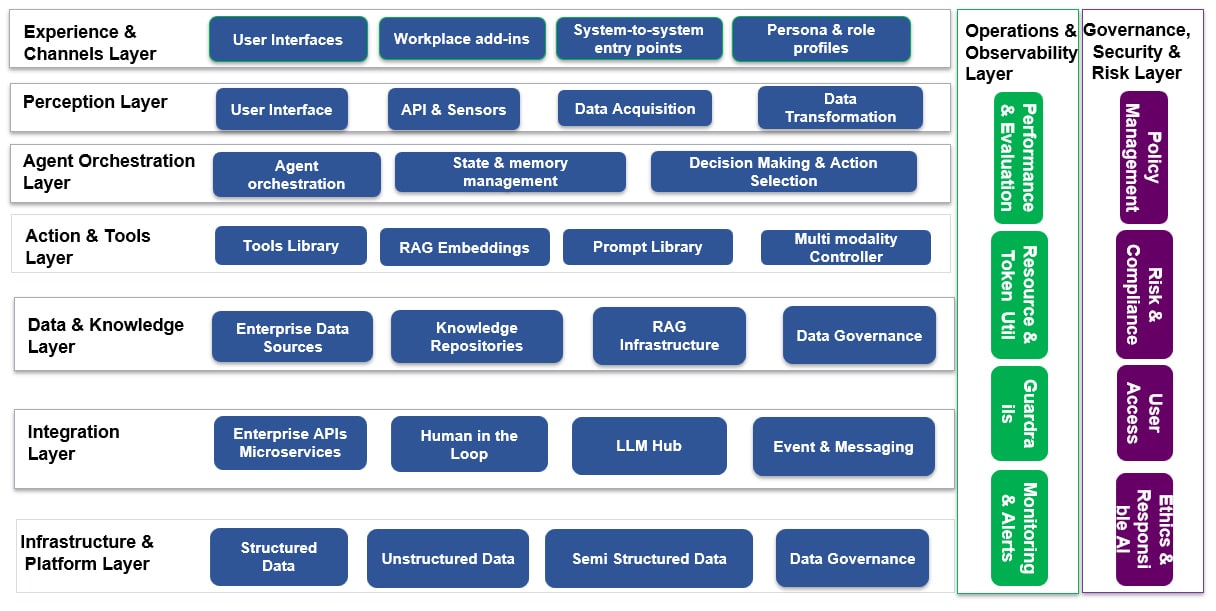

Agentic AI reference architecture

Agentic AI systems can follow either a single-agent or a multi-agent architecture. These systems leverage machine learning models and methods to enable intelligent decision-making and automation.

An agentic AI architecture must be robust, modular, and secure to support autonomous reasoning, workflow orchestration, and multi‑agent collaboration. It integrates planning, memory, execution, safety, and governance across the enterprise.

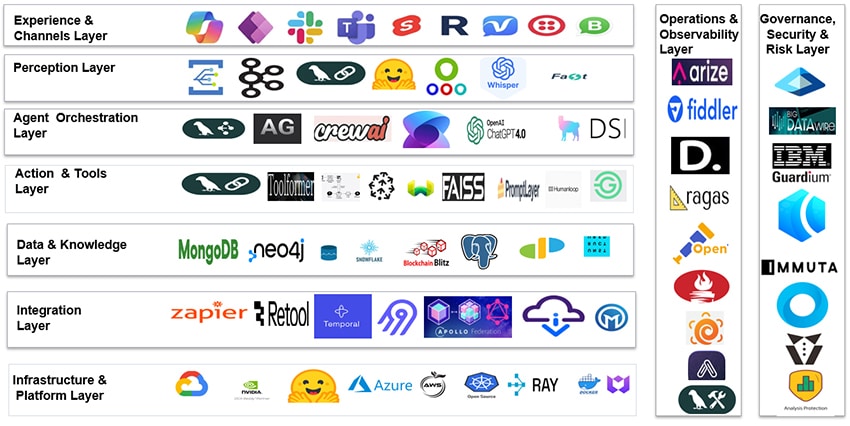

The agentic AI architecture comprises the following layers:

- Experience and channels layer

- Perception layer

- Cognitive / agent orchestration layer

- Action and tools layer

- Data and knowledge layer

- Integration layer

- Infrastructure and platform layer

- Operations and observability layer

- Governance, security, and risk layer

Experience and channels layer

This layer focuses on the user interface and how humans or external systems interact with the agents. Its components are:

- User interfaces: Web apps, portals, mobile apps, chat widgets, voice interfaces, CLI

- Workplace add-ins: Teams/Slack plugins, Outlook add‑ins, intranet tiles, line‑of‑business UI embeds

- System‑to‑system entry points: Webhooks, event streams, scheduled jobs that trigger agents

- Persona and role profiles: Map enterprise roles to specific agents, tools, and permissions

The tools used in this layer are Microsoft Copilot, Power Apps, Slack/Teams bots, Streamlit, Retool, Voiceflow, Twilio, WhatsApp Business API.

Perception layer

This is the ingestion and normalisation layer, where agents perceive the world. It gathers text, images, and other data from sources such as sensors, databases, and user or digital interfaces, then converts unstructured inputs into a structured format so that the cognitive layer can process information efficiently. The components of this layer are:

- User interface input handlers: Text, voice, image, form data, file uploads

- APIs and sensors: Domain APIs, IoT feeds, logs, events, EHR/ERP/CRM data triggers

- Data acquisition systems: Batch ingestion, message buses (Kafka, Service Bus), streaming platforms

- Data transformation and normalisation:

  - Parsing, validation, schema mapping

  - PII redaction/de‑identification where needed

  - Conversion into canonical ‘events’ or ‘observations’ for agents

Tools used in the perception layer are Azure Event Grid, Kafka, LangChain input parsers, Hugging Face Transformers, OpenCV (vision), Whisper (speech), FastAPI.
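To illustrate the normalisation step, here is a minimal sketch that validates a raw input, maps it to a canonical observation, and redacts obvious PII. The `Observation` schema, the raw field names (`channel`, `type`, `body`, `ts`), and the two regular expressions are hypothetical; real PII detection needs far broader coverage than two patterns.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timezone

# Deliberately simple, hypothetical patterns; real PII detection needs much more.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s-]{7,}\d")

REQUIRED = {"channel", "type", "body"}  # hypothetical raw input fields


@dataclass(frozen=True)
class Observation:
    """Hypothetical canonical observation handed to the cognitive layer."""
    source: str
    kind: str
    text: str
    received_at: str


def redact(text: str) -> str:
    # De-identification: replace matched PII with placeholder tokens.
    return PHONE.sub("[PHONE]", EMAIL.sub("[EMAIL]", text))


def normalise(raw: dict) -> Observation:
    # Validation: reject inputs missing required fields.
    missing = REQUIRED - set(raw)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    # Schema mapping: source-specific keys -> canonical, cleaned fields.
    return Observation(
        source=raw["channel"].strip().lower(),
        kind=raw["type"].strip().lower(),
        text=redact(raw["body"].strip()),
        received_at=raw.get("ts") or datetime.now(timezone.utc).isoformat(),
    )
```

For example, a body of `" Call me on +44 7700 900123 or mail jo@example.com "` becomes `"Call me on [PHONE] or mail [EMAIL]"`, ready for the cognitive layer without exposing the raw contact details.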

Agent orchestration layer

This layer is the brain of the agentic AI system. It manages the agent’s internal state, processes information from the perception layer, and determines the most appropriate actions based on its goals and current understanding. The main purpose of this layer is to plan, reason, orchestrate tools, manage state, and coordinate multi‑agent workflows. Key capabilities are:

- Planning and task decomposition: Break high‑level goals into sub‑tasks, sequence them, and adapt plans as new information arrives.

- Agent orchestration: Single-agent flows (one agent managing a workflow) and multi‑agent collaboration

- State and memory management: Short-term conversation state, long-term task memory, episodic memory of past runs and outcomes

- Contextual reasoning: Using retrieved knowledge, tool outputs, and history to make decisions; handling ambiguity and asking clarifying questions when needed.

- Continuous learning (behavioural): Incorporating feedback signals, success/failure, and human corrections into future decisions (via patterns, not direct self‑training in production models).

- Decision-making and action selection: Choosing which tool to call, when to involve humans, and how to proceed given constraints.

Tools used in this layer are LangGraph, AutoGen, CrewAI, Semantic Kernel, OpenAI Function Calling, LlamaIndex, DSPy.
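Planning, task decomposition, and short-term state can be illustrated with Python's standard `graphlib` module (Python 3.9+). This is a sketch, not how LangGraph or the other listed frameworks work internally: the refund plan, task names, and handler functions are hypothetical, and the plan is fixed rather than generated or revised by an LLM.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical decomposition of the goal "resolve a refund request".
# Each sub-task maps to the set of sub-tasks it depends on.
PLAN = {
    "fetch_order": set(),
    "check_policy": {"fetch_order"},
    "compute_refund": {"fetch_order", "check_policy"},
    "notify_customer": {"compute_refund"},
}

# Hypothetical handlers; a real agent would call tools or an LLM here.
HANDLERS = {
    "fetch_order": lambda deps: {"amount": 120.0, "days_since_purchase": 10},
    "check_policy": lambda deps: deps["fetch_order"]["days_since_purchase"] <= 30,
    "compute_refund": lambda deps: deps["fetch_order"]["amount"] if deps["check_policy"] else 0.0,
    "notify_customer": lambda deps: f"Refund of {deps['compute_refund']:.2f} approved",
}


def execute(plan: dict, handlers: dict) -> dict:
    """Run sub-tasks in dependency order, passing results via shared state."""
    memory = {}  # short-term task memory: results of completed sub-tasks
    for task in TopologicalSorter(plan).static_order():
        inputs = {dep: memory[dep] for dep in plan[task]}
        memory[task] = handlers[task](inputs)
    return memory


if __name__ == "__main__":
    print(execute(PLAN, HANDLERS)["notify_customer"])  # Refund of 120.00 approved
```

Each sub-task runs only after its dependencies, and its inputs come from the shared `memory` dictionary. Orchestration frameworks typically add what this sketch lacks, such as persisted state, conditional branching, and re-planning when a step fails.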

Action and tools layer

This layer translates decisions from the orchestration layer into activity. It provides a structured, governed toolkit that agents can safely invoke to read, write, and act across the enterprise, with controls that validate actions and monitor their execution. The various components of this layer are:

- Tools library/skills registry: CRUD operations on enterprise systems, domain workflows, external services

- RAG and embeddings: Embedding models, vector stores for documents, KBs, FAQs, policies, retrieval pipelines and ranking logic

- Prompt/pattern library: Reusable prompt templates, chain/graph definitions, safety patterns and escalation prompts

- HITL controller and coordinator: Human-in-the-loop decision points, approval workflows, exception handling flows and routing

- Multimodality controller: Handling text, images, audio, and structured data interchangeably; routing to specialist models (vision, speech, code, etc)

Some of the prominent tools used in this layer are LangChain, Toolformer, RAG pipelines, Pinecone, Weaviate, FAISS, PromptLayer, Humanloop, Guardrails.ai.
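A skills registry with a human-in-the-loop gate might look like the following minimal sketch. `ToolRegistry`, the tool names, and the `approver` callback are hypothetical; in practice the approver would route the request to a review portal or approval workflow rather than apply a rule in code.

```python
from typing import Callable


class ToolRegistry:
    """Hypothetical skills registry with a human-in-the-loop (HITL) gate."""

    def __init__(self, approver: Callable[[str, dict], bool]):
        self._tools = {}           # name -> (function, requires_approval)
        self._approver = approver  # HITL decision point, e.g. an approval workflow

    def register(self, name: str, requires_approval: bool = False):
        # Decorator that adds a function to the registry under `name`.
        def decorator(fn):
            self._tools[name] = (fn, requires_approval)
            return fn
        return decorator

    def invoke(self, name: str, **kwargs) -> dict:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        fn, requires_approval = self._tools[name]
        # Side-effecting tools pause for a human (or policy) decision first.
        if requires_approval and not self._approver(name, kwargs):
            return {"status": "rejected", "tool": name}
        return {"status": "ok", "tool": name, "result": fn(**kwargs)}


if __name__ == "__main__":
    # Stand-in for a human reviewer: approve credits up to 100 only.
    registry = ToolRegistry(approver=lambda name, args: args.get("amount", 0) <= 100)

    @registry.register("issue_credit", requires_approval=True)
    def issue_credit(customer: str, amount: float) -> str:
        return f"credited {amount} to {customer}"

    print(registry.invoke("issue_credit", customer="c1", amount=500))  # status 'rejected'
    print(registry.invoke("issue_credit", customer="c1", amount=50))   # status 'ok'
```

Keeping the approval decision outside the tool functions means the same tools can run unattended in low-risk contexts and behind an approval step in high-risk ones.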

Data and knowledge layer

This layer provides governed, high‑quality, context‑rich knowledge and data that agents can reason over. The components of this layer are:

- Enterprise data sources: Data warehouses, data lakes, operational DBs, domain systems

- Knowledge repositories: Document stores, wikis, policy repositories, SOPs, email archives, tickets, chat logs, call transcripts

- Semantic layer: Ontologies, taxonomies, business glossaries, knowledge graphs linking entities and relationships

- RAG infrastructure: Vector stores for per‑domain corpora, indexing, chunking, metadata tagging

- Data governance: Data classification, data lineage and stewardship, access policies (by role, domain, sensitivity)

Tools used in this layer are Azure Data Lake, Snowflake, Databricks, MongoDB Atlas, Neo4j, Postgres, Elasticsearch, Milvus, Unstructured.io.
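The chunking, metadata tagging, and retrieval steps of RAG infrastructure can be sketched without any external library. As a loud assumption, a term-frequency vector stands in for a real embedding model, and a Python list stands in for a vector store such as Milvus; the documents, domain tags, and chunk sizes are made up for illustration.

```python
import math
import re
from collections import Counter
from typing import Optional

# Hypothetical corpus: doc id -> (domain tag, text).
DOCS = {
    "refund-policy": ("finance", "Refunds are issued within 30 days of purchase when the item is unused"),
    "vpn-guide": ("it", "Connect to the VPN before accessing internal dashboards from home"),
}


def chunk(text: str, size: int = 8, overlap: int = 2) -> list:
    """Split text into windows of `size` words that overlap by `overlap` words."""
    words = text.split()
    step = size - overlap  # assumes overlap < size
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


def embed(text: str) -> Counter:
    # Stand-in for an embedding model: a lowercase term-frequency vector.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(docs: dict) -> list:
    # Index entries keep the text, its vector, and metadata for filtering and citation.
    return [
        {"doc": doc_id, "domain": domain, "chunk": n, "text": piece, "vec": embed(piece)}
        for doc_id, (domain, text) in docs.items()
        for n, piece in enumerate(chunk(text))
    ]


def retrieve(index: list, query: str, domain: Optional[str] = None, k: int = 2) -> list:
    # Metadata filter first, then rank the remaining chunks by similarity.
    q = embed(query)
    candidates = [e for e in index if domain is None or e["domain"] == domain]
    return sorted(candidates, key=lambda e: cosine(q, e["vec"]), reverse=True)[:k]


if __name__ == "__main__":
    index = build_index(DOCS)
    for hit in retrieve(index, "how many days for refunds", k=1):
        print(hit["doc"], hit["chunk"], hit["text"])
```

The `domain` filter shows why metadata tagging matters: it lets retrieval respect per-domain corpora and access policies before any similarity ranking happens.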

Integration layer

This layer provides robust, secure connectivity between agents, data, and enterprise applications. Various components of this layer are:

- Enterprise APIs and microservices: REST/GraphQL/gRPC endpoints to line‑of‑business systems

- Human‑in‑the‑loop interfaces: Review portals, approval dashboards, case management tools, workflow inboxes

- LLM hub and enterprise LLM gateways: Routing across multiple models (open, closed, domain‑tuned), policy-based model selection (e.g., sensitivity, latency, cost)

- Enterprise data and knowledge base access: Connectors to data warehouses, data lakes, ECM systems, search

- Event and messaging: Pub/Sub integration with enterprise buses (e.g., Kafka, Service Bus, Google Cloud Pub/Sub)

Tools used in this layer are Azure API Management, MuleSoft, Zapier, Retool, Temporal, Airbyte, GraphQL Federation, REST/gRPC endpoints.
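Policy-based model selection in an LLM gateway can be reduced to filtering a model catalogue by policy and then optimising for cost. The catalogue entries, their latency and cost figures, and the sensitivity labels below are hypothetical placeholders, not real models or prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRoute:
    name: str                  # hypothetical route identifier
    hosting: str               # "private" (self-hosted) or "public" (external API)
    p95_latency_ms: int        # illustrative figure, not a benchmark
    cost_per_1k_tokens: float  # illustrative figure, not a real price


CATALOGUE = [
    ModelRoute("large-public", "public", 1800, 0.0100),
    ModelRoute("small-public", "public", 400, 0.0005),
    ModelRoute("domain-private", "private", 900, 0.0020),
]


def select_model(sensitivity: str, max_latency_ms: int) -> ModelRoute:
    """Return the cheapest route that satisfies sensitivity and latency policy."""
    allowed = [
        m for m in CATALOGUE
        # Policy 1: confidential data may only go to privately hosted models.
        if (sensitivity != "confidential" or m.hosting == "private")
        # Policy 2: the route must meet the caller's latency budget.
        and m.p95_latency_ms <= max_latency_ms
    ]
    if not allowed:
        raise LookupError("no model satisfies the policy")
    return min(allowed, key=lambda m: m.cost_per_1k_tokens)
```

With these placeholder figures, `select_model("public", 2000)` picks `small-public`, while `select_model("confidential", 2000)` is restricted to the privately hosted `domain-private` route. Failing loudly when no route qualifies is a deliberate choice: silently falling back to a public model would defeat the sensitivity policy.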

Infrastructure and platform layer

This layer provides a scalable, highly available, reliable and resilient foundation for agents to execute complex tasks efficiently. The components of this layer are:

- Cloud platforms: Azure, AWS, Google Cloud, NVIDIA and specialised AI clouds

- Runtime: GPU clusters, CPU pools, Kubernetes, serverless, container orchestrators

- Model and LLM infrastructure: LLM API gateways and routers, and hosted model endpoints

- Vector and agent state databases: Vector DBs for embeddings, NoSQL/relational stores for agent state and logs

- Data engineering pipelines: ETL/ELT pipelines feeding data and knowledge layer, data quality tools and schedulers

- External services: Identity providers, secrets management, key vaults, observability stacks

Tools used in this layer are Azure, AWS, GCP, NVIDIA DGX, Hugging Face Inference Endpoints, Kubernetes, Ray, Docker, Terraform.

Operations and observability layer

This layer monitors system activity, provides feedback mechanisms, and drives continuous improvement by optimising processes based on operational data. Its components are:

- Performance and evaluation: Metrics on task success, latency, cost, and user satisfaction

- Resource and token utilisation: Telemetry for token usage, GPU/CPU, memory, cost tracking and optimisation policies

- Agent traces and behaviour tracking: Full traces of tool calls, decisions, and outcomes, episode‑level and step‑level logs

- Guardrails and runtime safety: LLM safety filters, PII detection, rate limiting, policy enforcement, circuit breakers

- Monitoring and alerts: Dashboards for health, anomalies, and drift; alerts on suspicious behaviour, failure spikes, and policy violations

Tools used in this layer are Arize AI, Fiddler, DeepEval, RAGAS, OpenTelemetry, Prometheus, Grafana, AgentOps, LangSmith.
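Circuit breakers, listed among the runtime safety controls above, stop an agent from repeatedly hammering a failing tool. This is a minimal, generic sketch of the pattern (closed, open, and half-open states), not the API of any tool named in this layer; the threshold and cooldown values are arbitrary, and the injectable `clock` exists only to make the behaviour testable.

```python
import time
from typing import Callable


class CircuitBreaker:
    """Generic circuit breaker: after `threshold` consecutive failures the
    circuit opens and blocks calls; once `cooldown` seconds pass, one trial
    call is allowed through (half-open) and its outcome closes or reopens it."""

    def __init__(self, threshold: int = 3, cooldown: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.threshold = threshold
        self.cooldown = cooldown
        self.clock = clock      # injectable so tests can control time
        self.failures = 0       # consecutive failures seen so far
        self.opened_at = None   # None means the circuit is closed

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None and self.clock() - self.opened_at < self.cooldown:
            raise RuntimeError("circuit open: call blocked")
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.threshold:
                self.opened_at = self.clock()  # open, or reopen after a failed trial
            raise
        # Success closes the circuit and resets the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Wrapping each tool invocation in a breaker like this turns a failure spike into a fast, explicit error that the monitoring and alerting components can pick up.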

Governance, security, and risk layer

This layer ensures agents operate within legal, ethical, and enterprise boundaries. Major responsibilities include:

- Identity and access management: Agent identities and service principals, role‑based and attribute‑based access control, action‑level permissions

- Policy management: Data usage policies (PII, PHI, PCI, etc), regulatory constraints, model usage policies and provider constraints

- Risk and compliance oversight: Logging and auditability of every action and decision, compliance mapping (HIPAA, GDPR, SOC 2, etc, as applicable)

- Ethics and responsible AI: Bias testing, fairness checks, human accountability and override mechanisms, incident response playbooks for AI failures

Tools used in this layer are Azure Purview, Immuta, Okta, Vault, AWS IAM, Microsoft Entra, Truera, IBM Guardium, BigID.
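Action-level permissions combining role-based and attribute-based checks, with every decision written to an audit trail, might be sketched as follows. The role names, action strings, clearance attribute, and in-memory `AUDIT_LOG` are hypothetical; a production system would delegate identity to a provider such as Microsoft Entra or Okta and write audit records to durable, tamper-evident storage.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical role -> permitted actions (role-based access control).
ROLE_GRANTS = {
    "support-agent": {"ticket.read", "ticket.update"},
    "finance-agent": {"invoice.read", "refund.issue"},
}

AUDIT_LOG = []  # in-memory stand-in for an append-only audit store


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    roles: frozenset
    clearance: str  # attribute for ABAC checks, e.g. "standard" or "restricted"


def authorise(agent: AgentIdentity, action: str, sensitivity: str) -> bool:
    # RBAC: at least one of the agent's roles must grant this specific action.
    role_ok = any(action in ROLE_GRANTS.get(role, set()) for role in agent.roles)
    # ABAC: restricted resources additionally need restricted clearance.
    attr_ok = sensitivity != "restricted" or agent.clearance == "restricted"
    allowed = role_ok and attr_ok
    # Auditability: record every decision, allowed or denied.
    AUDIT_LOG.append({
        "ts": datetime.now(timezone.utc).isoformat(),
        "agent": agent.agent_id,
        "action": action,
        "sensitivity": sensitivity,
        "allowed": allowed,
    })
    return allowed
```

For instance, an agent holding only the `finance-agent` role with `standard` clearance may issue refunds on internal records, but is denied on restricted ones and cannot update tickets; all three decisions land in the audit log.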

Open source tools for agentic AI architecture

This section briefly outlines open source tools used in the different layers of agentic AI architecture.

Experience and channels layer

- Streamlit: This framework facilitates rapid prototyping by allowing developers to create Python-based web interfaces for machine learning applications without deep frontend expertise.

Perception layer

- Kafka: This serves as the real-time event backbone, streaming data from various sources to the agent.

- Hugging Face Transformers: Provides pre-trained models for natural language processing (NLP) to interpret text data.

- OpenCV: A comprehensive toolkit for computer vision, allowing agents to process and understand visual inputs.

- Whisper: Specifically handles speech-to-text conversion, enabling voice-activated agentic workflows.

- FastAPI: High-performance web framework used to build APIs that ingest data and serve model predictions.

- LangChain input parsers: Framework that normalises varied input formats into structured data types that agents can process consistently.

Agent orchestration layer

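- LangGraph: Framework for building complex, stateful agentic workflows as graphs of nodes and edges; unlike a strict directed acyclic graph (DAG), it supports cycles, so agents can loop back to earlier steps.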

- AutoGen: Specifically designed for building multi-agent systems, where agents can converse to solve tasks.

- CrewAI: Manages role-based multi-agent flows, assigning specific ‘personas’ and tasks to different agents.

- Semantic Kernel: This integrates LLMs with conventional programming, focusing heavily on agent memory and planning capabilities.

- LlamaIndex: This acts as the interface between data and agents, ensuring the LLM can query and reason over private data.

- DSPy: Framework for a programmatic approach to optimising LLM prompts and weights, moving away from manual prompt engineering towards declarative programming.

Action and tools layer

- LangChain: Provides a standard interface for agents to call external functions, APIs, or scripts.

- Toolformer: Model trained to decide which tools to call and when, in a self-supervised manner.

- RAG pipelines: These implement retrieval-augmented generation to fetch relevant context before generating a response.

- Weaviate: Vector database used to store and retrieve high-dimensional data for RAG.

- FAISS: Library optimised for efficient similarity search and clustering of dense vectors.

- Guardrails.ai: Framework that ensures safety and reliability by validating agent outputs against specific quality and security constraints.

Data and knowledge layer

- Neo4j: Graph database used to store complex relationships between data points, useful for ‘GraphRAG’.

- Postgres: Traditional relational database for structured data storage and transactional integrity.

- Elasticsearch: Distributed search engine used for rapid full-text indexing and retrieval.

- Milvus: Highly scalable vector database for managing large-scale embedding data.

Integration layer

- Temporal: Framework that ensures durable, reliable execution of long-running workflows, handling retries and state management.

- Airbyte: ETL/ELT platform with a library of connectors to sync data from various sources into the agent’s knowledge layer.

- GraphQL Federation: Framework that unifies multiple microservices into a single graph, allowing agents to query across different services seamlessly.

- REST/gRPC endpoints: Standardised communication protocols for interoperability between the agent and other software services.
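One of the things durable execution engines such as Temporal handle for you is retrying failed steps. The sketch below shows only that piece, capped exponential backoff with jitter, in plain Python; it is not Temporal's API and, unlike Temporal, it does not persist workflow state across process restarts. The parameter defaults are arbitrary.

```python
import random
import time


def retry(fn, max_attempts: int = 4, base_delay: float = 0.5,
          max_delay: float = 8.0, sleep=time.sleep):
    """Call fn() and retry on any exception with capped exponential backoff.

    Unlike a durable workflow engine, nothing here survives a process restart.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Jitter spreads retries out so many agents don't retry in lockstep.
            sleep(delay * random.uniform(0.5, 1.0))
```

Retries like this are only safe for idempotent operations; a step that charges a card or sends an email needs an idempotency key or deduplication before it can be retried blindly.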

Infrastructure and platform layer

- Kubernetes (K8s): Framework that manages the deployment, scaling, and orchestration of agent containers.

- Ray: Framework for scaling AI and Python workloads, such as distributed training or parallelising agentic tasks.

- Docker: Provides the container runtime to ensure the agent’s environment is consistent across different machines.

- Terraform: Enables infrastructure as code (IaC) to automatically provision the cloud resources needed for the AI stack.

Operations and observability layer

- Arize AI: Platform that provides observability specifically for LLMs, tracking traces and identifying where a reasoning chain may have failed.

- DeepEval: Framework for testing and evaluating LLM outputs based on specific metrics.

- RAGAS: Designed to evaluate the performance of retrieval-augmented generation pipelines.

- Prometheus: Monitoring tool that collects and stores metrics (CPU, memory, latency) as time-series data.

- Grafana: Visualisation platform used to create dashboards for the metrics collected by Prometheus.
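Tools such as DeepEval, RAGAS, and Prometheus compute far richer metrics, but the basic operational numbers from the layer description (task success, latency, token usage, and cost) can be derived from agent traces in a few lines. The `Trace` fields and the flat per-1,000-token price are hypothetical simplifications; real pricing often differs for prompt and completion tokens.

```python
from dataclasses import dataclass


@dataclass
class Trace:
    """Hypothetical per-episode record emitted at the end of an agent run."""
    task_id: str
    success: bool
    latency_ms: float
    prompt_tokens: int
    completion_tokens: int


def p95(values: list) -> float:
    # Nearest-rank percentile, using integer ceiling to avoid float rounding.
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100
    return ordered[rank - 1]


def summarise(traces: list, price_per_1k_tokens: float) -> dict:
    if not traces:
        raise ValueError("no traces to summarise")
    total_tokens = sum(t.prompt_tokens + t.completion_tokens for t in traces)
    return {
        "success_rate": sum(t.success for t in traces) / len(traces),
        "p95_latency_ms": p95([t.latency_ms for t in traces]),
        "total_tokens": total_tokens,
        "cost": total_tokens / 1000 * price_per_1k_tokens,
    }
```

In practice these figures would be exported as time-series metrics (for example, scraped by Prometheus and charted in Grafana) rather than computed in a one-off batch.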

Governance, security, and risk layer

- Vault (HashiCorp): Secrets management tool that stores sensitive information such as API keys, passwords, and certificates, and ensures agents access them securely.

These tools form a cohesive ecosystem that enables agents to operate with autonomy while remaining aligned with enterprise values and regulatory expectations.

To adopt agentic AI effectively, enterprises must first understand the fundamentals of agentic loops and develop a unified data and AI strategy that supports orchestration, tool integration, memory, and governance.

Agentic AI is not a competitor to human capability but a strategic enabler that augments expertise, amplifies productivity, and powers the next generation of intelligent, autonomous enterprise operations.

Acknowledgements: The authors would like to thank Tricon Solutions LLC and Gspann Technologies, Inc., for providing the time and support needed while this article was being written.

Disclaimer: The views expressed in this article are those of the authors. Tricon Solutions LLC and Gspann Technologies, Inc., do not subscribe to the substance, veracity or truthfulness of the said opinion.