Adopting the cloud has never been easier. Managing it, however, has quietly become one of the hardest problems in modern engineering. Open source cloud management tools go a long way towards closing that gap.

Most organisations today operate across multiple cloud providers — often a mix of AWS, Google Cloud, Microsoft Azure, and on-premises infrastructure. While this flexibility enables scale and speed, it also introduces complexity: inconsistent configurations, rising costs, security blind spots, and fragmented visibility.

This is where open source cloud management tools have become essential. They act as the connective tissue across providers, enabling teams to manage infrastructure, applications, costs, and security in a consistent, vendor-neutral way. In 2026, cloud success is no longer defined by which provider you choose, but by how well you manage what you deploy.

Infrastructure provisioning and control planes

Infrastructure-as-code (IaC) is no longer optional — it is the baseline.

Manually creating cloud resources might work at a small scale, but it quickly leads to drift, undocumented changes, and fragile environments. Open source IaC tools solve this by treating infrastructure the same way we treat software: versioned, reviewable, and repeatable.

Terraform and its open source fork OpenTofu have become the standard for provisioning compute, networking, storage, and identity and access management (IAM) across cloud providers. Crossplane takes this further by turning Kubernetes into a control plane for cloud resources, while Pulumi allows teams to define infrastructure using general-purpose programming languages.

What matters here is not the syntax, but the outcome: infrastructure that can be recreated, audited, and evolved safely.
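The core idea behind these tools can be sketched in a few lines: compare what has been declared with what actually exists, and derive the changes needed to close the gap. The snippet below is a toy model in plain Python, not the planning logic of Terraform, OpenTofu, Crossplane, or Pulumi; the resource names and attributes are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    action: str  # "create", "update", or "delete"
    name: str


def plan(desired: dict[str, dict], actual: dict[str, dict]) -> list[Change]:
    """Compare declared resources with existing ones and list the changes needed."""
    changes = []
    for name in sorted(desired.keys() | actual.keys()):
        if name not in actual:
            changes.append(Change("create", name))
        elif name not in desired:
            changes.append(Change("delete", name))
        elif desired[name] != actual[name]:
            changes.append(Change("update", name))
    return changes


# Hypothetical resources: names and attributes are illustrative only.
desired = {
    "bucket/logs": {"versioning": True},
    "vm/web-1": {"size": "small"},
}
actual = {
    "vm/web-1": {"size": "medium"},     # drifted: resized by hand
    "vm/old-batch": {"size": "small"},  # exists but is no longer declared
}

for change in plan(desired, actual):
    print(f"{change.action:6} {change.name}")
```

Because the plan is computed from data rather than typed into a console, it can be reviewed before anything changes, which is what makes drift visible and environments reproducible.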

Kubernetes and application lifecycle management

Once infrastructure exists, the next challenge is managing applications at scale.

Kubernetes has become the common abstraction layer across clouds, whether running on managed services or private clusters. But Kubernetes alone is not enough. Teams need reliable ways to deploy, update, and roll back applications.

Helm simplifies application packaging, while GitOps tools like Argo CD continuously reconcile what is running in the cluster with what is defined in Git. This shift from imperative deployments to declarative state has fundamentally changed how cloud platforms are operated.

The result is fewer manual interventions, faster recovery from failures, and significantly better operational confidence.
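A minimal sketch of the declarative model, assuming each Git commit holds the full desired state as a mapping of application name to version. This is a toy reconciler in plain Python, not how Argo CD or Helm are implemented; the application names and versions are hypothetical.

```python
def reconcile(desired: dict[str, str], live: dict[str, str]) -> list[str]:
    """Change `live` in place until it matches `desired`; return the actions taken."""
    actions = []
    for app, version in desired.items():
        if live.get(app) != version:
            actions.append(f"deploy {app}@{version}")
            live[app] = version
    for app in list(live):
        if app not in desired:
            actions.append(f"remove {app}")
            del live[app]
    return actions


# Hypothetical Git history: each commit is the complete desired state.
commits = [
    {"checkout": "1.4.0", "cart": "2.1.0"},
    {"checkout": "1.5.0", "cart": "2.1.0"},  # new release of checkout
]
live: dict[str, str] = {}

print(reconcile(commits[0], live))  # initial deployment
print(reconcile(commits[1], live))  # only checkout changes
print(reconcile(commits[0], live))  # rollback: reconcile against the older commit
```

In this model a rollback is not a special procedure. It is the same reconciliation run against an earlier commit, which is a large part of why recovery becomes faster and more predictable.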

Observability and monitoring

Uptime is no longer the only metric that matters — visibility is.

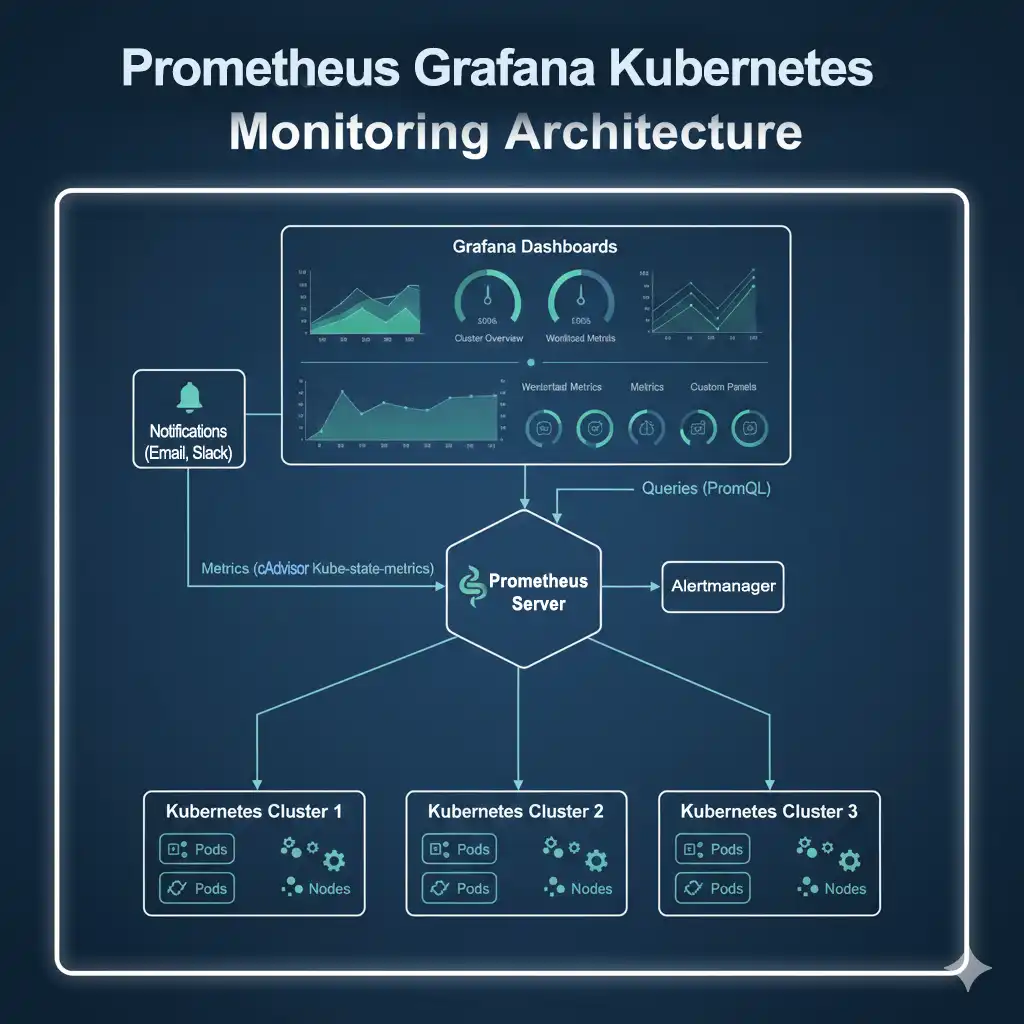

Modern cloud systems are distributed by default. When something breaks, the question is not if it will happen, but how quickly teams can understand why. Open source observability tools provide that insight.

Prometheus has become the backbone for metrics collection, Grafana for visualisation, and OpenTelemetry for standardised collection of traces, metrics, and logs. Together, they allow teams to observe infrastructure, applications, and services across clouds using a single mental model.
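To make the metrics side concrete, the sketch below renders a labelled counter in the plain-text exposition format that Prometheus scrapes. It is a simplified illustration using only the standard library: the `RequestCounter` class and the metric name are hypothetical, label values are not escaped, and a real service would normally use an official client library instead.

```python
from collections import Counter


class RequestCounter:
    """A labelled counter that renders itself in the Prometheus text format."""

    def __init__(self, name: str, help_text: str) -> None:
        self.name = name
        self.help_text = help_text
        self._values: Counter = Counter()

    def inc(self, **labels: str) -> None:
        # Sort the labels so the same label set always maps to the same key.
        self._values[tuple(sorted(labels.items()))] += 1

    def render(self) -> str:
        lines = [
            f"# HELP {self.name} {self.help_text}",
            f"# TYPE {self.name} counter",
        ]
        for labels, value in sorted(self._values.items()):
            label_text = ",".join(f'{key}="{val}"' for key, val in labels)
            if label_text:
                lines.append(f"{self.name}{{{label_text}}} {value}")
            else:
                lines.append(f"{self.name} {value}")
        return "\n".join(lines) + "\n"


http_requests = RequestCounter("http_requests_total", "Total HTTP requests handled.")
http_requests.inc(method="GET", status="200")
http_requests.inc(method="GET", status="200")
http_requests.inc(method="POST", status="500")
print(http_requests.render(), end="")
```

Every service that exposes metrics in this shape can be scraped, queried, and graphed the same way, regardless of which cloud it runs on.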

Cost governance and FinOps

Cloud costs don’t explode because of one big mistake — they grow silently, resource by resource.

Open source FinOps tools help bring financial accountability back into engineering workflows. Kubecost and OpenCost provide visibility into Kubernetes spending, while Infracost estimates cloud costs directly inside CI/CD pipelines before changes are deployed.

In 2026, cloud cost management is no longer just a finance problem. It is an engineering responsibility, and open source tooling makes that responsibility visible and measurable.
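The idea of estimating cost before a change is deployed can be sketched as a simple pipeline check. The example below is a toy calculation, not how Infracost, Kubecost, or OpenCost work internally; the prices, instance sizes, and budget threshold are made-up values for illustration.

```python
# Hypothetical hourly prices in US cents; real prices vary by provider and region.
HOURLY_PRICE_CENTS = {"small": 2, "medium": 4, "large": 8}
HOURS_PER_MONTH = 730  # common approximation for an always-on resource


def monthly_cost_cents(instances: dict[str, str]) -> int:
    """Estimated monthly cost of always-on instances, given name -> size."""
    return sum(HOURLY_PRICE_CENTS[size] * HOURS_PER_MONTH for size in instances.values())


def check_budget(
    before: dict[str, str], after: dict[str, str], max_increase_cents: int
) -> tuple[bool, int]:
    """Return (within_budget, cost_delta_in_cents) for a proposed change."""
    delta = monthly_cost_cents(after) - monthly_cost_cents(before)
    return delta <= max_increase_cents, delta


# Hypothetical change under review: one instance resized, one added.
before = {"web-1": "small", "web-2": "small"}
after = {"web-1": "large", "web-2": "small", "worker-1": "medium"}

ok, delta = check_budget(before, after, max_increase_cents=5000)
print(f"Estimated monthly change: {delta / 100:.2f} USD (within budget: {ok})")
```

Run as part of a pull request, a check like this puts the cost of a change in front of the engineer who is making it, at the moment it is cheapest to reconsider.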

Security, policy and compliance

Security in the cloud cannot rely on checklists and audits alone. It must be enforced continuously, as code.

Tools like Open Policy Agent allow teams to define and enforce policies programmatically. Trivy scans container images and dependencies for vulnerabilities, while Falco monitors runtime behaviour for suspicious activity.

The shift here is subtle but important: security moves from being reactive to being embedded into the platform itself.
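As an illustration of policy as code, the sketch below expresses two rules as ordinary functions and evaluates a resource against them. Open Policy Agent policies are written in its own language, Rego; this Python version is only an analogue of the idea, and the rules and resource fields are hypothetical.

```python
from typing import Callable, Optional

# A policy inspects one resource and returns a violation message, or None if it passes.
Policy = Callable[[dict], Optional[str]]


def no_public_buckets(resource: dict) -> Optional[str]:
    if resource.get("type") == "bucket" and resource.get("public", False):
        return "buckets must not be public"
    return None


def require_owner_label(resource: dict) -> Optional[str]:
    if "owner" not in resource.get("labels", {}):
        return "an 'owner' label is required"
    return None


POLICIES: list[Policy] = [no_public_buckets, require_owner_label]


def evaluate(resource: dict, policies: list[Policy] = POLICIES) -> list[str]:
    """Return every violation for one resource; an empty list means it is allowed."""
    violations = []
    for policy in policies:
        message = policy(resource)
        if message is not None:
            violations.append(message)
    return violations


# Hypothetical resource submitted for deployment.
bucket = {"type": "bucket", "name": "reports", "public": True, "labels": {}}
for violation in evaluate(bucket):
    print(f"DENY {bucket['name']}: {violation}")
```

Because the rules live in version control alongside the infrastructure they govern, they can be reviewed, tested, and applied automatically on every change rather than checked periodically by hand.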

Managing AI and ML workloads on cloud

AI workloads stress cloud systems differently than traditional applications. They demand high compute, specialised hardware, reproducibility, and careful resource scheduling.

Open source tools like MLflow, Kubeflow, and Ray help manage experimentation, training pipelines and distributed workloads across cloud environments. These tools allow teams to treat machine learning as a first-class production workload rather than an experimental afterthought.

As AI adoption grows, cloud platforms without strong ML management quickly become operational bottlenecks.

Choosing the right cloud management stack

There is no universal ‘best’ toolset.

The right cloud management stack depends on team size, regulatory constraints, workload type, and operational maturity. The real challenge is avoiding tool sprawl: adopting too many tools without a clear ownership model.

A small, well-integrated set of open source tools almost always outperforms a large, fragmented stack.

Looking ahead, cloud management is moving towards:

- Platform engineering teams

- Unified control planes

- AI-assisted operations

- Policy-driven automation

Open source will continue to lead this evolution, not because it is free, but because it is transparent, adaptable, and community-driven.

In 2026, the cloud itself is no longer the differentiator. Management is.

The organisations that succeed will be those that invest in open, consistent, and observable platforms that work across AWS, Google Cloud, Microsoft Azure, and beyond. Open source tools make this possible. They give teams control, clarity, and confidence in an increasingly complex cloud landscape. And in the long run, that matters far more than the logo on the console.