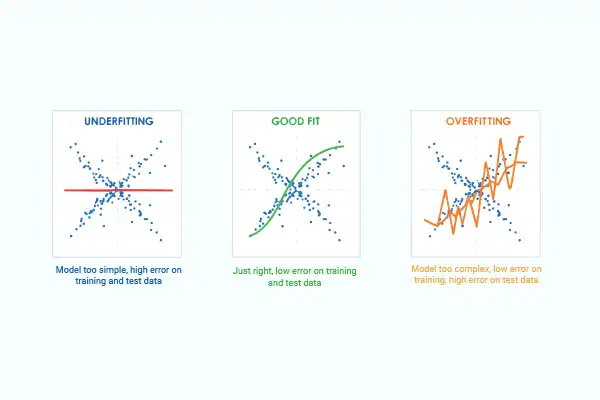

Machine learning models must strike a balance between underfitting and overfitting.

In machine learning, a model’s goal is not just to fit the given data, but to generalise well to unseen data. Two common failure modes prevent this:

- Underfitting – the model is too simple to capture the true pattern.

- Overfitting – the model is too complex and memorises the training data.

I will demonstrate both concepts using simple, executable Python code and a dataset generated from a known mathematical formula. This makes it easy to compare predicted values with true values.

Dataset with known ground truth

To clearly understand model behaviour, we generate data using a known formula:

$$y = x^2 + 2x + 1$$

This quadratic relationship allows us to check:

- How well a model fits training data

- How it behaves on unseen values

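```python
import numpy as np

# Inputs x = 1..10, one feature per row (scikit-learn expects a 2-D array)
X = np.array([[1], [2], [3], [4], [5], [6], [7], [8], [9], [10]])

# Targets from the known formula y = x^2 + 2x + 1
y = np.array([x[0]**2 + 2*x[0] + 1 for x in X])
```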
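The list comprehension works, but NumPy can also apply the formula to the whole column at once. The sketch below is an alternative to the code above, not a replacement for it: it builds the same `X` and `y` and also checks that the targets equal (x + 1)², since x² + 2x + 1 factorises that way.

```python
import numpy as np

# x = 1..10 as a column vector of shape (10, 1), the layout scikit-learn expects
X = np.arange(1, 11).reshape(-1, 1)

# Apply y = x^2 + 2x + 1 to the whole column at once (no Python loop)
y = X[:, 0] ** 2 + 2 * X[:, 0] + 1

print(y.tolist())  # [4, 9, 16, 25, 36, 49, 64, 81, 100, 121]

# x^2 + 2x + 1 factorises as (x + 1)^2, so the targets are perfect squares
assert np.array_equal(y, (X[:, 0] + 1) ** 2)
```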

Underfitting example: Linear regression

Linear regression assumes a straight-line relationship between input and output. Since our data is quadratic, this assumption is incorrect. Here’s the code:

```python
from sklearn.linear_model import LinearRegression

model = LinearRegression()

# Train on x = 1..10
model.fit(X, y)

# Predict and compare against the true formula
print("Underfitting (Linear Regression)")
print("x | predicted | true")
print("--+-----------+------")

print("Seen (training range)")
for v in [1, 3, 5, 7, 9]:
    y_pred = model.predict([[v]])[0]
    y_true = v*v + 2*v + 1
    print(f"{v:2d} | {y_pred:9.2f} | {y_true:4d}")

print("\nUnseen (outside training range)")
for v in [11, 12, 15]:
    y_pred = model.predict([[v]])[0]
    y_true = v*v + 2*v + 1
    print(f"{v:2d} | {y_pred:9.2f} | {y_true:4d}")
```

The output is:

```text
x | predicted | true
--+-----------+------
Seen (training range)
 1 |     -8.00 |    4
 3 |     18.00 |   16
 5 |     44.00 |   36
 7 |     70.00 |   64
 9 |     96.00 |  100

Unseen (outside training range)
11 |    122.00 |  144
12 |    135.00 |  169
15 |    174.00 |  256
```

This is what is observed:

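- The model makes noticeable errors even on the training data, for example predicting -8.00 at x = 1, where the true value is 4.

- Errors grow steadily outside the training range, from 22 at x = 11 to 82 at x = 15.

- A straight line cannot capture the curvature of the data.

This is a classic case of underfitting:

- The model is too simple.

- It fails to learn the true data pattern.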
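The exact numbers in the underfitting output can be traced back to the fitted line itself. The sketch below computes the ordinary least-squares slope and intercept in closed form with NumPy; it is an illustration of the maths, independent of scikit-learn's internals. For this dataset it gives `y = 13x - 21`, which reproduces the predictions above (for example -8 at x = 1 and 174 at x = 15).

```python
import numpy as np

x = np.arange(1, 11, dtype=float)
y = x**2 + 2*x + 1

# Closed-form least squares for a single feature:
# slope = sum((x - mean_x) * (y - mean_y)) / sum((x - mean_x)^2)
# intercept = mean_y - slope * mean_x
x_mean, y_mean = x.mean(), y.mean()
slope = np.sum((x - x_mean) * (y - y_mean)) / np.sum((x - x_mean) ** 2)
intercept = y_mean - slope * x_mean

print(f"slope = {slope:.2f}, intercept = {intercept:.2f}")  # slope = 13.00, intercept = -21.00

# The line under-predicts at both ends and over-predicts in the middle,
# because it cannot bend to follow the curve
for v in [1, 5, 10, 15]:
    print(v, slope * v + intercept, v**2 + 2*v + 1)
```

With the scikit-learn model from the article, `model.coef_[0]` and `model.intercept_` should give the same two numbers.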

Overfitting example: K-Nearest Neighbors (K = 1)

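K-Nearest Neighbors (KNN) predicts the target of a new point from the targets of its K closest training points (for regression, scikit-learn averages them by default). With K = 1, the model simply copies the target of the single nearest training point. The code is: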

```python
from sklearn.neighbors import KNeighborsRegressor

# K = 1: each prediction is the target of the single closest training point
model = KNeighborsRegressor(n_neighbors=1)

# Train (for KNN, fitting mainly stores the training data)
model.fit(X, y)

# Predict and compare against the true formula
print("Overfitting (KNN, K=1)")
print("x | predicted | true")
print("--+-----------+------")

print("Seen (training range)")
for v in [1, 3, 5, 7, 9]:
    y_pred = model.predict([[v]])[0]
    y_true = v*v + 2*v + 1
    print(f"{v:2d} | {y_pred:9.2f} | {y_true:4d}")

print("\nUnseen (outside training range)")
for v in [11, 12, 15]:
    y_pred = model.predict([[v]])[0]
    y_true = v*v + 2*v + 1
    print(f"{v:2d} | {y_pred:9.2f} | {y_true:4d}")
```

The output is:

```text
x | predicted | true
--+-----------+------
Seen (training range)
 1 |      4.00 |    4
 3 |     16.00 |   16
 5 |     36.00 |   36
 7 |     64.00 |   64
 9 |    100.00 |  100

Unseen (outside training range)
11 |    121.00 |  144
12 |    121.00 |  169
15 |    121.00 |  256
```

It can be observed that:

- Predictions are perfect on the training data.

- For unseen values, the model repeats the output of the nearest training point, x = 10 (y = 121), however far away the query is.

- It completely fails to follow the quadratic growth.

This demonstrates overfitting:

- The model memorises the training data.

- It does not learn the underlying function.
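To see why every unseen input returns 121, here is a hand-rolled 1-nearest-neighbour regressor in NumPy. It is a simplified sketch of the idea, not scikit-learn's actual implementation, and the helper name `predict_1nn` is hypothetical.

```python
import numpy as np

# Training data from the article: x = 1..10, y = x^2 + 2x + 1
x_train = np.arange(1, 11, dtype=float)
y_train = x_train**2 + 2*x_train + 1

def predict_1nn(x_query):
    """Hypothetical helper: return the target of the single closest training x."""
    distances = np.abs(x_train - x_query)  # distance in one dimension
    nearest = np.argmin(distances)         # index of the closest point (first one on ties)
    return y_train[nearest]

for v in [3, 7, 11, 12, 15]:
    print(v, predict_1nn(v), v**2 + 2*v + 1)
```

Inside the training range the lookup returns the stored target exactly, so training error is zero. Beyond x = 10 the closest point is always x = 10, so the prediction is frozen at 121 no matter how far out the query is.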

The bias–variance interpretation is:

- Underfitting corresponds to high bias and low variance. The model makes strong assumptions and is consistently wrong.

- Overfitting corresponds to low bias and high variance. The model fits training data perfectly but is unstable on new data.
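The bias and variance claims can be checked empirically. The sketch below uses an assumed setup that is not part of the article: it adds Gaussian noise (standard deviation 10, chosen arbitrarily) to the training targets, refits both models on many noisy copies, and records each model's prediction at x = 5.4, a point between two training inputs. The spread of those predictions estimates variance, and the gap between their average and the true value estimates bias. To stay dependency-light it uses `np.polyfit` for the straight line and a NumPy lookup for 1-NN instead of scikit-learn.

```python
import numpy as np

def f(x):
    """True function from the article: y = x^2 + 2x + 1."""
    return x**2 + 2*x + 1

rng = np.random.default_rng(0)      # fixed seed so the run is repeatable
x_train = np.arange(1, 11, dtype=float)
x0 = 5.4                            # query point between two training inputs
n_trials, noise_sd = 2000, 10.0     # assumed simulation settings, not from the article

lin_preds, knn_preds = [], []
for _ in range(n_trials):
    # Draw a fresh noisy training set: true values plus Gaussian noise
    y_noisy = f(x_train) + rng.normal(0.0, noise_sd, size=x_train.shape)

    # Straight line fitted by least squares (degree-1 polynomial)
    slope, intercept = np.polyfit(x_train, y_noisy, 1)
    lin_preds.append(slope * x0 + intercept)

    # 1-NN: copy the noisy target of the closest training input (here x = 5)
    knn_preds.append(y_noisy[np.argmin(np.abs(x_train - x0))])

results = {}
for name, preds in [("linear", np.array(lin_preds)), ("1-NN", np.array(knn_preds))]:
    bias_sq = (preds.mean() - f(x0)) ** 2  # squared gap between average prediction and truth
    variance = preds.var()                 # spread of predictions across training sets
    results[name] = (bias_sq, variance)
    print(f"{name:6s}  bias^2 = {bias_sq:7.2f}  variance = {variance:7.2f}")
```

With these settings the straight line should show the larger squared bias (its average prediction sits near 49 against a true value of about 41), while the 1-NN lookup should show the larger variance: it copies a single noisy point, so its variance is close to the full noise variance of 100, whereas fitting a line through ten points keeps the line's variance near 10.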

The goal is to find a balance between these two extremes, as the table below summarises.

| Model | Training error | Test error | Behaviour |
|---|---|---|---|
| Linear regression | High | High | Underfit |
| KNN (K=1) | Zero | Very high | Overfit |
| Ideal | Low | Low | Good fit |
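The table's qualitative labels can be put into numbers for this dataset. The sketch below treats x = 1..10 as training data and the article's unseen inputs 11, 12 and 15 as a small test set, then reports mean squared error (MSE) for a straight line, a 1-nearest-neighbour lookup, and a degree-2 polynomial. The quadratic fit is an assumption standing in for the "Ideal" row: it has exactly the capacity the data-generating formula needs. It uses NumPy only; in scikit-learn, a comparable model would typically be built by chaining `PolynomialFeatures` and `LinearRegression`.

```python
import numpy as np

def f(x):
    """True function used to generate the article's data."""
    return x**2 + 2*x + 1

x_train = np.arange(1, 11, dtype=float)
y_train = f(x_train)
x_test = np.array([11.0, 12.0, 15.0])   # the article's "unseen" inputs
y_test = f(x_test)

def mse(y_true, y_pred):
    return float(np.mean((y_true - y_pred) ** 2))

def knn1(xq):
    # For each query, copy the target of the closest training input
    idx = np.argmin(np.abs(x_train[None, :] - xq[:, None]), axis=1)
    return y_train[idx]

line = np.polyfit(x_train, y_train, 1)  # too little capacity: underfits
quad = np.polyfit(x_train, y_train, 2)  # same degree as the true formula

models = {
    "Linear regression": lambda xq: np.polyval(line, xq),
    "KNN (K=1)": knn1,
    "Quadratic": lambda xq: np.polyval(quad, xq),
}

errors = {}
for name, predict in models.items():
    errors[name] = (mse(y_train, predict(x_train)), mse(y_test, predict(x_test)))
    print(f"{name:18s} train MSE = {errors[name][0]:8.2f}  test MSE = {errors[name][1]:8.2f}")
```

On this data the straight line gives a training MSE of 52.8 and a test MSE of 2788, the 1-NN lookup gives 0 and about 7019, and the quadratic gives essentially 0 for both, matching the Underfit, Overfit and Good fit rows.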

To conclude, underfitting means not learning enough, while overfitting means learning too much. Good models learn the pattern, not the data points.