With hallucinations, bias, opaque decisions, and CO₂ costs adding up, AI needs discipline and responsibility built in from the start.

Cases of AI causing real-world harm increased noticeably in 2024-25. Responsible AI has now become a mandatory compliance layer for enterprise stability, moving from ‘good practice’ to a fundamental design condition.

Global incident trackers, including the AI Incident Database and the OECD AI Incidents Monitor, indicate a marked escalation in AI-related harm. Within a matter of months, incident counts rose from approximately 140,000 to more than 150,000, while the risk share climbed from around 50 per cent to over 60 per cent. The expanding set of risks includes multimillion-dollar deepfake CEO impersonation scams, ransomware attacks enabled by AI tooling, discriminatory outcomes, unsafe predictions, and privacy breaches. Misuse is now easy, scalable, and cheap.

Even generative video systems show clear, high-visibility bias. In one widely noted example, a model depicted only men when asked to show CEOs and only women when asked to show flight attendants. These outcomes are not fringe glitches; they expose structural weaknesses across modern AI systems. They also mirror what we see in everyday use cases, from loan-approval bots that unfairly reject applicants to e-commerce customers telling us their AI-first experiences are too costly to scale.

For me, this surge changes the framing entirely. Deepfakes, cyber fraud, biased predictions, privacy breaches, and misuse are no longer hypothetical concerns; they are operational risks affecting consumers, enterprises, and public institutions.

AI models continue to struggle with hallucinations and inconsistent predictions. Healthcare provides some of the clearest evidence. In one case, a model incorrectly predicted diabetes in a patient, which led to the wrong medication being prescribed, with serious consequences. Another model offered flawed medical suggestions that understandably alarmed users. When systems behave this way, trust erodes faster than technical teams can explain what went wrong.

The environmental impact adds another dimension. By one estimate, a single ChatGPT-style prompt produces about 4.32 grams of CO₂. That may seem minor, but at enterprise scale it adds up fast: when millions of queries flow through models running in non-optimised data centres, emissions rise sharply.
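To see how a per-prompt figure scales, here is a back-of-the-envelope calculation. It takes the 4.32 g estimate quoted above at face value (published per-prompt estimates vary widely with model, hardware and grid mix) and assumes a hypothetical volume of one million prompts per day.

```python
# Per-prompt figure quoted in the talk; treat it as an estimate, not a measurement.
GRAMS_CO2_PER_PROMPT = 4.32


def annual_emissions_tonnes(prompts_per_day: int,
                            grams_per_prompt: float = GRAMS_CO2_PER_PROMPT) -> float:
    """Estimated yearly CO2 in tonnes for a given daily prompt volume."""
    grams_per_year = prompts_per_day * grams_per_prompt * 365
    return grams_per_year / 1_000_000  # 1 tonne = 1,000,000 g


if __name__ == "__main__":
    # Hypothetical enterprise volume: one million prompts per day.
    print(f"{annual_emissions_tonnes(1_000_000):.1f} t CO2 per year")
```

At that assumed volume the estimate comes to roughly 1,577 tonnes of CO₂ a year, which is why per-query efficiency matters once usage reaches enterprise scale.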

Operating costs rise with the effort needed to maintain models and can climb further as systems scale. Keeping accuracy consistent in the face of data drift and other risks further complicates responsible deployment.
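One common way to watch for drift is to compare how a model input is distributed in production with how it was distributed at training time. The sketch below computes the Population Stability Index (PSI), a widely used drift statistic that the talk does not name; the bin proportions are hypothetical, and the 0.1 and 0.25 thresholds are an industry rule of thumb rather than a standard.

```python
import math
from typing import Sequence


def population_stability_index(expected: Sequence[float],
                               actual: Sequence[float],
                               eps: float = 1e-6) -> float:
    """PSI between two binned distributions given as proportions summing to 1.

    PSI = sum((a - e) * ln(a / e)); eps stops empty bins from causing log(0).
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must have the same number of bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


def drift_level(psi: float) -> str:
    """Rule-of-thumb interpretation of a PSI value."""
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "moderate drift"
    return "significant drift"


# Hypothetical share of one input feature per bin: training data vs. last week's traffic.
training = [0.25, 0.25, 0.25, 0.25]
production = [0.10, 0.20, 0.30, 0.40]

psi = population_stability_index(training, production)
print(f"PSI = {psi:.3f} ({drift_level(psi)})")
```

Running a check like this on a schedule, per feature, gives teams an early signal to investigate or retrain before accuracy visibly degrades.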

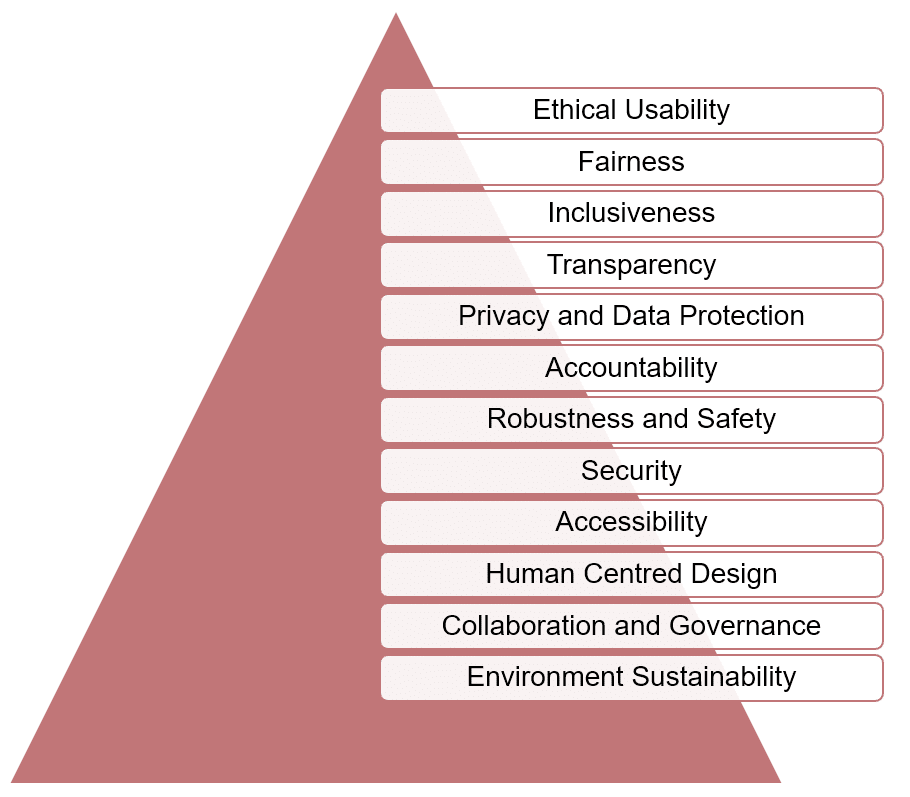

Principles of responsible AI

The principles of responsible AI ensure that AI works equitably and does not deliver poorer results to some users because of their individual characteristics. Here is a quick look at the principles I recommend.

Ethical usability

AI applications should be built only for ethical purposes.

Fairness

This is where it all starts. AI must treat everyone fairly and not favour one group over another. For example, AI used for hiring should not prefer one gender or community.
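Fairness can be checked with simple metrics. The sketch below uses hypothetical shortlisting decisions from a hiring model to compute each group's selection rate, the demographic parity difference between groups, and the ratio of the lowest to the highest rate, which the US "four-fifths rule" heuristic flags when it falls below 0.8. Libraries such as Fairlearn provide production-grade versions of these metrics; this is only an illustration.

```python
from collections import defaultdict


def selection_rates(decisions, groups):
    """Share of positive decisions (1 = shortlisted) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += decision
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(decisions, groups):
    """Gap between the highest and lowest group selection rates (0 = parity)."""
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(decisions, groups):
    """Lowest selection rate divided by the highest (1 = parity)."""
    rates = selection_rates(decisions, groups)
    return min(rates.values()) / max(rates.values())


# Hypothetical hiring-model output: 1 = shortlisted, 0 = rejected.
decisions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

print(selection_rates(decisions, groups))                # {'A': 0.75, 'B': 0.25}
print(demographic_parity_difference(decisions, groups))  # 0.5
ratio = disparate_impact_ratio(decisions, groups)
print("flag for review" if ratio < 0.8 else "within four-fifths heuristic")
```

A gap this large does not prove discrimination on its own, but it is exactly the kind of signal that should trigger a closer audit before the model is used for hiring.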

Transparency

We must understand how AI arrives at critical decisions. Explainability tools such as SHAP and LIME show why a model made a particular choice by attributing a prediction to its input features. Model cards and training summaries complement them by documenting how the model was built, trained, and evaluated, making the workflow behind its results visible.
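SHAP builds on Shapley values from cooperative game theory. The code below is not the shap library; it is a from-scratch sketch that computes exact Shapley attributions for a hypothetical three-feature scoring model, treating a feature as "absent" by substituting a single baseline value (a simplification; SHAP's explainers typically average over a background dataset).

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley attributions for one prediction.

    A feature absent from a coalition takes its baseline value. Every coalition
    is visited, so cost grows as 2**n: suitable for a handful of features only.
    """
    n = len(x)

    def value(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):  # coalitions of size 0 .. n-1 drawn from the other features
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Hypothetical linear credit-scoring model over [income, tenure, missed_payments].
def score(z):
    return 0.5 * z[0] + 2.0 * z[1] - 1.0 * z[2]


applicant = [4.0, 1.0, 3.0]
reference = [2.0, 0.0, 1.0]  # hypothetical "average applicant" baseline

phi = shapley_values(score, applicant, reference)
print([round(p, 6) for p in phi])  # [1.0, 2.0, -2.0]
```

For a linear model each attribution reduces to weight × (value − baseline), and the attributions always sum to the difference between the applicant's score and the baseline score, which is what lets a reviewer see that missed payments pulled this decision down.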

Privacy

Users should know what personal data an AI system collects and how it is used. Informed consent, and the right to delete any data they are uncomfortable sharing, are crucial.
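In code, consent and deletion can be enforced at the storage layer rather than left to policy documents. The class below is a hypothetical, in-memory sketch (its name and methods are illustrative, not from any library): data is kept only for purposes a user has consented to, users can see what is held, and revoking consent or requesting deletion erases the data.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class ConsentAwareStore:
    """Hypothetical store that keeps personal data only for consented purposes."""
    consents: dict[str, set[str]] = field(default_factory=dict)
    records: dict[str, dict[str, Any]] = field(default_factory=dict)

    def grant(self, user_id: str, purpose: str) -> None:
        """Record informed consent for one specific purpose."""
        self.consents.setdefault(user_id, set()).add(purpose)

    def save(self, user_id: str, purpose: str, data: Any) -> bool:
        """Store data only if the user consented to this purpose; otherwise discard it."""
        if purpose not in self.consents.get(user_id, set()):
            return False
        self.records.setdefault(user_id, {})[purpose] = data
        return True

    def revoke(self, user_id: str, purpose: str) -> None:
        """Withdraw consent for a purpose and erase the data collected for it."""
        self.consents.get(user_id, set()).discard(purpose)
        self.records.get(user_id, {}).pop(purpose, None)

    def delete_user(self, user_id: str) -> None:
        """Honour a full deletion request."""
        self.consents.pop(user_id, None)
        self.records.pop(user_id, None)

    def export(self, user_id: str) -> dict[str, Any]:
        """Show the user exactly what is held about them, by purpose."""
        return dict(self.records.get(user_id, {}))


store = ConsentAwareStore()
store.grant("u1", "personalisation")
store.save("u1", "personalisation", {"favourite_category": "books"})  # stored
store.save("u1", "advertising", {"age": 34})  # rejected: no consent for this purpose
print(store.export("u1"))
store.delete_user("u1")
print(store.export("u1"))  # {}
```

A real system would also need persistence, audit logs and deletion from backups and downstream copies; the point here is only that consent is checked on every write.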

Accountability

Every AI system should have a structured reporting and grievance-resolution process with a clearly accountable team or individual. Users should be able to report issues and have them fixed.

Safety

AI must operate reliably even when conditions change. Systems should be tested against unexpected situations and deliberate manipulation. For example, a chatbot should not give incorrect advice when someone intentionally tries to confuse it. Failure analysis and real-world testing help make AI dependable.
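A basic way to test this is an invariance (metamorphic) test: apply edits that should not change the answer, and flag any case where the output does change. The sketch uses a hypothetical keyword-based intent classifier as a stand-in for a real chatbot model; genuine adversarial testing would go much further, covering paraphrases, prompt injection and other deliberate attacks.

```python
from typing import Callable


def classify_intent(message: str) -> str:
    """Toy stand-in for a chatbot's intent model (hypothetical keyword rules)."""
    text = " ".join(message.lower().split())  # normalise case and whitespace
    if "refund" in text:
        return "refund_request"
    if "password" in text:
        return "account_help"
    return "general"


# Surface-level edits that should never change the detected intent.
PERTURBATIONS: list[Callable[[str], str]] = [
    str.upper,
    lambda m: "  " + m.replace(" ", "   ") + "  ",
    lambda m: m + " please!!",
]


def invariance_failures(model, messages):
    """Return (original, variant) pairs where a harmless edit changed the output."""
    failures = []
    for msg in messages:
        expected = model(msg)
        for perturb in PERTURBATIONS:
            variant = perturb(msg)
            if model(variant) != expected:
                failures.append((msg, variant))
    return failures


print(invariance_failures(classify_intent, ["I want a refund", "Reset my password"]))  # []


def naive_intent(message: str) -> str:
    """Same rule without normalisation: brittle by design."""
    return "refund_request" if "refund" in message else "general"


print(invariance_failures(naive_intent, ["I want a refund"]))
```

The naive variant fails as soon as the user types in capitals, which is the kind of fragility such tests are meant to surface before users do.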

Security

AI must be protected against cyberattacks. Strong measures should be put in place to prevent misuse and data poisoning.
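Data poisoning often shows up as training samples whose labels contradict their surroundings. One simple sanitisation heuristic, sketched below on hypothetical two-dimensional data, flags any sample whose label disagrees with the majority of its k nearest neighbours. It is not a complete defence; targeted or clean-label poisoning can evade it.

```python
import math
from collections import Counter


def knn_label_filter(points, labels, k=3):
    """Indices of samples whose label disagrees with a strict majority of their k nearest neighbours."""
    suspicious = []
    for i, p in enumerate(points):
        dists = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        neighbour_labels = [labels[j] for _, j in dists[:k]]
        majority, count = Counter(neighbour_labels).most_common(1)[0]
        if majority != labels[i] and count > k // 2:
            suspicious.append(i)
    return suspicious


# Hypothetical training set: class 0 clusters near (0, 0), class 1 near (5, 5).
points = [(0, 0), (0.2, 0.1), (0.1, 0.3), (0.3, 0.2),
          (5, 5), (5.2, 5.1), (4.9, 5.3), (5.1, 4.8),
          (0.1, 0.2)]  # last sample sits in the class-0 cluster...
labels = [0, 0, 0, 0, 1, 1, 1, 1, 1]  # ...but carries a poisoned class-1 label

print(knn_label_filter(points, labels))  # [8]
```

Flagged samples are best routed to a human for review rather than silently dropped, since genuine edge cases can look like outliers too.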

Accessibility

Neither a disability nor limited technical knowledge should prevent anyone from accessing and safely using AI. Simple interfaces and an inclusive user experience help make this possible.

Sustainability

Every AI operation should adopt sustainable practices to remain energy efficient. These can include algorithm optimisation and the establishment of eco-friendly data centres.

Collaboration

Cross-functional teams can help implement the principles outlined above more effectively.

How mandatory compliance is evolving with regulatory pressures

Regulation has finally caught up with technological scale. Industry research indicates that more than 99 per cent of global companies now implement at least one responsible AI practice.

Gartner predicts that by 2026, more than half of all organisations will have operationalised responsible AI into their systems.

At the forefront is the EU AI Act, which classifies systems into:

- Banned

- High-risk

- Limited-risk

- Minimal-risk

Violations of the provisions on banned (prohibited) practices can draw fines of up to €35 million or 7 per cent of total worldwide annual turnover, whichever is higher. This is one of the strongest reasons I describe responsible AI as a mandatory compliance layer rather than a voluntary commitment. Regulatory expectations are now explicit: responsibility is compulsory.
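Under Article 99 of the AI Act as adopted, the ceiling for prohibited-practice violations by a company is €35 million or 7 per cent of its total worldwide annual turnover for the preceding financial year, whichever is higher. The short sketch below computes only that statutory ceiling for two hypothetical companies; actual fines are set case by case by the authorities.

```python
FIXED_CAP_EUR = 35_000_000
TURNOVER_PERCENT = 7


def max_fine_prohibited_practice(worldwide_turnover_eur: int) -> float:
    """Ceiling on the fine for a prohibited-practice violation by an undertaking:
    EUR 35 million or 7% of total worldwide annual turnover, whichever is higher."""
    return float(max(FIXED_CAP_EUR, worldwide_turnover_eur * TURNOVER_PERCENT / 100))


for turnover in (200_000_000, 2_000_000_000):  # hypothetical companies
    ceiling = max_fine_prohibited_practice(turnover)
    print(f"turnover EUR {turnover:,} -> ceiling EUR {ceiling:,.0f}")
```

For smaller firms the fixed amount dominates; above €500 million in turnover, the percentage-based ceiling takes over and grows with the business.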

Industry frameworks and toolkits provide practical guidance for enterprises to help build auditable and fully compliant AI systems.

Gartner’s PRISM model is a checklist to verify that each stage of AI development, from data collection through final deployment, adheres to responsible standards. The IEEE 7000 series goes a step further by embedding ethics directly into system design, helping developers and AI architects follow responsible AI standards from the ground up. Similarly, ISO/IEC 42001 is an internationally recognised standard for AI management systems that helps organisations adopt consistent responsible AI practices irrespective of their industry or geographical location.

On the practical side, responsible AI toolkits provide hands-on support. Microsoft’s toolkit provides open source tools and APIs for integrating fairness, security, and ethical compliance checks directly into AI systems. IBM’s AI Fairness 360 toolkit equips teams with metrics and algorithms to detect bias and mitigate unfair outputs in model predictions. Infosys’ Responsible AI toolkit provides APIs that validate whether AI applications adhere to ethical and governance principles, maintain fairness, and meet regulatory guardrails.

Tools that operationalise responsibility

Putting responsible AI into practice means being careful and consistent at every stage of an AI system’s life. To reduce the chances of unfair or discriminatory outcomes, I rely on a combination of practical methods to keep the system trustworthy:

- Diverse and representative datasets

- Fairness-aware algorithms and testing

- Demographic audits

- Bias detection and mitigation tools (IBM AI Fairness 360, Microsoft Fairlearn, Google What-If Tool)

- Human-in-the-loop reviews

- Regular, structured audits

- Diverse teams that expand perspective and reduce blind spots

- Explainability tools like SHAP and LIME

- A governance framework tailored to the specific context in which the AI application will be deployed
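Of these methods, human-in-the-loop review is the easiest to wire directly into a decision pipeline. The sketch below is a hypothetical routing rule: outputs go to a human reviewer when the model is unsure or when the outcome is high-impact (such as rejecting a loan applicant), regardless of confidence. The 0.85 threshold and the label names are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    case_id: str
    label: str
    confidence: float


def route(decisions, threshold=0.85, sensitive_labels=frozenset({"reject"})):
    """Split model outputs into auto-processed and human-review queues.

    A decision goes to a human if confidence is below the threshold, or if its
    label is high-impact, no matter how confident the model is.
    """
    auto, review = [], []
    for d in decisions:
        if d.confidence < threshold or d.label in sensitive_labels:
            review.append(d)
        else:
            auto.append(d)
    return auto, review


batch = [
    Decision("c1", "approve", 0.95),
    Decision("c2", "approve", 0.60),  # model unsure
    Decision("c3", "reject", 0.99),   # high-impact outcome
]
auto, review = route(batch)
print([d.case_id for d in auto], [d.case_id for d in review])
```

Keeping the rule explicit and simple also makes it auditable: reviewers can see exactly why a case reached them.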

Sustainability measures are just as critical to adopting AI responsibly. For enterprise-level organisations, small steps lead to significant results: optimising models for efficiency, using green data centres, and deploying energy-efficient hardware all reduce overall CO₂ emissions.
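Alongside efficient models and hardware, one further technique (not named in the talk) is carbon-aware scheduling: running deferrable jobs such as batch retraining when the electricity grid is cleanest. The sketch below picks the lowest-carbon window from an hourly forecast; the forecast values and the job's energy use are hypothetical, and in practice they would come from a grid-carbon data provider.

```python
def greenest_window(intensity_by_hour, duration_hours):
    """Return (start_hour, average_intensity) of the contiguous window with the
    lowest average grid carbon intensity."""
    if not 0 < duration_hours <= len(intensity_by_hour):
        raise ValueError("duration must fit inside the forecast")
    best_start, best_avg = 0, float("inf")
    for start in range(len(intensity_by_hour) - duration_hours + 1):
        window = intensity_by_hour[start:start + duration_hours]
        avg = sum(window) / duration_hours
        if avg < best_avg:
            best_start, best_avg = start, avg
    return best_start, best_avg


# Hypothetical 8-hour forecast of grid carbon intensity, in gCO2 per kWh.
forecast = [420, 390, 310, 250, 240, 300, 380, 450]
job_kwh = 120  # hypothetical energy use of a 3-hour batch retraining job

start, avg = greenest_window(forecast, 3)
now_avg = sum(forecast[:3]) / 3
saved_kg = (now_avg - avg) * job_kwh / 1000
print(f"start at hour {start}: ~{saved_kg:.1f} kg CO2 less than starting now")
```

The saving per job is modest, but applied across every deferrable workload in an enterprise it is one of the cheaper emission reductions available.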

Enterprise boards now recognise the need for responsible AI and treat AI risk with the same seriousness as cybersecurity or financial exposure. Many companies have set up governance committees to enforce responsible AI standards.

Recent developments and expert opinion converge on one clear conclusion: responsible AI standards must be incorporated from the outset and maintained at every stage of design, development and deployment. This is the only way to ensure a system remains stable and secure.

Trust grows when users know that AI will behave as expected. A strong commitment to ethical standards also ultimately builds the reputation of the brand behind the AI.

This article is based on the session titled ‘Responsible AI in GenAI and LLM Systems’ by Selvi Nandakumar, director of product management, Infosys Equinox, at AI DevCon in Bengaluru. It has been transcribed and curated by Apurba Sen, senior journalist at the EFY Group.