New MIT-led technique uses idle processor time to dramatically speed up training of large language models without sacrificing accuracy.

Researchers at MIT have unveiled a practical way to boost the efficiency of large language model (LLM) training by exploiting otherwise wasted compute resources, potentially lowering both energy use and development cost for advanced AI systems.

At the heart of the advance is a system that doubles training speed for reasoning-focused LLMs by turning idle processor time into productive work. In traditional reinforcement-learning-based training, all processors must wait until the slowest one completes its task before moving on, which leaves some hardware idle for large fractions of the total compute cycle. The new method avoids that bottleneck. Instead of leaving those processors waiting, the system trains a lightweight auxiliary model on the side to predict the outputs of the main “reasoning” LLM. Processors that finish shorter tasks early switch to updating this smaller model. The primary LLM then verifies the smaller model’s predictions rather than producing every output on its own, which reduces the overall workload and speeds up the process.
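To make the bottleneck concrete, here is a minimal sketch of how much worker time is lost when every processor waits for the slowest one. The durations are hypothetical values chosen for illustration, not measurements from the MIT work, and `idle_fraction` is a name introduced here.

```python
# Hypothetical per-worker generation times in seconds (illustrative only).
rollout_seconds = [12.0, 15.0, 9.0, 60.0]

def idle_fraction(durations):
    """Fraction of total worker time spent waiting at a synchronous barrier."""
    step_time = max(durations)           # the step ends when the slowest worker finishes
    total = step_time * len(durations)   # worker-seconds occupied by the whole step
    busy = sum(durations)                # worker-seconds actually spent generating
    return (total - busy) / total

print(f"idle fraction: {idle_fraction(rollout_seconds):.2f}")
```

With these made-up numbers, one long task keeps the other three workers waiting for most of the step. That waiting time is what the new method redirects toward training the auxiliary model.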

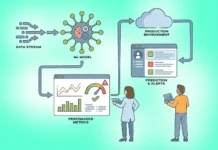

Key to the system’s success is its adaptive approach: the auxiliary model isn’t static but is continuously trained alongside the main model. This keeps predictions aligned as the LLM evolves during training, avoiding the stale estimates that plague conventional speculative decoding techniques. An adaptive rollout engine also tweaks how the system operates based on ongoing workload patterns, optimizing performance in real time.
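For readers unfamiliar with speculative decoding, the sketch below shows the generic idea in its simplest (greedy) form: a small draft model proposes several tokens, and the large model keeps the longest prefix it agrees with. This is not the TLT implementation. The toy `draft` and `target` functions and the name `speculative_step` are assumptions made for illustration, and a real system would check all proposed tokens in a single parallel pass of the large model rather than in a loop.

```python
from typing import Callable, List

NextToken = Callable[[List[int]], int]  # maps a token sequence to the next token

def speculative_step(prefix: List[int], draft: NextToken,
                     target: NextToken, k: int = 4) -> List[int]:
    """Return the tokens accepted in one draft-then-verify round (greedy variant)."""
    # 1. The small draft model proposes k tokens one after another.
    proposed: List[int] = []
    for _ in range(k):
        proposed.append(draft(prefix + proposed))

    # 2. The large target model checks each proposal in order.
    accepted: List[int] = []
    for tok in proposed:
        expected = target(prefix + accepted)
        if tok != expected:
            accepted.append(expected)    # replace the first wrong guess and stop
            return accepted
        accepted.append(tok)

    # 3. Every guess matched, so the target contributes one extra token.
    accepted.append(target(prefix + accepted))
    return accepted

# Toy models (hypothetical): the target counts upward modulo 10,
# and the draft agrees with it except right after the token 3.
def target(seq: List[int]) -> int:
    return (seq[-1] + 1) % 10

def draft(seq: List[int]) -> int:
    return 0 if seq[-1] == 3 else (seq[-1] + 1) % 10

print(speculative_step([0], draft, target, k=4))
```

The accepted tokens always match what the target model would have produced alone, so accuracy is preserved. The speedup depends on how often the draft guesses correctly, which is why a draft model that drifts out of date during training becomes less useful.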

In tests across multiple reasoning models, this “Taming the Long Tail” (TLT) strategy increased training throughput by 70% to more than 200% while maintaining accuracy. Gains of that size could mean meaningful reductions in power consumption and computing cost for next-generation AI systems used in complex tasks like financial forecasting or power-grid risk assessment.

Developers and researchers see broader potential in the technique, including integration into diverse training and inference frameworks beyond reinforcement learning. The system also produces a small auxiliary model as a by-product, which could itself be used in deployment, further boosting efficiency. The work, backed by multi-institutional collaboration and major funding partners, underscores the growing focus on energy-efficient electronics and compute in AI research.
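To put the reported throughput gains in terms of wall-clock time, the short calculation below converts a percentage throughput increase into the share of training time saved. It assumes the total amount of work is fixed, and `time_saved` is a helper name introduced here for illustration.

```python
def time_saved(throughput_gain_pct: float) -> float:
    """Share of wall-clock time saved for a fixed amount of work."""
    speedup = 1 + throughput_gain_pct / 100   # e.g. +100% throughput means 2x speed
    return 1 - 1 / speedup

for gain in (70, 100, 200):
    print(f"+{gain}% throughput: {1 + gain / 100:.1f}x speed, "
          f"{time_saved(gain):.0%} less time")
```

Under that assumption, a 70% gain trims about 41% off training time, a doubling halves it, and a 200% gain cuts it by about two thirds.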