Solr is an open source search server that was originally developed at CNET, and then donated to Apache. Solr is worth considering if you want to implement some kind of search facility for your Web application that’s backed by arbitrary sources of data. Although it has been built with enterprise applications in mind, where users would set up instances of a search server on a separate machine dedicated for this purpose, this shouldn’t stop you from using it for small projects too.

Solr is based on the full-text search library Apache Lucene, and is largely built as an enhancement on top of the index that Lucene provides. Solr is written entirely in Java, so you’ll need to have Java and a servlet container like Tomcat or GlassFish installed, on top of which Solr can be deployed as a WAR package. However, that does not mean that you need to know Java, unless you want to make modifications to Solr!

Solr provides XML/HTTP interfaces and APIs, much like Web services, and so can work with almost any programming language. The extensive external configuration options are more than enough for most customisations. Apart from this, it even provides a comprehensive browser-based administration interface.

Solr also gives you a good set of default settings to start with, and provides good documentation along with it. The best applications being used out there are highly customised, but they require a deep understanding of the type of data to be searched, and the queries that users are going to make.

Information can be collected directly from filesystems, databases, websites, directories, mail servers or anything else. On the whole, Solr is a good compromise for enterprise use as well as personal applications.

Why Solr?

Whenever we think about search, what comes to our minds is usually Google; after all, it has been the world leader in terms of search technology. Then why use Solr?

For starters, Solr allows you to have precise control over what gets indexed, how it gets indexed and how it’s retrieved from the index. In short, it is a powerful search solution that is totally under your control, and can change the way users perceive and interact with your application. Setting up a separate instance of a search server will help you streamline your website and help your users wade through data with an ease that they have never experienced before.

But then, why go through the trouble of setting up a separate search server, when even databases can provide such text-indexing capabilities? Why can’t we just SELECT * FROM Content to retrieve data? More importantly, if Solr has been implemented on top of Lucene, why can’t we build our own search application with its help?

Well, building a search application on top of Lucene would require a lot of work — and you’d really have to know what you’re doing (in which case, you wouldn’t need to read this article at all). Talking about comparisons with databases, it depends on what kind of search application you want to build. If you want something that helps with exact-match queries, like the names of all the people who were born in 1988, or a list of all authors who wrote books on a particular subject, then you’re better off with databases, without any extra effort. However, if you want to answer queries that are less predictable and need proper analysis, like a list of documents in which a particular keyword occurs, or anything similar that is more flexible, Solr is there for you.

Before creating a database in a DBMS, you need to properly design a schema — and even minor changes in the structure of the data need to be reflected back into the database. This is not so with Solr — actually, it acts more like a NoSQL document store, where documents are in turn stored in the form of a Lucene index. Does that mean we can use it as a NoSQL database?

Probably, but Solr hasn’t been built for that purpose; it’s more efficient as a search server, and we should let it remain that way.

Features and components

Carefully implemented instances of Solr can provide features that can match even those of Google — and what’s more, it allows for further customisation, if needed; after all, it’s open source!

Let’s take a brief look at some of the important and special features that Solr has to offer, without going into too much technical detail.

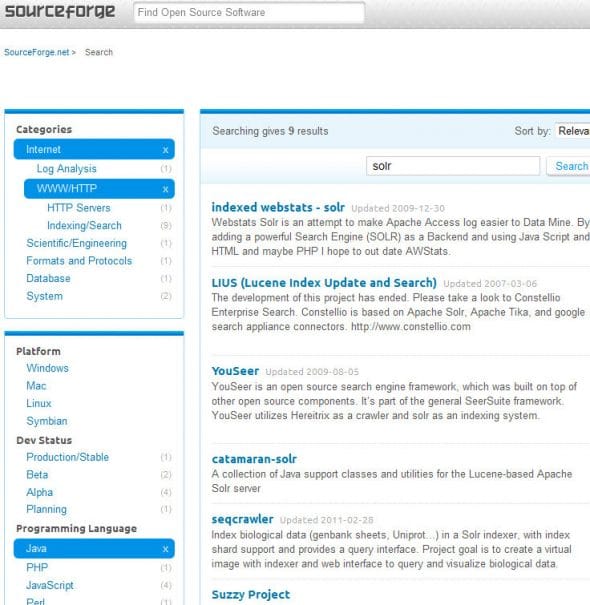

- Faceted search: This is one feature that stands out from the others. Whenever we encounter search in most applications, it comes with options to narrow down our search results with respect to certain parameters. Faceting provides such functionality, by providing facets as the navigational elements in the search. This technique is quite popular in places like online e-commerce websites, where we might need to filter search results from a particular category or a particular company.

Figure 1: A live example of Facets in the search application of the SourceForge website

- Spatial search: Although spatial search or geo-aware search is not a “feature” as such, it is quite relevant these days, and geo-location information is often included with the data, so it is important to mention it here. Spatial search is a plugin that is expected to be included with native support in Solr version 4.0, and will provide results filtered according to the location information. For example, a search for a coffee shop should produce results suitable to your current location.

- Clustering: Clustering is also used for the aggregation of data. Solr provides for search result clustering with the Carrot2 clustering engine. Clustering is different from faceting, in the sense that it is done dynamically. We don’t need to mark documents or specify fields to be categorised for clustering to take place; it is done automatically by recognising the words that occur most commonly in the text, and by recognising the structure of the text. Clustering is done when the search query is executed, while faceting works on fields whose values were fixed when the documents were indexed. One of the clustering algorithms that I am aware of is the k-means clustering algorithm.

Other features (which search engines must obviously have) include hit highlighting, auto-suggest, spell-checker and the “more like this” functionality, which do not need introduction.
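To make faceting a little more concrete, here is a minimal Python sketch of how a faceted request could be assembled. It relies on Solr's `facet` and `facet.field` request parameters; the helper name `facet_query_url`, the query term and the field names `cat` and `manu` are assumptions chosen for illustration, so substitute fields that actually exist in your own schema.

```python
from urllib.parse import urlencode

def facet_query_url(base_url, query, facet_fields, rows=10):
    """Build a select URL that asks Solr for facet counts on the given fields."""
    params = [
        ("q", query),
        ("rows", rows),
        ("facet", "true"),  # switch faceting on for this request
    ]
    # One facet.field parameter per field we want counts for.
    params += [("facet.field", name) for name in facet_fields]
    return base_url + "?" + urlencode(params)

# Hypothetical example: field names are assumed, not taken from your schema.
url = facet_query_url("http://localhost:8080/solr/select", "ipod", ["cat", "manu"])
print(url)
```

The response to such a request carries the usual results plus a count of matching documents for each value of the listed fields, which is what an application would render as the navigational links.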

Installation and setup

First of all, let’s get down to installing and setting up a single core instance of Solr on top of Tomcat. We will try to install the example schema that comes along with the archive.

Before proceeding, it is worth mentioning that both the Lucene and Solr projects were merged in March 2010, due to which the version numbering sometimes becomes quite ambiguous. To avoid such confusion, let’s look at the current and next version numbers of Lucene/Solr:

| Version | Status |
| --- | --- |
| 1.4.1 | The current version of Apache Solr |
| 1.5/2.0 | These were the next versions to be released before the Lucene/Solr merge, and have been skipped in order to keep up with Lucene. |
| 3.1 | This is the next Lucene/Solr point release. |
| 4.0 | This will be the next major release after the Lucene/Solr merge. |

So, having dealt with that, you can begin by downloading and unzipping the apache-solr-1.4.1.tgz file from the website. As mentioned before, you will need to have at least Java 5 and a servlet container installed.

In the apache-solr-1.4.1/dist directory, you will find the apache-solr-1.4.1.war file, which can be uploaded to any servlet container (in our case, I assume it is Tomcat). Rename it to solr.war and deploy the file.

Next, you need to set up the home folder, which contains all the configuration files. To do this, you can either go to apache-solr-1.4.1/example, copy the solr directory, and paste it into the bin folder of Tomcat, or you can set the solr.solr.home Java system property to point to the location of your home folder.

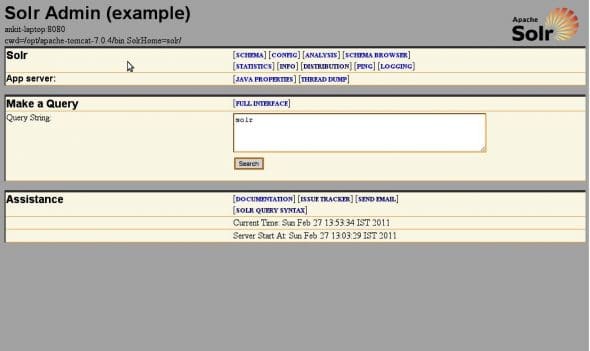

Now, visit http://localhost:8080/solr/admin. You should see the Solr Admin console (see Figure 2), and you’re done.

If you’re still not able to get this working, you can try an example setup using the lightweight Jetty server, which can be run using the following command in the example folder:

java -jar start.jar

This will set up Jetty to listen on port 8983 instead of 8080, so you will have to replace the port number in the corresponding examples. You may explore the Admin console now, which consists of several tabs and a query box. At this point, queries will not return results, since we haven’t yet indexed anything; we will do so later.

Architecture

Solr consumes data in the form of documents. A document is like a basic unit of information that is fed into Solr. It consists of fields, which are, in turn, a more specific piece of information.
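As a rough illustration of the document/field idea, the following Python sketch serialises plain dictionaries into the XML `<add>` message that Solr's update handler accepts. The helper name `build_add_message` and the field names and values are made up for this example; real field names have to match your schema.

```python
import xml.etree.ElementTree as ET

def build_add_message(documents):
    """Serialise a list of dicts as a Solr XML <add> message."""
    add = ET.Element("add")
    for document in documents:
        doc = ET.SubElement(add, "doc")
        for name, value in document.items():
            # A list value becomes one <field> element per entry,
            # which is how a multi-valued field is expressed.
            values = value if isinstance(value, list) else [value]
            for item in values:
                field = ET.SubElement(doc, "field", name=name)
                field.text = str(item)
    return ET.tostring(add, encoding="unicode")

# Hypothetical document: field names and values are assumptions.
message = build_add_message([
    {"id": "BOOK-1", "name": "A Sample Book", "cat": ["book", "fiction"]},
])
print(message)
```

Each dictionary plays the role of one document, and each key/value pair becomes one field.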

The process shown so far is a simplified version of the installation procedure, and probably not how you would like it in a production system. For such purposes, you should refer to the actual documentation on the Solr wiki.

Let’s now look at some of the important files, and the directory structure:

| Directory | Function |
| --- | --- |
| solr/bin | This is an optional directory for keeping replication scripts, in case we want to implement some amount of replication on our servers. |
| solr/data | This is the default location where the index of your search application will be stored. Its location can be changed in the solrconfig.xml file. |
| solr/conf | This is the most important directory, where all the configuration files are stored. |

The two most important files in the conf directory are as follows; each of these is extensively commented, with every parameter explained in detail:

- solrconfig.xml: This file handles the entire primary configuration. It is used to define request handlers, response writers (explained below), auto-warming parameters and plugins.
- schema.xml: This file, as is obvious, dictates the “schema” or the structure of your documents. It also specifies how each field in the document should be indexed, and what fields each document can contain. The file is divided into sections to define types, fields and other definitions pertaining to documents. It also specifies Analyzers in order to determine the manner in which each field is indexed and retrieved. Schema design is one of the most important steps while designing an application on top of Solr.

RequestHandler

A RequestHandler dictates what is to be done when a particular request is made. It basically processes all the interactions with the search server, including querying, indexing, etc. Examples of some of the many RequestHandlers available (besides the StandardRequestHandler) are DisMaxRequestHandler, DataImportHandler, MoreLikeThisHandler, SpellCheckerRequestHandler, etc.

Each of these is built to perform a specific function; Solr even allows you to write custom handlers for your own purpose.

ResponseWriter

ResponseWriter generates and formats the response to a query, or any request made to the server. Multiple ResponseWriters are available to produce the data to be consumed in the form of JSON, PHP, Python, Ruby, CSV, XML and even XSLT. A custom ResponseWriter can also be written in a manner similar to a RequestHandler, by implementing an interface specified for each, in Java.
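A client usually picks a ResponseWriter through the `wt` request parameter. The Python sketch below requests the JSON writer and reads the document list out of a response body. The sample body is hand-written for illustration rather than captured from a live server, and the helper names `select_url` and `document_names` are assumptions of this example.

```python
import json
from urllib.parse import urlencode

def select_url(base_url, query, writer="json"):
    """Build a select URL that asks for a specific response writer via wt."""
    return base_url + "?" + urlencode({"q": query, "wt": writer})

# Hand-written sample in the shape of a JSON response (illustrative only).
SAMPLE_JSON = """
{
  "responseHeader": {"status": 0, "QTime": 1},
  "response": {
    "numFound": 1,
    "start": 0,
    "docs": [{"id": "SOLR1000", "name": "Solr, the Enterprise Search Server"}]
  }
}
"""

def document_names(body):
    """Return the 'name' field of every document in a JSON response body."""
    payload = json.loads(body)
    return [doc.get("name") for doc in payload["response"]["docs"]]

print(select_url("http://localhost:8080/solr/select", "solr"))
print(document_names(SAMPLE_JSON))
```

Switching `writer` to another value such as `xml` or `python` changes only the format of the response, not the query itself.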

Search components

Search components each provide a specific piece of functionality that can be reused with any type of handler, so that common logic is shared between them. They are the most basic extension mechanism in Solr. Each component is configured in the solrconfig.xml file as an addition to the RequestHandlers.

Indexing techniques

Indexing is feeding data into Solr’s index. Proceeding with the example, let’s first send the data to our installed application for indexing, with the help of the included simple post application. Go to the apache-solr-1.4./example/exampledocs folder, from the location where you initially unzipped the Solr archive. In this directory, give the following command:

java -Durl=http://localhost:8080/solr/update -jar post.jar *.xml

After the process is completed, you will be able to search for your data from the admin console. The data that gets posted to the Solr index consists of all the XML files contained in the exampledocs folder. You may look at the structure of each of the documents that get posted.

There are multiple ways for sending data to be indexed:

- XML-post: Solr allows for a predefined set of XML schemas, which specify the instruction or the action to be performed. This may range from the simple addition of new documents, to deleting, updating and committing data. These XML commands can simply be POSTed to the server (at http://localhost:8080/solr/update in this case), and it will perform the specified operation. This is precisely what the post.jar application does. For example, to delete a document, you can run the following command in a terminal:

curl http://localhost:8080/solr/update -F stream.body='<delete><id>VA902B</id></delete>'

- CSV: Solr can import data in CSV format, where values are usually separated by a delimiter such as a comma or a tab. It can also be set up to accept streams of data in the form of CSV, if your data source generates it. CSV data can be sent to Solr by sending data to http://localhost:8080/solr/update/csv. A sample books.csv file is included in exampledocs, which can be uploaded using curl in a manner similar to that shown above.
- Solr Cell: What more could you possibly want from a search server, than its ability to index PDF, Word, Excel, JPEG and even MP3 files? Solr Cell, a.k.a. ExtractingRequestHandler, is what allows you to do so! It has been built as a wrapper around the Apache Tika project, which can strip out data from a wide range of supported documents, and what’s more, it can even auto-detect the MIME type of the data.
- DataImportHandler: This has been built to handle direct database imports, either with the help of JDBC, or XML imports from a URL or a file. The XML import functionality can be used to index data over HTTP/REST APIs, or even RSS feeds. In addition to full import, it supports delta-imports, that is, a partial update of the index, reflecting only the recent changes in the database since the last build, instead of a complete rebuild of the index. The DataImportHandler can be defined in the solrconfig.xml file; it can further point to a file for detailed configuration of the connection and data source information. The example-DIH folder already contains some examples, along with detailed documentation, for you to try out.
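If your data does not already exist as CSV, producing it is straightforward. The Python sketch below turns a few records into a CSV payload whose first line names the fields, which is the layout the CSV handler reads by default. The helper name `rows_to_csv` and the field names and values are invented for illustration and would need to match your schema; actually sending the payload to the update/csv URL is left out.

```python
import csv
import io

def rows_to_csv(fieldnames, rows):
    """Render dict records as CSV text with a header line of field names."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()

# Hypothetical records: field names and values are assumptions.
payload = rows_to_csv(
    ["id", "name", "price"],
    [
        {"id": "BOOK-1", "name": "A Sample Book", "price": 7.99},
        {"id": "BOOK-2", "name": "Title, with a comma", "price": 5.5},
    ],
)
print(payload)
```

Using the `csv` module rather than joining strings by hand means that values containing the delimiter are quoted correctly.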

Querying

So finally we have all our data set, and we are ready to make a query or two in order to know how it works. Let’s make a basic query now: type solr into the search box at the admin console, and see what you get. The URL generated looks something like what’s shown below:

http://localhost:8080/solr/select/?q=solr&version=2.2&start=0&rows=10&indent=on

We can guess that http://localhost:8080/solr/select is the endpoint at which we will be making our queries, while the q=solr parameter represents our search query. The start=0 and rows=10 parameters are used for paginating, since Solr does not display all results at once. Besides this, there are a lot of parameters that have been left out, which include features like boosting, sub-expressions, filter queries, sorting, and of course, faceting!
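The relationship between `start`, `rows` and the page a user is looking at can be sketched in a few lines of Python. The helper name `page_url` and the 1-based page numbering are assumptions of this example; the parameter names are the ones that appear in the URL above.

```python
from urllib.parse import urlencode

def page_url(base_url, query, page, page_size=10):
    """Return the select URL for a 1-based page number."""
    if page < 1:
        raise ValueError("page numbers start at 1")
    params = {
        "q": query,
        "start": (page - 1) * page_size,  # offset of the first result on this page
        "rows": page_size,                # number of results per page
        "indent": "on",
    }
    return base_url + "?" + urlencode(params)

base = "http://localhost:8080/solr/select"
print(page_url(base, "solr", 1))
print(page_url(base, "solr", 3))
```

With ten results per page, page 3 starts at offset 20, so the second URL carries `start=20`.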

Additionally, the DisMax Query parser allows for even more sophisticated queries that should be more than enough to satisfy even advanced users.

Looking at the search results, we’ll be able to see XML that has been enclosed within the <response> tags. The initial metadata, like the status, query time, etc., are enclosed within the <lst name="responseHeader"> element, and the subsequent results are enclosed within the <result name="response" numFound="1" start="0"> element, which further contains the <doc> element that represents each document returned as a result. The numFound attribute gives the total number of documents that matched the query, which can be larger than the number of documents returned on the current page.
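A client application would typically pull the match count and the documents out of this XML. The Python sketch below does so for a hand-written sample that follows the structure just described; the sample values and the helper name `parse_response` are assumptions, and only simple single-valued fields are handled.

```python
import xml.etree.ElementTree as ET

# Hand-written sample in the shape of an XML response (illustrative only).
SAMPLE_XML = """
<response>
  <lst name="responseHeader">
    <int name="status">0</int>
    <int name="QTime">2</int>
  </lst>
  <result name="response" numFound="1" start="0">
    <doc>
      <str name="id">SOLR1000</str>
      <str name="name">Solr, the Enterprise Search Server</str>
    </doc>
  </result>
</response>
"""

def parse_response(body):
    """Return (numFound, list of documents as dicts) from an XML response."""
    root = ET.fromstring(body.strip())
    result = root.find("./result[@name='response']")
    docs = []
    for doc in result.findall("doc"):
        # Each child element carries the field name in its 'name' attribute.
        docs.append({field.get("name"): field.text for field in doc})
    return int(result.get("numFound")), docs

total, docs = parse_response(SAMPLE_XML)
print(total, docs)
```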

In conclusion, it will be useful to note that add-on modules for using Solr with CMSes like Drupal and WordPress are already available. These modules include all the configuration information to set up Solr for your website, and provide a much better searching mechanism than the one included with the core.
