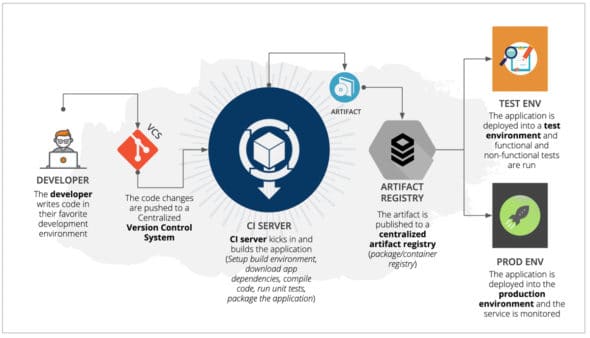

In this article, we will discuss how to integrate security into our day-to-day development work with as little overhead as possible. DevSecOps enables teams to deploy applications securely to production environments multiple times a day. Let’s see how this is done.

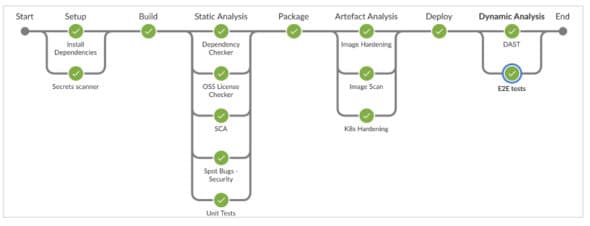

On a real CI tool (Jenkins Blue Ocean), the process or the development jobs look like what’s shown in Figure 1.

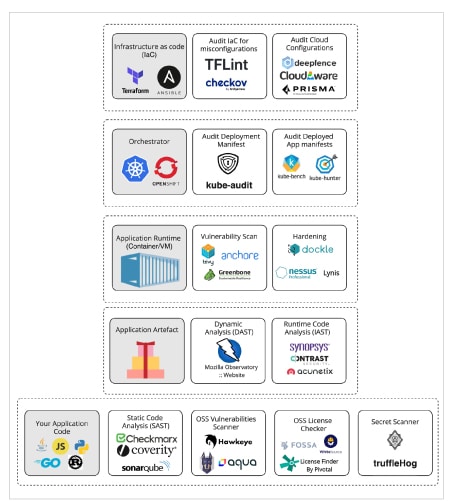

Developers have been automating as much as possible to support the practice of continuous deployment. At first glance, this probably looks like the perfect setup for delivering software. What do you think? Please refer to Figure 2.

How about what’s shown in Figure 3?

| Disclaimer: You might notice that I am referring to a lot of tools throughout this article. I have chosen all these tools based on my experience. So, I strongly recommend that you analyse before choosing any tool because like you and me, every application is different and special. |

Build pipelines are the sweet spot for integrating security tools, because every security practice is then verified regardless of which tools each developer has in their own development environment.

Now let’s think about the list of practices we want to automate.

An application is typically composed of:

- Application code

- Application dependencies

- Application runtime

- Infrastructure code

How to secure application code

The first obvious challenge is to find security weaknesses in the code. Security code reviews before each version release have been followed for a very long time, but they come very late in the cycle and need time from specialists. Static code analysis tools can help overcome the problem.

Static application security testing (SAST) can scan the code for bad security practices and identify vulnerabilities. But how does one choose a good SAST tool? The choice will depend on:

- Support needed for the programming languages used in the organisation

- Number of rules or tests

- Whether it covers all the OWASP Top 10 vulnerabilities

- Whether it covers all the common weakness enumerations (CWEs)

- Whether it suggests remediations

- Whether it can suppress false positives (not the entire rule, only the specific instance)

- Whether it allows custom detection rules to be added
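To make the last two criteria concrete, here is a toy static check, written as a minimal sketch rather than a real SAST tool. It walks the syntax tree of Python source without executing it, flags direct calls listed in a small custom rule set, and skips any single instance whose line carries a suppression marker. The rule set `RISKY_CALLS` and the marker `# nosec` are assumptions made for this illustration.

```python
import ast

# Hypothetical custom rule set for this sketch: call names treated as risky.
RISKY_CALLS = {"eval", "exec"}
# Hypothetical inline marker that suppresses one specific finding, not the rule.
SUPPRESS_MARKER = "# nosec"

SAMPLE = '''user_input = input()
result = eval(user_input)
safe = eval("1 + 1")  # nosec
'''


def scan_source(source):
    """Return sorted (line_number, call_name) pairs for unsuppressed risky calls."""
    lines = source.splitlines()
    findings = []
    for node in ast.walk(ast.parse(source)):
        # Only direct calls such as eval(...) are matched; aliases are not tracked.
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in RISKY_CALLS:
                if SUPPRESS_MARKER not in lines[node.lineno - 1]:
                    findings.append((node.lineno, node.func.id))
    return sorted(findings)


if __name__ == "__main__":
    for line_number, call_name in scan_source(SAMPLE):
        print(f"line {line_number}: call to {call_name}()")
```

Real tools track data flow, aliases and many more rules, but the shape is the same: parse, match rules, honour per-instance suppressions, report.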

Some popular tools for static code analysis are given in Figure 4.

| Tip: Use tools focused on your programming language. For example, in my experience gosec, which focuses on Go, finds more vulnerabilities in Go code than some paid general-purpose tools. |

One more thing! How about secrets like API keys, auth tokens, encryption keys, etc? We all know that secrets should not be committed to the repositories. But accidents can happen. To solve this problem, we can follow two approaches.

Preventive: Have a pre-commit or pre-push hook that checks whether any secrets are present in the changes, and blocks the commit or push if it finds one.

Reactive: Run a secrets scanner in the pipeline to detect any secrets that have been checked into the repository, before they get leaked.

Is the preventive approach enough on its own? Unfortunately, no. There can be occasions where we fail to run the pre-checks. For example, a developer who is not part of your team may commit code to your repo. Or you may have switched to a new laptop on which you have not yet installed all the tools. Or you may deliberately skip the pre-checks to push the changes through faster. So we need to use both preventive and reactive approaches.

The next obvious question is: What do you do in case a secret is detected in the scan and leaked in public? Well, you should rotate the secrets and follow a proper security incident response plan.
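The core of both approaches is the same check, run at different points. The sketch below shows that check under simplifying assumptions: it scans text line by line against three illustrative patterns. The pattern set and the function name `find_secrets` are made up for this example; real scanners ship far larger rule sets and also use entropy checks.

```python
import re

# Illustrative patterns only; this is not a complete rule set.
SECRET_PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key header": re.compile(
        r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"
    ),
    "hard-coded credential": re.compile(
        r"(?:api[_-]?key|secret|token|password)\s*[:=]\s*['\"][^'\"]{8,}['\"]",
        re.IGNORECASE,
    ),
}

# AKIAIOSFODNN7EXAMPLE is the placeholder key used in AWS documentation.
SAMPLE = (
    'name = "demo"\n'
    "aws_key = AKIAIOSFODNN7EXAMPLE\n"
    'password = "correct-horse-battery"\n'
)


def find_secrets(text):
    """Return (line_number, label) for every line that matches a pattern."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for label, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, label))
    return findings


if __name__ == "__main__":
    for line_number, label in find_secrets(SAMPLE):
        print(f"line {line_number}: possible {label}")
```

A pre-commit hook could run this over the staged changes and exit with a non-zero status on any finding, while the pipeline stage could run the same function over the whole checkout.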

How about application dependencies?

A software application depends on a lot of libraries or packages. For example, if an application needs to make HTTP calls, we might use an HTTP library instead of writing our own library. But those libraries can have security bugs. The OSS community fixes these vulnerabilities and releases a new version of those libraries.

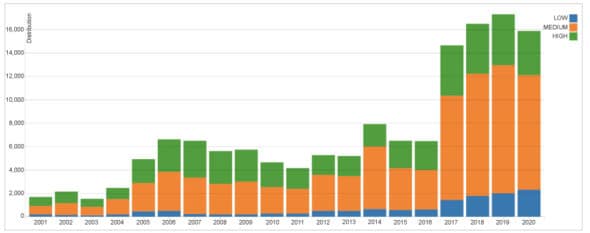

The graph in Figure 6 shows how many new common vulnerabilities and exposures (CVEs) are added to the National Vulnerability Database (NVD) alone every year.

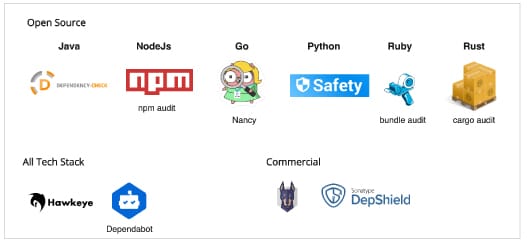

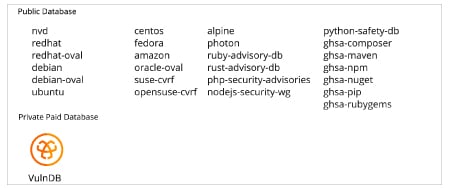

Dependency checkers help to identify these security fixes in OSS libraries and notify developers to update the library or package. A dependency checker will identify the list of your application dependencies, and check it against the vulnerability database to see if any known vulnerability exists for the particular version being used (please refer to Figure 7).
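The lookup described above can be sketched in a few lines. This is an illustration only: the advisory data, package names and advisory IDs below are invented, and the version parser assumes purely numeric dotted versions, which real ecosystems do not guarantee.

```python
# Hypothetical advisory data: package -> list of (first_fixed_version, advisory_id).
ADVISORIES = {
    "example-http-lib": [("2.4.0", "EXAMPLE-2020-0001")],
    "example-json-lib": [("1.9.2", "EXAMPLE-2019-0042")],
}

# Hypothetical dependency list, as a checker might read it from a lock file.
DEPENDENCIES = {
    "example-http-lib": "2.3.9",
    "example-json-lib": "1.10.0",
    "example-other-lib": "0.1.0",
}


def parse_version(version):
    """Turn '2.14.1' into (2, 14, 1); assumes numeric dotted versions only."""
    return tuple(int(part) for part in version.split("."))


def find_vulnerable(dependencies, advisories=ADVISORIES):
    """Return (name, installed, advisory_id, fixed_in) for affected packages."""
    findings = []
    for name, installed in sorted(dependencies.items()):
        for fixed_in, advisory_id in advisories.get(name, []):
            # A package is affected if it is older than the first fixed version.
            if parse_version(installed) < parse_version(fixed_in):
                findings.append((name, installed, advisory_id, fixed_in))
    return findings


if __name__ == "__main__":
    for name, installed, advisory_id, fixed_in in find_vulnerable(DEPENDENCIES):
        print(f"{name} {installed}: {advisory_id}, upgrade to {fixed_in} or later")
```

Note that versions are compared as tuples of numbers, not as strings, so 1.10.0 is correctly treated as newer than 1.9.2.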

Open source licence compliance

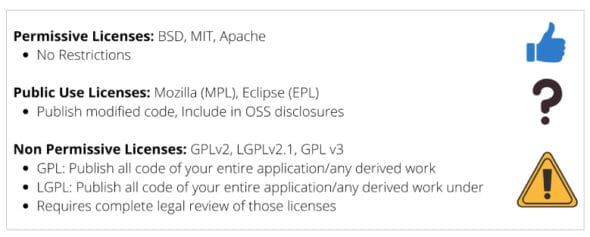

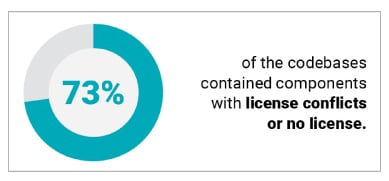

Open source libraries that we depend on for building our applications may come with different sets of licence requirements (Figure 8).

(https://www.synopsys.com/blogs/software-security/5-open-source-trends-2020-ossra/)

Licence restrictions may vary depending on how the library is used, for example, whether it is dynamically linked or whether its source code is modified (see Figure 9).

The solution is an automated open source compliance (OSC) checker, which scans all the dependencies and identifies the licence associated with each one. You can make a list of approved licences based on your organisation’s standards. The licence checker then compares the two and flags any dependency that violates the organisation’s policy (Figure 10).

| Tip: There are very few tools in this space. License Finder from Pivotal works very well and is open source as well. |
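The comparison step itself is simple, as the following sketch suggests. The approved list and the dependency data are assumptions for illustration; a real checker first has to discover each dependency's licence, which is the hard part that dedicated tools handle.

```python
# Hypothetical organisation policy: licences approved for use.
APPROVED_LICENCES = {"MIT", "Apache-2.0", "BSD-3-Clause"}

# Hypothetical scan result: dependency -> detected licence (None if not detected).
DETECTED = {
    "lib-a": "MIT",
    "lib-b": "GPL-3.0-only",
    "lib-c": None,
}


def find_licence_violations(dependency_licences, approved=APPROVED_LICENCES):
    """Return dependencies whose licence is unknown or not on the approved list."""
    violations = {}
    for name, licence in dependency_licences.items():
        # An undetected licence is treated as a violation so that it gets reviewed.
        if licence is None or licence not in approved:
            violations[name] = licence or "UNKNOWN"
    return violations


if __name__ == "__main__":
    for name, licence in find_licence_violations(DETECTED).items():
        print(f"{name}: licence {licence} is not approved")
```

Treating an unknown licence as a failure is a design choice made here; some teams may prefer a warning instead.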

How about application run times (containers)?

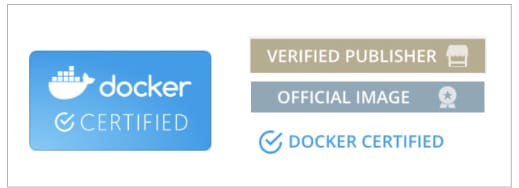

Docker containers are widely used to ship an application together with its runtime and operating system dependencies. Each container image consists of a set of open source packages such as glibc, a shell and core utilities (the kernel itself is shared with the host). These system packages may contain security issues as well, so scanning container images is essential. Choosing the right base image is also crucial. The criteria for choosing a container image are:

- It’s officially published by the Docker community or the library maintainer (check whether your publisher has any of the image tags given in Figure 11).

- It has a small footprint (like alpine).

- It is updated regularly.

- A new image is published in case of new vulnerabilities.

We can use a distroless image from Google as an option.

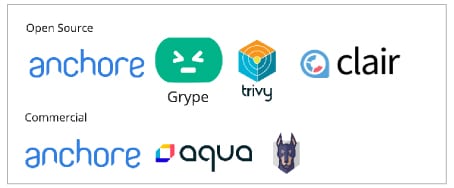

The next step involves scanning the container images for known vulnerabilities. There are a lot of open source tools available to scan container images. My preference is Anchore open source. Again, choose a tool that draws on as many vulnerability databases as possible. Trivy, for example, pulls from a wide range of public databases.

Common mistakes

You can avoid common mistakes while building container images, as indicated below.

- Use multi-stage builds to reduce the image footprint. For example, to run a Go application, we can use the golang image to build the artifact and then copy it to an alpine image.

      FROM golang:1.7.3
      WORKDIR /go/src/github.com/alexellis/href-counter/
      RUN go get -d -v golang.org/x/net/html
      COPY app.go .
      RUN CGO_ENABLED=0 GOOS=linux go build -a -installsuffix cgo -o app .
      FROM alpine:latest
      RUN apk --no-cache add ca-certificates
      WORKDIR /root/
      COPY --from=0 /go/src/github.com/alexellis/href-counter/app .
      CMD ["./app"]

- Install additional packages without storing the package manager’s cache information:

      RUN apk --no-cache add ca-certificates

- Run the application as non-root user.

- Use automated tools to check the instructions in the Dockerfile. Dockle, for example, also supports auditing against CIS benchmarks.
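To give a feel for what such a Dockerfile check does, here is a minimal sketch that covers two of the points above: `apk add` without `--no-cache`, and a final stage that never switches to a non-root user. It is not how Dockle works internally. It assumes the base image defaults to root, and it does not handle line continuations or shell-wrapped commands.

```python
GOOD = (
    "FROM alpine:3.12\n"
    "RUN apk --no-cache add ca-certificates\n"
    "USER 1000:1000\n"
    'CMD ["./app"]\n'
)

BAD = (
    "FROM alpine:3.12\n"
    "RUN apk add curl\n"
    'CMD ["./app"]\n'
)


def audit_dockerfile(text):
    """Return a list of findings for a Dockerfile given as a string."""
    findings = []
    final_user = None
    for line_number, raw_line in enumerate(text.splitlines(), start=1):
        parts = raw_line.strip().split()
        if not parts or parts[0].startswith("#"):
            continue  # skip blank lines and comments
        instruction = parts[0].upper()
        if instruction == "FROM":
            # Each build stage starts again with the base image's user
            # (assumed to be root in this sketch).
            final_user = None
        elif instruction == "USER" and len(parts) > 1:
            final_user = parts[1]
        elif instruction == "RUN" and "apk" in parts and "add" in parts:
            if "--no-cache" not in parts:
                findings.append(f"line {line_number}: apk add without --no-cache")
    # USER may be given as user:group; only the user part matters here.
    if final_user is None or final_user.split(":")[0] in ("root", "0"):
        findings.append("final stage runs as root")
    return findings


if __name__ == "__main__":
    for finding in audit_dockerfile(BAD):
        print(finding)
```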

Hardening infrastructure scripts

Security misconfiguration is at the top of the NSA list of cloud vulnerabilities. Modern applications are more than just the code developers create. Since the infrastructure is also written as code, we can run automated analysis on the Infrastructure as Code (IaC) templates to spot potential security issues, misconfigurations and compliance violations. This way, you can mitigate risks before provisioning cloud native infrastructure.
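As a rough illustration of this kind of analysis, the sketch below scans a template for firewall rules that expose administrative ports to the whole internet. The JSON schema (`resources`, `firewall_rule`, `ingress`, `port`, `cidr`) is invented for this example and does not correspond to any particular IaC tool's format.

```python
import json

# Ports treated as sensitive in this sketch (SSH and RDP).
SENSITIVE_PORTS = {22, 3389}

# Hypothetical template in a made-up schema, for illustration only.
SAMPLE_TEMPLATE = json.dumps({
    "resources": {
        "web_fw": {
            "type": "firewall_rule",
            "ingress": [
                {"port": 443, "cidr": "0.0.0.0/0"},
                {"port": 22, "cidr": "0.0.0.0/0"},
            ],
        },
        "db_fw": {
            "type": "firewall_rule",
            "ingress": [{"port": 22, "cidr": "10.0.0.0/8"}],
        },
    }
})


def find_open_ingress(template_json):
    """Return findings for sensitive ports that are open to any address."""
    template = json.loads(template_json)
    findings = []
    for name, resource in template.get("resources", {}).items():
        if resource.get("type") != "firewall_rule":
            continue
        for rule in resource.get("ingress", []):
            if rule.get("cidr") == "0.0.0.0/0" and rule.get("port") in SENSITIVE_PORTS:
                findings.append(f"{name}: port {rule['port']} is open to 0.0.0.0/0")
    return findings


if __name__ == "__main__":
    for finding in find_open_ingress(SAMPLE_TEMPLATE):
        print(finding)
```

Because the check runs on the template rather than on live infrastructure, it can fail the pipeline before anything is provisioned.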

Dynamic application security testing

Dynamic application security testing (DAST) performs actual attacks against the running application in a sandbox environment. DAST helps us to identify common vulnerabilities in the application. For example, if an application uses cookies, these tools can check whether the Secure, HttpOnly and Domain attributes are configured properly. We can use OWASP ZAP and Burp Suite to perform these kinds of checks.

The OWASP ZAP API scan supports scanning backend APIs as well. It uses the API specification (for example, an OpenAPI definition in JSON) to work out which endpoints to test.
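The cookie check mentioned above is easy to picture in code. The sketch below parses the value of a `Set-Cookie` response header with Python's standard `http.cookies` module and reports which of the Secure and HttpOnly flags are missing. The function name `audit_set_cookie` is made up for this example, and a real DAST tool obtains the header by sending requests to the running application, which this sketch leaves out. The Domain attribute is not checked here, because whether it should be set depends on the application.

```python
from http.cookies import SimpleCookie

# Flags checked in this sketch: (label shown in findings, Morsel key).
REQUIRED_FLAGS = (("Secure", "secure"), ("HttpOnly", "httponly"))


def audit_set_cookie(header_value):
    """Return {cookie_name: [missing flag labels]} for one Set-Cookie value."""
    cookie = SimpleCookie()
    cookie.load(header_value)
    findings = {}
    for name, morsel in cookie.items():
        # A flag that is absent is stored as an empty string, which is falsy.
        missing = [label for label, key in REQUIRED_FLAGS if not morsel[key]]
        if missing:
            findings[name] = missing
    return findings


if __name__ == "__main__":
    print(audit_set_cookie("sessionid=abc123; Path=/; HttpOnly"))
```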

Sample pipeline implementation

Based on the above practices, we have implemented a sample pipeline for a Java tech stack. The pipeline is written to work in Jenkins. For boilerplate code for other CI pipelines, do follow me on GitHub.

Refer to my GitHub page (https://github.com/rmkanda/tools) for the complete set of tools available in each category.