If you are a student or professional interested in the latest trends in the computing world, you have probably heard terms like artificial intelligence, data science, machine learning, deep learning, etc. The first article in this series on artificial intelligence explains these terms, and sets the platform for a simple tutorial that will help beginners get started with AI.

Today it is absolutely necessary for any student or professional in the field of computer science to learn at least the basics of AI, data science, machine learning and deep learning. However, where does one begin to do so?

To answer this question, I have gone through a number of textbooks and tutorials that teach AI. Some start at a theoretical level (a lot of maths), some teach you AI in a language-agnostic way (they don’t care whether you know C, C++, Java, Python, or some other programming language), and yet others assume you are an expert in linear algebra, probability, statistics, etc. In my opinion, all of them are useful to a great extent. But the important question remains — where should an absolute beginner interested in AI begin his or her journey?

Frankly, there are many fine ways to begin your AI journey. However, I have a few concerns regarding many of them. Too much maths is a distraction, while too little leaves you like a driver who doesn’t know where his/her car’s engine is placed. Starting with advanced concepts is really effective if the potential AI engineer or data scientist is good at linear algebra, probability, statistics, etc. Starting with the very basics and ending in the middle of nowhere is fine only if the potential AI learner wants to end the journey at that particular point. Considering all these facts, I believe an AI tutorial for beginners should start at the very basics and end with a real AI project (small, yet one that will outperform any conventional program capable of performing the same task).

This series on AI will start from the very basics and will reach up to an intermediate level. But in addition to discussing topics in AI, I also want to ‘cut the clutter’ (the name of a popular Indian news show) about the topics involved since there is a lot of confusion among people where terms like AI, machine learning, data science, etc, are concerned. AI based applications are often necessary due to the huge volume of data being produced every single day. A cursory search on the Internet will tell you that about 2.5 quintillion bytes of data are produced a day (a quintillion is the enormous number 10^18). Do remember that most of this data is absolutely irrelevant to us, including tons of YouTube videos with no merit, emails sent without a second thought, reports on trivial matters in newspapers, and so on and so forth. However, this vast ocean of data also contains invaluable knowledge. Conventional software programs cannot carry out the Herculean task of processing such data. AI is one of the few technologies that can handle this information overload.

We also need to distinguish between fact and fiction as far as the power of AI is concerned. I remember a talk by an expert in the field of AI a few years back. He talked about an AI based image recognition system that was able to classify the images of Siberian Huskies (a breed of dogs) and Siberian snow wolves with absolute or near absolute accuracy. Search the Internet and you will see how similar the two animals look. Had the system been so accurate, it would have been considered a triumph of AI. Sadly, this was not the case. The image recognition system was only classifying the background images of the two animals. Images of Siberian Huskies (since they are domestic animals) almost always had some rectangular or round objects in the background, whereas the images of Siberian snow wolves (being wild animals located in Siberia) had snow in the background. Such examples have led to the need for AI with some guarantee for accuracy in recent years.

Indeed, AI has shown some of its true power in recent years. A simple example is the suggestions we get from a lot of websites like YouTube, Amazon, etc. Many times, I have been astonished by the suggestions I have received, as it almost felt as if AI was able to read my mind. Whether such suggestions are good or bad for us in the long run is a hot topic of debate. Then there is the critical question, “Is AI good or bad?” I believe that a ‘Terminator’ movie sort of future, where machines attack humans deliberately, is far away. However, the word ‘deliberately’ in the previous sentence is very important. AI based systems at present could malfunction and accidentally hurt humans. Moreover, many systems that claim the powers of AI are conventional software programs with a large number of ‘if’ and ‘for’ statements and no magic of AI in them. Thus, it is safe to say that we are yet to see the real power of AI in our daily lives. But whether that impact will be good (like curing cancer) or bad (deepfake videos of world leaders leading to riots and war) is yet to be seen. On a personal level, I believe AI is a boon and will drastically improve the quality of life of the coming generations.

What is AI?

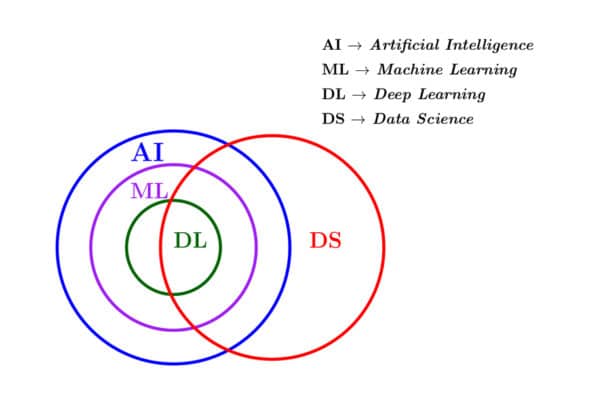

So, before we proceed any further, let us try to understand how AI, machine learning, deep learning, data science, etc, are related yet distinct from each other. Very often these terms are used (erroneously) as synonyms. First, let us consider a Venn diagram that represents the relationship between AI, machine learning, deep learning and data science (Figure 1). Please keep in mind that this is not the only such Venn diagram. Indeed, it is very plausible that you may find other Venn diagrams showing different relationships between the four different entities shown in Figure 1. However, in my opinion, Figure 1 is the most authentic diagram that captures the interrelationship between the different fields in question to the maximum extent.

First of all, let me make a disclaimer. Many of the definitions of the terms involved in this first article in this series on AI may not be mathematically the most accurate. I believe that formally defining every term with utmost precision at this level of our discussion is counterproductive and a waste of time. However, in the subsequent articles in this series we will revisit these terms and formally define them. At this point in our discussion, consider AI as a set of programs that can mimic human intelligence to some extent. But what do I mean by human intelligence?

Imagine your AI program is a one-year-old baby. As usual, this baby will learn his/her mother tongue simply by listening to the people speaking around him/her. He/she will soon learn to identify shapes, colours, objects, etc, without any difficulty at all. Further, he/she will be able to respond to the emotions of people around him/her. For example, any three-year-old child will know how to sweet talk his/her parents into giving him/her all the chocolates and lollipops he/she wants. Similarly, an AI program too will be able to sense and adapt to its surroundings, just like the baby. However, such true AI applications will be achieved only in the far future (if at all).

Figure 1 shows that machine learning is a strict subset of AI and as such one of the many techniques used to implement artificially intelligent systems. Machine learning involves techniques in which large data sets are used to train programs so that the necessary task can be carried out effectively. Further, the accuracy of performing a particular task increases with larger and larger training data sets. Notice that there are other techniques used to develop artificially intelligent systems like Boolean logic based systems, fuzzy logic based systems, genetic programming based systems, etc. However, nowadays machine learning is the most vibrant technology used to implement AI based systems. Figure 1 also shows that deep learning is a strict subset of machine learning, making it just one of the many machine learning techniques. However, here again I need to inform you that, currently, in practice, most of the serious machine learning techniques involve deep learning. At this point, I refrain even from trying to define deep learning. Just keep in mind that deep learning involves the use of large artificial neural networks.

Now, what is data science (the red circle) doing in Figure 1? Well, data science is a discipline of computer science/mathematics which deals with the processing and interpretation of large sets of data. By large, how large do I mean? Some of the corporate giants like Facebook claimed that their servers could handle a few petabytes of data as far back as 2010. So, when we say huge data, we mean terabytes and petabytes and not gigabytes of data. A lot of data science applications involve the use of AI, machine learning and deep learning techniques. Hence, it is a bit difficult to ignore data science when we discuss AI. However, data science also involves a lot of conventional programming and database management techniques like the use of Apache Hadoop for Big Data analysis.

Henceforth, I will be using the abbreviations AI, ML, DL and DS as shown in Figure 1. The discussions in this series will mainly focus on AI and ML with frequent additional references to data science.

The beginning of our journey and a few difficult choices to make

Now that we know the topics that will be covered in this series of articles, let us discuss the prerequisites for joining this tutorial. I plan to cover the content in such a way that any person who can operate a Linux machine (a person who can operate an MS Windows or a macOS machine is also fine, but some of the installation steps might require additional help) along with basic knowledge of mathematics and computer programming will definitely appreciate the power of AI, once he or she has meticulously gone through this series.

It is possible to learn AI in a language-agnostic way without worrying much about programming languages. However, our discussion will involve a lot of programming and will be executed based on a single programming language. So, before we fix our (programming) language of communication, let us review the top programming languages used for AI, ML, DL and DS applications. One of the earliest languages used for developing AI based applications was Lisp, a functional programming language. Prolog, a logic programming language, was used in the 1970s for the same purpose. We will discuss more about Lisp and Prolog in the coming articles when we focus on the history of AI.

Nowadays, programming languages like Java, C, C++, Scala, Haskell, MATLAB, R, Julia, etc, are also used for developing AI based applications. However, the huge popularity and widespread use of Python in developing AI based applications almost made the choice unanimous. Hence, from this point onwards we will proceed with our discussion on AI based on Python. However, let me caution you. From here onwards, we make a number of choices (or rather I am making the choices for you). The choices mostly depend on ease of use, popularity, and (on a few occasions) on my own comfort and familiarity with a software/technique as well as the best of my intentions to make this tutorial highly effective. However, I encourage you to explore any other potential programming language, software or tool we may not have chosen. Sometimes such an alternative choice may be the best for you in the long run.

Now we need to make another immediate choice — whether to use Python 2 or Python 3? Considering the youth and the long career ahead of many of the potential readers of this series, I will stick with Python 3. First, let us install Python 3 in our systems. Execute the command ‘sudo apt install python3’ in the Linux terminal to install the latest version of Python 3 in your Ubuntu system (Python 3 is probably already installed in your system). The installation of Python 3 in other Linux distributions is also very easy. It can be easily installed in MS Windows and macOS operating systems too. The following command will show you the version of Python 3 installed in your system:

$ python3 --version
Python 3.8.10
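The same check can also be made from inside Python itself. The following is a minimal sketch using only the standard library; the function name `describe_python` is an illustrative choice and not part of any tool mentioned above.

```python
import sys


def describe_python():
    """Return the running interpreter's version as a 'major.minor.micro' string."""
    info = sys.version_info
    return f"{info.major}.{info.minor}.{info.micro}"


version = describe_python()
print("Running Python", version)

# This series assumes Python 3, so warn if an older interpreter is in use.
if sys.version_info.major < 3:
    print("Please switch to Python 3 before continuing.")
```

The version printed on your machine will most likely differ from the one shown above, and that is fine as long as it starts with 3.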

We need to install a lot of Python packages as we proceed through the series. Hence, we need to install a package management system. Some of the choices include pip, Conda, Mamba, etc. I chose pip as our package management system for this tutorial because it is relatively simple as well as the recommended installation tool for Python. Personally, I am of the opinion that both Conda and Mamba are more powerful than pip and you are welcome to try them out. However, I will stick with pip. The command ‘sudo apt install python3-pip’ will install pip in an Ubuntu system. Notice that pip, Conda and Mamba are cross-platform software and can be installed in Linux, Windows and macOS systems. The command ‘pip3 --version’ shows the version of pip installed in your system, as shown below:

pip 20.0.2 from /usr/lib/python3/dist-packages/pip (python 3.8)

Now we need to install an integrated development environment (IDE) for Python. IDEs help programmers write, compile, debug and execute code very easily. There are many contenders for this position also. PyCharm, IDLE, Spyder, etc, are popular Python IDEs. However, since our primary aim is to develop AI and data science based applications, we consider two other heavy contenders — JupyterLab and Google Colab. Strictly speaking, they are not just IDEs; rather, they are very powerful Web based interactive development environments. Both work on Web browsers and offer immense power. JupyterLab is free and open source software supported by Project Jupyter, a non-profit organisation. Google Colab follows the freemium model, where the basic model is free and for any additional features a payment is required. I am of the opinion that Google Colab is more powerful and has more features than JupyterLab. However, the freemium model of Google Colab and my relative inexperience with it made me choose JupyterLab over Google Colab for this tutorial. But I strongly encourage you to get familiar with Google Colab at some point in your AI journey.

JupyterLab can be installed locally using the command ‘pip3 install jupyterlab’. The command ‘jupyter-lab’ will execute JupyterLab in the default Web browser of your system. An older and similar Web based system called Jupyter Notebook is also provided by Project Jupyter. Jupyter Notebook can be locally installed with the command ‘pip3 install notebook’ and can be executed using the command ‘jupyter notebook’. However, Jupyter Notebook is less powerful than JupyterLab, and it is now official that JupyterLab will eventually replace Jupyter Notebook. Hence, in this tutorial we will be using JupyterLab when the time comes. However, in the beginning stages of this tutorial we will be using the Linux terminal to run Python programs, and hence the immediate need for pip, the package management system.

Anaconda is a very popular distribution of Python and R programming languages for machine learning and data science applications. As potential AI engineers and data scientists, it is a good idea to get familiar with Anaconda also.

Now, we need to fix the most important aspect of this tutorial — the style in which we will cover the topics. There are a large number of Python libraries to support the development of AI based applications. Some of them are NumPy, SciPy, Pandas, Matplotlib, Seaborn, TensorFlow, Keras, Scikit-learn, PyTorch, etc. Many of the textbooks and tutorials on AI, machine learning and data science are based on complete coverage of one or more of these packages. Though such coverage of the features of a particular package is effective, I have planned a more maths-oriented tutorial. We will first discuss a maths concept required for developing AI applications, and then follow the discussion by introducing the necessary Python basics and the details of the Python libraries required. Thus, unlike most other tutorials, we will revisit Python libraries again and again to explore the features necessary for the implementation of certain maths concepts. However, at times, I will also request you to learn some basic concepts of Python and mathematics on your own. That settles the final question about the nature of this tutorial.

After all this buildup, it would be a sin if we stop this article at this point without discussing even a single line of Python code or a mathematical object necessary for AI. Hence, we move on to learn one of the most important topics in mathematics required for conquering AI and machine learning.

Vectors and matrices

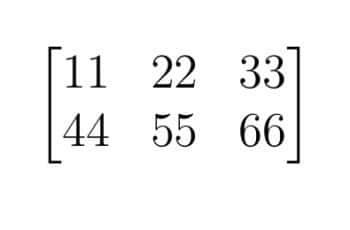

A matrix is a rectangular array of numbers, symbols or mathematical expressions arranged in rows and columns. Figure 2 shows a 2 x 3 (pronounced ‘2 by 3’) matrix having 2 rows and 3 columns. If you are familiar with programming, this matrix can be represented as a two-dimensional array in many popular programming languages. A matrix with only one row is called a row vector and a matrix with only one column is called a column vector. The vector [11, 22, 33] is an example of a row vector.
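For readers who think more easily in code, here is a minimal sketch of these definitions using plain Python lists. The entries of `matrix` are made-up values chosen for illustration (they are not necessarily the ones in Figure 2), and the helper `shape` is a hypothetical name introduced only for this example.

```python
def shape(matrix):
    """Return (rows, columns) of a rectangular list of lists."""
    return len(matrix), len(matrix[0])


# A 2 x 3 matrix: 2 rows, 3 columns (illustrative values).
matrix = [
    [1, 2, 3],
    [4, 5, 6],
]

# A row vector is a matrix with a single row.
row_vector = [[11, 22, 33]]

# A column vector is a matrix with a single column.
column_vector = [[11], [22], [33]]

print(shape(matrix))         # (2, 3)
print(shape(row_vector))     # (1, 3)
print(shape(column_vector))  # (3, 1)
```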

But why are matrices and vectors so important in a discussion on AI and machine learning? Well, they are the core of linear algebra, a branch of mathematics. Linear algebraic techniques are heavily used in AI and machine learning. Mathematicians have studied the properties and applications of matrices and vectors for centuries. Mathematicians like Gauss, Euler, Leibniz, Cayley, Cramer, and Hamilton have theorems named after them in the fields of linear algebra and matrix theory. Thus, over the years, a lot of techniques have been developed in linear algebra to analyse the properties of matrices and vectors.

Complex data can often easily be represented in the form of a vector or a matrix. Let us see a simple example. Consider a person working in the field of medical transcription. From the medical records of a person named P, details of the age, height in centimetres, weight in kilograms, systolic blood pressure, diastolic blood pressure and fasting blood sugar level in milligrams per decilitre can be obtained. Further, such information can easily be represented as a row vector. As an example, P = [60, 160, 90, 130, 95, 160]. But here comes the first challenge in AI and machine learning. What if there are a billion health records? The task will be incomplete even if tens of thousands of professionals work to manually extract data from these billion health records. Hence, AI and machine learning applications try to extract data from these records using programs.
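The health record example can be sketched in a few lines of plain Python. The field names in `FIELDS` and the second record `Q` are assumptions made purely for illustration; only the vector P comes from the example above.

```python
# Order of the measurements in each record, as listed in the text.
FIELDS = [
    "age",
    "height_cm",
    "weight_kg",
    "systolic_bp",
    "diastolic_bp",
    "fasting_sugar_mg_dl",
]

# The row vector for person P from the example above.
P = [60, 160, 90, 130, 95, 160]

# A second, entirely hypothetical record, added only to show the matrix form.
Q = [45, 172, 70, 120, 80, 95]

# Pair each number with its meaning.
labelled = dict(zip(FIELDS, P))
print(labelled["systolic_bp"])  # 130

# Stacking row vectors gives a matrix: one row per person, one column per field.
records = [P, Q]
print(len(records), "x", len(records[0]))  # 2 x 6
```

With a billion people instead of two, `records` would simply have a billion rows, which is where the scale problem described above comes from.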

The second challenge in AI and machine learning is data interpretation. This is a vast field and there are a number of techniques worth exploring. We will go through the most relevant of them in the coming articles in this series. AI and machine learning based applications also face hardware challenges, in addition to mathematical/computational challenges. With the huge amount of data being processed, data storage, processor speed, power consumption, etc, also become a major challenge for AI based applications. Challenges apart, I believe it is time for us to write the first line of AI code.

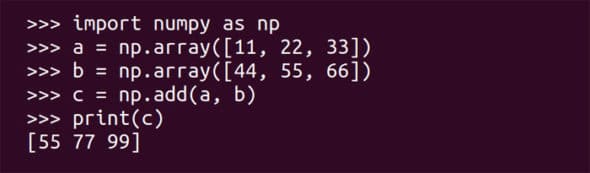

We will write a simple Python script to add two vectors. For that, we use a Python library called NumPy. This is a Python library that supports multi-dimensional matrices (arrays) and a lot of mathematical functions to operate on them. The command ‘pip3 install numpy’ will install the package NumPy for Python 3. Notice that NumPy comes preinstalled with Google Colab and Anaconda, whereas a JupyterLab set-up installed with pip still needs the command given here. However, we will operate from the Linux terminal for the first few articles in this series on AI for ease of use. Execute the command ‘python3’ on the Linux terminal to work from the Python console. This console is software that allows line-by-line execution of Python code. Figure 3 shows the line-by-line execution of the Python code to add two vectors and show the output on the terminal.

First, let us try to understand the code. An important note before we proceed any further. Since this tutorial assumes very little programming experience, I will label lines of code as either (basic) or (AI). Lines labelled (basic) are part of classical Python code, whereas lines labelled (AI) are part of the Python code for developing AI applications. I know such a classification is not necessary or very meaningful. However, I want to distinguish between basic Python and advanced Python so that programmers with both basic and intermediate skills in programming will find this tutorial useful.

The line of code import numpy as np (basic) imports the library NumPy and names it as np. The import statement in Python is similar to the #include statement of C/C++ to use header files and the import statement of Java to use packages. The lines of code a = np.array([11, 22, 33]) and b = np.array([44, 55, 66]) (AI) create two one-dimensional arrays named ‘a’ and ‘b’. Let me do one more simplification for the sake of understanding. For the time being, assume a vector is equivalent to a one-dimensional array. The line of code c = np.add(a, b) (AI) adds the two vectors named ‘a’ and ‘b’ and stores the result in a vector named ‘c’. Of course, naming variables as ‘a’, ‘b’, ‘c’, etc, is a bad programming practice, but mathematicians tend to differ and name vectors as ‘u’, ‘v’, ‘w’, etc. If you are an absolute beginner in Python programming, please learn how variables in Python work.

Finally, the line of code print(c) (basic) prints the value of the object, the vector [55 77 99], on the terminal. This is a line of basic Python code. Each entry of c is the sum of the corresponding entries of a and b (55 = 11 + 44, 77 = 22 + 55, 99 = 33 + 66), which gives you a hint about vector and matrix addition. However, if you want to formally learn how vectors and matrices are added, and if you don’t have any good mathematical textbooks on the topic at your disposal, I suggest you go through the Wikipedia article on matrix addition. A quick search on the Internet will show you that a classic C, C++ or Java program to add two vectors takes a lot more code. This itself shows how suitable Python is for handling vectors and matrices, the lifeline of linear algebra. The strength of Python will be further appreciated as we perform more and more complex vector operations.
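Since Figure 3 is an image, the same statements are collected here as a script you can copy, followed by a plain-Python loop for comparison. This is a sketch assembled from the lines discussed above; it assumes NumPy is installed, and the names `x`, `y` and `z` in the second half are illustrative additions.

```python
import numpy as np  # (basic)

a = np.array([11, 22, 33])  # (AI)
b = np.array([44, 55, 66])  # (AI)
c = np.add(a, b)            # (AI) element-wise addition
print(c)                    # (basic) prints [55 77 99]

# The same addition without NumPy, using plain lists and a loop.
x = [11, 22, 33]
y = [44, 55, 66]
z = []
for i in range(len(x)):
    z.append(x[i] + y[i])
print(z)                    # prints [55, 77, 99]
```

For NumPy arrays, the expression a + b gives the same result as np.add(a, b).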

Before we conclude this article, I want to make two disclaimers. First, the programming example just discussed above deals with the addition of two row vectors (1 x 3 matrices to be precise). But a real machine learning application might be dealing with a 1000000 x 1000000 matrix. However, with practice and patience we will be able to handle these. The second disclaimer is that many of the definitions given in this article involve gross simplifications and some hand-waving. But, as mentioned earlier, I will give formal definitions for any term I have defined loosely before the conclusion of this series.
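To get a feel for the size of such a matrix, here is a rough back-of-the-envelope calculation. It assumes every entry is stored as an 8-byte number (such as NumPy's `float64`) and that the matrix is stored in full; real applications often use smaller number types or store only the non-zero entries, so treat the figure as an illustration only.

```python
rows = 1_000_000
columns = 1_000_000
bytes_per_entry = 8  # assumption: one 64-bit number per entry

total_entries = rows * columns
total_bytes = total_entries * bytes_per_entry

# 1 terabyte is taken here as 10**12 bytes.
total_terabytes = total_bytes / 10**12

print(total_entries)    # 1000000000000
print(total_terabytes)  # 8.0
```

Under these assumptions, a single such matrix would occupy about 8 terabytes, far more than the memory of an ordinary computer.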

Now it is time for us to wind up this article. I request all of you to install the necessary software mentioned in this article and run each and every line of code discussed here. We will begin the next article in this series by discussing the history, scope and future of AI, and then proceed deeper into matrix theory, the backbone of linear algebra.

![Artificial Intelligence](https://www.opensourceforu.com/wp-content/uploads/2022/05/Artificial-Intelligence-Explaining-the-Basics-featured-image.jpg)