Businesses are always looking for ways to deliver software faster at minimal cost. Adoption of cloud technologies not only makes this easier but also reduces operational cost. Containerisation has emerged as a popular strategy for cloud adoption. This is where Kubernetes CronJob, a powerful tool for scheduling and running periodic jobs in a containerised environment, comes in. Let’s see how it works.

Containerisation is a technology that has been gaining popularity in recent years due to its ability to make applications portable, scalable, and easily deployable. Containers provide a lightweight and flexible way to package and distribute software applications; they help to build once and deploy anywhere by encapsulating an application and its dependencies in a container that can run on any host operating system with the same results. Containers are isolated from each other and the host operating system, providing a secure environment for running applications.

Cloud containerisation has further enhanced the advantages of containerisation by enabling users to host their containers on cloud platforms like AWS, Google Cloud Platform, and Microsoft Azure. Container orchestrators such as Kubernetes, and cloud-managed implementations like EKS, AKS, GKE, and AWS ROSA, automate the deployment and auto-scaling of containers and their dependencies.

Batch jobs

Batch jobs are computer programs processed in groups, which are used for long-running tasks that can be executed at a later time. They can be made to run on specific schedules such as daily, weekly, or fortnightly, using scheduled tasks in Windows or Cron jobs in Linux and UNIX systems.

While Linux Cron jobs are suitable for basic job scheduling needs, they may not be adequate for more complex or mission-critical workloads. To fill this gap, many enterprise job scheduling tools have emerged and gained traction, offering advanced features such as complex jobs and workflows.

Control-M is a workload automation platform that is being adopted widely as it helps organisations manage their workloads across hybrid environments from a single interface.

Control-M features

Control-M offers many features that make it an ideal choice for managing complex workloads in the cloud.

Unified control: Control-M provides a unified interface for managing workloads across different platforms, including cloud environments like AWS.

Advanced scheduling: It offers advanced job scheduling capabilities that allow users to define complex workflows and manage dependencies among jobs with ease.

Monitoring and alerting: Control-M provides real-time monitoring and alerting capabilities that enable users to quickly identify and resolve issues with their workloads.

Self-service: It enables self-service automation for developers, allowing them to easily deploy and manage their applications in the cloud.

Control modules (CM): These integrate with external systems like SAP, Oracle E-Business Suite, etc.

Table 1: Comparison of key features

| Feature | Control-M | Kubernetes CronJob | EKS CronJob |
| --- | --- | --- | --- |
| Orchestration | Yes | Yes | Yes |
| Scalability | Yes | Yes | Yes |
| High availability | Yes | Yes | Yes |
| Monitoring | Yes | Yes | Yes |
| Job dependencies | Yes | Via init containers or external tooling | Via init containers or external tooling |
| Job scheduling | Yes | Yes | Yes |
| Job logs | Yes | Yes | Yes |
| Integration with external tools | Yes | Yes | Yes |
| Job trigger | Time, file, message | Time, interval, cron expression | Time, interval, cron expression |
| Job types | Command, script, file transfer, database, email, FTP, Hadoop, SAP, more | Command, script, HTTP, TCP, messaging, more | Command, script, HTTP, TCP, messaging, more |
| API support | Yes | Yes | Yes |
| Cloud support | Yes | Yes | Yes |

Kubernetes CronJob

Kubernetes provides a powerful and flexible Cron job functionality that enables users to schedule and run batch jobs on a regular basis. These are Kubernetes native objects, defining jobs and workflows with a high degree of granularity to run at a specified time or interval. They have quite a few advantages over Linux Cron jobs.

Cron jobs in Kubernetes are defined using YAML files that specify the job’s schedule, command, and other parameters. Once a Cron job is created, Kubernetes automatically creates and manages the job’s pods, ensuring that the job is executed according to the defined schedule.
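As a minimal sketch of such a definition, the manifest below schedules one container to run every night at 1:00 a.m. The CronJob name, image, and command are hypothetical placeholders, not values from any real deployment.

```yaml
# Minimal CronJob sketch; name, image, and command are hypothetical placeholders.
apiVersion: batch/v1
kind: CronJob
metadata:
  name: nightly-report
spec:
  # Five fields: minute, hour, day of month, month, day of week.
  schedule: "0 1 * * *"
  jobTemplate:
    spec:
      template:
        spec:
          containers:
          - name: report
            image: my-registry/report-job:1.0
            command: ["/bin/sh", "-c", "echo generating report"]
          # Pods created by a Job must use OnFailure or Never.
          restartPolicy: OnFailure
```

Assuming the file is saved as cronjob.yaml, it can be applied with kubectl apply -f cronjob.yaml, and the jobs it creates can be listed with kubectl get jobs.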

Jobs are scheduled with cron expressions that cover time, date, and frequency. Calendar exceptions such as holidays are not part of the schedule syntax and need additional logic, as described later in this article. Simple dependencies and pre-processing steps can be handled with init containers in the job template. Kubernetes jobs can also be triggered manually or, with additional tooling, by events.

Job execution can be monitored through pod logs and metrics. With a monitoring stack such as Prometheus, these feed customisable dashboards, reports, and alerts for job failures or performance thresholds. Resource requests, limits, and priority classes allow system resources to be allocated and critical jobs to be prioritised. Because a job is an ordinary container, it can integrate with any external system that its image can reach. Security features such as authentication, authorisation, and encryption help protect the integrity and confidentiality of batch processing workflows.

Users can define access control policies, audit trails, and encryption keys to protect sensitive data. For more details on Kubernetes CronJob capabilities, refer to the official Kubernetes documentation.

The benefits of moving batch workflows to Kubernetes CronJobs are:

a. Lower TCO.

b. Improved scalability, automation, and flexibility.

c. Portability: Kubernetes CronJob allows users to containerise jobs so that they run on their existing cluster infrastructure.

d. Kubernetes CronJobs provide a highly scalable and resilient infrastructure that can run batch jobs at any scale, from small to very large. They can scale up or down based on workload demands, ensuring that the required resources are always available.

e. Fault tolerance: Kubernetes CronJob provides built-in mechanisms for handling job failures, such as restarting failed containers and retrying failed jobs, which improves the chance that a job completes despite transient failures.

f. Simplified management: Kubernetes CronJob allows users to manage their jobs from a single interface, simplifying the management of their workloads.

g. Increased automation: Kubernetes CronJobs enable users to automate the scheduling and execution of batch jobs, reducing the need for manual intervention.

h. Greater flexibility: Kubernetes CronJobs provide greater flexibility in defining batch jobs, allowing users to specify complex workflows and dependencies.

i. Enhanced visibility: Combined with a monitoring stack, Kubernetes CronJobs provide real-time monitoring and alerting capabilities, giving users greater visibility into the status and performance of their batch jobs.

To run a containerised application as a Kubernetes CronJob, follow these steps:

- Package the application and its dependencies into a container image.

- Create a Kubernetes CronJob YAML file that defines the schedule for running the job and the container image to use.

- Deploy the CronJob YAML file to the Kubernetes cluster.

| Control-M | How to handle in Kubernetes CronJob |

| Job failure alert | Create a prometheusRule that defines an alert for job failures. The rule should use the Kube_job_status_failed metric to trigger an alert if a job has failed. |

| On demand execution and restarts | Use the kubectl create job command followed by the name of the CronJob. The Kubernetes API also provides a create endpoint for the batch/v1beta1/cronjobs/{name}/trigger resource to trigger a CronJob using an API call. To automatically restart when it fails or completes, set the spec.concurrencyPolicy field to ‘retry’, but do it after due diligence. |

| Job scheduling (daily, once a week, month-end, do not run on holidays, file/job trigger) | Kubernetes CronJob provides the ability to schedule jobs on a recurring basis using a cron expression. The cron expression consists of six fields that specify the minute, hour, day of the month, month, day of the week, and year to run the job. To prevent Kubernetes CronJobs from running on holidays, use external holiday calendars or APIs to generate a list of holidays and exclude them from the schedule. |

| Job logs monitoring for troubleshooting (one log file per job execution) |

By default, Kubernetes CronJobs do not create separate log files for each job execution. Instead, the logs for each execution are combined and stored in the pod’s container log file. To troubleshoot a specific job execution, you can filter the logs by the pod name or the job name and time stamp. |

| Job hold (i.e., job should not run between 1 p.m. and 4 p.m.) | We can hold a Kubernetes CronJob by updating its spec.suspend field to true. When this field is set to true, the CronJob controller will not create new job runs and stop any active job runs. |

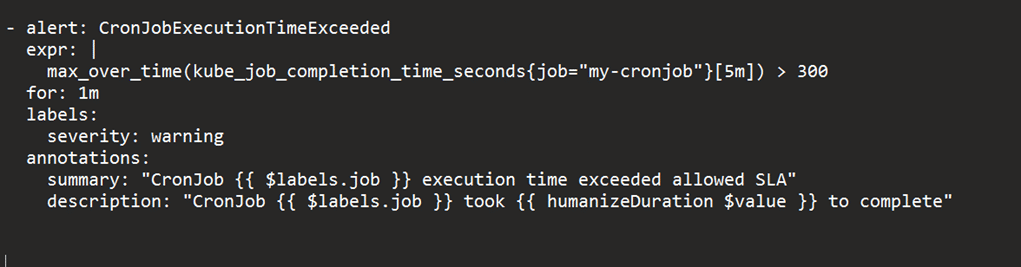

| Job notification if a job runs for more than a certain allowed SLA time limit | When the CronJob runs longer than the allowed SLA time, Prometheus will trigger the alert and send it to the configured notification system. We need to configure a Prometheus rule for this. |

Control-M and Kubernetes CronJob: A comparison, and addressing the feature gaps

- Control-M is a commercial product with heavy infrastructure requirements. On the other hand, Kubernetes CronJob leverages existing infrastructure and achieves the same results, reducing your capex and opex.

- While Kubernetes CronJob offers many benefits over traditional batch scheduling tools like Control-M, there are still some gaps that need to be addressed. Table 1 compares the key features of both.

- Table 2 shows how frequently used Control-M features that are not available out-of-the-box in Kubernetes CronJob can be made available in the latter.

- Let us now see how to address the gaps as quite a few features of Control-M are not available out-of-the-box in Kubernetes CronJobs.

Sending an alert on exceeding execution time

This Control-M feature is not available out-of-the-box in Kubernetes CronJob at the time of writing. However, alerts can be raised when execution time exceeds a threshold by using Prometheus for monitoring and alerting, together with a job metric and a custom query. Here are the steps.

Set up Prometheus: Install Prometheus on the Kubernetes cluster using the Prometheus Operator or by creating a Prometheus deployment manually. Once Prometheus is running, alert rules can be loaded into it and their state viewed in its web interface.

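Configuring CronJob metrics: Expose metrics (in the Prometheus format) from the CronJob by adding annotations to the CronJob’s pod template so that Prometheus can scrape them. These annotations are a common convention and take effect only if the Prometheus scrape configuration is set up to honour them.

    annotations:
      prometheus.io/scrape: "true"
      prometheus.io/path: "/metrics"
      prometheus.io/port: "8080"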

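Set up alert rules: To create an alert rule for a CronJob, you need to write a PromQL query that measures the execution time of your CronJob and sets a threshold for when an alert should be triggered. Here’s an example query, assuming the job exposes a summary or histogram metric named my_cronjob_duration_seconds:

    max by (job) (rate(my_cronjob_duration_seconds_sum[5m]) / rate(my_cronjob_duration_seconds_count[5m])) > 60

This query divides the total run time recorded over the past 5 minutes by the number of runs in that period, which gives the average duration per run, and triggers an alert if the highest average for any job exceeds 60 seconds.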

Configure alert receivers: Alerts raised by Prometheus are routed by Alertmanager, which supports various receivers, including email, Slack, PagerDuty, and more. You can configure alert receivers in the Alertmanager configuration file (alertmanager.yaml).

Once you have set up Prometheus and configured your CronJob’s metrics and alert rules, you should start receiving alerts when the execution time of your CronJob exceeds the threshold you set.
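Where Prometheus is managed by the Prometheus Operator, the alert rule can be written as a PrometheusRule object. The sketch below is one possible form under stated assumptions: my_cronjob_duration_seconds is a summary or histogram exposed by the job itself, and the rule name, alert name, and release label are hypothetical and must match your own setup. It alerts on the average run time, computed as the sum divided by the count.

```yaml
# Sketch only: assumes the Prometheus Operator CRDs are installed and that the
# job exposes a summary/histogram called my_cronjob_duration_seconds.
apiVersion: monitoring.coreos.com/v1
kind: PrometheusRule
metadata:
  name: cronjob-duration-alert
  labels:
    release: prometheus   # assumption: must match the Operator's rule selector
spec:
  groups:
  - name: cronjob.rules
    rules:
    - alert: CronJobRunTooLong
      # Average seconds per run over the last 5 minutes, highest per job label.
      expr: |
        max by (job) (
          rate(my_cronjob_duration_seconds_sum[5m])
            / rate(my_cronjob_duration_seconds_count[5m])
        ) > 60
      for: 1m
      labels:
        severity: warning
      annotations:
        summary: "CronJob {{ $labels.job }} is averaging more than 60 seconds per run"
```

The for: 1m clause is a design choice that avoids alerting on a single noisy evaluation; it can be removed if every breach should alert immediately.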

Control-M workspaces

This feature of Control-M allows users to manage the execution and dependencies of jobs across different environments. Kubernetes CronJobs do not provide a direct equivalent, but similar capabilities are available: you can use Kubernetes namespaces and define separate CronJobs for each namespace.

Namespaces provide a way to partition resources in a Kubernetes cluster and create virtual clusters that can be used to separate different environments, such as development, staging, and production. You can create a separate namespace for each environment and deploy your CronJobs to the appropriate namespace. This will allow you to manage the execution of jobs in each environment separately and ensure that they do not interfere with each other.

Functionality similar to Control-M workspaces can thus be achieved by defining separate CronJobs for each environment. For example, you can create a development CronJob, a staging CronJob, and a production CronJob, each with its own schedule and configuration.

Another approach is to use Kubernetes ConfigMaps and Secrets to manage environment-specific configurations and credentials. A ConfigMap stores configuration data as key-value pairs or as files. Non-sensitive, environment-specific settings such as database URLs can be stored in a ConfigMap, while credentials such as API keys belong in a Secret. Both can be referenced in CronJobs to ensure that each job uses the appropriate configuration.
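The following sketch combines both ideas for a staging environment. All names (the staging namespace, app-config, app-secrets, my-image) and the configuration values are hypothetical, and the Secret is assumed to exist already in the same namespace.

```yaml
# Sketch with hypothetical names and values for one environment.
apiVersion: v1
kind: Namespace
metadata:
  name: staging
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: app-config
  namespace: staging
data:
  DATABASE_URL: "postgres://staging-db.example.com:5432/app"
  LOG_LEVEL: "debug"
---
apiVersion: batch/v1
kind: CronJob
metadata:
  name: my-cronjob
  namespace: staging
spec:
  schedule: "0 2 * * *"
  jobTemplate:
    spec:
      template:
        spec:
          containers:
          - name: my-container
            image: my-image
            envFrom:
            - configMapRef:
                name: app-config      # every key becomes an environment variable
            env:
            - name: API_KEY
              valueFrom:
                secretKeyRef:
                  name: app-secrets   # assumed to exist in this namespace
                  key: api-key
          restartPolicy: OnFailure
```

Repeating the ConfigMap and CronJob in a production namespace with different values keeps the job definition identical across environments while the configuration varies.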

Creating a job failure alert

To create a job failure alert in Kubernetes CronJob, you can follow these steps:

a. Create a Kubernetes pod that runs a monitoring script, which checks the status of the job. This monitoring script can be written in any language, but it should be able to communicate with the Kubernetes API.

b. In the monitoring script, check the status of the job. If the job is running, do nothing. If the job has been completed, check its status.

    apiVersion: v1
    kind: Pod
    metadata:
      name: job-monitor
      labels:
        app: job-monitor
    spec:
      containers:
      - name: job-monitor
        image: my-monitoring-image
        env:
        - name: JOB_NAME
          value: my-job
        - name: NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        command: ["/bin/sh"]
        args:
        - -c
        - |
          # Check the Job's Failed condition once a minute; on failure,
          # print the job details and send an email.
          while true; do
            kubectl get job "$JOB_NAME" -n "$NAMESPACE" \
              -o jsonpath='{.status.conditions[?(@.type=="Failed")].status}' | grep -q 'True' &&
              kubectl describe job "$JOB_NAME" -n "$NAMESPACE" &&
              echo "Job $JOB_NAME failed" | mail -s 'Job Failed' my-email@example.com
            sleep 60
          done

In the above example, the pod runs a monitoring script that uses the kubectl command to check the status of the job specified by the JOB_NAME environment variable. The script runs in an infinite loop, checking the status of the job every minute.

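If the job status is ‘Failed’, the above script uses kubectl describe to get more information about the job and sends an email alert to my-email@example.com (a service such as PagerDuty can be used instead). The monitoring image must include kubectl and a mail client, and the pod’s service account needs permission to read jobs.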
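As an alternative to a polling pod, a failure alert can be sketched as a PrometheusRule on the kube_job_status_failed metric. This assumes that the Prometheus Operator and kube-state-metrics are installed; the rule name, alert name, namespace, and release label are hypothetical.

```yaml
# Sketch only: assumes Prometheus Operator and kube-state-metrics are running.
apiVersion: monitoring.coreos.com/v1
kind: PrometheusRule
metadata:
  name: job-failure-alert
  labels:
    release: prometheus   # assumption: must match the Operator's rule selector
spec:
  groups:
  - name: job-failure.rules
    rules:
    - alert: KubernetesJobFailed
      # kube_job_status_failed counts the failed pods of each Job.
      expr: kube_job_status_failed{namespace="default"} > 0
      for: 1m
      labels:
        severity: critical
      annotations:
        summary: "Job {{ $labels.job_name }} in {{ $labels.namespace }} has failed pods"
```

The alert keeps firing for as long as the failed Job object exists, so the failedJobsHistoryLimit of the CronJob also determines how long the alert stays active.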

Workload change manager

Kubernetes CronJob workload management involves managing and scheduling jobs to run on a Kubernetes cluster. While there are some similarities between workload change management in Control-M and Kubernetes CronJob management, there are also some key differences.

Some features of Kubernetes CronJob workload change management that are similar to Control-M include:

a. Change requests: Kubernetes provides a centralised interface for managing changes to job schedules and workflows, enabling workload change managers to review and approve changes.

b. Non-production environment: Kubernetes namespaces or separate clusters can serve as non-production environments, where changes can be tested before they are implemented in the production environment.

c. Access controls: Kubernetes offers granular access controls that allow workload change managers to restrict access to specific areas of the system. This helps to ensure that only authorised personnel are able to make changes to the system.
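Such access controls are typically expressed with Kubernetes RBAC. The sketch below grants a group the right to change CronJobs and start on-demand runs in one namespace only. The production namespace, the role names, and the batch-operators group are hypothetical and depend on how users are authenticated in your cluster.

```yaml
# Sketch with hypothetical namespace, role, and group names.
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: cronjob-editor
  namespace: production
rules:
- apiGroups: ["batch"]
  resources: ["cronjobs"]
  verbs: ["get", "list", "watch", "create", "update", "patch", "delete"]
- apiGroups: ["batch"]
  resources: ["jobs"]
  verbs: ["get", "list", "watch", "create"]   # create allows on-demand runs
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: cronjob-editor-binding
  namespace: production
subjects:
- kind: Group
  name: batch-operators        # hypothetical group from the identity provider
  apiGroup: rbac.authorization.k8s.io
roleRef:
  kind: Role
  name: cronjob-editor
  apiGroup: rbac.authorization.k8s.io
```

Users outside the group cannot modify schedules in the production namespace unless another role grants it.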

Running CronJobs in parallel with EKS

The .spec.concurrencyPolicy field of a CronJob controls whether a new run may start while the previous run is still active. The default value is ‘Allow’, which permits overlapping runs; it can also be set explicitly:

    spec:
      schedule: "*/5 * * * *"
      concurrencyPolicy: Allow

To run several pods of the same job in parallel, set parallelism in the job template, as in the following manifest:

    apiVersion: batch/v1
    kind: CronJob
    metadata:
      name: my-cronjob
    spec:
      schedule: "*/5 * * * *"
      jobTemplate:
        spec:
          # Number of pods of this job that may run at the same time.
          parallelism: 2
          template:
            spec:
              containers:
              - name: my-container
                image: my-docker-image
                command: ["my-command"]
              restartPolicy: OnFailure

Running multiple jobs concurrently or in parallel can increase the load on the cluster and cause performance issues. It’s important to select this workload after due diligence on the impact and by provisioning adequate resources.

Running non-container jobs from Kubernetes CronJob

This is very simple and involves using a Kubernetes pod template to run a command or script that can execute the external job.

a. Create a pod template that runs the command or script that can execute the external job.

b. This pod template runs a container with alpine:latest image and runs the external-job-command command.

c. Create a CronJob that uses the pod template you just created. For example:

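    apiVersion: batch/v1
    kind: CronJob
    metadata:
      name: my-cronjob
    spec:
      schedule: "0 1 * * *"
      jobTemplate:
        spec:
          template:
            spec:
              containers:
              - name: my-container
                image: alpine:latest
                # -c makes the shell run the command string instead of
                # treating it as a script file.
                command: ["/bin/sh", "-c", "external-job-command"]
              restartPolicy: OnFailure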

This CronJob schedules the pod to run the external-job-command command.

On-demand execution and forced restarts

To manually trigger a CronJob, use kubectl create job JOB_NAME --from=cronjob/CRONJOB_NAME, where JOB_NAME is the name for the new run. Through the Kubernetes API, the same is done by creating a Job in the batch/v1 API from the CronJob’s jobTemplate.

When a CronJob is manually triggered, a new job object is created with its own name and a new pod is scheduled to run the workload. Existing job objects and pods are not terminated by this; they are retained until the history limits described below remove them.

How overlapping runs are handled is controlled by the spec.concurrencyPolicy field, which can be set to ‘Allow’ (the default), ‘Forbid’, or ‘Replace’. Retries after a failure are governed separately by the job template’s spec.backoffLimit and the pod’s restartPolicy.

The Forbid policy prevents concurrent executions of the same CronJob by skipping the new run. Allow permits concurrent executions, and Replace replaces the old job with a new one if the old job is still running when the next scheduled execution time arrives.

Additionally, you can configure the spec.failedJobsHistoryLimit and spec.successfulJobsHistoryLimit fields to control the number of failed and successful job objects that are retained by the CronJob controller. This helps with troubleshooting and audit logging.
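The fields discussed in this section can be combined in one manifest, as in the sketch below. The CronJob name, image, and command are placeholders, and backoffLimit is included as an assumption about how retries would usually be bounded.

```yaml
# Sketch: concurrency, retry, and history settings on one CronJob.
apiVersion: batch/v1
kind: CronJob
metadata:
  name: my-cronjob
spec:
  schedule: "*/5 * * * *"
  concurrencyPolicy: Forbid        # skip a run while the previous one is active
  successfulJobsHistoryLimit: 3    # keep the last 3 successful Jobs
  failedJobsHistoryLimit: 3        # keep the last 3 failed Jobs
  jobTemplate:
    spec:
      backoffLimit: 2              # retry a failing Job at most twice
      template:
        spec:
          containers:
          - name: my-container
            image: my-image
            command: ["/bin/sh", "-c", "echo hello"]
          restartPolicy: Never     # each retry runs in a fresh pod
# On-demand run of the same template (run from a shell, not part of the manifest):
#   kubectl create job my-cronjob-manual --from=cronjob/my-cronjob
```

With restartPolicy set to Never, every retry leaves its own pod behind, which makes the logs of each attempt easier to inspect.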

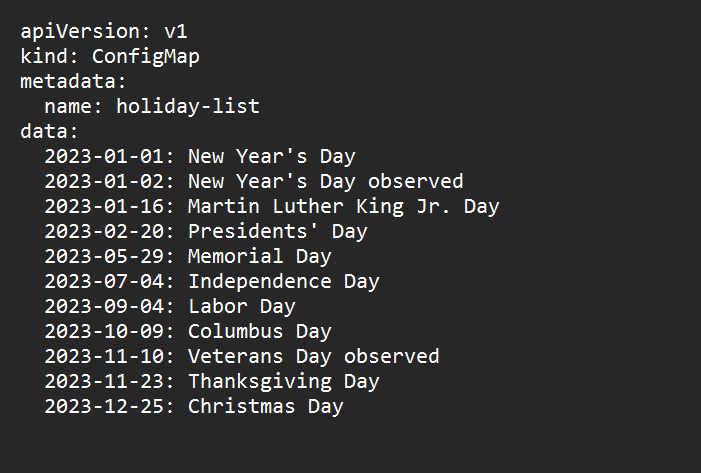

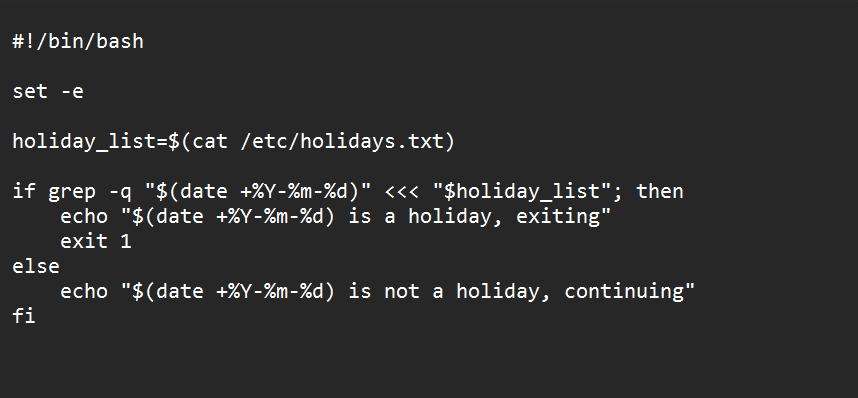

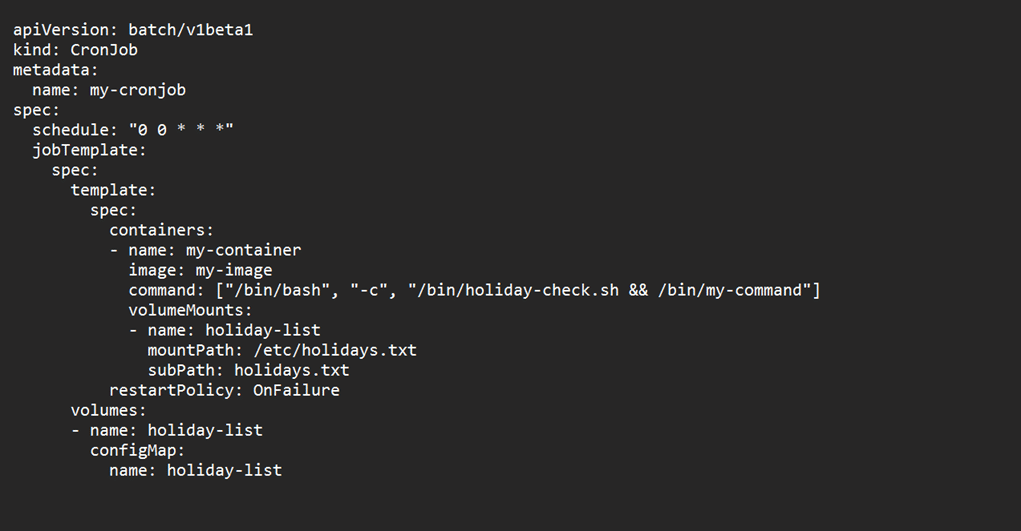

Preventing Kubernetes CronJobs from running on holidays

a. To prevent jobs from running on holidays, you can use external holiday calendars or APIs to generate a list of holidays and exclude them from the schedule. You can also use Kubernetes Jobs to trigger CronJobs based on a file or job trigger (refer Figures 1, 2 and 3).

b. Create a ConfigMap that contains a list of holidays in the format YYYY-MM-DD.

c. Create a script that checks if the current date is a holiday, and exits with an error code if it is.

d. Mount the ConfigMap as a file in the pod that runs the CronJob.

In the above example, CronJob runs a container that executes a Bash script /bin/holiday-check.sh before running the actual command /bin/my-command. The Bash script reads the holiday list from the mounted ConfigMap file /etc/holidays.txt and exits with an error code if the current date is a holiday.
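In text form, the approach could be sketched as follows. This is not the exact configuration from the figures: the check is written inline rather than in /bin/holiday-check.sh, the ConfigMap name and the two dates are sample values, and the image is assumed to contain a shell, grep, and /bin/my-command. As a deliberate deviation, the sketch exits cleanly on a holiday instead of returning an error code, so that the skipped run is not retried or reported as a failure.

```yaml
# Sketch with sample dates and hypothetical names.
apiVersion: v1
kind: ConfigMap
metadata:
  name: holidays
data:
  holidays.txt: |
    2025-01-01
    2025-12-25
---
apiVersion: batch/v1
kind: CronJob
metadata:
  name: my-cronjob
spec:
  schedule: "0 5 * * 1-5"
  jobTemplate:
    spec:
      template:
        spec:
          containers:
          - name: my-container
            image: my-image
            command:
            - /bin/sh
            - -c
            - |
              # Container clocks normally use UTC unless TZ is set.
              today=$(date +%Y-%m-%d)
              # -x matches whole lines only, -F treats the date as plain text.
              if grep -qxF "$today" /etc/holidays.txt; then
                echo "$today is a holiday, skipping run"
                exit 0
              fi
              exec /bin/my-command
            volumeMounts:
            - name: holidays
              mountPath: /etc/holidays.txt
              subPath: holidays.txt    # mount the single key as a file
          volumes:
          - name: holidays
            configMap:
              name: holidays
          restartPolicy: OnFailure
```

Updating the holiday list then only requires a change to the ConfigMap; the CronJob itself stays untouched.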

Sample code for scheduling jobs

a. To run a job at a fixed interval, you can use the sample code given below. For a daily run at a specific time, change the schedule on line 6, for example to 0 5 * * * for 5:00 a.m. every day:

    apiVersion: batch/v1
    kind: CronJob
    metadata:
      name: my-cronjob
    spec:
      schedule: "*/5 * * * *"
      jobTemplate:
        spec:
          template:
            spec:
              containers:
              - name: my-container
                image: my-image
                command: ["/bin/sh", "-c", "echo hello"]
              restartPolicy: OnFailure
            metadata:
              labels:
                app: my-app
      successfulJobsHistoryLimit: 3
      failedJobsHistoryLimit: 3

In the above code, a CronJob is scheduled to run every 5 minutes (line 6). The job runs a simple command to echo ‘hello’. The restartPolicy is set to OnFailure, which means that the container will be restarted if it fails.

b. To run a job once a week, use the following format in the above code, line 6:

0 5 * * 0

The job will run at 5:00 a.m. every Sunday. The last digit (0) indicates the day of the week, where Sunday is 0 and Saturday is 6.

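c. To run a job at the end of every month, use the following format in the above code, line 6.

    0 0 28-31 * *

The job will run at midnight on each of the 28th, 29th, 30th, and 31st days that exist in the month, so it can run up to four times. Cron syntax has no ‘last day of the month’ field; to run only on the last day, the container’s command has to check that the following day is the 1st and exit otherwise.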
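Because the schedule 0 0 28-31 * * fires on every one of those days, one way to act only on the last day of the month is to test inside the container whether tomorrow is the 1st. The sketch below assumes an image whose date command accepts -d @SECONDS (GNU and BusyBox date both do), a container clock in UTC, and a hypothetical /bin/my-command.

```yaml
# Sketch: run the real command only on the last day of the month.
apiVersion: batch/v1
kind: CronJob
metadata:
  name: month-end-job
spec:
  schedule: "0 0 28-31 * *"
  jobTemplate:
    spec:
      template:
        spec:
          containers:
          - name: my-container
            image: my-image
            command:
            - /bin/sh
            - -c
            - |
              # Day of month 24 hours from now (assumes a UTC clock, no DST).
              tomorrow=$(date -d "@$(( $(date +%s) + 86400 ))" +%d)
              if [ "$tomorrow" != "01" ]; then
                echo "not the last day of the month, skipping run"
                exit 0
              fi
              exec /bin/my-command
          restartPolicy: OnFailure
```

The skipped runs still create Job objects, so they appear in the job history as short successful runs.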

d. To prevent a job from running on certain days, you’ll need to create a list of those days and check it before the job runs. Here’s an example in Linux crontab syntax:

    0 5 * * 1-5 [ $(date +\%w) -ne 2 ] && /path/to/command

This example runs the job every weekday at 5:00 a.m. (Monday to Friday) but skips the job on Tuesdays. The $(date +\%w) command gets the day of the week, where Sunday is 0 and Saturday is 6. The expression -ne 2 means ‘not equal to 2’, which excludes Tuesdays. In a Kubernetes CronJob, the schedule field holds only the five time fields (0 5 * * 1-5), so the test belongs in the container’s command.

e. To run a job only when a specific file exists or another job has completed, you can use a file trigger or job trigger. Here’s an example of a file trigger in Linux crontab syntax:

    0 5 * * * test -e /path/to/file && /path/to/command

Every day at 5:00 a.m., the test command checks whether the specified file exists. If the file exists, the command runs. If the file doesn’t exist, the command won’t run. In a Kubernetes CronJob, the same check goes in the container’s command, because the schedule field accepts only the time fields.
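For a Kubernetes CronJob, a file trigger of this kind can be sketched by placing the check in the container command and mounting the storage where the file is expected. The flag file path, the claim name shared-data-pvc, and the image are hypothetical; the volume is assumed to be written to by whichever upstream process produces the file.

```yaml
# Sketch: run the command only if a trigger file exists on a shared volume.
apiVersion: batch/v1
kind: CronJob
metadata:
  name: file-triggered-job
spec:
  schedule: "0 5 * * *"
  jobTemplate:
    spec:
      template:
        spec:
          containers:
          - name: my-container
            image: my-image
            command:
            - /bin/sh
            - -c
            - |
              if [ ! -e /data/incoming/ready.flag ]; then
                echo "trigger file not found, skipping run"
                exit 0
              fi
              exec /path/to/command
            volumeMounts:
            - name: shared-data
              mountPath: /data
          volumes:
          - name: shared-data
            persistentVolumeClaim:
              claimName: shared-data-pvc   # hypothetical, must already exist
          restartPolicy: OnFailure
```

Exiting with status 0 when the file is missing treats a skipped run as normal; exiting with a non-zero status instead would make the Job retry until the file appears or the retry limit is reached.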

Complex examples of job scheduling

Here’s an example of how to create an alert for a CronJob that runs longer than 5 minutes.

- Create a Kubernetes job completion event rule in Prometheus as shown in Figure 4.

This rule uses the kube_job_status_completion_time metric provided by the kube-state-metrics exporter to track the completion time of the CronJob’s jobs. The max_over_time function calculates the maximum over the last 5 minutes, and the rule triggers an alert if the 5-minute limit is exceeded.

- Configure Prometheus to send alerts to a notification system such as Slack or PagerDuty.

- Apply the Prometheus alert rule to your Kubernetes cluster using a PrometheusRule object.
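A text sketch of such a rule is given below. It takes a slightly different route from the one described above: instead of the completion time, it compares the start time of jobs that are still active with the current time. It assumes the Prometheus Operator and kube-state-metrics are installed; the rule name, alert name, namespace, and release label are hypothetical.

```yaml
# Sketch only: alert on Jobs that have been active for more than 5 minutes.
apiVersion: monitoring.coreos.com/v1
kind: PrometheusRule
metadata:
  name: cronjob-sla-alert
  labels:
    release: prometheus   # assumption: must match the Operator's rule selector
spec:
  groups:
  - name: cronjob-sla.rules
    rules:
    - alert: CronJobExceededSLA
      # Seconds since the Job started, limited to Jobs with running pods.
      expr: |
        (time() - kube_job_status_start_time{namespace="default"}) > 300
        and on (namespace, job_name)
        kube_job_status_active{namespace="default"} > 0
      labels:
        severity: warning
      annotations:
        summary: "Job {{ $labels.job_name }} has been running for more than 5 minutes"
```

The alert clears by itself once the job finishes, because kube_job_status_active then drops to zero.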

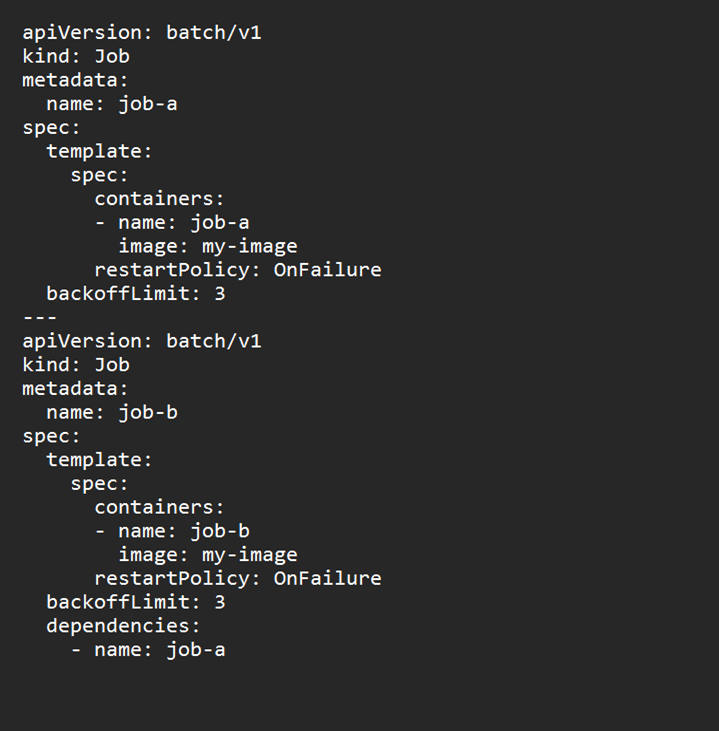

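To create a job that depends on another job (refer Figure 5), note that the batch/v1 CronJob API does not define a spec.dependencies field. A common workaround is to add an init container that waits for the upstream job to complete before the main container starts, or to use an external workflow tool.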
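One way to express such a dependency with standard fields only is an init container that blocks until the upstream job has completed. The sketch below is an illustration under assumptions: upstream-job is a hypothetical Job in the same namespace, the init container image is assumed to ship kubectl, and the job-watcher service account is assumed to have permission to get, list, and watch jobs.

```yaml
# Sketch: the main container starts only after upstream-job has completed.
apiVersion: batch/v1
kind: CronJob
metadata:
  name: downstream-job
spec:
  schedule: "0 6 * * *"
  jobTemplate:
    spec:
      template:
        spec:
          serviceAccountName: job-watcher   # hypothetical; needs read access to jobs
          initContainers:
          - name: wait-for-upstream
            image: bitnami/kubectl:latest    # assumption: any image with kubectl works
            command:
            - kubectl
            - wait
            - --for=condition=complete
            - job/upstream-job
            - --timeout=600s
          containers:
          - name: my-container
            image: my-image
            command: ["/bin/my-command"]
          restartPolicy: OnFailure
```

If the upstream job does not complete within the timeout, the init container fails and the main container does not start, so the dependency is enforced rather than merely checked.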

Containerisation has revolutionised the way applications are developed, deployed, and managed. Kubernetes CronJob is a powerful tool for scheduling and running periodic jobs in a containerised environment, enabling users to take full advantage of the benefits of containerisation.