It was just another day. I turned on the computer and started listing the day's work in my mind while it booted. But to my surprise, the monitor showed the message, 'Hard disk not found'. At first, I thought it could be a loose connection, which is nothing to worry about. But then I recalled the previous day's programming experiments and realised that I had damaged the file system of my hard disk! Thanks to TestDisk (cgsecurity.org), I was able to recover the entire disk with all the partitions and files. Not a single file was lost. Even the bootloader was restored.

But this does not imply that any disk damage can be undone and everything can be recovered. I knew I was lucky this time. So after this episode, I started to take frequent backups of important data.

Before introducing some important backup utilities for desktops and workstations, let's discuss the importance of data backups.

When recoveries fail and backups succeed

Data recovery and backup are the two options you have when important files get lost. The third one is starting everything from scratch, which drives you crazy. You perform recovery when you accidentally delete some files or your disk is partially damaged, while data backup is the process of creating duplicates of your data frequently and restoring them when needed. Data recovery only retrieves what is lost, i.e., there is nothing to do until you have lost something. You may not feel the need for data backup, but be aware that it is the most reliable way to safeguard data.

As mentioned earlier, there are many tools like TestDisk, which can recover your whole hard disk. But recovering lost data from a disk always comes with some challenges. Recovery depends on a single storage device and any negative parameter related to it can ruin the entire process. Sometimes the disk is physically damaged; or the files are overwritten, which makes them unrecoverable. Also, you cannot say for sure that all disks and file systems are supported.

Backing up is not something you can start once your data is already lost. You should take backups of your files/folders whenever you make important changes. Many backup utilities can automate this process, making things easier. Copies of your data (or of only the changes) can be stored in a remote location, or just on another local disk, making your data immortal. Restoring from backups is a simple process.

Why a simple copy-paste isn’t enough

Does the term 'backup' sound challenging to you? For many, it seems to be a process that can only be done by experts with the help of sophisticated tools. But let me correct that: just keeping a copy of your files on a CD or some other storage medium is a backup. If you are an experienced user, you might think that I am silly to mention this as an important point. But believe me, there are many to whom data backup is still an alien concept.

However, a simple copy-paste isn't enough, especially when you are doing a long-term project. Imagine that you are shooting a movie. Every day you capture some video clips and add them to your library (say, a folder titled CLIPS). What should be your backup strategy here? You have two choices, if you don't want to think about backup software at this stage. The first one is to take a copy of the folder CLIPS each time you make a change, and rename it with the current date (e.g., CLIPS_28_07_2015). The second one is to take copies of the changes only.

In the first case, you are wasting a lot of space, while in the second, it is very difficult to keep track of each change. Either way, you have to do everything manually, wasting a lot of time and making way for confusion. Therefore, automated backup utilities are necessary.
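To make the first choice concrete, here is a minimal sketch of the dated copy as a shell function. The name snapshot_copy and the destination folder are made up for this illustration; the date suffix follows the CLIPS_28_07_2015 pattern used above.

```shell
#!/bin/sh
# Hypothetical helper: copy a folder and tag the copy with today's date.
# Usage: snapshot_copy CLIPS /path/to/backups
snapshot_copy() {
    src=$1          # folder to preserve, e.g. CLIPS
    dest_root=$2    # existing folder that collects the dated copies
    stamp=$(date +%d_%m_%Y)                     # e.g. 28_07_2015
    target="$dest_root/$(basename "$src")_$stamp"
    if [ -e "$target" ]; then
        # A copy with today's date is already there; do not nest another one
        echo "skipping: $target already exists" >&2
        return 1
    fi
    cp -a "$src" "$target"    # -a keeps timestamps, permissions and links
    echo "$target"            # print where the copy went
}
```

Every run duplicates the whole folder, so running du -sh on the destination after a few days shows how quickly the space adds up.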

Online or offline backups?

We have just seen the importance of automated backup utilities, and we are going to check two of them. But we should discuss another important thing before that: whether automatic or manual backups are best for you. Also, should you go for CDs, DVDs, hard disks or online storage? And further, we need to think about how to preserve these backups.

The answers are based on the longevity and reliability of these media. No medium has an infinite lifespan. Their actual lifespan may be a topic of considerable debate and depends on a lot of parameters. But studies show that you cannot rely upon CDs and DVDs for periods of over one or two decades, while hard disks have an amazingly short lifespan of just four to five years. It will be interesting to know more about M-Disc, which is supposed to have a lifespan of 1000 years. But it doesn't seem to be feasible right now. And I don't think tapes will be of any interest to a desktop user.

This means that if you rely on a local storage device, you have to constantly monitor and duplicate it. There is also another reason to do this: present data storage standards will become obsolete as technology advances.

This is where the importance of cloud storage comes in. Rely upon some online storage provider or buy some space from a hosting company so that they will take care of your backups. This means that you take the backup of your local files and upload it to a server, and your service provider will do everything to keep it forever. With remote storage, you may need encryption to keep your data safe. Thanks to the tools we are going to study, all of this is now automated.

Déjà Dup, the beginner's tool

Déjà Dup is a beginner's tool not because it is simplistic; rather, it is a highly automated and really user-friendly program, which makes it suitable for a beginner. In fact, it is a clever graphical wrapper around Duplicity, which is a sophisticated command line utility. We'll have a look at Duplicity later.

Since Déjà Dup is a front-end for Duplicity, it also follows the chain method, whereby a full backup is only taken the first time. The backups that follow record only the changes, which is a clever method used to save space. It is recommended to take full backups periodically, so that a bug or a damaged link in the chain cannot affect everything that follows.

Déjà Dup is shipped with Ubuntu while the basic editions of other distros may not have it. You can install it using your default package manager. In Debian-like systems, the following command will do (open the terminal, type it, and press Enter):

sudo apt-get install deja-dup

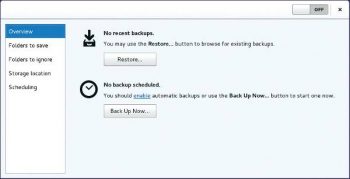

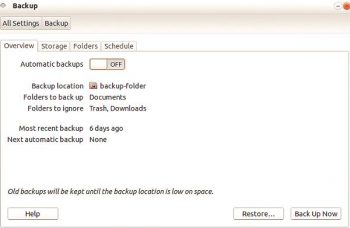

The latest version of Déjà Dup appears as Backup in menus. In Ubuntu, Déjà Dup can be opened from the System Settings window. Figure 1 shows the start screen of Déjà Dup in Debian and Figure 2 shows the same in Ubuntu.

Taking backups with Déjà Dup

This is very simple and can be done by performing the following basic steps:

1. Select the folders to be preserved in the Folders tab. Also choose which sub-folders are to be ignored.

2. Select the destination (i.e., where the backups are kept) in the Storage tab.

3. You can set a schedule in the Schedule tab, which takes effect only if you enable Automatic backups in the Overview tab.

4. Click the Back Up Now button in the Overview tab. You may now be prompted to set a password.

The Folders to ignore facility is highly useful in case you have to take the backup of a master directory, excluding some junk or bulk content inside it. The classic example is to take the backup of your home folder, excluding Trash and Downloads.

To test the use of Déjà Dup, please choose a lightweight folder in Step 1, select a Local Folder (e.g., /tmp) in Step 2, and go on.

If you are taking an important backup, you may choose an external disk as the Backup location. We'll look at an example for remote storage right after checking how to restore backups using Déjà Dup.

Restoring backups with Déjà Dup

The general process is somewhat as follows:

1. Click Restore... in the Overview tab.

2. Choose Restore from the newly-appeared dialogue box and click Forward. If you are storing backups in a single location, you have to change nothing here.

3. Déjà Dup asks for a password if you are storing your backups in a password-protected area (especially an FTP server). Give it and move on.

4. Now Déjà Dup asks for a restore point, i.e., the exact moment from when to restore. Choose a date/timestamp and go forward.

5. The next frame asks for a location to restore the backup. You can choose the original location or an alternative folder. The first option is best if you accidentally deleted a folder. Go on with the wizard.

6. Now you will be asked for the encryption password (if any). This is the password you used to encrypt your files while taking a backup. Give it and the restoration works.

Déjà Dup online backups

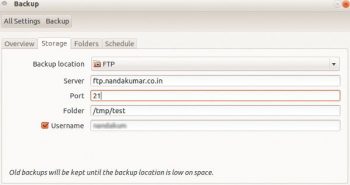

Déjà Dup supports many remote storage services. The new version supports Amazon S3 and Rackspace Cloud Files, in addition to the standard remote storage methods. Here we are going to experiment with the classic FTP storage. In order to perform this, you need an FTP account. I am using my FTP account in the website that I own.

Here are the steps:

1. Choose the folders in the Folders tab. Give your FTP account details in the Storage tab after selecting FTP from the Storage location combo box (Figure 3). Details about the server and port can be obtained from your service provider or Web hosting control panel. In my case, as I own nandakumar.co.in, the server is ftp.nandakumar.co.in and the port is 21. You also have to give the FTP account username. Folder refers to the path of the folder where the backups should be kept. The path should be relative to the FTP directory which is allocated to you.

2. Click Back Up Now in the Overview tab. In the upcoming steps, give your FTP account password and the encryption password for the backup (Figures 4 and 5) and you're done!

I don't think there is a need to explain the restoration process. Just click the Restore… button in the Overview tab and follow the instructions.

Note: If you'd like to use a Web hosting account for FTP backup storage, it is recommended that you create a dedicated account for this purpose. This ensures better privacy, isolation and security.

The simplicity of Duplicity

Although servers rely entirely on the command line, desktop users no longer do. But there is still a small community of people who like to explore its power and beauty. So let us explore Duplicity, the command line backend that powers Déjà Dup.

Duplicity supports GnuPG encryption and allows us to use local or remote storage methods (such as FTP, sftp, scp, WebDAV, WebDAVs, Google Docs, HSi and Amazon S3). It builds on librsync, a library implementing the algorithm behind rsync (a fast and reliable file copying tool), which helps in efficient data transfer. Duplicity handles deleted files, full UNIX permissions and symbolic links, but not hard links.

And the good news is that it is not really hard to use.

Warning: Duplicity may crash while copying /proc if you are backing up the root directory /. Use --exclude /proc to avoid this.

Installation

Open a terminal, type duplicity and press the Enter key to see something (probably an error message) so that you can ensure it is installed in your system. If not, use a package manager or give the following commands:

sudo apt-get install duplicity

sudo apt-get install ncftp

The second package is to get FTP support. Yes, it is optional.
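If you would rather not read an error message to find out, a small check like the following reports what is on your PATH. It is only a sketch; check_tools is a name I made up, and it relies on nothing beyond the standard command -v.

```shell
#!/bin/sh
# Hypothetical helper: report which of the given commands are on the PATH.
check_tools() {
    for tool in "$@"; do
        if command -v "$tool" >/dev/null 2>&1; then
            echo "$tool: installed"
        else
            echo "$tool: missing"
        fi
    done
}

# The two packages discussed above
check_tools duplicity ncftp
```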

Using Duplicity

The following example illustrates how we can store the backup of /home/nandakumar/test in /home/nandakumar/backup. Remember that the latter should be replaced with some external storage device, or there is no use in taking a backup. We won't go into that right now for the sake of simplicity.

Here is the command to be used for this purpose:

duplicity test file:///home/nandakumar/backup

We skipped /home/nandakumar from the path of test since the terminal is in the home folder by default (otherwise use a full path or cd). However, Duplicity insists that one of the paths must be a URI. That's why we left the second one intact.
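If typing the file:// form by hand feels error-prone, a helper can build it from an ordinary folder path. This is a minimal sketch; to_file_url is a hypothetical name, and it does not escape special characters, so it assumes plain folder names.

```shell
#!/bin/sh
# Hypothetical helper: turn an existing local folder into a file:// URL.
to_file_url() {
    # cd + pwd resolves relative paths such as "backup" to absolute ones
    abs=$(cd "$1" && pwd) || return 1
    printf 'file://%s\n' "$abs"
}

# Example (run from the home folder):
#   duplicity test "$(to_file_url backup)"
```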

Once this command is executed, the program asks for an encryption passphrase. Give something powerful and remember it.

If this command is run repeatedly, Duplicity copies changes since the last backup only. If you want to take a full backup, use the option full, as follows:

duplicity full test file:///home/nandakumar/backup
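Earlier I recommended taking full backups periodically. Duplicity can do that by itself with its --full-if-older-than option, and its collection-status action lists the chains stored at a target. The sketch below wraps both in functions; the function names and the one-month interval (1M) are my own choices.

```shell
#!/bin/sh
# Sketch: incremental backups, with a fresh full backup about once a month.
backup_with_periodic_full() {
    src=$1     # folder to back up, e.g. test
    url=$2     # target URL, e.g. file:///home/nandakumar/backup
    # Start a new chain when the last full backup is older than one month
    duplicity --full-if-older-than 1M "$src" "$url"
}

# Sketch: list the full and incremental backup sets kept at the target.
show_chains() {
    duplicity collection-status "$1"
}

# Example:
#   backup_with_periodic_full test file:///home/nandakumar/backup
#   show_chains file:///home/nandakumar/backup
```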

Restoration can also be done simply, using the following command:

duplicity file:///home/nandakumar/backup test
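Like the restore point in Déjà Dup, Duplicity can go back to an earlier state, and it can also pull out a single file. The following sketch uses the explicit restore action with the --time and --file-to-restore options as documented in the man page of the versions I have used; newer releases may name the latter differently, so check man duplicity on your system. The function names are hypothetical.

```shell
#!/bin/sh
# Sketch: restore the whole backup as it was some time ago.
# age uses Duplicity's time format, e.g. 3D for three days ago.
restore_as_of() {
    url=$1; age=$2; dest=$3
    duplicity restore --time "$age" "$url" "$dest"
}

# Sketch: restore a single file or sub-folder.
# rel_path is relative to the folder that was backed up.
restore_one() {
    url=$1; rel_path=$2; dest=$3
    duplicity restore --file-to-restore "$rel_path" "$url" "$dest"
}

# Example:
#   restore_as_of file:///home/nandakumar/backup 3D test_3_days_ago
#   restore_one file:///home/nandakumar/backup notes.txt notes_restored.txt
```

As far as I know, Duplicity refuses to restore into a destination that already exists unless you add --force, which is why the examples restore to new names.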

If you'd like to exclude some files/sub-folders from being backed up, use the --exclude option, as follows:

duplicity --exclude test/subfolder1 test file:///home/nandakumar/backup
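The classic Déjà Dup example, backing up the home folder without Trash and Downloads, can be sketched with Duplicity too. Each excluded path is written with the source folder as its prefix, which is the form I am sure Duplicity accepts. The function name is made up, and .local/share/Trash is an assumption about where your desktop keeps its trash.

```shell
#!/bin/sh
# Sketch: back up a home folder, leaving out Downloads and the trash.
backup_home() {
    home_dir=$1    # e.g. /home/nandakumar
    url=$2         # e.g. file:///media/disk/backup
    duplicity \
        --exclude "$home_dir/Downloads" \
        --exclude "$home_dir/.local/share/Trash" \
        "$home_dir" "$url"
}

# Example:
#   backup_home /home/nandakumar file:///media/disk/backup
```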

The non-interactive route

From the above examples, we've seen that most of the time when we run Duplicity, it asks for passwords. This is inconvenient if we are writing a shell script. There should not be any prompt at all. That's why Duplicity supports inputs via environment variables. Let's look at the following example:

export PASSPHRASE=MyEncryptionPassword

export FTP_PASSWORD=MyFTPPassword

duplicity test ftp://username@ftp.domain.com/backup

unset PASSPHRASE

unset FTP_PASSWORD

In the above example, the folder test is backed up into another folder backup, which resides in ftp.domain.com. If you use local storage, there is no need to set an FTP_PASSWORD.

Note: If you type these lines at the prompt, they can end up in your bash history file. Delete them from there for security.
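One way to keep the passphrase out of the history altogether is to never type it at the prompt. The sketch below reads it from a file that only you can read (chmod 600) and passes it to Duplicity for a single command. The file location and the function name run_backup are hypothetical; FTP_PASSWORD could be handled in the same way.

```shell
#!/bin/sh
# Sketch of a backup script that never prompts for the passphrase.
# PASS_FILE is a hypothetical location; protect it with: chmod 600 "$PASS_FILE"
PASS_FILE=${PASS_FILE:-$HOME/.backup-passphrase}

run_backup() {
    src=$1     # folder to back up
    url=$2     # Duplicity target URL
    if [ ! -r "$PASS_FILE" ]; then
        echo "cannot read $PASS_FILE" >&2
        return 1
    fi
    # The variable is set for this one command only, so no unset is needed
    PASSPHRASE=$(cat "$PASS_FILE") duplicity "$src" "$url"
}

# Example:
#   run_backup /home/nandakumar/test file:///home/nandakumar/backup
```

With an actual call to run_backup added as the last line, the script can be started from cron or any other scheduler, since nothing in it waits for keyboard input.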

A final word

We now know the basics of two backup utilities. However, I suggest you read up on Duplicity to find out more about its hidden power (run the command man duplicity).

If you work on some long-term projects, make use of these tools. But it is better to stick to the classic copy-paste method once you have the final product (if you have completed writing a novel, for instance). This helps to avoid software dependencies.

References

[1] https://wiki.gnome.org/Apps/DejaDup

[2] http://duplicity.nongnu.org/duplicity.1.html

[3] https://help.ubuntu.com/community/DuplicityBackupHowto