The historic rise in the adoption of Linux containers makes for interesting reading.

Steve, a software engineer at a big tech firm in Silicon Valley, has spent a year working with his team on a cool new artificial intelligence system, developed locally using a collection of open source tools, libraries and software. The team now faces the mammoth task of deploying it onto live servers, the stage at which the real problems start kicking in!

The team had written all its scripts in Python 2.7, before realising that the production servers had only Python 3 installed. A number of dependencies had also been inadvertently upgraded and, as a result, much of the code relied on features that were deprecated or had been removed. On top of that, the production environment had different security policies, network topologies and types of storage, which the application could not handle. Pipelines broke down, responses were lost and, ultimately, the app crashed, splattering error messages across the terminal.

There are obvious issues with deployment, one being the lack of compatibility across platforms and operating systems. System paths need to be set, dependencies installed, and versions tracked for multiple packages. Modern package managers, virtual environments and automated testing certainly make things easier. Still, taking the infrastructure from a local development server to production grade is a nightmarish task for even the most skilled team of developers. This situation was extremely common until the arrival of isolated environments within the Linux operating system, an idea intuitively named Linux containers.

The idea was not the first of its kind: FreeBSD jails had existed since 2000, and chroot-based isolation on Unix-like systems is older still. Support for Linux containers grew gradually, beginning with Jacques Gélinas’ VServer project. Today’s containers let you package an application together with the entire runtime environment it depends on, and run it in isolation. This makes it easy to move the ‘contained’ application from development to testing and production while retaining full functionality. Linux containers also help minimise conflicts between development and operations teams by separating areas of responsibility: developers focus on apps, and the operations team focuses on infrastructure. A container image is essentially a standalone, executable software package comprising all the tools, libraries, settings and binaries required to run the code in (almost) any operating environment. It streamlines a number of tasks that would otherwise cost considerable man-hours to set up.

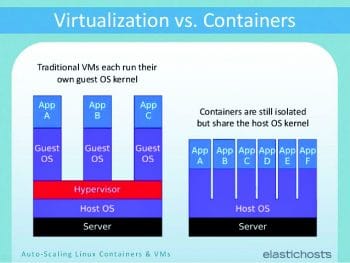

Containers vs virtualisation

Containers provide isolated environments, a form of operating system-level virtualisation, each with its own process and network space. Because containers share the host's kernel, they are far lighter than virtual machines, which provide full virtualisation in an attempt to replicate the functionality of a physical computer, with each one running a complete guest operating system. Virtual machines typically rely on a hypervisor to share and manage the hardware, allowing multiple isolated environments to run on the same physical machine.

There are a number of trade-offs between virtualisation and containerisation, but the primary difference is shown in Figure 3.
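On Linux, a container's own process and network space is implemented with kernel namespaces, which the kernel exposes per process as symbolic links under `/proc/<pid>/ns/`. Reading such a link returns a string such as `net:[4026531840]`, and two processes share a namespace when these links carry the same inode number. The minimal Python sketch below compares two link targets. The helper names are hypothetical and the inode values used with it are illustrative. Comparing the link text is a simplification: the `namespaces(7)` man page recommends comparing the `st_dev` and `st_ino` fields returned by `stat()`.

```python
import os
import re
from typing import Tuple

# Link targets look like "net:[4026531840]": the namespace type, then an inode number.
_NS_LINK = re.compile(r"^(?P<kind>[a-z_]+):\[(?P<inode>\d+)\]$")


def parse_ns_link(target: str) -> Tuple[str, int]:
    """Split a /proc/<pid>/ns/* link target into (namespace type, inode)."""
    match = _NS_LINK.match(target.strip())
    if match is None:
        raise ValueError(f"not a namespace link target: {target!r}")
    return match.group("kind"), int(match.group("inode"))


def share_namespace(target_a: str, target_b: str) -> bool:
    """Return True if both link targets name the same namespace (same type and inode)."""
    kind_a, inode_a = parse_ns_link(target_a)
    kind_b, inode_b = parse_ns_link(target_b)
    if kind_a != kind_b:
        raise ValueError(f"cannot compare a {kind_a} namespace with a {kind_b} namespace")
    return inode_a == inode_b


def read_ns_link(pid: int, kind: str) -> str:
    """Read a live process's namespace link (Linux only; may require root)."""
    return os.readlink(f"/proc/{pid}/ns/{kind}")
```

Run from the host, comparing `read_ns_link(pid, "net")` for an ordinary host process with the same link for a process inside a container that has its own network namespace should give `False`.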

Features of Linux containers

Containers provide a simplified pipeline for shipping a product across testing and production environments by eliminating platform-specific requirements for an application.

Lightweight and resource-friendly: They permit multiple instances of applications or operating systems to run on a single host without much CPU and memory overhead, saving both rack space and power.

Rapid and easy deployment: Containers simplify deployment, which becomes largely a matter of downloading the desired image and launching multiple instances of it across multiple servers. Testing also becomes swift, because clean system images are readily available.

Process and resource isolation: Isolation is the primary use case for containers, and Linux containers handle it well. Multiple applications can run safely and securely on a single system; if the security of one is compromised, isolation helps keep the others unaffected.

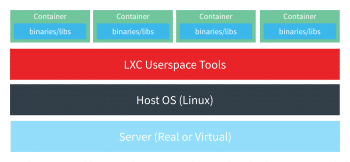

Services: As adoption rose, services were developed to support the idea of a container. Control groups (cgroups) are a kernel feature for limiting, and accounting for, the CPU, memory and other resources used by groups of processes. An init system such as systemd can be used to set up and manage these processes within the isolated environment. Another key feature is user namespaces, which allow privileges inside a container to be isolated from those on the host without much modification of the environment: a process can be root within its namespace while mapping to an unprivileged user outside it.
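To make the cgroup and user namespace ideas concrete, the Python sketch below interprets three kernel interface files. It assumes cgroup v2, where a group's CPU limit is read from `cpu.max` (under `/sys/fs/cgroup/<group>/`) as `$MAX $PERIOD` in microseconds and its memory limit from `memory.max` as a byte count, with `max` meaning no limit in both; cgroup v1 uses different files. A process's `/proc/<pid>/uid_map` lists ranges of the form `inside outside length`, and the sketch assumes the map is read from the parent (host) namespace, where a line such as `0 100000 65536` means container root is host UID 100000. The function names are hypothetical and the sample values are illustrative.

```python
from typing import Optional

DEFAULT_CPU_PERIOD_US = 100_000  # cgroup v2 default period for cpu.max, in microseconds


def parse_cpu_max(text: str) -> Optional[float]:
    """Interpret a cgroup v2 cpu.max value ("$MAX $PERIOD").

    Returns the quota as a fraction of one CPU, or None when $MAX is "max"
    (no limit). For example, "50000 100000" allows half of one CPU per period.
    """
    fields = text.split()
    quota = fields[0]
    period = int(fields[1]) if len(fields) > 1 else DEFAULT_CPU_PERIOD_US
    if quota == "max":
        return None
    return int(quota) / period


def parse_memory_max(text: str) -> Optional[int]:
    """Interpret a cgroup v2 memory.max value: a byte count, or "max" for no limit."""
    value = text.strip()
    return None if value == "max" else int(value)


def map_uid(uid_map: str, inside_uid: int) -> Optional[int]:
    """Translate a UID inside a user namespace to the corresponding UID outside it.

    Each uid_map line is "<first ID inside> <first ID outside> <range length>".
    Returns None if the UID falls outside every mapped range.
    """
    for line in uid_map.splitlines():
        if not line.strip():
            continue
        inside_start, outside_start, length = (int(field) for field in line.split())
        if inside_start <= inside_uid < inside_start + length:
            return outside_start + (inside_uid - inside_start)
    return None
```

With the mapping `0 100000 65536`, root inside the container (UID 0) corresponds to the unprivileged host UID 100000, which is why a compromised root process in the container does not automatically hold root privileges on the host.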

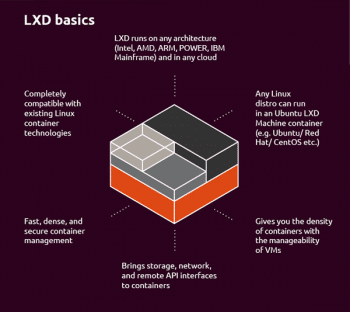

Container management: LXD provides a simple, efficient means of managing multiple containers through a REST interface. It can handle a large number of images, including pre-made images for a variety of Linux distributions.
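As a rough illustration of LXD's REST interface, the Python sketch below sends a GET request to the LXD daemon over its local Unix socket using only the standard library. The socket path is an assumption that depends on how LXD was installed (snap installs typically use `/var/snap/lxd/common/lxd/unix.socket`, other packages `/var/lib/lxd/unix.socket`). The sketch also assumes `/1.0/instances` as the listing endpoint, which recent LXD releases use (older releases use `/1.0/containers`). The class and function names are hypothetical.

```python
import http.client
import json
import socket
from typing import Any, Dict, List

# Assumed socket path for a snap-based LXD install; adjust for your system.
DEFAULT_LXD_SOCKET = "/var/snap/lxd/common/lxd/unix.socket"


class UnixHTTPConnection(http.client.HTTPConnection):
    """An HTTPConnection that talks to a Unix domain socket instead of TCP."""

    def __init__(self, socket_path: str, timeout: float = 5.0) -> None:
        # "localhost" only fills the HTTP Host header; connect() below never resolves it.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self) -> None:
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def lxd_get(path: str, socket_path: str = DEFAULT_LXD_SOCKET) -> Dict[str, Any]:
    """Send GET <path> to the LXD daemon and return the decoded JSON response."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", path)
        response = conn.getresponse()
        return json.loads(response.read().decode("utf-8"))
    finally:
        conn.close()


def instance_names(response: Dict[str, Any]) -> List[str]:
    """Extract instance names from a listing whose metadata is a list of URLs."""
    return [url.rsplit("/", 1)[-1] for url in response.get("metadata") or []]
```

On a host running LXD, `instance_names(lxd_get("/1.0/instances"))` should return the names of the existing instances. Access to the socket usually requires root or membership of the `lxd` group.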

Applications of Linux containers

Thousands of companies use containers to streamline their DevOps workflows and to simplify the shipping pipeline from development to production. In 2015, the Open Container Initiative (OCI) was launched within the Linux Foundation “…for the express purpose of creating open industry standards around container formats and runtime.”

Over the years, Red Hat has been instrumental in promoting and supporting the growth and use of containers, among other open source technologies.

The inception and meteoric growth of Docker into a multi-billion-dollar business indicates the enormous potential this technology presents for businesses worldwide.

Production users of containers include thousands of businesses, from established giants such as Google, Facebook, Microsoft and Amazon to smaller companies seeking to establish a foothold in the market. Say what you will … containers are here to stay!