A semantic Web is a network in which data can be processed by machines. In other words, the data from the Web is machine readable because it is structured and tagged in such a way that machines can extract meaningful information in response to users’ queries. Social media sites use semantic Web algorithms to make suggestions and create associations between persons.

Since the advent of online publishing and the digital marketing of products and services, search engines have been used to get accurate information. Nowadays, most services and products are searched for by people from search engines, using different search tricks and specific keywords.

According to data analytics reports of InternetLiveStats.com, Google processes more than 40,000 search queries per second. Around 20 per cent of these queries are new, having never been entered in the search engine before. The major challenge with search engines is to get accurate results without irrelevant outcomes.

The semantic Web broadly refers to ‘a Web with meaning, using interconnections’. The ‘Web’ in a semantic Web is able to describe things in a way that computers can understand the actual meaning that resides in the search query or browsing behaviour.

The wonderful powers of the semantic Web can be seen on websites like Skyscanner.net and Trivago.com. These portals compare the real-time prices from different service provider portals and give the best results in the form of price comparisons. The back-end libraries of these portals communicate with different websites and then fetch the results so that users can see the minimum price of flights or hotels. The protocols of the semantic Web work with these websites to fetch the related information from multiple locations.

In the semantic Web, the information on objects (music, vehicles, cars, persons, tickets, etc) is stored in special format files, and intelligent Web applications that collect information from many different sources, analyse and present it to users in a meaningful way.

Whenever information about specific services, products, companies or objects is required, users navigate different search engines so that related websites can be fetched and the particular information can be found. One major issue with traditional search engines is that the information may be scattered and irrelevant.

For example, if a user enters the search keywords ‘gold today’ in the search bar, these may give many haphazard results including the following:

- Gold prices

- Star Gold

- Gold jewellers

- Gold loans

Similarly, the search word ‘Python’ may give results that include references to the snake, programmes about the python on Discovery Channel, as well as Python programming.

With such diverse search results, it becomes difficult for new users to make any sense of the results fetched. If what the user really wanted was information on Python programming alone (and not details about the snake), there was no way for the search engine to have deduced this, which was why the search results included a lot of results about the snake. This is why the concept and algorithms of the semantic Web are required.

The semantic Web is focused on understanding search queries on the basis of the syntax, semantics and actual meaning of the keywords entered by the user. The semantic Web assists the user to get the results in a categorised way.

For example, if a user types the keyword ‘Python’, the semantic Web search engine gives the search results under different categories, as shown below.

Category 1: Python programming:

Search result-1, Search result-2, Search result-3, Search result-4…..

Category 2: Python reptile:

Search result-1, Search result-2, Search result-3, Search result-4…..

Category 3: Python movie:

Search result-1, Search result-2, Search result-3, Search result-4…..

Speech based searches on Google implement semantic technology and speech recognition, whereby the meaning and context of the sentences are understood, based on which responses are given by the Google search engine. But in some cases, speech based searches also give results that may not be accurate.

Knowledge representation and ontologies

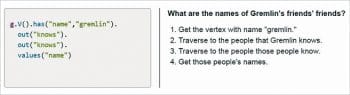

The technology of the semantic Web comprises knowledge representation and ontologies, which extract the actual meaning of the keywords entered by the user. The knowledge representation techniques used in the semantic Web are such that the database can be associated with the corresponding metadata, and the knowledge can be stored in the databases. The creation of ontology is required so that association in the data objects is possible.

| A knows B B knows C C is a friend of A B may be knowing D D met C D is a friend of A |

Limitations of websites without the semantic Web

Without the semantic Web, websites do not have accurate and interrelated information. In general, the base programming language of Web pages is HTML, in which different mark-ups are defined and then the websites are programmed. Yet, there are various limitations and disadvantages with HTML. One of its major limitations is that it is just a mark-up language to render and display Web pages on the Web browser. The text or content written in HTML does not have any relationship. This means that anything can be displayed on the Web browser using HTML, whether it has any association or not.

For example, text like ‘A is the spouse of B’ and ‘B is the son of A’ can also be displayed on a Web browser using simple HTML tags. But these types of associations do not occur using knowledge representation techniques in semantic Web based apps.

The main motive of the semantic Web app is to enable the machine to understand the content and its relationships, which are defined in the Web pages. Using semantic Web based knowledge representation, direct queries can be asked from the search engines like, “What is my date of birth?”, “Which is my birth place?” or any other. Using the semantic Web, the ontologies will scan the available information about the specific person from multiple sources and then give accurate results. In this manner, a Web based expert system can be developed using semantic Web technology.

There are different types of standards associated with knowledge representation and ontologies so that data definition and interrelationships are created.

The following are the data formats and standards of the semantic Web.

- XML: eXtensible Markup Language

- RDF: Resource Description Framework

- OWL: Web Ontology Language

- FOAF: Friend Of A Friend

- SPARQL: Recursively known as SPARQL Protocol and RDF Query Language

Friend Of A Friend (FOAF)

This standard defines the ontology in machine readable format so that the description of different objects and their relationships can be specified.

For example, as in social media portals, the relationships are defined and extracted by the algorithms. In Facebook, Twitter and Instagram, these associations are grouped and then predicted. Generally, in social media, users receive suggestions like, “You may know these persons,” or “You may be interested in following these people,” and so on. These types of relationships are extracted using FOAF profiles.

Whenever new information is uploaded by the user on social media, the relationships are built using ontology and FOAF. After that, people with the matching profile, and who have met the person at a specific place are extracted. And such people are the ones suggested to users — “You may know these people.”

The FOAF profile about a person can be created using the URL http://www.ldodds.com/foaf/foaf-a-matic.html.

The FOAF file will be created with RDF as follows:

<rdf:RDF xmlns:rdf=”http://www.w3.org/1999/02/22-rdf-syntax-ns#” xmlns:rdfs=”http://www.w3.org/2000/01/rdf-schema#” xmlns:foaf=”http://xmlns.com/foaf/0.1/” xmlns:admin=”http://webns.net/mvcb/”> <foaf:PersonalProfileDocument rdf:about=””> <foaf:maker rdf:resource=”#me”/> <foaf:primaryTopic rdf:resource=”#me”/> <admin:generatorAgent rdf:resource=”http://www.ldodds.com/foaf/foaf-a-matic”/> <admin:errorReportsTo rdf:resource=”mailto:leigh@ldodds.com”/> </foaf:PersonalProfileDocument> <foaf:Person rdf:ID=”me”> <foaf:name>Gaurav Kumar</foaf:name> <foaf:title>Mr</foaf:title> <foaf:givenname>Gaurav</foaf:givenname> <foaf:family_name>Kumar</foaf:family_name> <foaf:nick>GK</foaf:nick> <foaf:mbox_sha1sum>eccb3d9dd0cba386706462b4288acb0ff2678ab4</foaf:mbox_sha1sum> <foaf:homepage rdf:resource=”gauravkumarindia.com”/> <foaf:knows> <foaf:Person> <foaf:name>friend1</foaf:name> <foaf:mbox_sha1sum>4d2e2bea6b04249b226fe9b1761b32554f19e55d</foaf:mbox_sha1sum></foaf:Person></foaf:knows> <foaf:knows> <foaf:Person> <foaf:name>friend2</foaf:name> <foaf:mbox_sha1sum>c666c29d65fcd3461f8f81d3bc76e25802893e9f</foaf:mbox_sha1sum></foaf:Person></foaf:knows></foaf:Person> </rdf:RDF>

The FOAF file can be used as the database and matched with the other profiles using semantic Web protocols and algorithms.

The FOAF search engine (https://www.foaf-search.net/ ) is available and can be used to extract information about any person (Figure 1).

Open source tools and libraries for the semantic Web include:

- Apache TinkerPop: https://tinkerpop.apache.org

- RDFLib: https://github.com/RDFLib/rdflib

- Apache Jena: http://jena.apache.org/

- Protégé: https://protege.stanford.edu

- Sesame: http://www.openrdf.org/

- Linked Media Framework: https://code.google.com/p/lmf/

- Open Semantic Framework: http://opensemanticframework.org/

- D2R: http://d2rq.org/d2r-server

- Paget: http://code.google.com/p/paget/

- Semantic Media: http://semantic-mediawiki.org/wiki/Semantic_MediaWiki

Installing Apache TinkerPop on Windows

Apache TinkerPop is one of the multi-featured and powerful frameworks for the development and programming of semantic Web applications. Available as a free and open source distribution, it is primarily used for graph computing and ontology based programming, which is quite useful in online transaction processing and graph analysis in social media based applications.

The dynamic graph of people, and their relationships/relevance to a particular topic, can be created. These graphs are used for the predictive mining of relationships in social media, as shown in Figures 3 and 4.

RDFLib: Python based library for the semantic Web

RDFLib is a powerful Python package for RDF semantic Web programming. It contains the standards required to work with RDF. It includes the parsers and serialisers for RDF/XML, NTriples, N-Quads, N3, RDFa, Turtle, TriX, Microdata and many others. RDFLib has the Graph interface so that the creation of connections and visualisation is quite easy to implement.

The features of RDFLib are:

- Loads and saves RDF

- Creates RDF triples and relationships

- Navigates graphs

- Merges graphs

- Name spaces

- Bindings

- Interconnections

- Querying with SPARQL

- Utilities and convenience functions

Installing RDFLib with Python

On Anaconda Prompt, the following instruction can be executed for the installation of RDFLib:

Anaconda Prompt > pip install rdflib

After this, the RDFLib functions will be available for programming with the semantic Web.

Python Programming with RDF import rdflib g=rdflib.Graph() g.load(‘http://dbpedia.org/resource/Semantic_Web’) for s,p,o in g: print (s,p,o)

Querying with SPARQL

The SPARQL query is used for the mapping of a FOAF file with the Python code so that the dynamic extraction of data from the FOAF RDF file can be done. Here, the FOAF RDF file myfoaf.rdf is used as the back-end database. To query this database, the SPARQL query is fired rather than SQL.

import rdflib

mygraph = rdflib.Graph()

mygraph.parse(“myfoaf.rdf”)

mygraph qres = mygraph.query(

“””SELECT DISTINCT ?fname ?lname

WHERE {

?a foaf:knows ?b .

?a foaf:name ?fname .

?b foaf:name ?lname .

}”””)

for myrow in qres:

print(“%s knows %s” % row)

In traditional RDBMS databases, an SQL query is used for query processing. In case of semantic Web applications, the SPARQL query extracts the information associated from the ontology file and FOAF association data set. This type of data extraction is used to extract hidden associations for digital forensic applications.

Scope of research and development

Semantic Web programming is typically dependent on the associations and relationships mentioned in RDF, FOAF and OWL. These knowledge representation standards and protocols can be enhanced so that hidden fingerprints in the data items and their relationships can be associated. Using this approach, indirect relationships about a specific person or object in the database can be extracted.