Deep learning is an approach in which a machine imitates the network of neurons in the human brain. It comprises algorithms that allow machines to be trained to perform tasks such as computer vision, speech recognition and natural language processing.

The demand for deep learning applications, such as image processing, signal recognition and data mining, in industries such as aerospace and defence, automotive, healthcare, IT and telecommunications, is expected to drive the growth of the market across the globe.

Computer vision is the most popular deep learning application used across the industry. Pattern recognition, optical character recognition, code recognition, facial recognition, object recognition, natural language processing and digital image processing are all driving the demand for deep learning.

Industry adoption of deep learning

The global deep learning market is expected to reach US$ 18.16 billion by 2023.

In the APAC region, deep learning is growing as this technology is used not only in electronic products such as smartphones, tablets and PCs but also for medical and automotive products. The global deep learning market is poised to grow by US$ 7.2 billion during 2020-2024, at a CAGR of 45 per cent during the forecast period.

Let us now look at the drivers and characteristics of deep learning. We will also explore the open source options for various deep learning models.

About deep learning

Advances in technology and hardware are making the development and deployment of deep learning based solutions relatively simple, and these are driving growth. There are two main branches in deep learning: discriminative modelling and generative modelling.

Discriminative modelling uses deep neural networks with hierarchical and supervised learning to predict the target. Generative modelling also uses deep neural networks but with competitive and unsupervised learning.

The key factors driving deep learning adoption are:

- The requirement for sophisticated computer vision applications in various fields like genetics, robotics, etc

- The requirement for complex predictive deep learning models across various industries

- Advances in speech and natural language processing

- Autonomous vehicle control

- The capability to process data gathered from connected devices

- Next generation (intelligent) industrial revolution

Deep learning requires large amounts of data (labelled, unlabelled or semi-labelled) for training and testing, and for arriving at accurate predictions. For example, driverless car development requires millions of images and thousands of hours of video.

Deep learning requires huge computing power. High-performance GPUs have a parallel architecture that is efficient for deep learning. When combined with clusters or cloud computing, this enables development teams to reduce the training time for a deep learning network from weeks to hours or less. For example, with suitable data sets and network architectures available, multi-object detection and tracking can run in real time with higher accuracy and speed.

Considerable research is under way in many areas of deep learning, such as self-driving cars, genetics, medical diagnosis and connected devices.

The characteristics of deep learning are:

- Requires large amounts of data and compute power

- Automatic feature extraction (feature learning)

- Automatic adjustment of network weights (backpropagation)

- Trainable with custom data (transfer learning)

- Works well with both structured and unstructured data

- Hierarchical feature learning
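To make the backpropagation item in the list above more concrete, here is a minimal sketch in plain Python, with no framework so that it stays dependency-free. It trains a single sigmoid neuron on the logical OR function by repeatedly running a forward pass, measuring the error and nudging the weights against the gradient. The function names, the learning rate and the epoch count are illustrative assumptions rather than part of any library; in practice a framework such as TensorFlow or Keras would compute these gradients automatically across many layers.

```python
import math


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, inputs):
    """Forward pass: weighted sum of the inputs followed by the activation."""
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)


def train_neuron(samples, epochs=2000, learning_rate=0.5):
    """Fit one sigmoid neuron with per-sample gradient descent.

    samples is a list of (inputs, target) pairs. The loss is the squared
    error 0.5 * (output - target) ** 2.
    """
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            output = predict(weights, bias, inputs)
            # Chain rule: d(loss)/d(z) = (output - target) * sigmoid'(z)
            delta = (output - target) * output * (1.0 - output)
            # Move each weight a small step against its gradient.
            weights = [w - learning_rate * delta * x
                       for w, x in zip(weights, inputs)]
            bias -= learning_rate * delta
    return weights, bias


# Labelled training data for logical OR (illustrative only).
OR_SAMPLES = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 1)]

weights, bias = train_neuron(OR_SAMPLES)
for inputs, target in OR_SAMPLES:
    print(inputs, target, round(predict(weights, bias, inputs), 3))
```

The same forward-error-update loop is what a deep network runs, except that the error signal is propagated backwards through every layer in turn.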

Types of deep learning

We will primarily focus on the discriminative models and will touch upon generative models briefly.

Supervised models: Like supervised machine learning models, supervised deep learning models use labelled/semi-labelled data to train. These fall into the discriminative models category. Given below are various models.

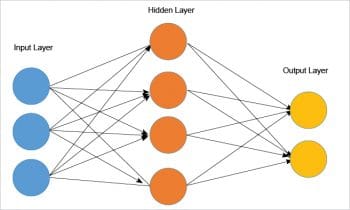

- Multi-layer perceptron (or classical neural network): This is a comparatively shallow model with very few hidden layers, in which every layer is fully connected to the next. It is applicable to problems like regression and classification.

- Convolutional neural networks: Convolutional neural networks (CNNs) are deep neural networks that consist of an input layer, multiple hidden layers and an output layer. The hidden layers are convolutional layers, pooling layers and fully connected layers. This model can handle complex data processing as well as unstructured data like images, voice, etc.

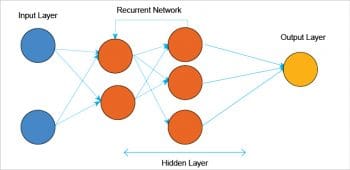

- Recurrent neural networks: A recurrent neural network (RNN) is designed to accept a series of inputs of variable sizes. Here, a series means a set of related inputs that influence/relate to one another. RNNs operate on a hidden state with shared weights for predictions. This model is used for sentiment analysis, NLP, etc.
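Of the layer types named in the list above, the convolutional and pooling layers of a CNN are probably the least intuitive. The following is a minimal plain-Python sketch of what they compute, not how any framework implements them. The helper names, the 5x5 image and the hand-written edge-detecting kernel are assumptions made for illustration; in a real CNN the kernel values are learned during training.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (stride 1, no padding).

    Each output value is the sum of element-wise products between the
    kernel and the image patch beneath it.
    """
    k_h, k_w = len(kernel), len(kernel[0])
    out_h = len(image) - k_h + 1
    out_w = len(image[0]) - k_w + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(k_h) for j in range(k_w))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(feature_map):
    """Keep positive responses and set negative ones to zero."""
    return [[max(0, value) for value in row] for row in feature_map]


def max_pool(feature_map, size=2):
    """Downsample by keeping the largest value in each size x size window."""
    out_h = len(feature_map) // size
    out_w = len(feature_map[0]) // size
    return [
        [
            max(feature_map[r * size + i][c * size + j]
                for i in range(size) for j in range(size))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# A 5x5 image that is dark (0) on the left and bright (1) on the right.
IMAGE = [[0, 0, 1, 1, 1] for _ in range(5)]

# A hand-written kernel that responds to dark-to-bright vertical edges.
KERNEL = [[-1, 1],
          [-1, 1]]

feature_map = relu(conv2d(IMAGE, KERNEL))  # 4x4 map, strong where the edge is
pooled = max_pool(feature_map)             # 2x2 summary of the feature map

print(feature_map)
print(pooled)
```

The feature map is non-zero only in the column where the edge sits, and pooling keeps that response while shrinking the map. Stacking several such stages before the fully connected layers is what gives a CNN its hierarchical features.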

Unsupervised models: Unsupervised models are used for sample generation, market segmentation, and to cluster/segment large data sets without labels. These fall in the deep generative models category. The various models are given below.

- Self-organising maps (SOM): A self-organising map is a model trained using unsupervised learning to produce a low dimensional representation of the input data. It is used for dimensionality reduction.

- Boltzmann machines (BM): Boltzmann machines are non-deterministic (stochastic) generative deep learning models with only two types of nodes: hidden and visible. The nodes can capture the parameters, patterns and correlations in the input data. These models are used to generate synthetic data sets close to the target ones, and for feature identification on large data sets.

Another variation of the Boltzmann machine is a restricted Boltzmann machine (RBM) used for dimensionality reduction, classification, regression, collaborative filtering, feature learning and topic modelling.
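As a rough illustration of how a self-organising map reduces dimensionality without labels, the sketch below trains a tiny one-dimensional map of four nodes on three-dimensional points. It is written in plain Python under several assumptions: the function names, the linear decay schedule, the Gaussian neighbourhood and the two hand-made clusters are all illustrative choices, not a reference implementation.

```python
import math
import random


def best_matching_unit(nodes, sample):
    """Return the index of the node whose weights are closest to the sample."""
    return min(
        range(len(nodes)),
        key=lambda i: sum((w - x) ** 2 for w, x in zip(nodes[i], sample)),
    )


def train_som(samples, n_nodes=4, epochs=50, learning_rate=0.5,
              radius=1.0, seed=0):
    """Train a 1-D self-organising map and return its node weight vectors."""
    rng = random.Random(seed)
    dim = len(samples[0])
    nodes = [[rng.random() for _ in range(dim)] for _ in range(n_nodes)]
    for epoch in range(epochs):
        # Shrink the step size and the neighbourhood as training proceeds.
        decay = 1.0 - epoch / epochs
        step = learning_rate * decay
        sigma = max(radius * decay, 1e-3)
        for sample in samples:
            bmu = best_matching_unit(nodes, sample)
            for i, node in enumerate(nodes):
                # Nodes near the winner on the grid move more than distant ones.
                influence = math.exp(-((i - bmu) ** 2) / (2 * sigma ** 2))
                for d in range(dim):
                    node[d] += step * influence * (sample[d] - node[d])
    return nodes


# Two unlabelled groups of 3-D points (made up for this example).
CLUSTER_A = [[0.1, 0.1, 0.1], [0.2, 0.1, 0.1], [0.1, 0.2, 0.2]]
CLUSTER_B = [[0.9, 0.9, 0.8], [0.8, 0.9, 0.9], [0.9, 0.8, 0.9]]

nodes = train_som(CLUSTER_A + CLUSTER_B)

# Each 3-D point is now summarised by a single grid index.
codes_a = {best_matching_unit(nodes, sample) for sample in CLUSTER_A}
codes_b = {best_matching_unit(nodes, sample) for sample in CLUSTER_B}
print(codes_a, codes_b)
```

Every three-dimensional point ends up represented by one position on the map, and points from the two groups land on different positions even though no labels were supplied.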

Deep learning architectures and data sets

The various deep learning architectures are based on discriminative models and generative models. Discriminative models are widely used by the industry, while the generative models are still evolving. Let’s examine the key features of these models.

Discriminative models: Here, we explore the architectures of a few discriminative models like CNN and RNN with respect to computer vision and speech, or text processing.

- Convolutional neural network (CNN) based models: The architecture is designed based on the number of layers the model has, as well as a high-level description of the layers and techniques used in the network. A typical diagram of the CNN based model will look like what’s shown in Figure 1.

This figure depicts a two-layer neural network with three inputs: one hidden layer of four neurons and one output layer of two neurons. AlexNet, VGG and ResNet are variations of the base CNN architecture that differ in how the network layers are stacked and in the new network elements they introduce.

- Recurrent neural network (RNN) based models: These are designed to act upon series of data with variable length. They remember the previous input (using an in-memory state), which is then used along with the input at the next step. They are best suited for speech and text processing applications, including those based on natural language understanding (NLU). A widely used variation of the RNN is the long short-term memory (LSTM) network.

- Generative models: Generative adversarial networks (GAN) are generative deep neural network models intended to produce or generate new content from the given training data.

- Data sets: The data sets are used for training the neural network. Generally, the training needs large data sets, which are used for image processing, natural language processing (NLP) and speech processing. The most popular data sets are COCO, ImageNet and Open Images.
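The way an RNN, as described in the list above, carries its in-memory state from one step to the next can be sketched in a few lines of plain Python. This is a bare Elman-style forward pass with hand-picked weights, assumed purely for illustration; the names `rnn_forward`, `W_INPUT`, `W_HIDDEN` and `BIAS` are hypothetical, training is omitted, and the gating machinery of an LSTM is not shown.

```python
import math


def rnn_forward(sequence, w_input, w_hidden, bias):
    """Run a minimal recurrent layer over a sequence of scalar inputs.

    The same weights are reused at every step, and the hidden state carries
    information from earlier inputs forward. Returns the final hidden state.
    """
    hidden_size = len(bias)
    hidden = [0.0] * hidden_size
    for x in sequence:
        hidden = [
            math.tanh(
                w_input[i] * x
                + sum(w_hidden[i][j] * hidden[j] for j in range(hidden_size))
                + bias[i]
            )
            for i in range(hidden_size)
        ]
    return hidden


# Hand-picked weights for a hidden state of size 2 (illustrative only).
W_INPUT = [0.5, -0.3]
W_HIDDEN = [[0.1, 0.2],
            [0.0, 0.4]]
BIAS = [0.0, 0.0]

short_state = rnn_forward([1.0, 0.0], W_INPUT, W_HIDDEN, BIAS)
long_state = rnn_forward([1.0, 0.0, 0.5, 0.5, 1.0], W_INPUT, W_HIDDEN, BIAS)
swapped_state = rnn_forward([0.0, 1.0], W_INPUT, W_HIDDEN, BIAS)

print(short_state, long_state, swapped_state)
```

Sequences of different lengths produce a state of the same size, and feeding the same values in a different order yields a different state, which is why this family of models suits speech and text.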

The applicability of deep learning architectures to industrial domains

The following are some of the industrial domains to which deep learning architectures are applicable.

- Healthcare: Deep learning and its innovations are advancing the future of precision medicine and health management. Breast cancer and skin cancer diagnosis are just a few examples of deep learning in healthcare. In the years to come, computer aided diagnosis will play a major role in this domain.

- Computer vision and pattern recognition: Image detection, segmentation, multi-object detection, and tracking on videos and on live streams, are a few examples of using deep learning.

- Computer games, robots: Advances in deep reinforcement learning are creating the possibilities of introducing AI into games (like Go) and robots.

- Automated driving: This includes computer vision, reinforcement learning and many other subjects used in the control of autonomous vehicles.

- Voice-activated intelligent assistants: Apple’s Siri, Google Now and Microsoft’s Cortana are a few examples of deep learning in voice search and voice-activated intelligent assistants.

- Advertising: Deep learning enables publishers and ad networks to leverage their content to create data-driven predictive advertising, precisely targeted advertising, and much more.

- Predicting natural calamities: Predicting natural hazards and setting up a deep learning based emergency alert system will play an important role in the coming years.

- Finance: Analysing trading strategies, reviewing commercial loans and form contracts, and preventing cyber-attacks are examples of deep learning in the finance industry.

- Aerospace and defence: Deep learning is used to detect, locate and mask objects in imagery from satellites that survey areas of interest, and to identify safe or unsafe zones for troops.

Open source frameworks for deep learning

Here is a brief introduction to a few open source frameworks for deep learning.

TensorFlow: This is an end-to-end framework for deep learning that offers both low-level and high-level APIs. It can handle deep neural networks for image recognition, handwriting classification, recurrent neural networks, natural language processing (NLP), word embeddings and partial differential equation (PDE) simulations.

Keras: This simple, high-level framework is used for constructing neural networks and machine learning projects. It runs on top of back ends such as TensorFlow and Theano, can be used from R, and runs efficiently on CPUs and GPUs.

It offers standalone modules including optimisers, neural layers, activation functions, initialisation schemes, cost functions and regularisation schemes.

Caffe: This deep learning framework provides C++, Python and MATLAB interfaces as well as a command line interface. It offers high speed and transposability, and it can be used in modelling convolutional neural networks (CNNs).

It is very important to choose the right model, architecture and open source deep learning framework for industrial solutions. This is a great challenge, though, because of the plethora of options available in the market.

As a first step, analyse the business problem and outline the solution. Break the solution into services and understand the models needed for these services. This will help you narrow down the architecture choices.

A key challenge in adopting deep learning technology is the growing hardware complexity demanded by the complex algorithms used. Besides, the standards and protocols are not yet well established and are still evolving.

In addition, there is a significant shortage of technical expertise in the industry. To help fill this gap, several companies provide installation, training and support for deep learning systems, along with online assistance and maintenance of deep learning software and the required services.