The KNIME (Konstanz Information Miner) analytics platform is perhaps the strongest and most comprehensive free platform for drag-and-drop analytics, machine learning, statistics, and ETL (extract, transform and load) models. This article introduces a supervised machine learning decision tree algorithm through the KNIME tool for a car evaluation data set.

Data pre-processing, modelling, analysis, and visualisation are all enabled within KNIME. The workflows can run both through the interactive interface and also in batch mode. These two setups allow for easy local job management and regular process execution. KNIME integrates various components for machine learning and data mining through its modular data pipelining ‘Lego of Analytics’ concept.

Let us look at a supervised machine learning decision tree algorithm through the KNIME tool for the car evaluation data set. Decision trees are created for the car model based on the technical features, pricing criteria (overall cost, maintenance cost), comfort, size of luggage boot, safety, passenger capacity, etc. The decision tree learner node and the predictor nodes are connected with both trained data and test data for training and evaluating the model with better output. Then the decision tree predictor is configured with the scorer node to generate the required evaluation performance metrics and to report data for user interface visualisation.

KNIME installation

To start with, we will first install KNIME. Download the KNIME desktop application from https://www.knime.com/ and install it as per the instruction on the screen. You will have to provide the necessary permissions during the setup process.

Decision tree

A decision tree is a decision support tool that uses a tree-like model of decisions and their possible consequences, including chance event outcomes, resource costs, and utility. It is one way to construct a model that contains conditional control statements. Decision trees are used for handling non-linear data sets effectively. The decision tree tool is used in real life in many fields, such as engineering, civil planning, law, and business.

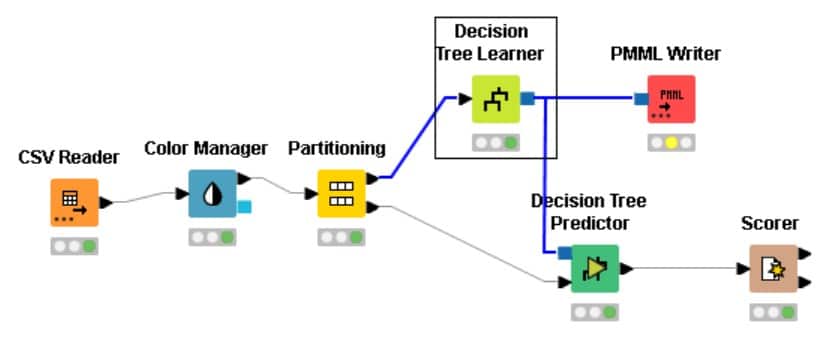

Figure 1 lists the nodes that we will be using to create the decision tree.

Designing the supervised ML car evaluation decision tree model

Here are the steps needed to create a decision tree model for car evaluation.

- Create a new workflow for the car evaluation model as seen in Figure 2.

- Drag the .csv file into KNIME to create and initialise the CSV reader node.

- Connect it to a colour manager, where each genre can be assigned a specific colour under swatches or a preset under palettes.

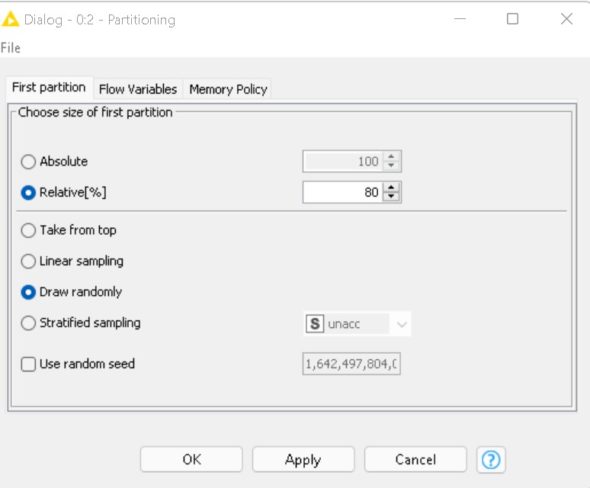

- Connect the colour manager to the partitioning node, which is used to separate the given data set into two partitions — one consisting of 80 per cent of the data and the other consisting of the remaining 20 per cent of the data. Thus the data is partitioned into two sets for testing and training data in an 80:20 ratio. This partition is done by choosing the randomly option.

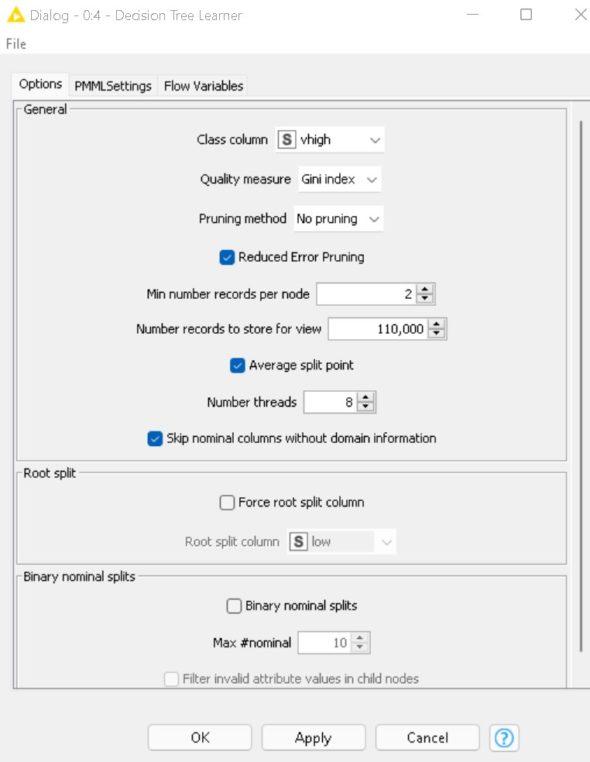

- Connect the partitioning node to the decision tree learner. Configure it as a class column as units, and change the records as per the required analysis.

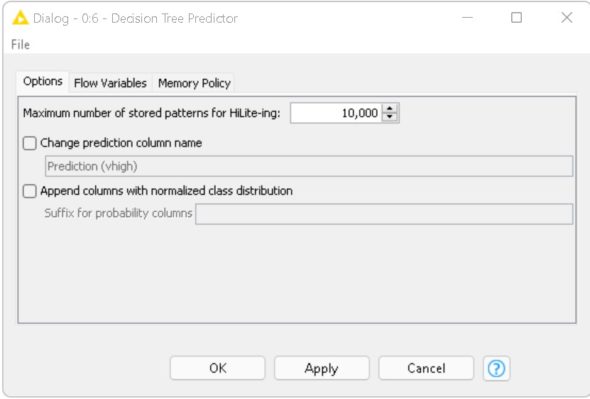

- Connect the decision tree learner to the decision tree predictor. Send the smaller partition (test data) to the decision tree learner in order to classify the values, which can then be predicted by the decision tree predictor.

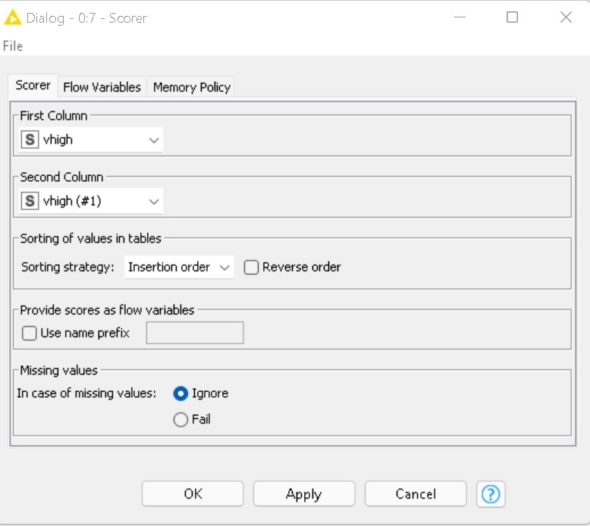

- Finally, send the output to the scorer node to measure the performance metric score. Compile and execute all the nodes at each stage of connection to get proper outputs.

Now, to view the output as a report, connect it to the PMML writer node.

Node configurations

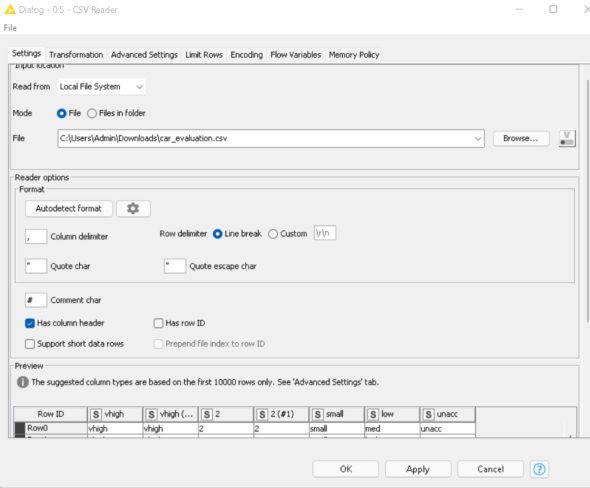

CSV reader: This node reads CSV files. You can also use this node if the workflow is created in a server or batch environment, and where the structure of the input files changes between different invocations. The car evaluation data set is loaded using the CSV reader.

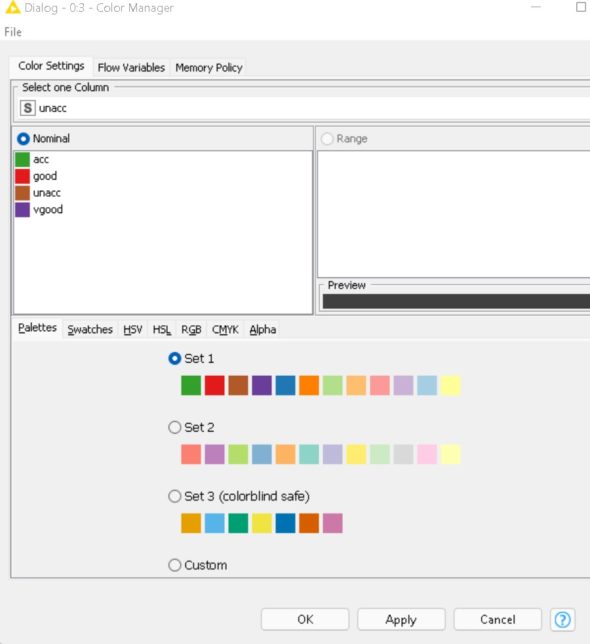

Colour manager node: Colours can be assigned for either nominal (possible values have to be available) or numeric columns (with lower and upper bounds). If these bounds are not available, a ‘?’ is provided as a minimum and maximum value. The values are then computed during execution. If a column attribute is selected, the colour can be changed with the colour chooser.

Partitioning node: The input table is split into two partitions (i.e., row-wise), e.g., train and test data. In this case, it is 80 per cent training and 20 per cent testing.

Decision tree learner node: This node induces a classification decision tree in the main memory. The target attribute must be nominal. The other attributes used for decision making can be either nominal or numerical. Numeric splits are always binary (two outcomes), dividing the domain into two partitions at a given split point. Here the target variable is ‘Evaluation’

Decision tree predictor node: This node uses an existing decision tree (passed in through the model port) to predict the class value for new patterns.

Scorer node: This node compares two columns by their attribute-value pairs and shows the confusion matrix, i.e., how many rows of which attribute and their classifications match. Additionally, it is possible to highlight cells of this matrix to determine the underlying rows. Here the accuracy achieved is 95.6 per cent.

Visualisation output

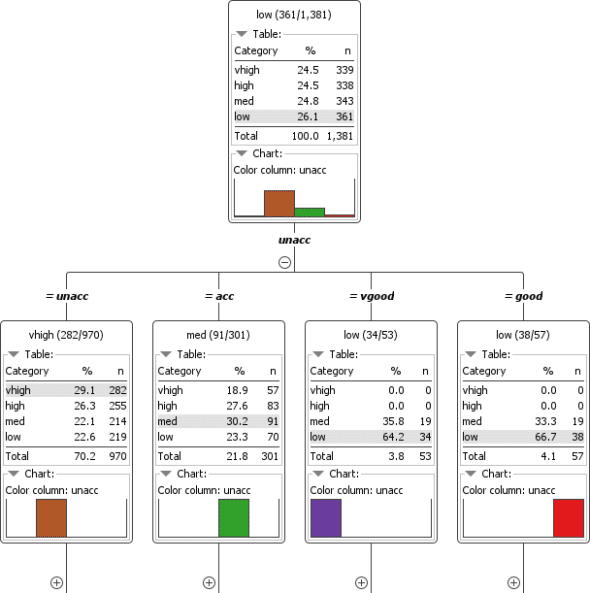

Figure 8 demonstrates the decision tree output of the car evaluation model, which visualises the learned decision tree. Based on the selections, the tree can be expanded and collapsed with the plus/minus signs.

As with the splitting criteria, the attribute name of the parent node was split and its numeric value and nominal set value were represented to this child. The value in round brackets states (x of y), where x is the quantity of the majority class and y is the total count of examples in this model.

The PMML (predictive model markup language) node is used to transmit models between nodes. PMML ensembles models through reader and writer nodes for importing and exporting them with other software. Figure 9 gives the visualisation report of the car evaluation model.