Tekton is an open source, Kubernetes-native framework for building, testing, and deploying applications. It runs inside Kubernetes or one of its variants, and helps make DevOps workflows more efficient.

DevOps and Kubernetes (often referred to as DevOps with Kubernetes) complement each other to enable efficient and scalable software development, deployment, and management in modern cloud-native environments.

Combining Kubernetes' container orchestration capabilities with DevOps practices leads to improved collaboration, shorter development cycles, and better application performance. By using Kubernetes in a DevOps workflow, organisations can deliver software faster, more reliably, and at greater scale.

Kubernetes offers several advantages when integrated with DevOps practices.

Agility and speed: Faster application deployments and updates enable swift responses to market demands and changes.

Scalability: Automatic horizontal scaling handles varying workloads efficiently.

Reliability and resilience: Self-healing capabilities and node management ensure continuous application availability.

Consistency and portability: Abstracting infrastructure allows consistent deployments across diverse environments.

Infrastructure as Code (IaC): Kubernetes resources defined as code enable version-controlled and repeatable infrastructure management.

CI/CD integration: Kubernetes integrates with CI/CD pipelines, streamlining the software delivery process.

GitOps: Version-controlled configurations ensure reliable and consistent application state management.

Microservices and service mesh: Suitable for microservices architectures, enhanced by service mesh for observability and security.

Resource utilisation: Optimises resource allocation, leading to cost savings and efficient cloud usage.

Observability: Extensive monitoring and logging capabilities provide insights into application and infrastructure health.

Multi-cloud and hybrid cloud support: Cloud-agnostic nature enables seamless deployments across various platforms.

Collaboration and communication: Encourages cross-functional collaboration, allowing developers to focus on application logic while operations handle infrastructure.
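To make a few of these points concrete (resources defined as code, scaling, and rolling updates), here is a minimal sketch of a Kubernetes Deployment manifest. The application name, image, port, and health endpoint are hypothetical placeholders and not part of any real project.

```yaml
# Hypothetical Deployment: all names, the image, and the /healthz path are placeholders.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: demo-web
  labels:
    app: demo-web
spec:
  replicas: 3                 # desired state; Kubernetes replaces pods that fail
  strategy:
    type: RollingUpdate       # new versions are rolled out gradually
    rollingUpdate:
      maxUnavailable: 1
      maxSurge: 1
  selector:
    matchLabels:
      app: demo-web
  template:
    metadata:
      labels:
        app: demo-web
    spec:
      containers:
        - name: web
          image: registry.example.com/demo-web:1.0.0
          ports:
            - containerPort: 8080
          resources:
            requests:         # requests help the scheduler place pods efficiently
              cpu: 100m
              memory: 128Mi
          readinessProbe:     # traffic is sent only to pods that report ready
            httpGet:
              path: /healthz
              port: 8080
```

Because the file describes the desired state rather than the steps to reach it, it can be kept in Git, reviewed, and applied repeatedly to different clusters.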

Problems of DevOps in Kubernetes

DevOps in Kubernetes comes with its own set of challenges due to the complexities of managing containerised environments and orchestrating microservices. Here are some common problems faced in DevOps with Kubernetes.

Steep learning curve: Kubernetes has a steep learning curve, especially for teams new to container orchestration and cloud-native technologies.

Infrastructure as Code (IaC): Writing and managing Kubernetes configurations as code (YAML manifests) can become complex, especially when dealing with large and distributed applications.

Versioning and rollbacks: Managing versioning and rollbacks of Kubernetes deployments can be challenging, especially when multiple microservices are involved.

Service discovery and load balancing: Properly managing service discovery and load balancing for microservices can be complex, especially in dynamic and distributed environments such as Kubernetes and its distributions and managed services (OpenShift, ROSA, EKS, AKS, and GKE).

Monitoring and observability: Setting up comprehensive monitoring and observability solutions for Kubernetes clusters and microservices can be daunting, requiring the integration of multiple tools.

Scalability and resource management: Scaling microservices dynamically while optimising resource allocation requires careful planning and continuous monitoring.

Secret management: Handling sensitive information like API keys, passwords, and certificates securely in Kubernetes can be tricky.

Application configuration: Managing application configurations across different environments consistently can be challenging, especially when multiple configurations are needed for various microservices.

Continuous delivery pipelines: Designing efficient and robust CI/CD pipelines for Kubernetes deployments requires a deep understanding of container images, Helm charts, and Kubernetes manifests.

Security and compliance: Ensuring security best practices and compliance standards are met in a containerised environment like Kubernetes can be complex.

Resource cleanup: Cleaning up unused resources and ensuring proper resource management in Kubernetes clusters can become a challenge, especially when dealing with dynamic scaling and frequent deployments.

DevOps tools

DevOps tools like Terraform, Ansible, Jenkins, AWS CodePipeline, AWS CodeDeploy, and Azure DevOps are widely used and powerful for traditional infrastructure and application management. They work well for provisioning infrastructure and configuring cloud-based applications, but they can run into the following challenges when used with Kubernetes.

Paradigm mismatch: Several of the DevOps tools mentioned above are driven largely by procedural, step-by-step workflows, whereas Kubernetes is declarative. This difference can lead to challenges in mapping workflows and resource management between these tools and Kubernetes.

Resource management: DevOps tools often have their own resource management mechanisms, which can conflict with Kubernetes resource management.

Kubernetes native concepts: Some of the previously mentioned tools might not fully leverage Kubernetes-native concepts and features, limiting their potential to take advantage of Kubernetes capabilities.

Kubernetes manifests: Kubernetes resources are defined in YAML manifests, which might not be natively supported by some DevOps tools, making it challenging to manage and update Kubernetes configurations.

Secret management: Handling Kubernetes secrets securely is crucial, and integrating this process seamlessly with the above DevOps tools might require additional effort.

Rolling updates and rollbacks: Kubernetes provides built-in support for rolling updates and rollbacks. Integrating these capabilities effectively with these DevOps tools can be complex, especially if the DevOps tools are not Kubernetes-aware.

Service mesh integration: Kubernetes service mesh technologies like Istio or Linkerd might not be well-integrated with these DevOps tools, leading to difficulties in managing advanced networking features.

Monitoring and observability: Some DevOps tools may not have native integrations with Kubernetes monitoring and observability solutions, making it harder to gain insights into the health and performance of Kubernetes deployments.

Dynamic environment changes: Kubernetes is designed to handle dynamic environments with ease, but this can pose challenges for some DevOps tools that are more suited to static environments.

Scalability and self-healing: While Kubernetes excels at dynamic scaling and self-healing, integrating these features effectively with some DevOps tools might require additional configurations and adjustments.

Continuous integration and continuous delivery (CI/CD) pipelines have become a cornerstone of modern software development practices. Among the numerous CI/CD solutions available, Tekton Pipelines stands out as a powerful and flexible platform, enabling teams to automate and streamline their software delivery workflows. We will explore the features, benefits, and applications of Tekton Pipelines, and how it changes the software delivery process on Kubernetes.

Tekton

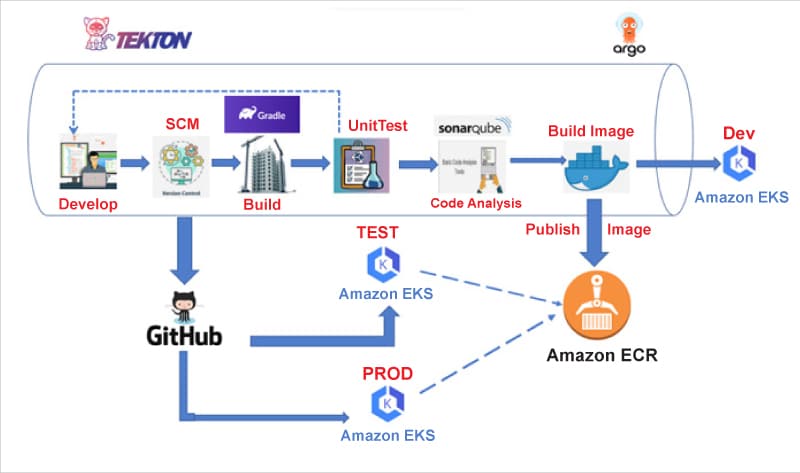

Tekton is an open source, cloud native, Kubernetes-native framework for building, testing, and deploying applications. It consists of Tekton Pipelines, which provides the building blocks, and supporting components, such as Tekton CLI and Tekton Catalog, that make Tekton a complete ecosystem. Tekton runs inside Kubernetes or its variants, such as AWS ROSA.

Tekton provides several benefits to builders and users of CI/CD systems.

Customisable: Tekton entities are fully customisable, allowing for a high degree of flexibility. Platform engineers can define a highly detailed catalogue of building blocks for developers to use in a wide variety of scenarios.

Reusable: Tekton entities are fully portable; so once defined, anyone within the organisation can use a given pipeline and reuse its building blocks. This allows developers to quickly build complex pipelines without ‘reinventing the wheel’.

Expandable: Tekton Catalog is a community-driven repository of Tekton building blocks. You can quickly create new and expand existing pipelines using pre-made components from the Tekton Catalog.

Standardised: Tekton installs and runs as an extension on your Kubernetes cluster and uses the well-established Kubernetes resource model. Tekton workloads execute inside Kubernetes containers.

Scalable: To increase your workload capacity, you can simply add nodes to your cluster. Tekton scales with your cluster without the need to redefine your resource allocations or any other modifications to your pipelines.

As a Kubernetes-native solution, Tekton integrates directly with Kubernetes clusters. Developers define and execute pipelines as Kubernetes custom resources (whose types are registered through Custom Resource Definitions, or CRDs), making it easy to manage and monitor pipelines alongside other Kubernetes resources.

Tekton pipelines embrace a modular approach to CI/CD, where tasks can be combined into customisable pipelines. This modularity fosters reusability and simplifies the maintenance of complex workflows. Teams can share and collaborate on tasks, promoting a culture of automation and efficiency.

These pipelines follow a declarative approach, allowing developers to specify what they want to achieve without being concerned with the underlying implementation details. This abstraction enhances pipeline maintainability and readability. Moreover, because pipelines are declared as Kubernetes resources, their definitions can be kept under version control, which helps them remain consistent over time.

Tekton pipelines leverage Kubernetes’ scalability and isolation capabilities. Each task in a pipeline runs in its own pod, with each step of the task in a separate container, providing isolation and security. Teams can easily scale pipelines based on demand, ensuring optimal resource utilisation.

Tekton integrates well with a wide array of tools and platforms, including Kubernetes, Helm, Git, and more. This enables teams to create end-to-end delivery pipelines using their preferred tools while leveraging Tekton’s core capabilities.

Tekton components

The Tekton ecosystem comprises various components that serve as the foundation for creating efficient and flexible CI/CD pipelines. These components seamlessly integrate within Kubernetes environments to orchestrate complex workflows with ease.

Tekton Pipelines is the foundation of Tekton. It defines a set of Kubernetes custom resources that act as building blocks from which you can assemble CI/CD pipelines.

Tekton Triggers allows you to instantiate pipelines based on events. For example, we can trigger the instantiation and execution of a pipeline every time a PR is merged against a GitHub repository.

Tekton CLI provides a command-line interface called tkn, built on top of the Kubernetes CLI, which allows you to interact with Tekton.

Tekton Dashboard is a web-based graphical interface for Tekton Pipelines that displays information about the execution of your pipelines. It is currently a work-in-progress.

Tekton Catalog is a repository of high-quality, community-contributed Tekton building blocks (tasks, pipelines, and so on) that are ready for use in your own pipelines.

Tekton Hub is a web-based graphical interface for accessing the Tekton Catalog.

Tekton Operator is an implementation of the Kubernetes Operator pattern that allows you to install, update, and remove Tekton projects on your Kubernetes cluster.

Tekton Chains provides tools to generate, store, and sign provenance for artifacts built with Tekton Pipelines.
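As a rough illustration of how Tekton Triggers can tie an event to a pipeline, the sketch below wires a GitHub push webhook (which is also what a merged PR produces on the target branch) to a new PipelineRun. All resource names (github-push-listener, tekton-triggers-sa, demo-pipeline, and so on) are hypothetical, the payload fields assume GitHub's push event format, and the service account is assumed to already hold the permissions that Triggers requires. Event filtering and webhook validation are left out for brevity.

```yaml
# Hypothetical names throughout; demo-pipeline is assumed to declare
# the params repo-url and revision.
apiVersion: triggers.tekton.dev/v1beta1
kind: TriggerBinding
metadata:
  name: github-push-binding
spec:
  params:
    # Values are extracted from the JSON body of the webhook request.
    - name: git-repo-url
      value: $(body.repository.clone_url)
    - name: git-revision
      value: $(body.head_commit.id)
---
apiVersion: triggers.tekton.dev/v1beta1
kind: TriggerTemplate
metadata:
  name: demo-pipeline-template
spec:
  params:
    - name: git-repo-url
    - name: git-revision
  resourcetemplates:
    # One PipelineRun is created for every accepted event.
    - apiVersion: tekton.dev/v1
      kind: PipelineRun
      metadata:
        generateName: demo-pipeline-run-
      spec:
        pipelineRef:
          name: demo-pipeline
        params:
          - name: repo-url
            value: $(tt.params.git-repo-url)
          - name: revision
            value: $(tt.params.git-revision)
---
apiVersion: triggers.tekton.dev/v1beta1
kind: EventListener
metadata:
  name: github-push-listener
spec:
  serviceAccountName: tekton-triggers-sa
  triggers:
    - name: on-push
      bindings:
        - ref: github-push-binding
      template:
        ref: demo-pipeline-template
```

The EventListener exposes an HTTP endpoint inside the cluster; the Git hosting service would need to be pointed at that endpoint, typically through an ingress or route.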

We can work with Tekton using the tkn CLI, kubectl, or the Tekton APIs (currently available only for Pipelines and Triggers).

Anatomy of Tekton Pipelines

Here is a simplified breakdown of Tekton’s anatomy.

Step is an operation in a CI/CD workflow, such as running some unit tests for a Python web app, or the compilation of a Java program. Tekton performs each step with a container image. Separate containers are created for each step.

At its core, Tekton is designed to simplify the CI/CD process by breaking down complex workflows into a series of smaller, reusable components known as tasks.

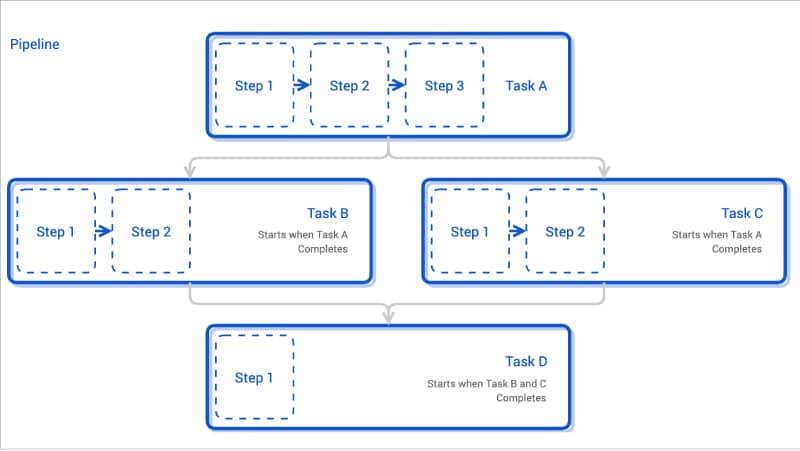

Task is a collection of steps in order. Tekton runs a task in the form of a Kubernetes pod, where each step becomes a running container in the pod. This design allows you to set up a shared environment for a number of related steps; for example, mounting a Kubernetes volume in a task will be accessible inside each step of the task. Each task will create one pod.
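A minimal sketch of this idea follows: a task with two steps that share one mounted volume, declared here as a workspace. The task name, workspace name, and file name are hypothetical, and the manifest assumes a Tekton release that serves the tekton.dev/v1 API (on older clusters the same fields exist under tekton.dev/v1beta1).

```yaml
apiVersion: tekton.dev/v1
kind: Task
metadata:
  name: share-a-file          # hypothetical task name
spec:
  workspaces:
    - name: shared
      description: Volume mounted into every step of this task
  steps:
    # Each step runs as its own container inside the task's single pod.
    - name: write
      image: alpine:3.20
      script: |
        #!/bin/sh
        set -e
        echo "hello from step one" > "$(workspaces.shared.path)/message.txt"
    # The second step sees the file because both steps mount the same workspace.
    - name: read
      image: alpine:3.20
      script: |
        #!/bin/sh
        set -e
        cat "$(workspaces.shared.path)/message.txt"
```

Steps run in the order listed, so the read step only starts after the write step has finished successfully.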

TaskRun is a specific execution of a task. A task does not run on its own: it is executed either through a TaskRun or as part of a pipeline. TaskRuns can therefore also be used to run a task outside a pipeline.
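For illustration, a TaskRun might look like the sketch below. It assumes that a task named share-a-file, declaring a single workspace called shared, already exists in the namespace; both names are hypothetical.

```yaml
apiVersion: tekton.dev/v1
kind: TaskRun
metadata:
  # generateName gives every execution a unique name, so this file is
  # submitted with "kubectl create -f" rather than "kubectl apply -f".
  generateName: share-a-file-run-
spec:
  taskRef:
    name: share-a-file        # the task to execute (assumed to exist)
  workspaces:
    - name: shared
      emptyDir: {}            # scratch volume that lives only as long as the pod
```

Each TaskRun creates one pod, and its status records the result of every step.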

Pipeline is a collection of tasks in order. Tekton collects all the tasks, connects them in a directed acyclic graph (DAG), and executes the graph in sequence. In other words, it creates a number of Kubernetes pods and ensures that each pod completes running successfully as desired. Tekton grants developers full control of the process. You can set up a fan-in/fan-out scenario of task completion, ask Tekton to retry automatically should a flaky test exist, or specify a condition that a task must meet before proceeding.
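The ordering, retry, and condition features described above can be sketched as follows. The four referenced tasks (build-app, run-unit-tests, run-lint, deploy-app) are hypothetical and assumed to exist in the namespace; the run-deploy parameter is likewise only an illustration.

```yaml
apiVersion: tekton.dev/v1
kind: Pipeline
metadata:
  name: fan-out-fan-in        # hypothetical pipeline name
spec:
  params:
    - name: run-deploy
      type: string
      default: "false"
  tasks:
    - name: build
      taskRef:
        name: build-app
    # Fan-out: both tasks depend only on build, so they may run in parallel.
    - name: unit-tests
      taskRef:
        name: run-unit-tests
      runAfter:
        - build
      retries: 2              # re-run automatically if a flaky test fails
    - name: lint
      taskRef:
        name: run-lint
      runAfter:
        - build
    # Fan-in: deploy waits for both branches, and runs only when the
    # run-deploy parameter is set to "true".
    - name: deploy
      taskRef:
        name: deploy-app
      runAfter:
        - unit-tests
        - lint
      when:
        - input: "$(params.run-deploy)"
          operator: in
          values: ["true"]
```

Tasks without a runAfter entry (or another dependency between them) are free to start at the same time, which is what makes the graph a DAG rather than a fixed sequence.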

Applications of Tekton Pipelines

Continuous integration: Tekton simplifies the process of building and testing code changes with automated CI pipelines. Developers can trigger these pipelines with each code commit, ensuring early detection of issues and promoting a consistent development workflow.

Continuous deployment: Tekton pipelines facilitate the deployment of applications to various environments, such as staging and production. Automated CD pipelines ensure that tested and approved code changes are safely and reliably pushed to production environments.

Canary and blue-green deployments: Using Tekton, teams can implement advanced deployment strategies like canary and blue-green deployments. These strategies allow for gradual rollouts and easy rollbacks, reducing the impact of faulty releases.

Custom workflows: Tekton’s flexibility enables teams to create tailored workflows for specific use cases. For instance, they can orchestrate complex multi-step processes involving multiple services and dependencies.

Tekton pipeline creation

A Tekton pipeline can be packaged and installed with a Helm chart, but to keep things simple we will use plain YAML declarations here. We will cover the Helm-based approach in a separate article sometime in the future.

- Create an EKS cluster in AWS cloud for the Tekton pipeline to run. Tekton pipelines will run inside a Kubernetes/OpenShift cluster; so this is a mandatory step.

- Create a folder named IAC and create two sub-folders called AWS and GitOps.

- The AWS folder will hold all infrastructure related workload creation tasks like S3 bucket, CloudWatch, EBS, etc.

- The GitOps folder will consist of four sub-folders, one per environment: dev, test, stage, and prod. Each folder will hold the configuration definitions for its environment.

- In the AWS folder, create CloudFormation templates for the security groups, load balancers, API Gateway, Route 53 hosted zones, ALB controllers, etc.

- Create a task that runs the CloudFormation template and name it infratask.

- Create a service account associated with the required roles and namespaces. Provide it with access tokens for Git and Docker Hub, and with complete access to EKS.

- Obtain access tokens for Git access (read and write), image repository access, and EKS deployment access, each with the appropriate roles.

- Create tasks for Git checkout, build, packaging into a Docker image, and pushing the image into an image repository such as Nexus, AWS ECR, or another Docker image repository. (You need to have tokens for this.)

- Create a Tekton pipeline that runs all the tasks sequentially or in parallel (as per your use case).

- Create a pipeline run file that supplies the exact values for the Git paths, storage volume, etc.

- Container images are built using kaniko or buildah. Tasks for both are already available in Tekton Hub, and we can download them and reference them in our pipeline.
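As one possible shape for the infratask step in the list above, the sketch below runs a CloudFormation template with the AWS CLI. The parameter names, the workspace name, and the default region are assumptions made for illustration, and AWS credentials are assumed to reach the pod by some other means (for example, an IAM role bound to the service account that runs the task).

```yaml
apiVersion: tekton.dev/v1
kind: Task
metadata:
  name: infratask
spec:
  params:
    - name: stack-name
      type: string
    - name: template-path
      type: string
      description: Path to the CloudFormation template, relative to the workspace root
    - name: region
      type: string
      default: us-east-1      # assumed default; adjust to your region
  workspaces:
    - name: source            # expected to contain the checked-out IAC folder
  steps:
    - name: deploy-stack
      image: amazon/aws-cli   # consider pinning a specific tag
      workingDir: $(workspaces.source.path)
      command: ["aws"]
      args:
        - cloudformation
        - deploy
        - --template-file
        - $(params.template-path)
        - --stack-name
        - $(params.stack-name)
        - --region
        - $(params.region)
        - --no-fail-on-empty-changeset   # succeed when nothing has changed
```

Templates that create IAM resources would additionally need the --capabilities flag, which is omitted here.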

Let us write an example Tekton pipeline that pulls Java code from a Git repository, builds and packages it with Maven, creates a Docker image, pushes it to a Docker registry, and deploys it to AWS EKS.

```yaml
apiVersion: tekton.dev/v1beta1
kind: Pipeline
metadata:
  name: java-maven-docker-pipeline
spec:
  resources:
    - name: source-repo
      type: git
    - name: docker-image
      type: image
  tasks:
    - name: clone-repo
      taskRef:
        name: git-clone
      resources:
        inputs:
          - name: url
            resource: source-repo
          - name: revision
            resource: source-repo
      params:
        - name: path
          value: /workspace/source
    - name: build-with-maven
      taskRef:
        name: maven
      resources:
        inputs:
          - name: workspace
            resource: source-repo
      params:
        - name: PATH_TO_POM
          value: /workspace/source/path/to/pom.xml
        - name: GOALS
          value: "clean package"
    - name: build-docker-image
      taskRef:
        name: buildah
      runAfter:
        - build-with-maven
      resources:
        inputs:
          - name: source
            resource: source-repo
      params:
        - name: DOCKERFILE
          value: /workspace/source/Dockerfile
        - name: CONTEXT
          value: /workspace/source
        - name: IMAGE
          value: $(outputs.resources.docker-image.url)
    - name: push-to-docker-registry
      taskRef:
        name: docker-push
      runAfter:
        - build-docker-image
      resources:
        inputs:
          - name: image
            resource: docker-image
      params:
        - name: TAG
          value: latest
        - name: IMAGE
          value: $(inputs.resources.image.url)
        - name: REGISTRY
          value: DOCKER_REGISTRY
        - name: USERNAME
          value: your-docker-registry-username
        - name: PASSWORD
          value: your-docker-registry-password
    - name: deploy-to-eks
      taskRef:
        name: eks-deploy
      runAfter:
        - push-to-docker-registry
      resources:
        inputs:
          - name: source
            resource: source-repo
      params:
        - name: KUBECONFIG
          value: /workspace/.kube/config
        - name: CONTEXT
          value: eks-cluster
        - name: PATH_TO_K8S_YAML
          value: /workspace/source/path/to/kubernetes-deployment.yaml
```

This pipeline assumes that the necessary Tekton tasks (git-clone, maven, buildah, docker-push, and eks-deploy) are already defined in your cluster (for example, pulled from Tekton Hub), and that you have set up the appropriate credentials for accessing the Git repository and Docker registry.
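One common way to provide such credentials is through annotated Kubernetes secrets attached to the service account that runs the pipeline, as sketched below. The secret names, the service account name, and the placeholder values are hypothetical; the host URLs assume GitHub and Docker Hub and would need to be changed for other providers.

```yaml
# Hypothetical names and placeholder values; never commit real tokens to Git.
apiVersion: v1
kind: Secret
metadata:
  name: git-credentials
  annotations:
    tekton.dev/git-0: https://github.com            # host these credentials apply to
type: kubernetes.io/basic-auth
stringData:
  username: your-git-username
  password: your-git-access-token
---
apiVersion: v1
kind: Secret
metadata:
  name: registry-credentials
  annotations:
    tekton.dev/docker-0: https://index.docker.io/v1/   # Docker Hub registry endpoint
type: kubernetes.io/basic-auth
stringData:
  username: your-docker-registry-username
  password: your-docker-registry-password
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: pipeline-sa
secrets:
  - name: git-credentials
  - name: registry-credentials
```

Runs that use this service account have the credentials made available to their steps, so passwords do not need to appear as plain pipeline parameters.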

Additionally, the Kubernetes deployment YAML should be available in the Git repository and specified correctly in the PATH_TO_K8S_YAML parameter.

```yaml
apiVersion: tekton.dev/v1beta1
kind: Pipeline
metadata:
  name: java-maven-docker-pipeline
```

apiVersion: Specifies the Tekton API version being used.

kind: Indicates that this resource is a Tekton Pipeline.

metadata.name: The name of the pipeline resource.

```yaml
spec:
  resources:
    - name: source-repo
      type: git
    - name: docker-image
      type: image
```

spec.resources: Defines the resources used by the pipeline.

resources.name: Names the resources. In this case, there’s a Git resource named source-repo and an image resource named docker-image.

resources.type: Specifies the type of resource, which is either git for the Git resource or image for the Docker image resource.

These sections define the individual tasks that make up the pipeline:

```yaml
tasks:
  - name: clone-repo
    taskRef:
      name: git-clone
    resources:
      inputs:
        - name: url
          resource: source-repo
        - name: revision
          resource: source-repo
    params:
      - name: path
        value: /workspace/source
```

tasks.name: Name of the task.

tasks.taskRef.name: Refers to the name of the Tekton task that will be executed (in this case, git-clone).

tasks.resources.inputs: Specifies the inputs required for this task. The URL and revision are inputs defined in the Git resource, and the path is set to the working directory for the task.

```yaml
  - name: build-with-maven
    taskRef:
      name: maven
    resources:
      inputs:
        - name: workspace
          resource: source-repo
    params:
      - name: PATH_TO_POM
        value: /workspace/source/path/to/pom.xml
      - name: GOALS
        value: "clean package"
```

This task (build-with-maven) runs the Maven Tekton task. It uses the Git resource source-repo as its workspace.

The Maven build is configured with the path to the pom.xml file and the goals ‘clean package’.

The remaining tasks (build-docker-image, push-to-docker-registry, and deploy-to-eks) follow a similar structure. They reference specific Tekton tasks and define the resources and parameters required for each step.

The pipeline run is defined in a separate file, pipeline-run.yaml:

```yaml
apiVersion: tekton.dev/v1beta1
kind: PipelineRun
metadata:
  name: java-maven-docker-pipeline-run
spec:
  pipelineRef:
    name: java-maven-docker-pipeline
  resources:
    - name: source-repo
      resourceRef:
        name: your-git-resource-name
    - name: docker-image
      resourceRef:
        name: your-docker-image-resource-name
```

To run the above pipeline:

Create pipeline: Apply the pipeline definition YAML to your cluster using kubectl apply -f pipeline-definition.yaml.

Create pipeline run: Apply the pipeline run YAML to start the execution of your pipeline using kubectl apply -f pipeline-run.yaml. Make sure you adjust the resource names (Git and Docker image resources) in the pipeline run YAML.

Monitor progress: Use commands like kubectl get pipelineruns and kubectl describe pipelinerun <run-name> to monitor the progress of your pipeline run. To view the logs of an individual task, use tkn taskrun logs -f <task-run-name>, or run kubectl logs -f <pod-name> --all-containers against the pod created for that task run.
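One caveat for readers on recent Tekton releases: the spec.resources mechanism (PipelineResources) used in the pipeline in this article was deprecated and later removed from Tekton Pipelines, where params and workspaces take its place. The sketch below shows what the first part of the same flow might look like in that style. It is an illustration under assumptions, not a drop-in replacement: the pipeline name, repository URL, and image name are hypothetical, and the parameter and workspace names of git-clone (url, revision, output) and buildah (IMAGE, source) should be checked against the task versions installed in your cluster. The Maven and deployment tasks are omitted and would be wired in the same way.

```yaml
apiVersion: tekton.dev/v1
kind: Pipeline
metadata:
  name: java-maven-docker-pipeline-ws   # hypothetical name
spec:
  params:
    - name: repo-url
      type: string
    - name: revision
      type: string
      default: main
    - name: image
      type: string
  workspaces:
    - name: source                      # shared volume that holds the checkout
  tasks:
    - name: clone-repo
      taskRef:
        name: git-clone
      params:
        - name: url
          value: $(params.repo-url)
        - name: revision
          value: $(params.revision)
      workspaces:
        - name: output
          workspace: source
    - name: build-docker-image
      taskRef:
        name: buildah
      runAfter:
        - clone-repo                    # build only after the checkout is done
      params:
        - name: IMAGE
          value: $(params.image)
      workspaces:
        - name: source
          workspace: source
---
apiVersion: tekton.dev/v1
kind: PipelineRun
metadata:
  generateName: java-maven-docker-pipeline-ws-run-
spec:
  pipelineRef:
    name: java-maven-docker-pipeline-ws
  params:
    - name: repo-url
      value: https://github.com/example/your-java-app.git   # placeholder
    - name: image
      value: registry.example.com/your-java-app:latest      # placeholder
  workspaces:
    - name: source
      volumeClaimTemplate:              # a fresh volume is created for each run
        spec:
          accessModes:
            - ReadWriteOnce
          resources:
            requests:
              storage: 1Gi
```

Because the PipelineRun uses generateName, it would be submitted with kubectl create -f rather than kubectl apply -f.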