Often, people get confused between Docker and a virtual machine. This article explains how Docker differs from a virtual machine and why you should choose the former over the latter.

The virtualisation concept originated in the 1960s and 1970s, during the mainframe era, when IBM was putting a lot of effort into creating efficient time-sharing solutions. Time-sharing meant sharing computer resources among multiple users while trying to achieve high performance and high utilisation. Today’s data centres use virtualisation technologies to create an abstraction of physical hardware. Physical resources are divided into many logical resources, which different users can then share. Here, the resources include CPUs, memory, storage, networks and applications.

What is virtualisation?

Virtualisation is the creation of a virtual version of something (like a server, storage, an OS or networking resources) that behaves like the actual version.

What is a virtual machine?

In layman’s terms, a virtual machine is a logical replica of a physical machine. We can say that it’s an emulation of the physical system. It follows the same architecture and provides the same functionality as the physical system.

Different types of virtualisation

There are many types of virtualisation, but we look at just the major types here.

Application virtualisation: Users can access different applications from remotely located servers instead of their physical machine. In this scenario, an application is not installed on the user’s machine but behaves as if it were. All the application related information is available on the remote server.

Desktop virtualisation: In this kind of virtualisation, the users’ operating system is stored on the remote server and they are allowed to use it as a virtual desktop, anywhere in the world.

User virtualisation: This is similar to desktop virtualisation, but it has the capability of maintaining a personalised virtual desktop. Users can log into their virtual desktop using different types of devices.

Storage virtualisation: In this case, different network storage devices are combined and act like a single storage device.

Hardware virtualisation: This is a very popular option and forms the basis of cloud computing. Here, one physical processor acts like several virtual processors, so more users can access the same physical processor at the same time. This kind of virtualisation requires a virtual machine manager called a hypervisor.

The benefits of virtualisation are:

- Leads to a significant growth in productivity

- Better resource utilisation

- Offers users agility and good efficiency

- Cost reduction due to not having to buy and maintain a large number of physical servers

- Data centres become smaller and more compact than those using only physical servers, which provides the following benefits:

  - Energy savings

  - Less hardware maintenance

  - Considerable savings in time and money

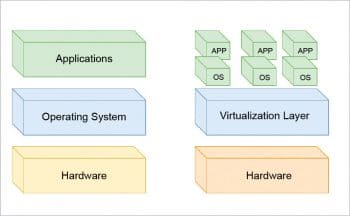

Figure 1 demonstrates the difference between traditional and virtual architecture. As we can see, in virtual architecture, we can create pools of OSs and apps using the virtual layer.

Hypervisor

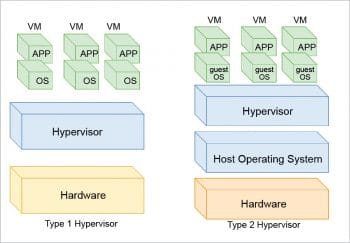

A hypervisor is software or firmware that creates virtual machines. It is also called a virtual machine monitor. Basically, it is responsible for managing virtual machines. There are two types of hypervisors: Type 1 and Type 2. Figure 2 demonstrates the difference between them.

Type 1 hypervisor: This directly runs on system hardware, and is also called the bare metal hypervisor.

Type 2 hypervisor: This runs on the host operating system, and VMs are created on top of it. The OSs installed inside the VMs are called guest OSs.
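To make the hypervisor's bookkeeping role a little more concrete, here is a toy Python model of a host whose physical CPUs and memory are divided among VMs. The class name `ToyHypervisor`, its methods and all the figures are hypothetical and chosen only for illustration; real hypervisors are far more sophisticated and can, for example, overcommit resources.

```python
from dataclasses import dataclass, field


@dataclass
class ToyHypervisor:
    """Toy model: tracks how a host's physical resources are split among VMs."""

    total_cpus: int
    total_memory_gb: int
    vms: dict = field(default_factory=dict)  # VM name -> (cpus, memory_gb)

    def free_cpus(self) -> int:
        return self.total_cpus - sum(cpus for cpus, _ in self.vms.values())

    def free_memory_gb(self) -> int:
        return self.total_memory_gb - sum(mem for _, mem in self.vms.values())

    def create_vm(self, name: str, cpus: int, memory_gb: int) -> bool:
        """Reserve resources for a new VM; return False if it does not fit."""
        if (
            name in self.vms
            or cpus > self.free_cpus()
            or memory_gb > self.free_memory_gb()
        ):
            return False
        self.vms[name] = (cpus, memory_gb)
        return True


# Hypothetical host with 16 CPUs and 64 GB of memory.
host = ToyHypervisor(total_cpus=16, total_memory_gb=64)
print(host.create_vm("crm", cpus=4, memory_gb=16))        # True
print(host.create_vm("database", cpus=8, memory_gb=32))   # True
print(host.create_vm("analytics", cpus=8, memory_gb=32))  # False: only 4 CPUs left
print(host.free_cpus(), host.free_memory_gb())            # 4 16
```

The sketch deliberately refuses any request that exceeds what is left, which is the simplest possible policy for dividing physical resources into logical ones.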

Virtual machines vs containers

Virtualisation has played a major role in improving system utilisation and isolation, and in enabling microservices and modern computing in general. Virtual machines and hypervisors make up one approach to workload deployment, while containers are a newer approach that uses fewer resources than traditional virtualisation.

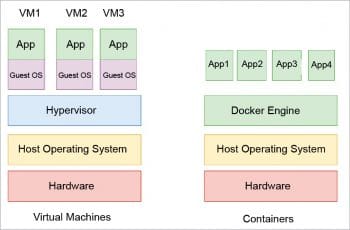

The main difference between a container and a VM is in the virtual layer, or the way OS resources are assigned. In the case of VMs, the hypervisor is normally installed first on bare metal hardware, and once it’s installed, VMs are created from the system’s available resources. Here, each VM has its own virtual OS and application. Usually, the first VM is used as a host that runs the management software, while other VMs run different applications/services like the CRM, databases, JVM, etc.

In the case of containers, the arrangement is totally different. Here, the host OS is installed first and then the container layer, such as the Docker engine or LXC (Linux Containers), is installed on top of it. Container instances created on the container layer use the host OS only; they do not have their own virtual OS. So containers are more efficient, and a greater number of container instances can be created compared to VMs.
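A rough back-of-the-envelope calculation shows why sharing the host OS lets more instances fit on one machine. The Python sketch below considers memory only, and every figure in it (host size, application size, OS overheads) is an assumed value for illustration, not a measurement; the function name `instances_that_fit` is also hypothetical.

```python
def instances_that_fit(
    host_memory_gb: int,
    app_memory_gb: int,
    per_instance_os_gb: int = 0,
    shared_os_gb: int = 0,
) -> int:
    """Count how many instances fit in host memory under a simple model.

    shared_os_gb is paid once (the host OS); per_instance_os_gb is paid
    by every instance (a guest OS inside each VM).
    """
    usable = host_memory_gb - shared_os_gb
    per_instance = app_memory_gb + per_instance_os_gb
    if usable <= 0 or per_instance <= 0:
        return 0
    return usable // per_instance


# Assumed, illustrative figures only.
HOST_GB = 64      # physical memory on the server
APP_GB = 1        # memory needed by one application
GUEST_OS_GB = 1   # memory taken by the guest OS inside each VM
HOST_OS_GB = 2    # memory taken once by the host OS

vm_count = instances_that_fit(
    HOST_GB, APP_GB, per_instance_os_gb=GUEST_OS_GB, shared_os_gb=HOST_OS_GB
)
container_count = instances_that_fit(HOST_GB, APP_GB, shared_os_gb=HOST_OS_GB)

print(f"VMs that fit:        {vm_count}")         # 31
print(f"Containers that fit: {container_count}")  # 62
```

With these assumed numbers, each VM pays for its own guest OS while containers pay for the OS only once, so twice as many containers fit. Real ratios depend heavily on the workload.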

One of the disadvantages of the container approach is that the host OS is a single point of failure. Since all the containers use the same host OS, they are all affected if it is hacked or crashes. Container migration also has some limitations; for example, the target servers must have the same or a compatible OS kernel.

Figure 3 gives a comparison between virtual machines and containers.

What is Docker?

Docker is open source software that performs operating system level virtualisation, which is also called containerisation. We can say that it’s a tool that makes our life easier by managing containers. Its responsibilities include the creation, deployment and running of applications using containers. It’s a little similar to a virtual machine, but rather than creating a whole new virtual OS for each VM, Docker lets all the containers it creates share the same kernel space. So there is no concept of a virtual OS in the container world.
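One way to observe this kernel sharing is to compare the kernel release reported by the host with the one reported from inside a container. The Python sketch below assumes that the `docker` command-line tool is installed and that the public `alpine` image can be pulled; the function names are hypothetical helpers written for this example.

```python
import platform
import shutil
import subprocess
from typing import List, Optional


def container_uname_command(image: str = "alpine") -> List[str]:
    """Build the command that prints the kernel release inside a container."""
    return ["docker", "run", "--rm", image, "uname", "-r"]


def kernel_seen_by_container(image: str = "alpine") -> Optional[str]:
    """Return the kernel release seen in a container, or None on failure."""
    if shutil.which("docker") is None:
        return None  # Docker CLI is not installed on this machine
    try:
        result = subprocess.run(
            container_uname_command(image),
            capture_output=True,
            text=True,
            timeout=120,
        )
    except (OSError, subprocess.TimeoutExpired):
        return None
    return result.stdout.strip() if result.returncode == 0 else None


def compare_kernels() -> None:
    """Print the host kernel release next to the one a container reports."""
    host_kernel = platform.release()
    container_kernel = kernel_seen_by_container()
    print(f"Host kernel:      {host_kernel}")
    if container_kernel is None:
        print("Container kernel: unavailable (is Docker installed and running?)")
    else:
        print(f"Container kernel: {container_kernel}")
```

Calling `compare_kernels()` on a Linux host should print the same release twice, because the container has no kernel of its own. On macOS or Windows the two values usually differ, since Docker Desktop runs containers inside a small Linux VM.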

Which one is better?

If we need to run more applications with the least amount of resources, then Docker or containers are the best choice. Currently, companies are using a mixture of both technologies, but gradually, container technology will win the race.