Cloud management is a challenging business. Thankfully, organisations today can choose from quite a few cloud platforms and open source cloud management tools. Many of the most popular tools use AI to automate a large share of cloud management tasks. This article briefly describes some of them.

Monitoring and managing large cloud infrastructure is a tedious task. A cloud management platform acts as a wrapper over hypervisors such as VMware, Citrix XenServer, KVM, Xen Cloud Platform (XCP), Microsoft Hyper-V and Oracle VM Server, and handles multi-cloud orchestration, monitoring, rule-based infrastructure provisioning, and so on.

Cloud management and its life cycle

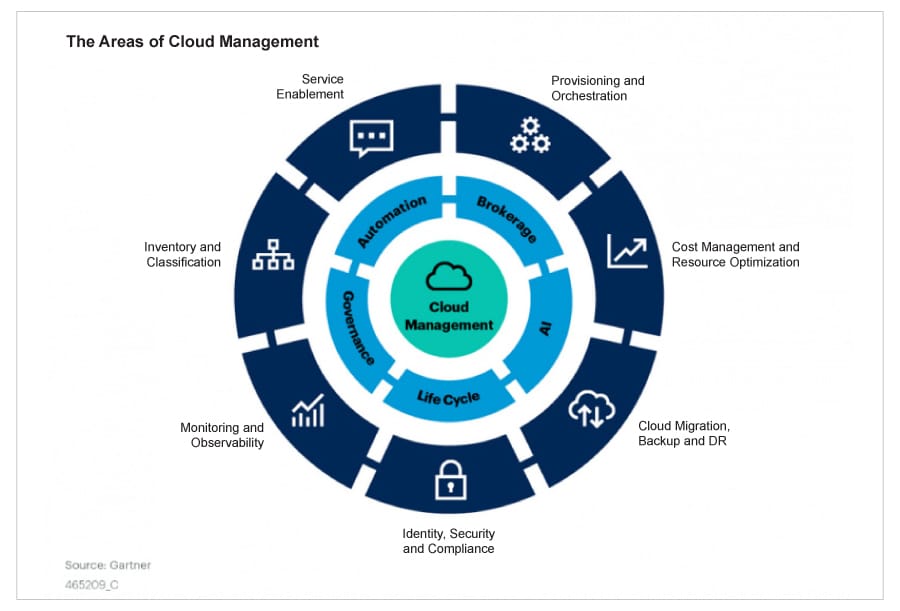

Cloud adoption in an enterprise involves various factors, including the choice of platform, infrastructure details, application requirements, the tech stack used to build the application, and interfacing and security requirements. Gartner, the US-based technology research and consulting company, has provided a guidance framework for selecting a cloud management platform and related tools. The framework defines 215 parameters that can be used to choose between a private and a public cloud, as well as among IaaS, PaaS and SaaS.

This framework is captured in the cloud management wheel under eight categories and four cross-functional attributes, as shown in Figure 1.

The topmost priority for effective cloud adoption is to optimise workloads at each cloud tier so that they are flexible enough to avoid vendor lock-in, unless there is a specific roadmap or vision for adopting the native model of a given cloud service provider (CSP).

Gartner also emphasises ‘Design for Portability’ to keep solution approaches and architectural requirements agile. According to Gartner, an automation strategy can simplify and reduce the effort needed for large enterprise migrations. For example, a factory approach to migrating a large application estate becomes simpler when tasks like provisioning, assurance, packaging and delivery, monitoring and analytics, and cost management are automated. The framework also supports both cross-platform solutions for a multi-cloud or hybrid cloud approach and platform-specific solutions for native cloud adoption.

Site reliability engineering (SRE) or a CloudOps model is the core engine of cloud management, and in the cloud native world, the term used most commonly is observability. Observability differs from traditional monitoring: it is proactive, works in dynamic environments, actively consumes telemetry, and helps in handling incident scenarios that were not anticipated in advance.

Cloud management is based on the following four factors.

Cost: This decides how effectively the monitoring and cloud analytics solutions can be optimised for better cloud governance.

Consumption: Template-based options such as Terraform templates or AWS CloudFormation/Azure Resource Manager (ARM) templates reduce the effort and complexity of setting up CloudOps solutions.

Observability: Though built on traditional services like monitoring, log handling, tracing and auditing, this is one of the key pillars of CloudOps; it ensures reliability and agility in cloud governance.

Compliance: This ensures that the solution blueprint and cloud platform services meet geographic, industry or customer requirements. Security, risk management and compliance handling are the key activities under this factor.

Providing uniform cloud management, monitoring and operations across CSPs is also a big challenge, which is why third-party cloud management tools like CloudBolt or Densify are used.

CloudOps and its activities

CloudOps (cloud operations) is defined as the management, delivery and consumption of software in a computing environment where there is limited visibility into an app’s underlying infrastructure. It is primarily concerned with continuously refining business solutions and methods to optimise the availability, flexibility and efficiency of cloud services, so that all business operations can run without disruption.

The following are the four pillars that any CloudOps team can use for building a strong IT infrastructure to smoothly carry out all processes.

a. Abstraction: This decouples management from the underlying infrastructure so that all cloud servers, instances, storage devices, network infrastructure and other technologies can be managed from a single place.

b. Provisioning: Administrators can allow end users to allocate their own machines and monitor their utilisation. Provisioning allows applications to request more resources when needed and release them if not required. The process is efficient and automated.

c. Policy driven: This involves creating and enforcing policies in the cloud that limit what applications and users can do.

d. Automation: This includes provisioning, user management, security and API management. These days, artificial intelligence plays a big role in automation.
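The provisioning and policy-driven pillars can be illustrated together with a small check that runs before a self-service request is fulfilled. This is a minimal sketch under stated assumptions: the `Policy` and `ProvisionRequest` types, their fields and the `check_request` function are hypothetical, and real cloud management platforms express such policies in their own policy languages.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Policy:
    """Hypothetical guard-rails an administrator might attach to self-service provisioning."""
    max_vcpus: int
    allowed_regions: frozenset
    required_tags: frozenset


@dataclass(frozen=True)
class ProvisionRequest:
    """A hypothetical end-user request for a new machine."""
    user: str
    vcpus: int
    region: str
    tags: dict = field(default_factory=dict)


def check_request(request: ProvisionRequest, policy: Policy) -> list:
    """Return the policy violations for a request; an empty list means it may proceed."""
    violations = []
    if request.vcpus > policy.max_vcpus:
        violations.append(f"vcpus {request.vcpus} exceeds limit {policy.max_vcpus}")
    if request.region not in policy.allowed_regions:
        violations.append(f"region {request.region} is not allowed")
    # Iterating a dict yields its keys, so this finds required tags the request did not set.
    missing = sorted(policy.required_tags.difference(request.tags))
    if missing:
        violations.append("missing required tags: " + ", ".join(missing))
    return violations


if __name__ == "__main__":
    policy = Policy(
        max_vcpus=8,
        allowed_regions=frozenset({"eu-west-1"}),
        required_tags=frozenset({"owner", "cost-centre"}),
    )
    request = ProvisionRequest("alice", vcpus=16, region="us-east-1", tags={"owner": "alice"})
    for violation in check_request(request, policy):
        print("DENY:", violation)
```

Returning every violation, rather than stopping at the first, lets the end user fix a rejected request in one pass.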

The main functions of CloudOps are:

- Keeping cloud services and infrastructure up and running 24x7x365.

- Managing, enforcing and updating service level agreements at regular intervals.

- Strong management of compliance as well as configurations.

- Meeting performance and capacity demands to reduce wastage and optimise efficiency.

- Timely recovery and migrations for cloud optimisations.

AIOps and more for cloud operational excellence

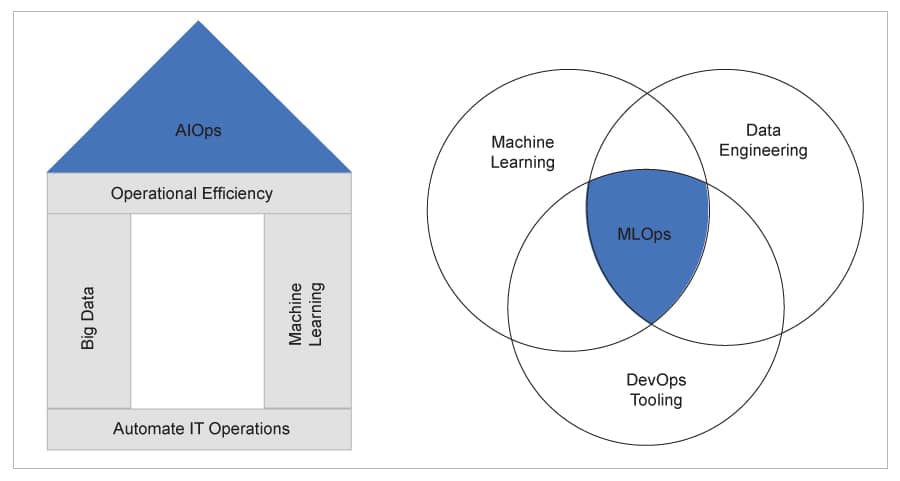

AIOps, or artificial intelligence for IT operations, is a term coined by Gartner for platforms that combine Big Data, machine learning (ML) and analytics to automate IT operations. AIOps sits high on the maturity model and is a long-term solution for predictive optimisation based on usage patterns and historical data. It uses an ML driven approach to build predictive models and to suggest areas of improvement based on data patterns. It can optimise the cost of production and non-production environments separately, with low risk. Tools like CloudHealth and Densify are based on AIOps.

The five pillars of a well-architected framework for cloud operations are performance, availability/reliability, operational excellence, cost optimisation, and security/compliance. Cloud operations are gradually moving from DevOps (which relies on configuration management, CI/CD and pipeline handling) to CloudOps (which handles cloud infrastructure provisioning and management). CloudOps, in turn, is now focusing more on AIOps, which uses machine intelligence to analyse logs and metrics and proactively sends alerts whenever there are issues with performance or budget forecasts.
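To make "analysing metrics and proactively alerting" concrete, the sketch below flags metric samples that deviate sharply from their recent history. It deliberately swaps in a simple statistical baseline (a rolling z-score) in place of the trained ML models that commercial AIOps tools use; the `detect_anomalies` function, the window size and the threshold are illustrative assumptions.

```python
from statistics import mean, stdev


def detect_anomalies(values, window=10, threshold=3.0):
    """Flag samples whose z-score against the preceding `window` samples exceeds `threshold`.

    Returns a list of (index, value, z_score) tuples. `window` must be at least 2.
    """
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            continue  # A perfectly flat history has no spread, so the z-score is undefined.
        z = (values[i] - mu) / sigma
        if abs(z) > threshold:
            anomalies.append((i, values[i], round(z, 2)))
    return anomalies


if __name__ == "__main__":
    # Hypothetical API latency samples in milliseconds, with one spike.
    latency_ms = [100, 102, 98, 101, 99, 103, 100, 97, 102, 101, 100, 250, 101]
    for index, value, z in detect_anomalies(latency_ms):
        print(f"ALERT: sample {index} = {value} ms (z = {z})")
```

Comparing each sample only with the samples before it means the check can run on a live stream; once the spike enters the window it widens the spread, so the next normal sample is not flagged.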

For example, Amazon DevOps Guru is a fully managed, ML driven service that can improve application performance and availability. It draws data from Amazon CloudWatch, AWS Config, AWS X-Ray traces and AWS CloudTrail events, and uses it to identify anomalous behaviour such as increased latency, error rates, resource constraints, and service disruptions like API call failures. The service has an integrated dashboard that visualises operational health based on continuous analysis of metrics and events.

Drawing on its integrated machine learning knowledge base, Amazon DevOps Guru also supports resource-level planning and preventive maintenance by proactively notifying users when defined thresholds are reached for resources such as auto-scaling capacity or API call volume.

Amazon DevOps Guru can also be integrated with AWS Systems Manager OpsCenter or Amazon Simple Notification Service (SNS) to connect to a third-party incident management system like ServiceNow, so that tickets are logged automatically according to how critical an issue is.
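The severity-based ticketing described above can be sketched by polling DevOps Guru for ongoing insights and turning each one into a ticket. This is a hedged sketch, not a reference integration: it assumes the boto3 `devops-guru` client's `list_insights` call with a `StatusFilter` argument and its `ReactiveInsights` and `NextToken` response fields, while the `SEVERITY_TO_PRIORITY` mapping and the `create_ticket` callback (standing in for a ServiceNow or other ITSM API call) are hypothetical.

```python
# Hypothetical mapping from DevOps Guru insight severity to an ITSM ticket priority (1 = highest).
SEVERITY_TO_PRIORITY = {"HIGH": 1, "MEDIUM": 2, "LOW": 3}


def ongoing_reactive_insights(client):
    """Yield ongoing reactive insights, following NextToken pagination."""
    kwargs = {"StatusFilter": {"Ongoing": {"Type": "REACTIVE"}}}
    while True:
        page = client.list_insights(**kwargs)
        yield from page.get("ReactiveInsights", [])
        token = page.get("NextToken")
        if not token:
            break
        kwargs["NextToken"] = token


def tickets_from_insights(client, create_ticket):
    """Open one ticket per ongoing insight, prioritised by the insight's severity."""
    created = []
    for insight in ongoing_reactive_insights(client):
        # Unknown or missing severities fall back to the lowest priority.
        priority = SEVERITY_TO_PRIORITY.get(insight.get("Severity"), 3)
        created.append(create_ticket(summary=insight["Name"], priority=priority, ref=insight["Id"]))
    return created


# Usage, assuming AWS credentials are configured:
#   import boto3
#   tickets_from_insights(boto3.client("devops-guru"), create_ticket=my_itsm_create_ticket)
```

Passing the client and the ticket callback in as parameters keeps the routing logic independent of any one ITSM product, and lets it be exercised with stand-in objects.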

AIOps for cloud platforms offers the following benefits.

1. Improved service quality and efficiency: Automated solutions such as self-monitoring, self-healing services that predict and handle failures automatically, and self-adapting solutions based on rules or policies can substantially improve service quality and the efficiency of activities like infrastructure provisioning, monitoring, alerting and workflow operations.

2. Improved productivity from integrated DevOps solutions: Enhancing build and deployment activities with ML-assisted code reviews and regression tests, and integrating ticketing solutions with DevOps so that ticket requests are handled automatically, improves the toolchain for cloud provisioning and DevOps activities.

3. Improved customer satisfaction with lower cloud adoption cost: Automating operational activities such as data driven insights, monitoring and dashboards, and responses to events based on knowledge-based policies and rules builds overall customer satisfaction with minimal or no human intervention.

AIOps focuses on three services: data, insights and actions. Data provides the input for the design, including the historical behaviour of problems and the solutions that were applied (e.g., shutting down instances when the resource usage of a virtual machine is critically high). Insights provide a trained model for a given problem, analyse the data to detect the problem and diagnose a solution, and optimise the search metadata tags.

Actions define the command that needs to be executed based on the data input and the insight models. This service defines how to mitigate a problem, integrates with ticketing solutions like ServiceNow, optimises cloud services based on best practices, and improves process efficiency in operational activities like infrastructure provisioning, reporting and alerting.
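The data, insights and actions split can be shown as three small stages. In this minimal sketch, the `RUNBOOK` knowledge base, the problem names, the remediation names and the fixed thresholds in `diagnose` are all hypothetical; the thresholds stand in for the trained model a real AIOps platform would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Insight:
    resource: str
    problem: str


# Data: a hypothetical knowledge base of past problems and the remediation that resolved them.
RUNBOOK = {
    "cpu_critical": "scale_out",
    "disk_full": "expand_volume",
}


def diagnose(metrics):
    """Insights: turn raw utilisation readings (percentages) into named problems.

    The fixed thresholds are illustrative stand-ins for a trained model.
    """
    insights = []
    for resource, reading in metrics.items():
        if reading.get("cpu", 0) >= 95:
            insights.append(Insight(resource, "cpu_critical"))
        if reading.get("disk", 0) >= 90:
            insights.append(Insight(resource, "disk_full"))
        if reading.get("mem", 0) >= 95:
            insights.append(Insight(resource, "memory_exhausted"))
    return insights


def plan_actions(insights):
    """Actions: apply the known remediation, or escalate to a ticket when none is recorded."""
    return [(i.resource, RUNBOOK.get(i.problem, "open_ticket")) for i in insights]


if __name__ == "__main__":
    readings = {"vm-1": {"cpu": 97, "disk": 40}, "vm-2": {"disk": 93, "mem": 99}}
    for resource, action in plan_actions(diagnose(readings)):
        print(resource, "->", action)
```

Falling back to a ticket for problems without a recorded fix mirrors the ServiceNow-style escalation described above, and each resolved ticket is a candidate new entry for the knowledge base.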

Many cloud service providers are using AIOps to improve incident management. They integrate it with cloud operational activities such as incident reporting, priority triage, mitigation/resolution and anomaly detection in end-to-end infrastructure monitoring, along with actions based on identified issues such as system failures and high resource usage. For safe cloud deployments and improved efficiency, a few additional checks are needed, such as smoke and regression tests, static code review, configuration review, and environment health checks.

In short, AIOps, which combines machine learning and automation, supports the move from DevOps to CloudOps and improves native operational activities.

Cloud management tools at a glance

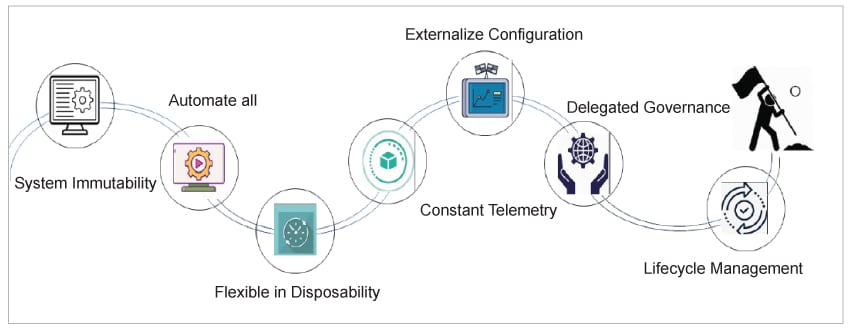

Cloud management tools play a vital role in improving productivity and simplifying operational activities including cost management, resource management and governance. Some of the key parameters that should be looked at while choosing these tools are listed in Figure 3.

System immutability: All configuration in the tool should be defined in code (infrastructure as code) so that the complete setup is immutable. No script should run outside this code at any stage.

Complete automation: Build, test, validate and deploy should be automated as much as possible, to avoid human intervention and errors.

Flexible with respect to disposability: Everything in a cloud setup is transient and short-lived. Tools must support regular updates to avoid unnecessary service failures.

External configuration: Settings that can change, such as passwords, credentials and file locations/paths, should be stored outside the image/binary so that they can be updated without rebuilding it.

Constant telemetry: The tool must enable logs, event streams, audits, and alerts with visualisation dashboards so that any functional, operational and security issues can be monitored upfront.

Delegated governance: Business agility must be enabled by defining SLAs for managed services, along with strict governance in the DevSecOps life cycle.

Life cycle management: Life cycle management should decouple activities like upgrading, scaling and deploying services so that each can be performed independently.
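The external configuration parameter in the list above can be illustrated with an application that reads its settings from environment variables, so the same immutable image runs unchanged in every environment. This is a minimal sketch: the variable names `APP_DB_HOST`, `APP_DB_PASSWORD` and `APP_DATA_DIR`, the default path and the `AppConfig` type are hypothetical, and in production the password would more likely be injected from a secrets manager than set by hand.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class AppConfig:
    db_host: str
    db_password: str
    data_dir: str


REQUIRED = ("APP_DB_HOST", "APP_DB_PASSWORD")


def load_config(env=None) -> AppConfig:
    """Read settings from the environment instead of baking them into the image/binary."""
    env = os.environ if env is None else env
    missing = [name for name in REQUIRED if name not in env]
    if missing:
        # Fail fast at start-up rather than at the first database call.
        raise RuntimeError("missing required settings: " + ", ".join(missing))
    return AppConfig(
        db_host=env["APP_DB_HOST"],
        db_password=env["APP_DB_PASSWORD"],
        # Optional setting with a default that can still be overridden per environment.
        data_dir=env.get("APP_DATA_DIR", "/var/lib/app"),
    )
```

Accepting an optional mapping instead of always reading `os.environ` makes the loader easy to test, while the default behaviour still picks up whatever the platform injects at deployment time.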

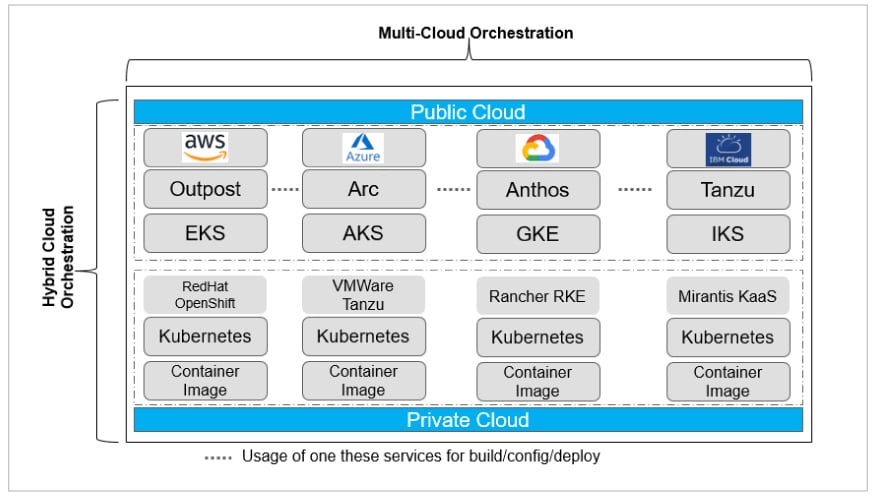

Some common native tools for cloud management are discussed below. Figure 4 highlights multi-cloud management tools.

AWS Outposts is suitable for on-premises use in smaller organisations with smaller hardware footprints, as it does not support custom hardware or server provisioning. Google Anthos and Azure Arc, on the other hand, work in broadly similar ways, using Kubernetes to handle multi-cloud, on-premises or private cloud environments seamlessly. Kubernetes is an integral part of Anthos, whereas Azure Arc can work with Kubernetes as well as edge computing services.

AWS has improved Outposts by making EKS run on Fargate for serverless Kubernetes solutions to support hybrid cloud and multi-cloud solutions. Anthos, Outposts and Arc are strong competitors in the hybrid cloud space.

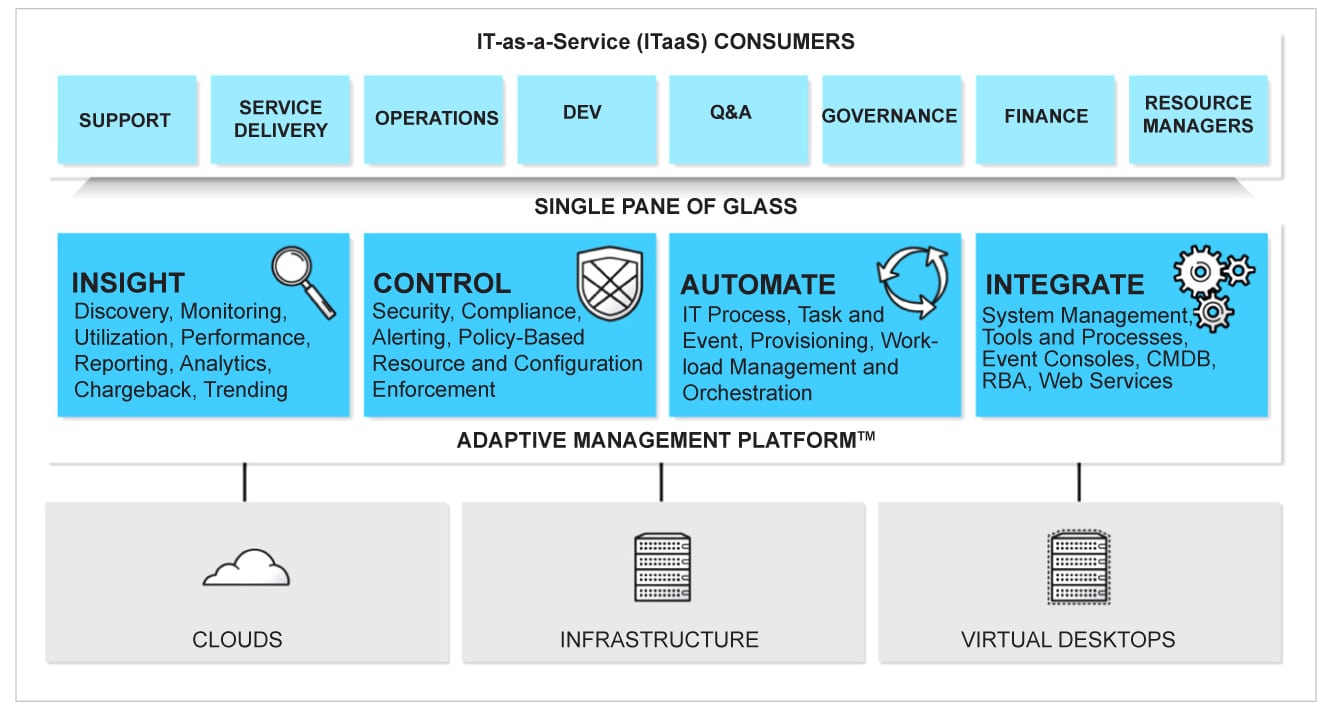

In cloud management, it is good to have a platform that operates as a ‘single pane of glass’ for discovering and tracking cloud assets, optimising cloud utilisation and controlling cloud services. Many tools offer this, such as Apptio Cloudability, Opsani and Matilda; ManageIQ is an open source, community-developed platform originally sponsored by Red Hat.

ManageIQ supports the Red Hat OpenShift environment for both private and public clouds, as well as cloud service providers like Azure, AWS and GCP. It is agentless and can be installed easily in private cloud environments using Vagrant or Docker, and on a public cloud as a PaaS service using Docker or a virtual appliance image. Figure 5 shows the ManageIQ architecture.

The core features of ManageIQ include the following.

Continuous discovery: Manages the inventory of cloud services, including virtualisation, containers, network, storage and compute services. It updates automatically whenever the environment changes and serves as a ready-to-use configuration management database (CMDB), similar to BMC Remedy, that supports cloud-readiness assessments with tools such as CAST.

Service catalogue: It manages the full life cycle of a cloud service, which includes policy management, compliance checks, delegated operations, and chargeback for cost management reports. End users can order services for provisioning from the given service catalogue.

Auto-discovery and compliance: Uses SmartState analysis to scan the contents of cloud services, including VMs and containers, and creates security and compliance policies.

Cloud optimisation: Collects business and technical telemetry, and helps to prepare visualisation reports for cloud service utilisation and chargeback summary. This helps to build a capacity-planning model for the enterprise.
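The chargeback summaries mentioned in the service catalogue and cloud optimisation features come down to multiplying metered usage by a rate and grouping the result by consumer. This minimal sketch does not use ManageIQ's own rate definitions: `RATES_PER_HOUR`, the resource names and the record layout are hypothetical, and a real rate card would come from provider pricing or internal policy.

```python
from collections import defaultdict

# Hypothetical internal rate card: cost per unit per hour.
RATES_PER_HOUR = {"vcpu": 0.02, "gb_ram": 0.005, "gb_disk": 0.0001}


def chargeback(usage_records):
    """Sum cost per department from (department, resource, quantity, hours) records."""
    totals = defaultdict(float)
    for department, resource, quantity, hours in usage_records:
        totals[department] += RATES_PER_HOUR[resource] * quantity * hours
    # Round only at the end so small per-record amounts are not lost to rounding.
    return {dept: round(cost, 2) for dept, cost in sorted(totals.items())}


if __name__ == "__main__":
    usage = [
        ("finance", "vcpu", 4, 720),     # 4 vCPUs for a 30-day month
        ("finance", "gb_ram", 16, 720),  # 16 GB RAM for the same month
        ("hr", "vcpu", 2, 100),          # a short-lived test VM
    ]
    for dept, cost in chargeback(usage).items():
        print(f"{dept}: {cost:.2f}")
```

The same per-department totals, collected month by month, are the kind of telemetry a capacity-planning model can be built on.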

Advantages of using cloud management tools

Cloud management services have the following five cross-functional attributes:

- AI to provide advanced optimisation facilities using ML based solutions for analytics and anomaly detection.

- Brokerage to mediate between cloud providers, cloud services and consumers for seamless cloud migration.

- Automation to enable facilities like infrastructure-as-code (IaC), automatic code review, and package and delivery of cloud services.

- Governance to provide the mechanism for user management and role definition.

- Life cycle to enforce governing principles in cloud adoption systematically, in line with best practices.

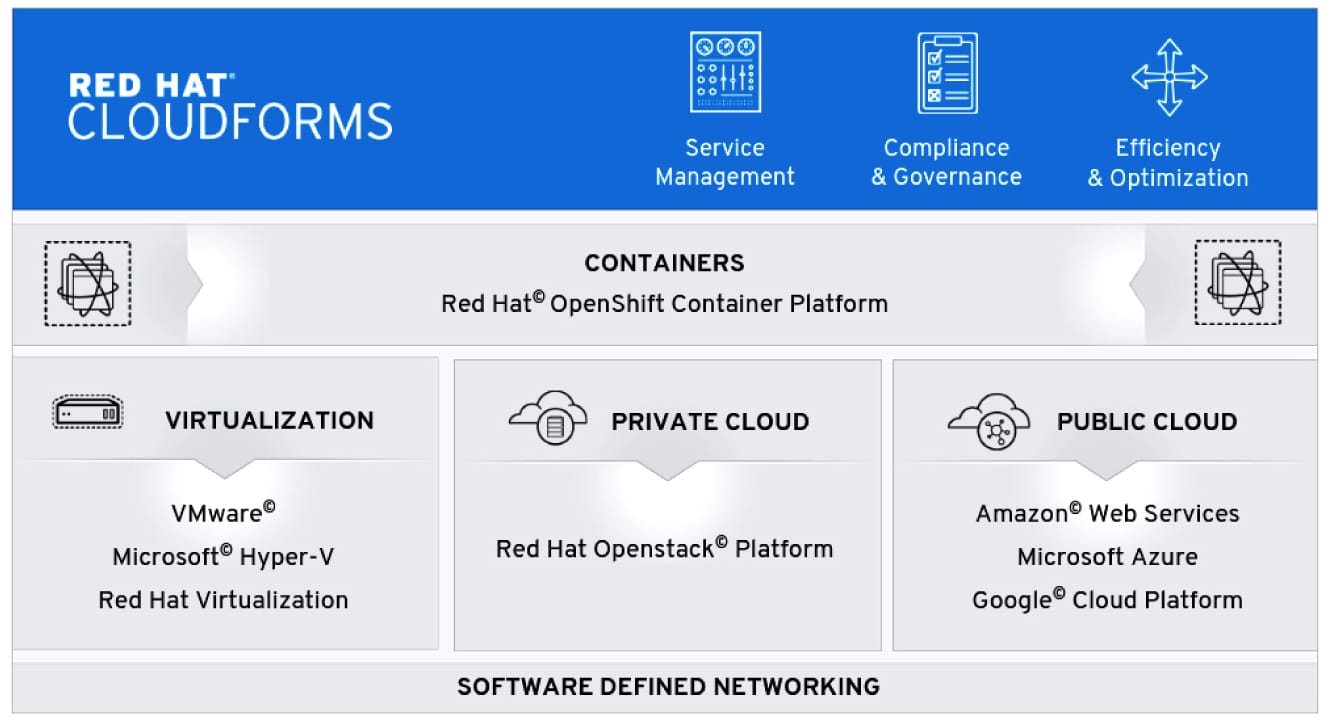

Infrastructure-as-code helps multi-cloud management, since writing separate provisioning scripts for each cloud is inconsistent and inefficient. Though cloud native facilities like CloudFormation in AWS, ARM templates in Azure and Deployment Manager templates in GCP provide infrastructure-as-code, they are tied to their own platforms and lack the agility and flexibility needed for multi-cloud management. Google Anthos, HashiCorp Terraform and Red Hat Ansible templates, however, can help with multi-cloud management and with developing zero-touch deployment scripts and provisioning templates for a cloud tower setup. All three tools are strong options for creating, changing and provisioning infrastructure on any cloud platform, covering service management, security, compliance and optimisation.

Red Hat CloudForms, the commercially supported product built on ManageIQ, has five integral components, as follows.

- CloudForms management engine appliance is the backbone for the cloud infrastructure.

- SmartProxy service is the brain of the platform; it uses a SmartProxy agent to carry out the tasks triggered by the server.

- CloudForms management engine server is the heart of the platform and resides on the appliance to communicate between the SmartProxy layer and the virtual management service.

- Virtual management database (VMDB) stores the configuration of the virtual infrastructure and maintains the status of appliance tasks.

- CloudForms management engine console is the graphical Web console, with a dashboard that shows status.

Though Terraform is a very popular cloud management tool, Red Hat CloudForms provides great flexibility for private and public cloud management.

Infrastructure provisioning activities during cloud adoption are repetitive, so automating them reduces overall DevOps operational effort.

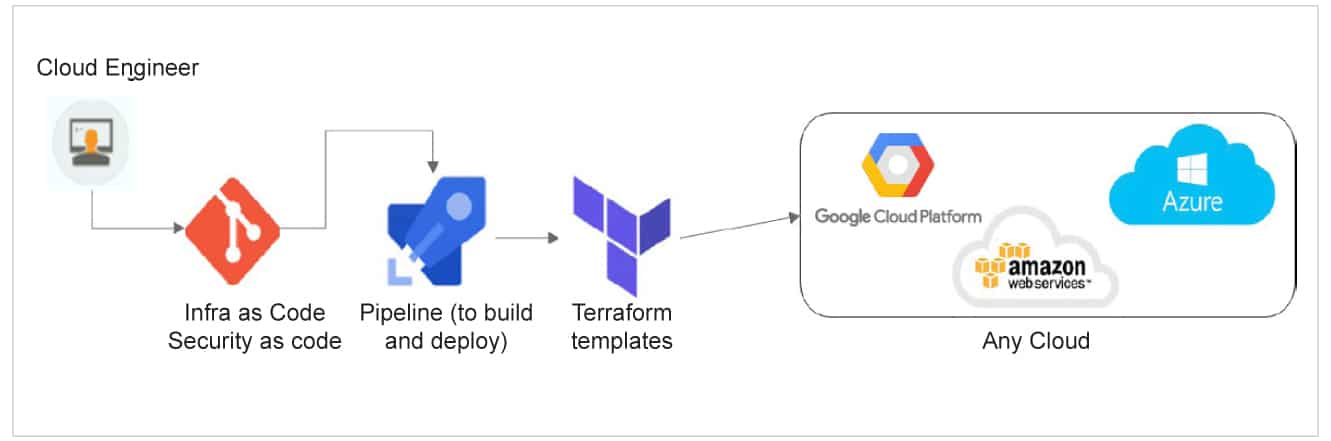

IaC is available natively in Azure as ARM templates, in AWS as CloudFormation templates, and in GCP as Google Deployment Manager (GDM) templates. A cloud-agnostic IaC solution for multi-cloud environments is HashiCorp’s Terraform, whose templates are written in HashiCorp Configuration Language (HCL) or in JSON (useful when exporting or reusing configuration generated by other tools). Terraform can be integrated with any cloud service provider’s native deployment pipeline, or used alongside third-party tools like Ansible, to enable infrastructure provisioning on the go. Figure 7 highlights Terraform templates for cloud resource provisioning.

In large-scale infrastructure creation, we need to build test instances and then create pre-production or production instances during horizontal scaling. IaC with Terraform makes this easier: Terraform scripts can run alongside resource schedulers, and Terraform templates can create network configurations or a secured environment setup for software defined networks (SDN).
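Because Terraform accepts configuration in JSON syntax (files ending in `.tf.json`), a template can also be generated programmatically. The sketch below builds one AWS configuration whose instance count differs per environment, which is one way to express the test, pre-production and production tiers described above. The environment sizes, region, instance type and AMI ID are placeholder assumptions.

```python
import json

# Hypothetical number of instances per environment tier.
ENVIRONMENTS = {"test": 1, "preprod": 2, "prod": 4}


def terraform_config(env: str, ami: str, region: str = "eu-west-1") -> dict:
    """Build a Terraform JSON-syntax configuration for one environment."""
    return {
        "terraform": {"required_providers": {"aws": {"source": "hashicorp/aws"}}},
        "provider": {"aws": {"region": region}},
        "resource": {
            "aws_instance": {
                "app": {
                    # `count` scales the same resource definition horizontally.
                    "count": ENVIRONMENTS[env],
                    "ami": ami,
                    "instance_type": "t3.micro",
                    # Terraform interpolates ${count.index} inside JSON string values.
                    "tags": {"Name": f"app-{env}-${{count.index}}", "Environment": env},
                }
            }
        },
    }


if __name__ == "__main__":
    # Placeholder AMI ID; save the output as main.tf.json in a Terraform working directory.
    print(json.dumps(terraform_config("prod", "ami-0123456789abcdef0"), indent=2))
```

Generating the JSON from one function keeps every environment structurally identical, so the tiers differ only in the values deliberately passed in.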

Terraform scripts can be used to spin up instances or destroy disposable, temporary test environments on a cloud platform. Because templates can be validated before actual provisioning, the Terraform workflow involves Write (create Terraform templates for IaC), Plan (preview changes before applying them to the cloud environment) and Apply (actually provision the infrastructure).
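Once the templates are written, the Plan and Apply steps can be scripted so that a pipeline never applies anything it has not previewed. This sketch drives the standard Terraform CLI commands (`init`, `validate`, `plan -out`, `apply` with a saved plan file) through `subprocess`; the `terraform_workflow` function, the plan file name `tfplan` and the injectable `run` parameter are illustrative choices, and it assumes the `terraform` binary is on the PATH.

```python
import subprocess


def terraform_workflow(workdir, apply=False, run=subprocess.run):
    """Run Terraform's init, validate and plan steps and, only if asked, apply the saved plan.

    `run` defaults to subprocess.run; it is a parameter so the steps can be dry-run or tested.
    """
    steps = [
        ["terraform", "init", "-input=false"],
        # Validate the written templates before touching any cloud resources.
        ["terraform", "validate"],
        # Plan: preview the changes and save them to a plan file.
        ["terraform", "plan", "-input=false", "-out=tfplan"],
    ]
    if apply:
        # Apply: applying the saved plan executes exactly what was previewed.
        steps.append(["terraform", "apply", "-input=false", "tfplan"])
    for cmd in steps:
        # check=True stops the workflow if any step exits with a non-zero status.
        run(cmd, cwd=workdir, check=True)
    return steps
```

Keeping `apply=False` as the default means a run only previews changes unless a person or pipeline stage opts in. Tearing down a temporary test environment afterwards would use `terraform destroy` in the same working directory.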

For a multi-cloud architecture, Terraform templates make it easy to create a uniform setup quickly and configure multiple environments, avoid the complexity of maintaining multiple parallel scripts, and reuse as much code as possible across the cloud service providers in the multi-cloud setup.
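The reuse described above can be sketched by rendering one shared, provider-neutral specification into equivalent Terraform resources for AWS (`aws_instance`) and GCP (`google_compute_instance`) in a single JSON-syntax configuration. The `SIZES` catalogue that maps a logical size to each provider's machine type, the spec fields, the project ID and the AMI ID are all hypothetical placeholders.

```python
import json

# Hypothetical catalogue mapping one logical size to each provider's machine type.
SIZES = {
    "small": {"aws": "t3.micro", "gcp": "e2-micro"},
    "medium": {"aws": "t3.medium", "gcp": "e2-medium"},
}

# Hypothetical shared specification; provider-specific details live in clearly named fields.
SPEC = {
    "name": "web",
    "size": "small",
    "aws_region": "eu-west-1",
    "aws_ami": "ami-0123456789abcdef0",  # placeholder AMI ID
    "gcp_project": "my-project",         # placeholder project ID
    "gcp_zone": "europe-west1-b",
    "gcp_image": "debian-cloud/debian-12",
}


def aws_resource(spec):
    return {"aws_instance": {spec["name"]: {
        "ami": spec["aws_ami"],
        "instance_type": SIZES[spec["size"]]["aws"],
        "tags": {"Name": spec["name"]},
    }}}


def gcp_resource(spec):
    return {"google_compute_instance": {spec["name"]: {
        "name": spec["name"],
        "machine_type": SIZES[spec["size"]]["gcp"],
        "zone": spec["gcp_zone"],
        "boot_disk": {"initialize_params": {"image": spec["gcp_image"]}},
        "network_interface": {"network": "default"},
    }}}


def multi_cloud_config(spec):
    """Render one shared spec into a Terraform JSON config targeting both AWS and GCP."""
    resources = {}
    resources.update(aws_resource(spec))
    resources.update(gcp_resource(spec))
    return {
        "provider": {
            "aws": {"region": spec["aws_region"]},
            "google": {"project": spec["gcp_project"]},
        },
        "resource": resources,
    }


if __name__ == "__main__":
    print(json.dumps(multi_cloud_config(SPEC), indent=2))
```

Changing `size` in the shared spec resizes the workload on both clouds at once, which is the kind of reuse that separate, hand-maintained native templates make harder.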