Organisations, big or small, need to have a proactive data strategy to manage and make good use of the data they generate daily. Using the cloud for strategic data management could pave the way for their quick growth.

The world is expected to have 163 zettabytes of data by 2025. That is 163 billion terabytes. Where is it going to be stored? How is it going to be managed? Whether you are a company of five or a Fortune 500 company, how you manage your data determines the pace and scale of your growth. Many organisations have started taking note of the deficiencies in their data strategy and are making consistent efforts to wade through the quagmire.

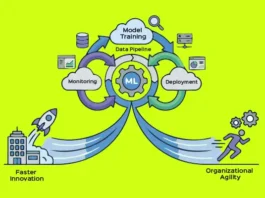

In their 2017 article in Harvard Business Review, Leandro DalleMulle and Thomas H. Davenport presented a framework that brings considerable clarity and guidance to strategic data management. According to the article, an organisation's data strategy should have two prongs: defence and offence. Defence, in simple terms, is about keeping an organisation safe by minimising risk: staying on the right side of the law and compliance, following best practices, and securing and protecting data. Offence is about directly supporting business objectives, for example through analytics, AI or ML.

The right mix of offence and defence depends on the objectives of the organisation. A hospital, for example, gives more weight to defence because it has to ensure compliance and security. It does not have to build a go-to-market strategy, and hence does not focus much on offence. A fintech organisation, by contrast, has to balance both: it must ensure security and compliance while also working on its marketing stack. With the growing footprint of cloud and cloud-native services, there are scores of tools at our disposal to build an effective and agile data strategy. Let us look at how to build a data strategy based on the template suggested by DalleMulle and Davenport.

Fundamentally, defensive strategies are about data security, data protection and, increasingly, data control. Enough has been spoken, written and understood about the need for security. If all of it can be summarised in one line, it is that data security is not a one-off activity to be implemented but a cultural mindset on which an organisation has to be built.

Over 40 per cent of SMEs do not back up their data. Of the remaining 60 per cent, nearly half fail to restore their data from the backups they have created. Data protection is where most organisations struggle to strike a balance between needs and wants. The prolific growth of data, particularly unstructured data, the increasing burden of compliance, and the hope of using analytics to derive insights from data have all increased the total cost of ownership (TCO) of data. More importantly, this strains the capex budget, leading to compromises in implementing data protection plans.
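Because the gap described here is between creating backups and actually being able to restore from them, a backup routine is only trustworthy if restores are tested. The following is a minimal sketch of that idea, assuming local files and a plain file copy standing in for a real backup tool; the function names `backup` and `verify_restore` are hypothetical. It records a SHA-256 checksum at backup time, restores the copy to a scratch location, and compares checksums.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in 64 KiB chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def backup(source: Path, backup_dir: Path) -> tuple[Path, str]:
    """Copy a file into backup_dir (hypothetical stand-in for a backup tool)
    and return the copy's path plus the source checksum at backup time."""
    backup_dir.mkdir(parents=True, exist_ok=True)
    target = backup_dir / source.name
    shutil.copy2(source, target)
    return target, sha256_of(source)


def verify_restore(backup_path: Path, expected_digest: str, restore_dir: Path) -> bool:
    """Restore the backup to a scratch directory and check that its
    checksum matches the one recorded when the backup was taken."""
    restore_dir.mkdir(parents=True, exist_ok=True)
    restored = restore_dir / backup_path.name
    shutil.copy2(backup_path, restored)
    return sha256_of(restored) == expected_digest


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        original = root / "ledger.csv"
        original.write_text("id,amount\n1,100\n2,250\n")
        backup_path, digest = backup(original, root / "backups")
        print("restore verified:", verify_restore(backup_path, digest, root / "restore-test"))
```

Running a check like this on a schedule, rather than only when disaster strikes, is what turns "we have backups" into "we can restore".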

With pay-per-use metered pricing, the cloud is a great option for implementing these plans without compromise. The challenges of security and egress costs can be addressed if the cloud is used judiciously to store data. One of the biggest benefits of moving to the cloud is the availability of multiple tools that would otherwise have to be bought at very high licensing costs. Storage tiering, for instance, can be implemented easily. The hot data actively used by applications is only a fraction of the total data; most of the rest sits idle yet still consumes resources. Cold storage classes such as Coldline in Google Cloud or Glacier in AWS offer substantial cost savings for data that is rarely accessed. Coupled with a deduplication tool to eliminate duplicate data and a data processing program to extract relevant data and discard the rest, this is a simple yet cost-effective strategy to protect data for the long term.
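The deduplicate-then-tier approach can be illustrated with a small sketch. It assumes whole-object deduplication by content hash (real deduplication tools often work on smaller chunks) and hypothetical 30- and 90-day thresholds for moving data from a hot tier to a cool tier and then to a cold tier. In practice, the move itself is usually configured through the cloud provider's lifecycle policies rather than hand-written code; this only shows the decision logic.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical thresholds: tune them to your own access patterns and to the
# minimum storage durations and retrieval charges of the cold tier you use.
HOT_MAX_IDLE = timedelta(days=30)
COOL_MAX_IDLE = timedelta(days=90)


@dataclass
class DataObject:
    key: str
    content: bytes
    last_accessed: datetime


def deduplicate(objects: list[DataObject]) -> list[DataObject]:
    """Keep one object per unique content hash (the most recently accessed)."""
    by_digest = {}
    for obj in objects:
        digest = hashlib.sha256(obj.content).hexdigest()
        kept = by_digest.get(digest)
        if kept is None or obj.last_accessed > kept.last_accessed:
            by_digest[digest] = obj
    return list(by_digest.values())


def choose_tier(obj: DataObject, now: datetime) -> str:
    """Map time since last access to a storage tier label."""
    idle = now - obj.last_accessed
    if idle <= HOT_MAX_IDLE:
        return "hot"
    if idle <= COOL_MAX_IDLE:
        return "cool"
    return "cold"  # e.g. a cold class such as Coldline or Glacier


def plan(objects: list[DataObject], now: datetime) -> dict[str, str]:
    """Deduplicate first, then assign each surviving object a tier."""
    return {obj.key: choose_tier(obj, now) for obj in deduplicate(objects)}


if __name__ == "__main__":
    now = datetime(2024, 1, 1)
    objs = [
        DataObject("orders.csv", b"a,b\n1,2\n", now - timedelta(days=2)),
        DataObject("orders_copy.csv", b"a,b\n1,2\n", now - timedelta(days=200)),
        DataObject("q1_report.pdf", b"%PDF-report", now - timedelta(days=60)),
        DataObject("2019_logs.gz", b"old logs", now - timedelta(days=900)),
    ]
    print(plan(objs, now))
```

Deduplicating before tiering means duplicates are never paid for in any tier, and only the genuinely idle remainder ends up in the cheapest, slowest class.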

With major public cloud vendors operating data centres in regions across the globe, the issue of data control is addressed to a large extent. Users can not only ensure that their data resides in the required geographical regions but can also keep multiple copies of it in locations far apart, making disaster recovery an easily achievable goal compared to the Herculean task it was in the pre-cloud world.
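These two requirements, keeping data inside permitted jurisdictions and spreading copies across regions, can be written down as a simple policy check on a replication plan. The sketch below is hypothetical: `Replica` and `check_replication_plan` are illustrative names, the region-to-jurisdiction labels are supplied by hand rather than looked up from any provider, and counting distinct regions is only a proxy for physical separation, which should be confirmed against the provider's own region documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Replica:
    region: str        # provider region identifier
    jurisdiction: str  # jurisdiction whose data rules apply, as you classify it


def check_replication_plan(replicas, allowed_jurisdictions, min_regions=2):
    """Return a list of problems with a replica plan; empty means it passes.

    Checks that every copy stays inside an allowed jurisdiction (data
    control) and that copies span at least `min_regions` distinct regions
    (disaster recovery).
    """
    problems = []
    for replica in replicas:
        if replica.jurisdiction not in allowed_jurisdictions:
            problems.append(
                f"{replica.region} is in {replica.jurisdiction}, "
                f"outside allowed {sorted(allowed_jurisdictions)}"
            )
    regions = {replica.region for replica in replicas}
    if len(regions) < min_regions:
        problems.append(
            f"only {len(regions)} distinct region(s); at least {min_regions} needed"
        )
    return problems


if __name__ == "__main__":
    # Google Cloud's Mumbai (asia-south1) and Delhi (asia-south2) regions as
    # examples; the "IN" jurisdiction label is assigned by hand here.
    plan = [Replica("asia-south1", "IN"), Replica("asia-south2", "IN")]
    print(check_replication_plan(plan, {"IN"}))
```

Returning a list of problems rather than a single boolean makes it easy to surface every policy violation at once, for example in a deployment pipeline.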

The HBR article also talks about a single source of truth (SSOT) and multiple versions of the truth (MVOTs). SSOT data has to be incorruptible, and in some cases immutable, with a very high degree of provenance and governance so that its integrity is indisputable. A lack of an SSOT dents an organisation's credibility. The cloud plays a major role not only in building an SSOT repository but also in providing a plethora of tools on top of it, such as Google Data Studio and BigQuery or Microsoft's Power BI. These help build an offensive strategy by fostering effective and insightful platforms for MVOTs, thus propelling the organisation to the next level of growth.
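One common way to make a record store tamper-evident, in the spirit of an incorruptible SSOT with clear provenance, is to chain each entry to the hash of the entry before it. Any later edit then breaks verification. The sketch below is a hypothetical, in-memory illustration of that technique, not a feature of the cloud tools named above; the `Ledger` class and its `source` field (a minimal provenance tag) are assumptions made for illustration.

```python
import copy
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash a canonical JSON encoding (sorted keys) of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class Ledger:
    """Hypothetical append-only, hash-chained store for SSOT records."""
    entries: list = field(default_factory=list)

    def append(self, record: dict, source: str) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        # Copy the record so later changes to the caller's dict don't leak in;
        # "source" keeps a simple provenance trail for each record.
        body = {"record": copy.deepcopy(record), "source": source, "prev": prev_hash}
        entry_hash = _digest(body)
        self.entries.append({**body, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash and link; any edited entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            body = {k: entry[k] for k in ("record", "source", "prev")}
            if _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


if __name__ == "__main__":
    ledger = Ledger()
    ledger.append({"customer_id": 42, "credit_limit": 5000}, source="crm-export")
    ledger.append({"customer_id": 43, "credit_limit": 7500}, source="crm-export")
    print("intact:", ledger.verify())
    ledger.entries[0]["record"]["credit_limit"] = 50000  # simulate tampering
    print("after tampering:", ledger.verify())
```

This makes tampering detectable rather than impossible: anyone able to rewrite the whole chain could recompute every hash, so the latest hash is typically stored somewhere the data's writers cannot modify.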