If asked, “What are the two mega-trends in the computing industry likely to be in the coming decade?”, most IT industry folks would name “Big Data” and “Cloud Computing” as the driving forces shaping the industry today. The computing world is shifting from enterprise-centric to data-centric workloads driven by the “Big Data” revolution, while cloud computing is becoming mainstream, reinventing utility/elastic computing as the new mantra for the IT industry.

The influence of these two paradigms is driving the revolution in different fields of the computing industry, such as processor/platform architectures, programming models, programming languages and software stacks, to name just a few. After all, no one would have paid attention to “Map-Reduce” as a programming paradigm, if it was not for Web 2.0 and the shift towards cloud computing.

The field of databases is no exception, and has been influenced heavily by these two driving forces. Cloud computing is driving the momentum towards making the database available as a service on the cloud. “Big Data” is changing the traditional ways in which data is stored, accessed and manipulated, with the arrival of the NoSQL movement and domain specific databases, resulting in moving computing closer to data. And if you ask the Internet-savvy the cheeky question, “What is the connection between Cassandra, Voldemort and Dynamo?” (No, this is not related to Harry Potter!), you can expect the majority to give you the correct answer — that they are all specialised NoSQL databases.

The fact that databases are no longer the domain of the few specialised expert programmers signifies the shift of the database movement from enterprise-centric workloads to focusing and delivering on the needs of social media networks, collaborative computing, massively multi-player online gaming, etc.

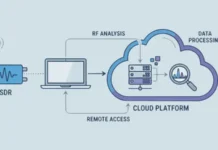

Cloud computing is transforming the way data is stored, retrieved and served. Computing resources like servers, storage, network and applications (including databases) are hosted, and made available as cloud services, for a price. Cloud platforms have evolved to offer many IT needs as online services, without having to invest in expensive data centres and worry about the hassle of managing them. Cloud platforms virtually alleviate the need of having your own expensive data-centre.

Today, those managing cloud platforms offer to design, develop, test, deploy and host apps in the cloud environment, with impressively economical cost models. Amazon EC2, Google App Engine, Microsoft Azure, Appistry CloudIQ, AppScale, AT&T Synaptic and RackSpace are just a few of the offerings.

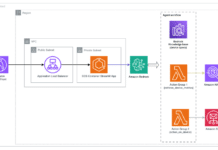

There are various classes of cloud computing services, such as:

- SaaS: In simple terms, SaaS is a software delivery model wherein business application software is hosted on the Internet/cloud centrally, and can serve multiple customers “on demand”.

- PaaS: This provides all systems and environments encompassing the software development life cycle, including development, testing, deploying and the hosting of business applications. Examples include Google App Engine and Microsoft’s Azure.

- IaaS: Infrastructure, in terms of computing resources and operating systems, is also offered as a service.

After all, hosting of business applications on the cloud also entails maintaining and manipulating the data by the applications.

Initially, it was left to developers to install, manage and use their choice of database instance on the cloud, with the burden of all the database administration tasks being left to the developer. The advantage of this is that you choose your own database and have full control over how the data is managed.

In order to simplify the burden on the users of their cloud offerings, many PaaS vendors today have started offering database services on the cloud. All physical database administration tasks, such as backup, recovery, managing the logs, etc., are managed by the cloud provider. The responsibility for logical administration of the database, including table tuning and query optimisation, rests on the developer.

The choice of databases is also limited typically to MySQL or Oracle RDBMS. Examples include Amazon’s Relational Database Service (RDS), Joyent‘s MySQL and Microsoft’s SQL Azure. Salesforce.com offers Database.com, which is actually built over Oracle’s Real Application Clusters. Though Database.com itself does not support traditional SQL, the query language it offers is built upon SQL, and is known as “Salesforce Object Query Language” (SOQL), with limited querying capabilities. This is internally translated to SQL by its system.

While these are cloud offerings of database services, their underlying technology is still traditional SQL-based database technology, not specifically reinvented for the cloud.

Of late, there has also been a considerable buzz around offering “Database as a Service (DaaS)”, wherein an organisation’s database needs are met by database offerings on the cloud. There are two use-case scenarios:

- A single large organisation that has many individual databases which can be migrated to a private cloud for the organisation, and…

- Outsourcing the data management needs of small and medium organisations to a public cloud provider, who caters to multiple small and medium businesses.

A true DaaS offering should satisfy certain requirements such as:

- Freeing the end developer/user from database administration, tuning and maintenance activities, while offering high performance, availability and fault tolerance, as well as advanced features like snapshot, analytics and time travel.

- Elasticity, or the ability to dynamically adjust to changing workloads. Elasticity is required to meet user SLAs while minimising the cloud provider’s infrastructure, power and administration expenses.

- Security and privacy guarantees, and a pay-as-per-usage pricing model. Today, there aren’t any true DaaS offerings that satisfy all these requirements. Therefore, these cloud-computing needs will drive the next generation of database evolution.

Big Data explosion and its impact on databases

The term “Big Data” is used to represent the explosive growth in online data, which has significantly outpaced the increases in CPU processing power, memory and storage capacity over the last few years. For instance, the amount of online data indexed by Google has grown from 5 exabytes in 2002 (1 exabyte is equal to 1 million trillion bytes) to 280 exabytes in 2009, numbers that are conclusively beyond the processing capabilities of any single relational database.

This explosion in data is not just limited to the Web, but has also occurred at the enterprise. Where data was earlier being generated from simple in-house data entry feeds, database management has to cater to data from multiple external data sources such as customers, GPS, mobile devices, the general public, point-of-sale devices, sensor data and so on.

There are new kinds of data, such as Web pages, digitised content such as books and records, music, videos, photos, satellite images, scientific data, messages, tweets and sensor data — each with different data-processing requirements.

Traditionally, databases only needed to cater to enterprise-centric workloads such as OLTP/OLAP. However, Big Data has ushered in a whole new set of data-centric workloads, such as Web search, massively multi-player online games, online message systems like Twitter, sensor networks, social network analysis, media streaming, photo processing, etc. The data management needs of all these data-types cannot be met by traditional database architectures. These data-centric workloads have different characteristics in the following areas:

- Response time requirements — such as real-time versus non-real-time.

- Data types:

- Structured data that fits in well with traditional RDBMS schemas.

- Semi-structured data, like XML or email.

- Fully unstructured data, such as binary or sensor data.

- Processing complexity:

- Simple data operations, such as aggregate, sort or upload/download, with a low compute-to-data-access ratio.

- Medium compute complexity operations on data, such as pattern matching, search or encryption.

- Complex processing, such as video encoding/decoding, analytics, prediction, etc.

Big Data has brought forth the issue of “database as the bottleneck” for many of these data-centric workloads, due to their widely varying requirements. The inability of the traditional RDBMS to scale up to massive data sets led to alternatives such as Data Sharding and Scale-Out Architectures, and subsequently to the NoSQL movement, which we will discuss later.

Database evolution from the 1960s onwards

There has been a huge evolution from the simple systems of the 1960s to what we have today. Let’s look at some of the stages in this evolution.

Flat and hierarchical databases

In a flat model, the data is stored as records and delimiters in a simple file. In hierarchical data, model data is organised into a tree-like structure using parent/child relationships with a one-to-many ratio (see this Wikipedia article).

This was the precursor to relational databases, with no support for querying, and the responsibility of data base administration was ad-hoc, being left to the individual maintainer to take care of, without any software support.

Relational databases

A relational database is a set of relations such that the data satisfies the predicates which describe the constraints on the possible values and their combinations. It provides a declarative method for specifying data and queries. RDBMS software describes data structures for storing the data, as well as the retrieval procedures.

Relations are represented as tables in the database. A table describes a specific entity type, and all attributes of a specific record are listed under an entity type. Each individual record is represented as a row, and an attribute as a column. This is the relational database model as proposed by Codd in the 1960s.

The relational model was the first database model to be described in the formal terms of relational algebra. The relational database model went on to become the de-facto standard for all enterprise database management systems from the 1960s till the late nineties.

Object-oriented databases

In the mid-eighties, object-oriented databases were proposed, in order to allow greater programming flexibility by allowing objects to be directly stored in databases. Relations in a relational database represent behaviours, whereas interconnection between objects cannot be represented easily in the relational form.

OODBMS were intended to address this shortcoming. In an OODBMS, application data is represented by persistent objects that match the objects used in the programming language. However, object-oriented databases were not very successful, since they were more focused on addressing the programmer needs rather than the business intelligence needs of the organisation.

Columnar databases

These organise data from the same attribute as columns of values, as opposed to storing it as rows on disk. This results in large I/O savings in analytical and data-warehousing type of data retrieval, that largely accesses a set of columns. Note that columnar databases are relational, and support ACID semantics, as well as providing SQL support.

A popular columnar database that offers state-of-the-art analytical capabilities is Vertica. It is based on CStore, a column-oriented academic database research project described in this paper [PDF].

Vertica has decoupled its Read Store (optimised for read-only accesses) from the Write Store (optimised for high performance updates and inserts) to evolve a hybrid model that offers excellent scalability. The high degree of compression that can be achieved due to the nature of columnar data, grouped together with the rest of the architecture, provides powerful analytical capabilities (white papers).

There have been several commercial and open source RDBMS products, including IBM’s DB2, Oracle Database, Microsoft SQL Server, MySQL and many others. Historically, relational databases have been providing transaction processing with the clarity that emerged from their formal mathematical models, and an elegant way of storing/retrieving data using SQL. When the reality of planetary-scale data management kicked in with the Big Data explosion, combined with the need for massive Web capabilities fuelled by Web 2.0, the industry felt the need for alternatives to traditional RDBMS.

Implementations of RDBMSs are generally tuned for their specific category of usage — say, OLTP, data warehousing, decision-support regimes, etc. Big Data applications are characterised by mostly read accesses, need for quick retrieval of query results even if the results are not fully complete, streaming media requests, textual search in large number of documents, 24X7 Web requests with varying data access patterns. However, RDBMS are not tuned for such big data application characteristics.

Architectural shifts on data-sharing models

A number of techniques have been proposed to address the changing needs of data management driven by Big Data and the Cloud. These include:

- Data replication, which creates multiple copies of the databases. The copies can be read-only, with one master copy where updates occur, and then are propagated to the copies — or the copies can be read-write, which imposes the complexity of ensuring the consistency of the multiple copies.

- Memory caching of frequently accessed data, as popularised by the memcached architecture.

- From the traditional “Shared Everything Scale-up” architecture, the focus shifted to “Shared Nothing Scale-out” architectures. The shared-nothing architecture allows independent nodes as the building blocks, with information replicated, maintained and accessed. Database sharding is a method of horizontal partitioning in a database, which typically partitions its data among many nodes on different databases, with replication of the application’s data via synchronisation. Shared-disk clustered databases, such as Oracle RAC, use a different model to achieve scalability, based on a “shared-everything” architecture that relies upon high-speed connections between servers. The dynamic scalability required for cloud database offerings still remains elusive in both these approaches. “Shared-nothing” architectures require time-consuming and disturbing data rebalancing when nodes are added/deleted. While node addition/deletion is faster in the “Shared-everything” architecture, they have scaling issues with increasing node counts.

Note that using the above techniques seriously impacts the maintenance of the ACID properties satisfied by traditional RDBMS engines. For instance, maintaining multiple read/write copies of the database impacts data consistency. Data sharding impacts atomicity requirements. Schema changes are time-consuming, and need to be propagated to multiple nodes in such data replicated/sharded/partitioned architectures.

Various SQL operations, such as joins, cannot be implemented at the database layer, since the database is partitioned; they need to be implemented in the application middleware layer. Therefore, supporting both RDBMS and distributed databases, which can scale to the needs of Big Data and the Cloud, have conflicting requirements.

Concurrency control

Relational data stores implement pessimistic concurrency, implying that updates to the records are protected and serialised. The locking overhead associated with the pessimistic concurrency control presents significant challenges for any light-weight implementation that needs to meet the high-performance requirements for massive data-stores.

On the contrary, many of the non-relational database implementations lean towards optimistic concurrency control, with a relaxed consistency model.

Shifts in the programming paradigm

The non-relational data models allow one to massively scale and perform in parallel. Map-Reduce (MR) techniques allow processing of partitioned subproblems in a distributed manner, with a greater degree of efficiency. There are libraries available in several forms that allow distributed processing of the map and reduction operations, with map operations executed in parallel.

Map-Reduce functions are both defined as key/value pairs, largely capable of operating on top of NoSQL data stores. It also provides inherent fault tolerance to recompute partial map results.

When does a static schema not make sense? Or, when does it need to be dynamic?

A database schema refers to the organisation of data to create a blueprint of how a database will be constructed. It specifies, based on the database administrator’s knowledge of possible applications, the facts that can enter the database, or those of interest to the possible end-users.

There is an incredible amount of diversity in terms of how data is structured in the Big Data era. The requirements for many data collections are different, and it could be a daunting task to attempt to unify all types of collections with a single schema. Instead of creating a “one size fits all” approach at the database level, the administrators who use this approach often look for flexibility in schemas. With the inability to design a predetermined schema during the design phase, a traditional SQL-based relational database is less likely to be appropriate.

The widely varying requirements of data management under Big Data and cloud computing resulted in the industry looking for alternatives to RDBMS. This led to the growth of non-relational distributed databases. These non-relational distributed database systems, which vary widely in their design, have come to be referred to by the term “NoSQL”, signifying that they are different from the traditional relational database systems that support a structured query language.

What is NoSQL?

NoSQL stands for “Not-Only-SQL”. It is the emergence of a growing number of non-relational, distributed data stores that typically do not attempt to provide ACID guarantees. NoSQL databases may not require fixed table schemas, and they typically scale horizontally. NoSQL architecture often provides weak consistency guarantees and restricted transactional support.

The origins of the NoSQL movement can be traced to what is now known as CAP theorem. Recall the fact that in order to handle massive datasets, databases turned to “Shared Nothing” partitioned systems. In 2002, Eric Brewster made the following conjecture in the PODC conference keynote talk [PDF]:

It is impossible for a distributed computer system to simultaneously provide all three of the following guarantees:

- Consistency (all nodes see the same data at the same time)

- Availability (node failures do not prevent survivors from continuing to operate)

- Partition tolerance (the system continues to operate despite arbitrary message loss)

According to the theorem, a distributed system can satisfy any two of these guarantees at the same time, but not all three. Therefore, the “Shared Nothing” partitioned database architectures moved towards non-relational databases, which sacrificed consistency in order to provide high availability, scalability and partition tolerance. These partitioned non-relational databases moved from the traditional ACID semantics supported by RDBMS to BASE semantics.

BASE stands for “Basically Available, Soft state, Eventually consistent”. Under the BASE semantics, it is enough for the database to eventually be in a consistent state. ACID is pessimistic, and forces consistency at the end of every transaction. BASE is optimistic, and accepts that the database consistency will be in a state of flux. In simple terms, eventual consistency means that while a database may be inconsistent at certain points of time, it will eventually become consistent; i.e., eventually, all database nodes will receive the latest consistent updates. This relaxed consistency allows BASE systems to provide high scalability.

A detailed discussion of ACID versus BASE semantics, and how correctness can still be maintained under BASE semantics, can be found in the ACM queue article. Incidentally, it was this article that coined the term “BASE” for such partitioned database architectures.

Types of NoSQL systems

Several NoSQL systems employ a distributed architecture, similar to the “Shared-Nothing” model. Simplistic implementations use associative arrays or key-value pairs. Often, they are implemented with distributed hash table (DHT), multi-dimensional tables, etc. A wide variety of distinct families exist under the NoSQL movement. Let’s look at these families now.

Simple key-value store

This is a schema-less data store that allows the application to store its data, and allows it to manage, most often with minimal data-type support. Dynamo (Amazon), Voldemort (LinkedIn) use the Key-Value Distributed Hash Table from implementing their databases.

SimpleDB provides simple key/value pairs, stored in a distributed hash table, but interoperates well with the rest of its cloud building blocks. Dynamo is Amazon’s major research paradigm for non-relational database design, with a simpler model with massive scaling abilities, and extraordinarily high availability requirements. It allows applications to relax their consistency guarantees, under certain failure scenarios. The techniques used to make Dynamo work and perform well include consistent hashing, vector clocks, Merkle tree data structure and Gossip (a distributed information sharing approach).

Dynomite is an open source implementation of Dynamo, written in Erlang. A detailed description of Dynamo is available from Werner Vogel’s paper [PDF].

Table-oriented NoSQL data stores

These are similar to key-value stores, but define the value as a set of columns. An example of a table-oriented NoSQL store is Google’s BigTable. One of the leaders in this space, Google’s BigTable is integrated with its cloud computing platform (Google App Engine) and offers simplified SQL capabilities called GQL.

BigTable and its clones are implemented as sparse, multi-dimensional sorted maps, instead of simple key-value stores. The row, column and timestamp are used to index data. Atomicity is guaranteed at a low-level row (updates to a single row are always transactional). Locality groups provide the ability to combine different columns to impose access control and hints about data that are typically accessed together.

Hypertable and HBase are two open source clones of BigTable.

One of the key differences between these implementations is the underlying file system that actually stores the data. BigTable uses Google’s proprietary file system (Google File System — GFS) while the open source clones use HadoopFileSystem or KosmosFileSystem (KFS). These are open source implementations of Google File System. HBase, in turn, provides additional database capabilities, map/reduce integration, etc.

Document-oriented data stores

These NoSQL key-value data stores are highly structured, and include complex self-defining objects as their values. Many of the popular document databases, such as MongoDB or CouchDB, use JavaScript Object Notation (JSON).

CouchDB defines a basic key/value storage mechanism to store documents in JSON (JavaScript Object Notation) format. It allows the creation of persistent views on top of this data, similar to database tables which can be queried. The storage engine for CouchDB does support ACID properties, and is intended to be a distributed fault-tolerant system. It has the capabilities to scale down and hence, can be used for mobile computing, efficiently.

A similar project is MongoDB, which does not provide ACID properties, but has powerful querying capabilities.

Graph databases

A graph database is a kind of NoSQL database that uses graph structures with nodes, edges, and properties to represent and store information. Nodes represent entities, and properties are information that relate to nodes. Edges connect nodes to nodes/properties representing the relationship. Most of the important information is really stored in the edges. Compared with relational databases, graph databases are often faster for associative data sets, scale more naturally to large data sets, and are more suitable for changing data/evolving schemas. Conversely, relational databases are typically faster at performing the same operation on large numbers of data elements. Examples of graph databases are Neo4j and hypergraphDB.

Various NoSQL implementations differ considerably on how consistency is achieved, what data types are supported, the structure and nature of how the data is stored, and the support of languages/libraries/interfaces for access. Implementations embrace what is best for them by considering how their targeted class of Web services would need to scale.

They also vary in terms of whether the key-values are stored in-memory, on-disk, or both; hierarchically or tabular; duple or triplet; single or multiple value per key, etc. A detailed discussion of NoSQL databases can be found in the paper “No Relation: The Mixed Blessings of Non-Relational Databases”, available here.

What’s around the corner for databases?

The question that has frequently been asked is whether the NoSQL movement signifies the end of traditional RDBMS.

While the NoSQL movement has helped to answer the initial needs of massive data sets of Web 2.0, traditional OLTP and OLAP enterprise applications still depend on RDBMS. Therefore, we expect that both NoSQL and traditional RDBMS would continue to coexist for the next decade.

Regarding the question on whether today’s databases satisfy the challenges of data management posed by the Cloud and Big Data, we should look back at database history for our answer. Recall that the RDBMS revolution enabled the conversion of business data into information, driving business processes and business intelligence. It is not sufficient for NoSQL databases to support high availability, scalability and low latency needs. They also need to provide state-of-the-art analytics, which can power business intelligence while being elastic to fit the cloud environment.

The opinion widely shared by many in the database industry is that, “The real database revolution driven by Big Data and the Cloud is just around the corner.” So we do have a lot of interesting innovations to look forward to in the database space over the next few years.

that’s very useful information you provide…thank you for it….

Cloud computing is where it’s at!

noSQL is the future

xtremly wel info….

Cool.

Here’s a quick look at the benefits you can expect from utilizing cloud computing in general, and a cloud database in particular.

http://blog.caspio.com/commentary/top-benefits-of-database-cloud-computing/

Thanks a lot for such a vast and useful information.

Databases are the base of IT. When you them, you understand things better and from the core. I myself was a Database engineer and now switched to Hadoop. I find myself performing really great due to all that knowledge.

This article is really something I would archive.

Thanks for sharing..

Thanks..

Its very useful information. Data base has always being the base of IT sector’s.

Its very important to have a database for every firm. You have written good content on this..

Thanks for great share..!!!