Technology is changing faster than styles in the fashion world, and there are many new entrants in the open source, cloud, virtualisation and DevOps spaces. Docker is one of them. The aim of this article is to give you a clear idea of Docker, its architecture and its functions, before getting started with it.

Docker is a new open source tool based on Linux container technology (LXC), designed to change how you think about workload and application deployments. It helps you easily create lightweight, self-sufficient, portable application containers that can be shared, modified and deployed to different infrastructures, such as cloud/compute servers or bare metal servers. The idea is to provide a comprehensive abstraction layer that allows developers to containerise, or package, any application and have it run on any infrastructure.

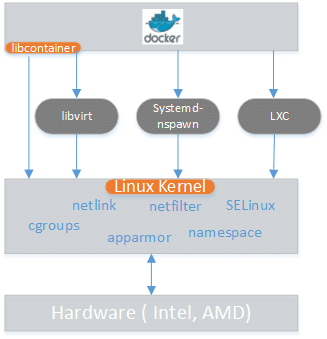

Docker is based on container virtualisation, which is not new in itself. What Docker adds is an easy way to manage the kernel-level technologies behind containers, such as LXC, cgroups and a copy-on-write filesystem, through its tools and APIs.

What is LXC (Linux Containers)?

I will not delve too deeply into what LXC is and how it works, but will just describe some major components.

LXC is an OS-level virtualisation method for running multiple isolated Linux systems, or containers, on a single host. It does this by using kernel namespaces, which isolate containers from the host and from each other. This raises an obvious security question: if I am logged in to my container as the root user, can I take over the base OS? The user namespace addresses this by mapping the users inside a container to different users on the host, so that the container's root user need not have root privileges on the host OS. Likewise, the process (PID) namespace ensures that processes in a container can see and manage only the container's own processes, not those of the host, and the network namespace gives each container its own network devices and IP addresses.
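You can inspect these namespaces on any Linux machine. The short sketch below assumes a Linux host with /proc mounted, and the function name show_namespaces is only illustrative. It prints the namespace identifiers of the current shell. Two processes are in the same namespace when the bracketed numbers match.

```shell
#!/bin/sh
# Print the namespaces that the current process belongs to.
# Each entry under /proc/self/ns is a symbolic link such as "pid:[4026531836]";
# processes that share a namespace show the same number in brackets.
show_namespaces() {
    for ns in pid net mnt uts ipc; do
        printf '%-4s %s\n' "$ns" "$(readlink "/proc/self/ns/$ns")"
    done
}

show_namespaces
```

Docker starts each container in new namespaces. So if you run the same check inside a container, for example with docker run centos readlink /proc/self/ns/pid, you should see a different number for the PID namespace.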

Cgroups

Control groups (cgroups) implement resource accounting and limiting. They can cap how much memory, CPU time and disk I/O a container consumes, and they report metrics on the resources used by the processes within the container.
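Every process on a Linux system belongs to some cgroup, and the kernel exposes this membership in /proc. The sketch below assumes a Linux host, and the function name show_cgroups is hypothetical. It prints each hierarchy, its controllers and the cgroup path of the current process.

```shell
#!/bin/sh
# List the control groups the current process belongs to.
# Each line of /proc/self/cgroup has the form  hierarchy-ID:controllers:path
# (on a cgroup v2 system there is a single line of the form 0::/path).
show_cgroups() {
    while IFS=: read -r id controllers path; do
        printf 'hierarchy=%s controllers=%s path=%s\n' \
            "$id" "${controllers:-(v2 unified)}" "$path"
    done < /proc/self/cgroup
}

show_cgroups
```

Docker sets these limits when a container starts. For example, docker run -i -t -m 512m -c 512 centos /bin/bash caps the container's memory at 512 MB and gives it a relative CPU weight of 512, half the default of 1024.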

Copy-on-write filesystem

Docker leverages a copy-on-write filesystem (currently AUFS, but other filesystems are being investigated). This allows Docker to spawn containers quickly: to put it simply, instead of making full copies of files, it uses pointers back to the existing ones.
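The idea behind copy-on-write can be modelled with two ordinary directories. The sketch below is a toy simulation, not how AUFS works internally. LOWER stands in for the shared, read-only image layer and UPPER for a container's writable layer. The helper names cow_read and cow_append are hypothetical. A file is copied into the upper layer only when it is first modified, so the image layer is never changed.

```shell
#!/bin/sh
# Toy model of a copy-on-write (union) filesystem using two directories.
# LOWER plays the read-only image layer, UPPER the container's writable layer.
# This only illustrates the idea; AUFS/overlayfs do this inside the kernel.
WORK=$(mktemp -d)
LOWER="$WORK/lower"
UPPER="$WORK/upper"
mkdir -p "$LOWER" "$UPPER"
echo "original config" > "$LOWER/app.conf"

# Read: prefer the container's own copy, fall back to the shared image layer.
cow_read() {
    if [ -e "$UPPER/$1" ]; then cat "$UPPER/$1"; else cat "$LOWER/$1"; fi
}

# Append: copy the file up into UPPER on first write, then modify only that copy.
cow_append() {
    if [ ! -e "$UPPER/$1" ] && [ -e "$LOWER/$1" ]; then
        cp "$LOWER/$1" "$UPPER/$1"
    fi
    echo "$2" >> "$UPPER/$1"
}

cow_read app.conf                       # read from LOWER; nothing copied yet
cow_append app.conf "tuned by container"
cow_read app.conf                       # now read from UPPER (two lines)
cat "$LOWER/app.conf"                   # the image layer is unchanged
```

Many containers can share one LOWER directory while each keeps its own small UPPER directory. This is why starting a container does not require copying the whole image.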

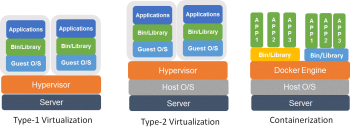

Containerisation vs virtualisation

What is the rationale behind the container-based approach, and how is it different from virtualisation? Figure 2 speaks for itself.

Containers virtualise at the OS level, whereas both Type 1 and Type 2 hypervisor-based solutions virtualise at the hardware level. Both approaches are forms of virtualisation. With VMs, a hypervisor slices up the hardware, whereas containers make protected portions of the OS available, effectively virtualising the OS itself. If you run multiple containers on the same host, no container is aware that it is sharing resources, because each container has its own abstraction. LXC uses namespaces to provide these isolated regions, known as containers. Each container runs in its own allocated namespace and has no access outside it. Technologies such as cgroups, union filesystems and container formats also play their part in containerisation.
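Containers share the host's kernel instead of booting their own, and you can check this directly. The sketch below assumes a Linux host and a reachable Docker daemon with the centos image available. If Docker is not available, it only prints the host kernel. On such a host, uname -r inside a container should report the same kernel release as on the host, whereas a VM would report its own guest kernel.

```shell
#!/bin/sh
# Containers share the host's kernel, so uname -r reports the same release
# inside a container as on the host. A VM would report its own guest kernel.
host_kernel=$(uname -r)
echo "host kernel:      $host_kernel"

# Only attempt the container check if a working Docker daemon is reachable.
if docker info >/dev/null 2>&1; then
    container_kernel=$(docker run --rm centos uname -r)
    echo "container kernel: $container_kernel"
else
    echo "docker not available; skipping the container check"
fi
```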

Linux containers

Unlike with virtual machines, LXC lets you run multiple containers from a single source OS image. LXC is very lightweight, starts faster and needs fewer resources.

Installation of Docker

Before we jump into the installation process, we should be aware of certain terms commonly used in Docker documentation.

Image: An image is a read-only layer used to build a container.

Container: This is a self-contained runtime environment built from one or more images. You can also commit the changes made in a container to create a new image.

Docker registry: This is a public or private server to which users upload their repositories of images so that they can be easily shared.

The detailed architecture is outside the scope of this article. Have a look at http://docker.io for detailed information.

Note: I am using CentOS, so the following instructions are applicable for CentOS 6.5.

Docker is part of Extra Packages for Enterprise Linux (EPEL), which is a community repository of non-standard packages for the RHEL distribution. First, we need to install the EPEL repository using the command shown below:

[root@localhost ~] # rpm -ivh http://dl.fedoraproject.org/pub/epel/6/x86_64/epel-release-6-8.noarch.rpm

As a best practice, update the system:

[root@localhost ~] # yum update -y

The package we need to install is docker-io. As I am using CentOS, my package manager is Yum; if you are on a different distribution, use the appropriate package manager command instead:

[root@localhost ~] # yum -y install docker-io
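Both the package manager and the package name vary by distribution, so a small helper can print the right command for the current host. In the sketch below, the function name is hypothetical and the package names are assumptions based on what distributions shipped at the time of writing: docker-io from EPEL on CentOS/RHEL 6, and docker.io on Debian and Ubuntu. Check your distribution's documentation before relying on them. The helper only prints the command, so you can review it before running it as root.

```shell
#!/bin/sh
# Print the Docker install command for the package manager found on this host.
# Package names are assumptions: "docker-io" (from EPEL, CentOS/RHEL 6) and
# "docker.io" (Debian/Ubuntu). Nothing is installed; the command is only printed.
docker_install_cmd() {
    if command -v yum >/dev/null 2>&1; then
        echo "yum -y install docker-io"
    elif command -v apt-get >/dev/null 2>&1; then
        echo "apt-get install -y docker.io"
    else
        echo "unsupported: install Docker with your distribution's package manager" >&2
        return 1
    fi
}

# Example: review the printed command, then run it as root.
#   docker_install_cmd
```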

Once the above installation is done, start the Docker service with the help of the command below:

[root@localhost ~] # service docker start

To ensure that the Docker service starts at each reboot, use the following command:

[root@localhost ~] # chkconfig docker on

To check the Docker version, use the following command:

[root@localhost ~] # docker version

How to create a LAMP stack with Docker

We are going to create a LAMP stack in a CentOS container, though you can use other distributions as well. First, let's get the latest CentOS image:

[root@localhost ~] # docker pull centos:latest

Next, let's make sure that we can see the image:

[root@localhost ~] # docker images centos
REPOSITORY   TAG      IMAGE ID       CREATED       VIRTUAL SIZE
centos       latest   0c752394b855   13 days ago   124.1 MB

To test the image, start a new container running a simple bash shell:

[root@localhost ~] # docker run -i -t centos /bin/bash

If everything is working properly, you'll get a simple bash prompt. As this is just a base image, we now need to install the components of the LAMP stack: Apache (httpd), MySQL and PHP:

bash-4.1# yum -y install php php-mysql mysql-server httpd

The container now has the LAMP stack. Type exit to leave the bash shell.

We are going to save this as a golden image, so that the next time we need another LAMP container, we don't need to install everything again.

Run the following command and note the CONTAINER ID of the container. In my case, the ID was 4de5614dd69c:

[root@localhost ~] # docker ps -a

The ID shown in the listing is used to identify the container you are using, and you can use this ID to tell Docker to create an image.

Run the command below to make an image of the previously created LAMP container. The syntax is docker commit <CONTAINER ID> <name>. I have used the previous container ID, which we got in the earlier step:

[root@localhost ~] # docker commit 4de5614dd69c lamp-image

Run the following command to see your new image in the list. You will find the newly created image lamp-image is shown in the output:

[root@localhost ~] # docker images
REPOSITORY   TAG      IMAGE ID       CREATED         VIRTUAL SIZE
lamp-image   latest   b71507766b2d   2 minutes ago   339.7 MB
centos       latest   0c752394b855   13 days ago     124.1 MB

Let's start a container from this image and check the PHP version:

[root@localhost ~] # docker run -i -t lamp-image /bin/bash
bash-4.1# php -v
PHP 5.3.3 (cli) (built: Dec 11 2013 03:29:57)
Zend Engine v2.3.0 Copyright (c) 1998-2010 Zend Technologies

Now, let us configure Apache.

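Inside the container, create a file called index.php in /var/www/html, which is Apache's document root on CentOS. The file needs a .php extension so that Apache passes it to PHP instead of serving the code as plain text.

If you don't want to install vi or Vim, use the echo command to write the content to the file. The single quotes stop the shell from interpreting the PHP code:

bash-4.1# echo '<?php echo "Hello world"; ?>' > /var/www/html/index.php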

Start the Apache process with the following command:

bash-4.1# /etc/init.d/httpd start

Then test it with a browser or with a command-line tool such as curl or links.
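For a scripted check, curl can fetch the page and you can look for the expected text in the response. The helper name check_page is hypothetical. The sketch assumes curl is installed and uses only its -s (silent) and -f (fail on HTTP errors) options.

```shell
#!/bin/sh
# Fetch a URL with curl and check that the response contains the expected text.
# -s hides the progress meter; -f makes curl exit non-zero on HTTP errors (4xx/5xx).
check_page() {
    url=$1
    expected=$2
    body=$(curl -sf "$url") || { echo "FAIL: could not fetch $url" >&2; return 1; }
    case $body in
        *"$expected"*) echo "OK: found '$expected' at $url" ;;
        *) echo "FAIL: '$expected' not found at $url" >&2; return 1 ;;
    esac
}

# Example, run inside the container once httpd is up:
#   check_page http://localhost/ "Hello world"
```

Because check_page returns a non-zero status on failure, you can also use it in other shell scripts, for example to wait until Apache is ready.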

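If you're running Docker inside a VM, you'll also need to forward the published port on the VM to a port on the VM's host machine. Docker itself can publish a container's ports on the Docker host, but the mapping must be specified when the container is started. For example, the following command starts a new container from lamp-image with port 80 in the container mapped to port 8080 on the Docker host:

[root@localhost ~] # docker run -i -t -p 8080:80 lamp-image /bin/bash

Inside this container, create the index file and start Apache as before. The page is then reachable at http://localhost:8080/ from the Docker host.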

For detailed information on Docker and other technologies related to container virtualisation, check out the links given under References.

References

[1] Docker: https://docs.docker.com/

[2] LXC: https://linuxcontainers.org/