Containers offer an easy way of deploying traditional applications, cutting-edge microservices and Big Data apps anywhere. In enterprises in which a large number of Docker containers may be in use, Kubernetes is one of the best orchestration tools to choose. Migrating application workloads to containers has many advantages for enterprises, such as profitability, scalability and security.

It was only a matter of time before containers changed the way enterprise cloud infrastructure was built and packaged. Containers have promoted and supported the concept of modularity, allowing the components of an application to have life cycles that are independent of one another. They’re now used intensively with cloud orchestration and are therefore increasingly critical for enterprises looking to scale up and succeed with their cloud infrastructure.

Cloud orchestration

Orchestration refers to the process of automating the deployment, management and scaling of containers. It is imperative for managing the life cycles of containers in large environments. The most popular container orchestration tools today are Kubernetes, Marathon for Mesos and Docker Swarm. These tools are driven by configuration files, which point to the container images and establish networking between containers. The files are kept under version control and branched, so that the same applications can be deployed across various environments before they are deployed to the production clusters.
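As a rough illustration of such a configuration file, the Python sketch below builds a Kubernetes Deployment manifest as a plain dictionary and prints it as JSON, a format kubectl accepts alongside YAML. The application name, the registry URL, the port and the build_deployment helper are all hypothetical; only the manifest field names follow the apps/v1 Deployment format.

```python
import json


def build_deployment(name, image, replicas, environment):
    """Return a Kubernetes Deployment manifest as a plain dict.

    The helper itself is hypothetical; the field names follow the
    apps/v1 Deployment format.
    """
    labels = {"app": name, "env": environment}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": f"{name}-{environment}", "labels": labels},
        "spec": {
            "replicas": replicas,
            # The selector tells the Deployment which pods it owns.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            # The manifest points at a container image.
                            "image": image,
                            # Port the container listens on (assumed value).
                            "ports": [{"containerPort": 8080}],
                        }
                    ]
                },
            },
        },
    }


# The same image is described for two environments; only the
# environment label and the replica count differ.
IMAGE = "registry.example.com/web:1.4.2"  # hypothetical image reference
staging = build_deployment("web", IMAGE, 1, "staging")
production = build_deployment("web", IMAGE, 3, "production")

if __name__ == "__main__":
    print(json.dumps(production, indent=2))
```

Keeping the per-environment differences down to a few parameters is one way to make sure that what was tested in staging is what reaches production.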

Business advantages of the migration

The main reason for businesses to migrate to containers is that they offer a cost advantage. This is accomplished through a number of avenues.

- Lower development costs: Containers are portable and platform-independent, which eliminates the need to modify modules to make them compatible across various environments. This makes organisations more flexible, speeding up the development process.

- Better security: Since containers are isolated from each other, a module that crashes or is compromised is far less likely to affect the other modules.

- Smooth scaling: Containers allow horizontal scaling. This allows you to run only as many containers as you need at any given time, reducing your costs drastically.
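To give a feel for how horizontal scaling decisions can be made, here is a minimal sketch based on the scaling formula documented for the Kubernetes Horizontal Pod Autoscaler: the desired replica count is the current count multiplied by the ratio of the observed metric to its target, rounded up. The function name, the minimum and maximum bounds and the sample numbers are assumptions for illustration, and the real autoscaler applies further rules (such as a tolerance band) that are left out here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Simplified form of the Horizontal Pod Autoscaler formula:

        desired = ceil(current_replicas * current_metric / target_metric)

    The result is clamped to the [min_replicas, max_replicas] range.
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Four containers at 90 per cent average CPU against a 60 per cent target.
scale_up = desired_replicas(4, 90, 60)      # 6

# Six containers at 20 per cent average CPU against the same target.
scale_down = desired_replicas(6, 20, 60)    # 2

# A large spike is capped by max_replicas.
capped = desired_replicas(4, 300, 60)       # 10

if __name__ == "__main__":
    print(scale_up, scale_down, capped)
```

Scaling down in quiet periods is where the cost saving comes from, since capacity is not kept running for load that is not there.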

Docker has been fairly successful, with almost every enterprise trying it out and more than 66 per cent of them adopting it thereafter. Its adoption continues to increase rapidly, with major corporations like PayPal, Uber, Spotify (which has more than 300 servers up and running) and even institutes like Cornell University choosing to adopt it. It is highly popular among most enterprises due to its support for multiple languages, and has a host of other benefits, such as:

- It considerably reduces the infrastructure resources required, helping organisations to save on a number of fronts, including server costs and employee costs.

- Container orchestration is already a speedy process, but Docker is able to deploy software and applications in seconds.

Kubernetes

Kubernetes is a popular open source orchestration tool for Docker containers and has grown rapidly over the past few years, not just as a tool but also as a community, with more services, support and assisting tools now widely available. A number of cloud native Kubernetes distributions, like Azure Kubernetes Service, Google Kubernetes Engine, Amazon Elastic Kubernetes Service and DigitalOcean Kubernetes (DOKS), are being widely adopted to deploy and manage containers.

Using cloud native Kubernetes distribution: The business advantages

Cloud native Kubernetes distributions have lightened the enterprise workload by a considerable amount by automating the deployment process.

- All distributions, be it Azure, Amazon EKS or DigitalOcean, have a set of battle-tested host images, optimised for Docker and Kubernetes.

- Security is well catered for in cloud native distributions, especially when containers are involved. Microsoft Azure has one of the most advanced security technologies backing it (courtesy Microsoft) with tools like multi-factor authentication and advanced threat analytics.

- Kubernetes distributions support an array of languages and are not heavy on the pocket either. DigitalOcean gets you an SSD based VM for just US$ 5, with 512MB RAM, 20GB disk space and 1TB transfers.

- Kubernetes ensures that even after undesirable events, the system heals itself so that its actual state keeps matching the state you asked for. For example, if you create four replicas of an application and one gets deleted or destroyed manually, Kubernetes will bring one back so that the same number of replicas continues to run. This autonomous process is called a control loop: a controller repeatedly compares the observed state with the desired state and corrects any difference.

- The concept of decoupled microservice architectures was consolidated through Kubernetes. While building a microservice, do note that one microservice can be utilised by several teams for their service implementation. Pods, which are groups of containers, bring together the images developed by different teams into one deployable unit.

- The criticality of logs is apparent to anyone responsible for debugging programs. Kubernetes automatically captures whatever a container writes to its standard output and standard error streams, and makes it available through the kubectl logs command.
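The self-healing behaviour described in this list can be sketched in a few lines of Python. This is a toy model rather than how Kubernetes is implemented: the reconcile function, the replica names and the in-memory list standing in for the cluster are all assumptions made for illustration.

```python
import itertools


def reconcile(desired_count, running, new_name):
    """One pass of a control loop.

    Compares the desired number of replicas with the replicas that are
    observed to be running, then starts or stops replicas to close the gap.
    """
    running = list(running)
    while len(running) < desired_count:
        running.append(new_name())   # start a replacement replica
    while len(running) > desired_count:
        running.pop()                # stop a surplus replica
    return running


# Hypothetical naming scheme for new replicas: web-1, web-2, ...
_counter = itertools.count(1)


def new_name():
    return f"web-{next(_counter)}"


# Ask for four replicas; the first pass starts all four.
replicas = reconcile(4, [], new_name)

# One replica is destroyed manually...
replicas.remove("web-2")

# ...and the next pass notices the gap and starts a replacement.
replicas = reconcile(4, replicas, new_name)

if __name__ == "__main__":
    print(replicas)
```

The point of the pattern is that the loop never needs to know why a replica disappeared. It only compares what should exist with what does exist, which is why the same mechanism covers crashes, manual deletions and node failures alike.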

Kubernetes is a relatively novel concept. With the community around it and their skillsets still evolving, it can be potentially difficult to implement the concept of containers for existing applications. Working with Kubernetes requires a certain degree of team reorganisation and some additional employees with the desired skillsets, as it can be hard to set up and configure.

Considerations

While diving into Kubernetes, there are a number of factors an enterprise must keep in mind to avoid any roadblocks.

- Start afresh for legacy applications: Legacy applications are typically complete, stable systems that were not designed with containers in mind. Instead of breaking down the entire application piece by piece, it is often easier to either create the application from scratch or re-architect the code base for containerisation.

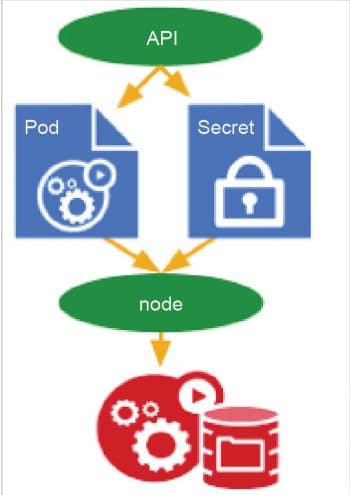

- Use secure secrets: Kubernetes has a built-in secrets management mechanism, which is the recommended way of keeping API keys and tokens out of container images and application code. Users only need to define the secret in the cluster and reference it from the pod.

- Set resource constraints: It is critical to set the appropriate resource constraints. Setting them too low causes obvious problems, while asking for too much may turn out to be expensive and wasteful. If no limit is specified, the pod can consume as much of the node's resources as it can get, which could ultimately crash the entire node.

- Keep up with API changes: Kubernetes APIs may change frequently over multiple version updates due to the large community and open source base. It’s advisable to refer to the current documentation for the latest information, since the tutorials you are following may be outdated. What might have been acceptable a couple of versions ago can be obsolete now. This happens frequently with storage, since newer ways to consume persistent storage in a K8s cluster keep popping up.
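The considerations on secrets and resource constraints can be made more concrete with a small sketch. The Python code below builds a Secret manifest and a Pod manifest that reads the secret through an environment variable and declares resource requests and limits. The names, the image, the key value and the CPU and memory figures are all hypothetical, and the manifest field names follow the v1 Secret and Pod formats as of this writing, so they should be checked against the current documentation. Note that values in a Secret's data field are base64-encoded, which is an encoding and not encryption.

```python
import base64
import json


def build_secret(name, values):
    """Return a v1 Secret manifest; values are base64-encoded, not encrypted."""
    encoded = {
        key: base64.b64encode(value.encode("utf-8")).decode("ascii")
        for key, value in values.items()
    }
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name},
        "type": "Opaque",
        "data": encoded,
    }


def build_pod(name, image, secret_name, secret_key):
    """Return a v1 Pod manifest that uses the secret and sets resource bounds."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # Attach the secret to the pod as an environment variable.
                    "env": [
                        {
                            "name": "API_KEY",
                            "valueFrom": {
                                "secretKeyRef": {
                                    "name": secret_name,
                                    "key": secret_key,
                                }
                            },
                        }
                    ],
                    # Requests are what the scheduler reserves; limits are
                    # the ceiling the container may not exceed.
                    # The figures are illustrative only.
                    "resources": {
                        "requests": {"cpu": "250m", "memory": "128Mi"},
                        "limits": {"cpu": "500m", "memory": "256Mi"},
                    },
                }
            ]
        },
    }


secret = build_secret("web-credentials", {"api-key": "not-a-real-key"})
pod = build_pod("web", "registry.example.com/web:1.4.2",
                "web-credentials", "api-key")

if __name__ == "__main__":
    print(json.dumps(secret, indent=2))
    print(json.dumps(pod, indent=2))
```

Because the API key lives only in the Secret, the Pod manifest can be committed to version control without exposing it, and the explicit limits keep a misbehaving container from starving its neighbours on the same node.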

Migrating workloads to the cloud and containers can seem like a daunting task. However, with Docker and Kubernetes and their well documented tutorials, the process is certainly made a lot easier. With industry support behind both (Google designed Kubernetes and Docker has alliances with Red Hat and Microsoft), security, safety and reliability are more than reasonably assured.