DevOps is to IT what IoT is to manufacturing. Both are disrupting the status quo and taking the world closer to automation. Let us look at one of the biggest drivers of this revolution: Kubernetes.

Containers have made a visible impact on software development over the last six years. They can co-locate, encapsulate and isolate application environments while abstracting away the complexity of the underlying OS and physical hardware. The result is a transformative shift in data centre operations, from machine-centric to application-centric. However, the manual effort required to manage a fleet of containers hinders their large-scale deployment and proliferation. Docker Swarm attempted to solve this problem but lost out to the Goliath, Kubernetes, as innumerable users will vouch. Docker Inc. itself now ships Kubernetes in its distribution alongside Docker Swarm, an implicit endorsement of Kubernetes' capabilities. This should not surprise anybody.

Kubernetes was officially launched in 2014, but its predecessor, Borg, has a reputation for running millions of jobs in isolated, application-led environments across Google's data centres, which host all of Google's offerings. As containers gained ground, Google applied the lessons learned from Borg to a new system written in Go and named it Kubernetes. It then donated Kubernetes to the Cloud Native Computing Foundation (CNCF), an open source foundation that now hosts the project.

It is an injustice to ‘stereotype’ Kubernetes as a plain container orchestration tool. Undoubtedly, it does that work very well. But it does much more than simply run containers.

It heals (self-healing): Applications fail, and what matters is the impact of that failure and the time it takes to return to normalcy. Kubernetes solves this problem to a great extent. If a pod (the group of one or more containers that actually runs the application) fails, Kubernetes replaces it so that the desired number of replicas and the desired state of the pods are maintained. Replacements are often in place before an end user realises that an instance of an application or service has suffered an outage. Its monitoring capabilities also help administrators respond to outages quickly and with focus.
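
The self-healing behaviour rests on a simple idea: a controller repeatedly compares the actual state with the desired state and acts to close the gap. The sketch below is a toy model of that loop, not Kubernetes code; the `Pod` and `ReplicaController` classes and their fields are hypothetical, and a real ReplicaSet controller works through the API server rather than an in-memory list.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class Pod:
    """Hypothetical, simplified pod: just an ID and a health flag."""
    pod_id: int
    healthy: bool = True


@dataclass
class ReplicaController:
    """Toy controller that keeps `desired_replicas` healthy pods running."""
    desired_replicas: int
    pods: list = field(default_factory=list)
    _ids: count = field(default_factory=lambda: count(1))

    def reconcile(self) -> list:
        """One pass of the observe/compare/act loop; returns the actions taken."""
        actions = []
        # Observe: drop pods that have failed.
        for pod in [p for p in self.pods if not p.healthy]:
            self.pods.remove(pod)
            actions.append(f"removed pod {pod.pod_id}")
        # Compare and act: create pods until actual matches desired...
        while len(self.pods) < self.desired_replicas:
            pod = Pod(next(self._ids))
            self.pods.append(pod)
            actions.append(f"created pod {pod.pod_id}")
        # ...or delete surplus pods if the desired count was lowered.
        while len(self.pods) > self.desired_replicas:
            pod = self.pods.pop()
            actions.append(f"deleted pod {pod.pod_id}")
        return actions
```

Marking one pod unhealthy and calling `reconcile()` again removes it and creates a replacement, which is the essence of "the number of replicas is always maintained". Running the loop continuously is what lets recovery happen without a human in the loop.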

It deploys faster: A major advantage of K8s is its deployment speed. In every Kubernetes case study that I went through, users acknowledged that faster deployment shortened their development cycles. Whether deployment times dropped from five days to five hours or from one hour to five minutes, users were deeply impressed by the shift Kubernetes brings to operations, processes and work culture. It takes away much of the complexity of managing infrastructure, so developers can focus on their code rather than the environment. Combined with DevOps tools like Jenkins, Terraform and Ansible, the value Kubernetes adds is unmatched.

It uses resources better: After human resources, IT infrastructure forms the bulk of the operational expenses of keeping a business running, so under-utilised resources hurt any company financially. Virtualisation gained market share on the promise of better hardware usage, and Kubernetes takes this to the next level. Running applications and services in containers instead of VMs lets the same hardware carry a bigger workload. Users have reported 40 to 80 per cent increases in resource utilisation with Kubernetes; reported examples include CPU utilisation rising from 4 per cent to 40 per cent, and a fleet shrinking from 2,600 VMs to just 40. This translates into large cost savings on Amazon EC2 and Google Compute Engine (GCE).
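
One way to see why packing containers onto shared nodes raises utilisation is a bin-packing sketch. The example below uses first-fit decreasing, a classic heuristic swapped in purely for illustration; the real Kubernetes scheduler filters and scores nodes instead. The CPU requests (in millicores) and the 4,000-millicore node size are made-up numbers, and the sketch counts requested CPU, not measured usage.

```python
def first_fit_decreasing(requests, node_capacity):
    """Pack CPU requests onto identical nodes, largest request first.

    Returns a list of nodes, each a list of the requests placed on it.
    """
    nodes = []
    for req in sorted(requests, reverse=True):
        if req > node_capacity:
            raise ValueError(f"request {req} exceeds node capacity {node_capacity}")
        # Place the request on the first node with enough room left.
        for node in nodes:
            if sum(node) + req <= node_capacity:
                node.append(req)
                break
        else:
            # No existing node fits, so open a new one.
            nodes.append([req])
    return nodes


def utilisation(total_requested, machine_count, capacity):
    """Fraction of provisioned capacity that is actually requested."""
    return total_requested / (machine_count * capacity)


# Hypothetical workloads, in millicores of CPU.
workloads = [500, 250, 1000, 750, 250, 500, 1500, 250]
packed = first_fit_decreasing(workloads, node_capacity=4000)

# One dedicated 4000m machine per workload vs. shared, packed nodes.
dedicated = utilisation(sum(workloads), len(workloads), 4000)
shared = utilisation(sum(workloads), len(packed), 4000)
```

With these made-up numbers the eight workloads fit on two nodes instead of eight machines, so utilisation of provisioned capacity rises from about 16 per cent to about 63 per cent. The exact gain in any real cluster depends on workload shapes and on how well requests match actual usage.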

It scales: What happens when you try to fix a watch with a sledgehammer? If there are just a few tens of containers to manage, Kubernetes could be a liability, and Docker Swarm may be the better choice. Kubernetes is built for bigger tasks. At the scale of 75 million users, it delivers: one reported deployment handled 28 million requests in 10 minutes with 100 per cent uptime. One can imagine the thrill engineers feel on seeing a setup up and running in a few minutes, when it would previously have taken nine months to provision and build and would still have suffered downtime during peak load. Google itself starts some 2 billion containers every week, though these run on Borg, the internal system whose lessons shaped Kubernetes.
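
Much of this elasticity comes from autoscaling. Kubernetes' Horizontal Pod Autoscaler documents its core rule as desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), skipping changes that fall within a tolerance (0.1 by default). The sketch below implements only that rule; the real autoscaler also clamps to min/max replicas and applies stabilisation windows. The starting 50 pods and the 500 requests-per-second target per pod are hypothetical; only the 28-million-requests figure comes from the text.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, tolerance: float = 0.1) -> int:
    """Replica count suggested by the HPA scaling rule.

    desired = ceil(current_replicas * current_metric / target_metric),
    except that ratios within `tolerance` of 1.0 leave the count unchanged.
    """
    if current_replicas <= 0 or target_metric <= 0:
        raise ValueError("replicas and target metric must be positive")
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough: avoid flapping
    return math.ceil(current_replicas * ratio)


# 28 million requests in 10 minutes is roughly 46,667 requests per second.
requests_per_second = 28_000_000 / (10 * 60)
per_pod = requests_per_second / 50  # assume 50 pods are currently serving
```

Under these assumptions each pod sees about 933 requests per second, so `desired_replicas(50, per_pod, target_metric=500)` asks for 94 pods. The tolerance band is a deliberate design choice: it stops small metric fluctuations from triggering constant scale-up and scale-down.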

It automates and monitors: Users sing Kubernetes' praises because it automates virtually everything they would otherwise spend months writing code for. From provisioning containers to deploying services, and from rolling out patches to moving pods to other nodes when one fails, Kubernetes does it all automatically. It also surfaces container logs to help with basic debugging.
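
Rolling out patches without downtime is handled by a Deployment's rolling update, which is bounded by two settings, `maxSurge` (how many extra pods may exist) and `maxUnavailable` (how many pods may be missing). The sketch below simulates that process with absolute counts only; it assumes every new pod becomes ready instantly and ignores percentages and rounding, so it is a simplified model rather than the Deployment controller's actual algorithm.

```python
def rolling_update(replicas: int, max_surge: int, max_unavailable: int):
    """Return (old_pods, new_pods) after each step of a simplified rolling update.

    Invariants kept at every step:
      old + new <= replicas + max_surge         (surge budget)
      old + new >= replicas - max_unavailable   (availability floor)
    Assumes new pods are ready as soon as they are created.
    """
    if max_surge == 0 and max_unavailable == 0:
        raise ValueError("max_surge and max_unavailable cannot both be 0")
    old, new = replicas, 0
    steps = [(old, new)]
    while old > 0 or new < replicas:
        # Scale up new pods as far as the surge budget allows.
        new += min(replicas - new, replicas + max_surge - (old + new))
        # Scale down old pods while staying above the availability floor.
        old -= min(old, old + new - (replicas - max_unavailable))
        steps.append((old, new))
    return steps
```

For example, `rolling_update(4, 1, 0)` replaces pods one at a time, stepping from (4, 0) to (0, 4) while never dropping below four available pods; allowing one unavailable pod as well lets it finish in fewer, larger steps.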

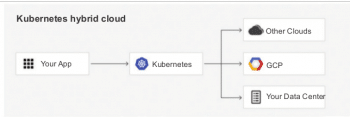

It frees users from vendor and technology lock-in: All looks good, but what if a vendor fails to deliver? No problem. Kubernetes is free from vendor lock-in. It is maintained by a very active open source community backed by major players, and it is built to run on any platform: on-premise, in the cloud (including as a managed service), or across both. For example, running normal operations on-premise while placing replicas on different public clouds to absorb bursts or failures not only provides fault tolerance but also frees users from dependence on any single vendor.

Most of the benefits that the cloud offers are available in Kubernetes, and it offers much more besides. Organisations that have been jittery about moving to the cloud due to various concerns are bullish on the possibilities Kubernetes offers them. It makes possible an architecture with the same elasticity, flexibility, scalability and performance as the cloud, yet on-premise.

With the introduction of features like cluster federation and the Dashboard UI, the possibilities are only increasing. Kubernetes has given some food for thought to those who felt that the cloud would make on-premise data centres extinct. It has breathed new life into on-premise services and may also lead to the rise of ‘bare metal’ deployments.