The rise of quantum computing threatens to break much of today’s public-key cryptography. Post-quantum cryptography, advanced threat detection methodologies and related techniques are being developed to counter security threats in the quantum era.

Digital security is undergoing a profound transformation as quantum computing transitions from theoretical promise to practical reality. For security professionals, researchers, and IT leaders, understanding quantum security has become essential. Quantum computers, with their immense computational power, threaten to undermine the foundations of classical cryptography, making previously unbreakable encryption susceptible to rapid decryption.

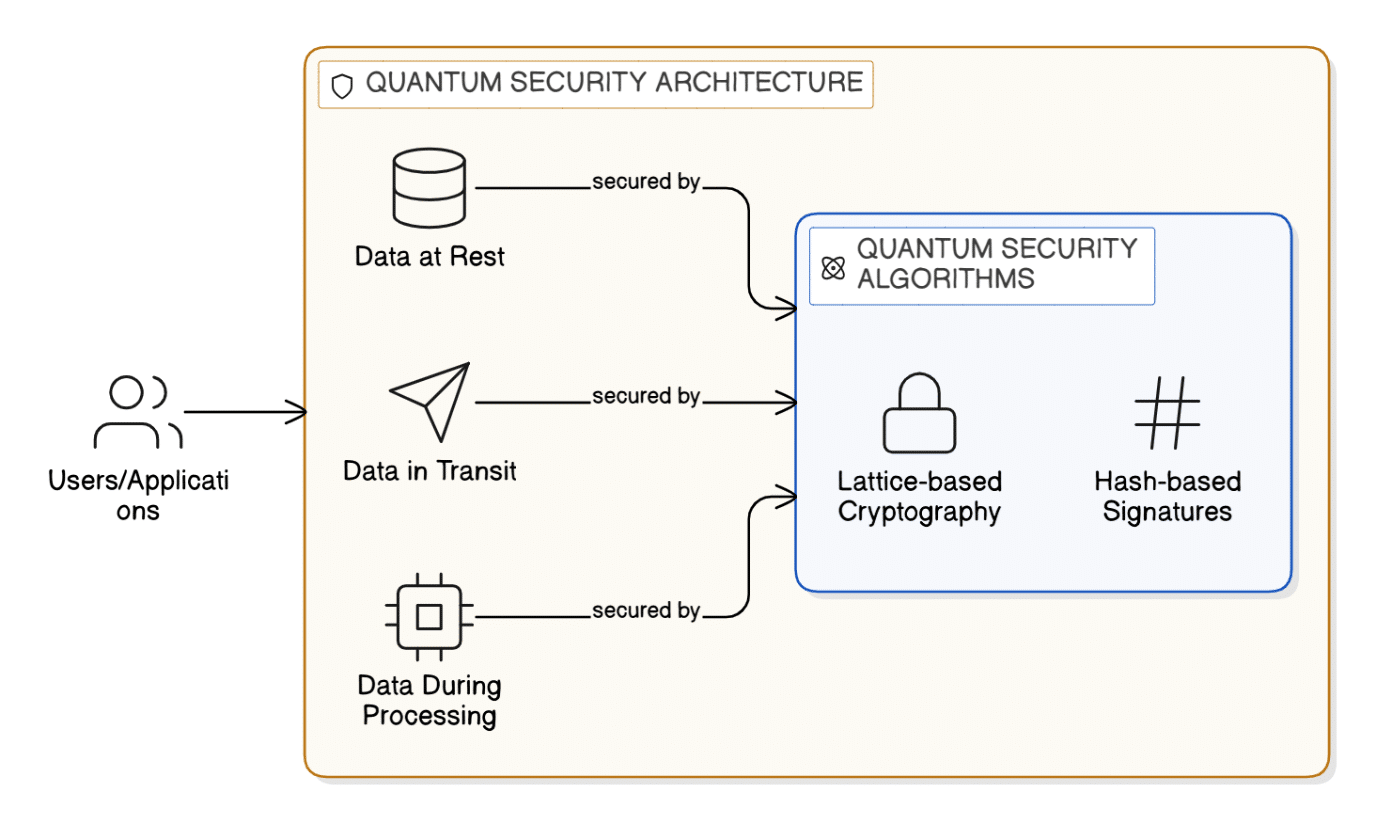

Quantum security algorithms are poised to fundamentally reshape the way we think about safeguarding information in the digital age. Unlike traditional cryptographic methods, which often depend on the immense difficulty of solving mathematical puzzles using classical computers, quantum-resistant algorithms are designed with the express intent of surviving the powerful capabilities of quantum machines. This distinction is crucial, as quantum computers threaten to render many established encryption techniques obsolete by solving problems once considered infeasible in a fraction of the time.

What sets quantum security algorithms apart is their proactive approach to anticipating the evolving threat landscape. Rather than reacting to emerging risks, these algorithms are conceived to withstand the unique and powerful attack vectors unleashed by quantum computing. By embedding resilience into the very foundation of cryptographic protocols, they help ensure that confidential information, critical communications, and essential services remain protected, regardless of technological advances. As organisations and governments move towards adopting these standards, quantum security algorithms are becoming an indispensable cornerstone for the future of data protection, inspiring confidence in the continued safety of digital interactions and transactions.

Post-Quantum Cryptography (PQC) represents a pivotal advancement in the ongoing evolution of cryptographic security, emerging in direct response to the accelerating development of quantum computing technologies. The primary aim of PQC is to develop and standardise cryptographic algorithms that can withstand the unprecedented computational capabilities of quantum machines. As traditional public key cryptosystems, which currently underpin secure communications and digital trust, face obsolescence in the quantum era, the adoption of robust PQC algorithms is essential for maintaining the confidentiality, authenticity, and integrity of sensitive information.

Key algorithms shaping the post-quantum cryptography landscape

| Algorithm | Features | Common use cases |
| --- | --- | --- |
| Lattice-based cryptography algorithms | Built on the mathematical difficulty of lattice problems, these algorithms are designed to be resistant to both classical and quantum cyber attacks. They offer strong security guarantees and can be efficiently implemented, making them one of the most promising approaches for future cryptographic standards. | Securing digital communications, protecting confidential data, and serving as the foundation for advanced encryption and digital signature schemes in quantum-resilient systems. |
| Shor’s algorithm | Unlike the others, Shor’s algorithm is a quantum algorithm that can efficiently factor large integers and solve discrete logarithms. Its primary significance lies in its ability to break widely used classical encryption techniques, such as RSA and ECC, if run on a sufficiently powerful quantum computer. | Primarily a threat model for existing cryptographic systems, Shor’s algorithm is not used for securing data but serves as the catalyst for the development and adoption of quantum-resistant cryptography. |
| CRYSTALS-Kyber algorithm | This is a lattice-based key encapsulation mechanism that utilises the module variant of the Learning With Errors problem (Module-LWE), for which no efficient quantum attack is known. It is known for efficient key exchange and strong security even in the presence of quantum adversaries. | Ideal for establishing secure shared secrets over insecure channels, such as in encrypted messaging platforms and secure online transactions, ensuring that eavesdroppers cannot derive the exchanged keys. |
| CRYSTALS-Dilithium algorithm | Also founded on lattice-based constructs, Dilithium is a digital signature scheme relying on Module-LWE and Module-SIS problems. It offers a blend of robust security and practical performance, enabling swift signature generation and verification. | Widely used for authenticating software updates, verifying transactions, and ensuring the integrity and origin of digital communications, especially in environments where resistance to quantum attacks is paramount. |

Quantum security algorithms — the foundation of data protection in the future

Lattice-based cryptography algorithms

Quantum-resistant schemes draw upon advanced branches of mathematics to create formidable barriers against even the most sophisticated quantum attacks. Lattice-based cryptography leverages the hardness of lattice problems, for which no efficient classical or quantum algorithm is known. Similarly, hash-based cryptography relies on the one-way nature of cryptographic hash functions, making it infeasible for adversaries to recover an input from its hash. Code-based and multivariate polynomial-based schemes further expand this repertoire, offering a variety of mechanisms that collectively strengthen the protective framework around sensitive digital assets.
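To make the hash-based idea concrete, the following is a minimal sketch of a Lamport one-time signature, one of the simplest hash-based constructions. It is an illustration only: the function names are hypothetical, each key pair must sign a single message, and production hash-based schemes are considerably more elaborate.

```python
import hashlib
import secrets


def _h(data: bytes) -> bytes:
    """One-way function used throughout: SHA-256."""
    return hashlib.sha256(data).digest()


def _digest_bits(message: bytes) -> list:
    """The 256 bits of the message digest, most significant bit first."""
    return [(byte >> shift) & 1 for byte in _h(message) for shift in range(7, -1, -1)]


def lamport_keygen():
    """Private key: 256 pairs of random values. Public key: their hashes."""
    private = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    public = [(_h(zero), _h(one)) for zero, one in private]
    return private, public


def lamport_sign(private, message: bytes) -> list:
    """Reveal one secret value per digest bit. A key pair must sign only once."""
    return [private[i][bit] for i, bit in enumerate(_digest_bits(message))]


def lamport_verify(public, message: bytes, signature) -> bool:
    """Hash each revealed value and compare it with the published hash."""
    bits = _digest_bits(message)
    if len(signature) != len(bits):
        return False
    return all(_h(value) == public[i][bit]
               for i, (value, bit) in enumerate(zip(signature, bits)))
```

Forging a signature would require inverting SHA-256 on the unrevealed values, which is why security rests only on the hash function and not on factoring or discrete logarithms.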

Shor’s algorithm

The critical security threat posed by quantum computing arises from Shor’s algorithm, a groundbreaking quantum algorithm devised by mathematician Peter Shor. Shor’s algorithm efficiently factors large integers and computes discrete logarithms, tasks that are prohibitively time-consuming for classical computers. This efficiency directly undermines the security basis of widely used public key systems such as RSA, which relies on the difficulty of factoring large numbers, and Elliptic Curve Cryptography (ECC), which depends on the hardness of the discrete logarithm problem over elliptic curves. In theory, a sufficiently powerful quantum computer running Shor’s algorithm could break these systems in a matter of hours, rendering encrypted communications, digital signatures, and key exchanges vulnerable to interception and forgery. This imminent risk has accelerated the global search for quantum-resistant alternatives to current cryptographic standards.
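The link between factoring and Shor’s algorithm can be sketched classically. Shor’s quantum speed-up lies in finding the multiplicative order of a number modulo N; the rest is ordinary arithmetic. In the sketch below the order is found by brute force, which is only feasible for tiny numbers and stands in for the quantum step. The function names are illustrative.

```python
from math import gcd


def multiplicative_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1.

    Brute force here; this is the step a quantum computer would accelerate.
    """
    if gcd(a, n) != 1:
        raise ValueError("a must be coprime to n")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(n: int, a: int):
    """Try to split n using the order of a modulo n.

    Returns a pair of non-trivial factors, or None if this choice of a fails
    (in which case another a would be tried).
    """
    shared = gcd(a, n)
    if shared != 1:
        return shared, n // shared  # a already shares a factor with n
    r = multiplicative_order(a, n)
    if r % 2 == 1:
        return None  # an odd order gives no square root of 1 to exploit
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial square root of 1, no factor revealed
    p = gcd(half - 1, n)
    return p, n // p
```

For example, `factor_from_order(15, 7)` finds that 7 has order 4 modulo 15 and recovers the factors 3 and 5. For an RSA-sized modulus the brute-force loop is hopeless, which is exactly the gap a sufficiently powerful quantum computer would close.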

CRYSTALS-Kyber algorithm

PQC algorithms based on lattice problems are believed to be resistant to both classical and quantum attacks. Among these, CRYSTALS-Kyber has gained significant attention as a key encapsulation mechanism (KEM) designed for secure key exchange. This algorithm utilises the hardness of the Module Learning With Errors (Module-LWE) problem, a structured variant of LWE for which no efficient quantum solution is known. In practice, when two parties wish to establish a shared secret over an insecure channel, CRYSTALS-Kyber enables them to exchange messages in such a way that an eavesdropper, even with access to a quantum computer, cannot feasibly compute the secret key. For instance, in a secure messaging application, Alice can use Kyber to generate a key pair and share the public key with Bob. Bob then uses this public key to encapsulate a secret, sending the resulting ciphertext back to Alice. Only Alice, with her private key, can decapsulate the ciphertext and retrieve the shared secret. This ensures confidentiality in the presence of quantum threats and, when a fresh key pair is generated for each session, forward secrecy.

CRYSTALS-Dilithium algorithm

Complementing key exchange mechanisms, secure digital signatures are indispensable for authentication and non-repudiation in digital transactions. The CRYSTALS-Dilithium algorithm, also founded on lattice-based cryptography, establishes a quantum-resistant digital signature scheme. Built on the Module-LWE and Module-SIS problems, it offers strong security guarantees and efficient implementation. In a typical scenario, an organisation may use Dilithium to sign software updates before distribution. The developer generates a signature using their private key, which is then attached to the update package. Recipients, such as end users or automated systems, can verify the authenticity of the update using the corresponding public key, confident that even a quantum-enabled adversary cannot forge signatures or impersonate the sender. This ensures the integrity and provenance of critical software, protecting against tampering and supply chain attacks.

As quantum computing continues to advance, the necessity for robust post-quantum cryptography solutions becomes ever more apparent. Understanding the distinguishing features, strengths, and typical applications of various algorithms is essential for organisations seeking to safeguard their digital assets against future quantum-enabled threats (see Table).

Lattice-based cryptography stands out for its flexibility and strong quantum resistance, making it a cornerstone for next-generation encryption and signature mechanisms. In contrast, Shor’s algorithm represents a pivotal breakthrough in quantum computing, serving as a reminder of the vulnerabilities in current cryptographic standards and driving the urgent transition to post-quantum solutions. CRYSTALS-Kyber offers a practical and secure method for exchanging keys, ensuring confidentiality in the face of both classical and quantum threats. Meanwhile, CRYSTALS-Dilithium guarantees authenticity and non-repudiation for digital signatures, addressing the growing need for trust in software distribution and electronic transactions.

The quantum-resistant algorithms among these have been standardised by NIST (CRYSTALS-Kyber as ML-KEM and CRYSTALS-Dilithium as ML-DSA) and are being integrated into security protocols, providing a path for organisations to transition away from vulnerable systems and embrace quantum-resilient architectures.

Post-quantum readiness assessment

As quantum threats loom on the horizon, assessing organisational readiness for post-quantum security becomes imperative. A thorough readiness assessment involves reviewing existing cryptographic assets, identifying vulnerable systems, and estimating the exposure to quantum-enabled attacks. This evaluation extends to strategic planning, resource allocation, and workforce training, ensuring that organisations are equipped to implement quantum-resistant solutions. The importance of such assessments cannot be overstated, as proactive preparation is key to mitigating risks and maintaining operational resilience. Effective readiness assessments enable security leaders to construct roadmaps for migration, prioritise critical assets, and align business objectives with emerging technological demands.

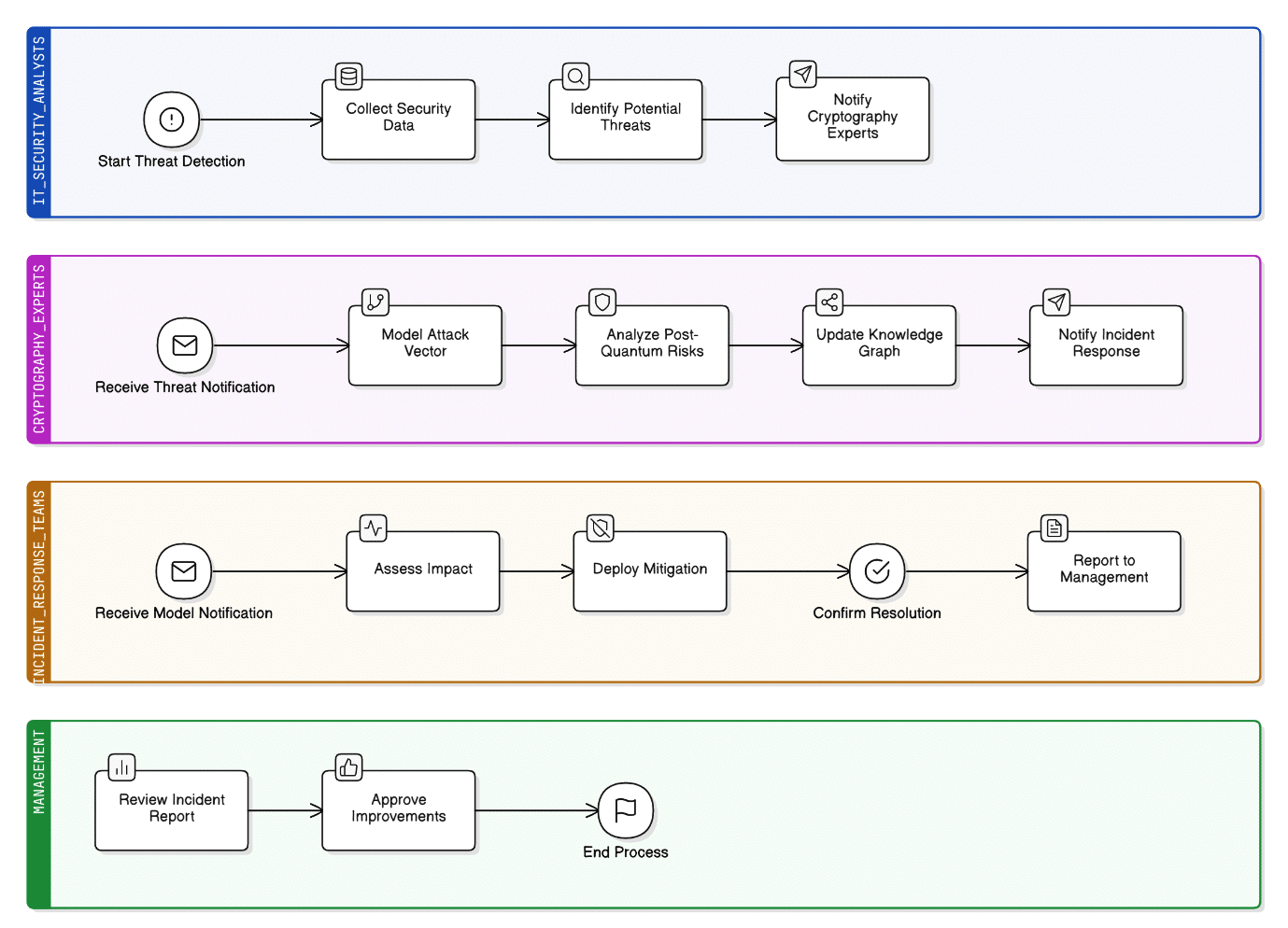

Threat detection and attack modelling using knowledge graphs

Threat detection and attack modelling in the age of quantum computing require innovative strategies that go beyond traditional security approaches. One such advancement is the use of knowledge graphs, which provide a sophisticated means to map out and understand the intricate web of relationships between entities, events, and vulnerabilities within an organisation’s digital environment. Knowledge graphs offer a structured framework, enabling security teams to integrate and analyse vast amounts of data from multiple sources. This interconnected representation allows for the identification of nuanced patterns and hidden correlations that might otherwise remain undetected, especially those associated with quantum-enabled threats.

By leveraging knowledge graphs, organisations can simulate potential attack vectors, including those that exploit the unique capabilities of quantum technology. These models help security professionals anticipate how quantum adversaries might target critical assets, exposing gaps that need to be addressed before they can be exploited. The dynamic nature of attack modelling using knowledge graphs supports continuous refinement as new threats emerge and as the organisational landscape evolves. As a result, security teams gain a holistic perspective that enhances their situational awareness, enabling them to respond more effectively to emerging risks. In essence, the adoption of knowledge graph-based methodologies elevates the organisation’s ability to detect threats early, model complex attack scenarios, and reinforce the overall security posture in preparation for the quantum era.
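A minimal sketch of this kind of attack modelling is shown below. The knowledge graph is stored as subject, relation, object triples, and a breadth-first search enumerates the routes an attacker could take from an entry point to a critical asset, flagging hops that still rely on quantum-vulnerable cryptography. All entity names, relation names and the choice of traversal are assumptions made for illustration.

```python
from collections import deque

# Hypothetical knowledge graph as (subject, relation, object) triples.
TRIPLES = [
    ("internet", "reaches", "vpn-gateway"),
    ("vpn-gateway", "uses", "RSA-2048"),
    ("vpn-gateway", "connects_to", "billing-api"),
    ("billing-api", "uses", "ML-KEM-768"),
    ("billing-api", "reads", "customer-db"),
    ("internet", "reaches", "web-portal"),
    ("web-portal", "uses", "ECDH-P256"),
    ("web-portal", "reads", "customer-db"),
]
QUANTUM_VULNERABLE = {"RSA-2048", "ECDH-P256"}
MOVEMENT = {"reaches", "connects_to", "reads"}  # relations an attacker can traverse


def build_graph(triples):
    """Adjacency map: node -> list of (relation, neighbour)."""
    graph = {}
    for subject, relation, obj in triples:
        graph.setdefault(subject, []).append((relation, obj))
    return graph


def attack_paths(graph, start, target):
    """Breadth-first enumeration of all simple paths from start to target."""
    paths, queue = [], deque([[start]])
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            paths.append(path)
            continue
        for relation, neighbour in graph.get(node, []):
            if relation in MOVEMENT and neighbour not in path:
                queue.append(path + [neighbour])
    return paths


def vulnerable_hops(graph, path):
    """Nodes on the path that still use a quantum-vulnerable algorithm."""
    return [node for node in path
            if any(relation == "uses" and algo in QUANTUM_VULNERABLE
                   for relation, algo in graph.get(node, []))]
```

With the sample data, both routes from `internet` to `customer-db` pass through a component protected only by RSA or ECDH, which is the kind of gap such a model is meant to surface before it can be exploited.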

Continuous monitoring for quantum threats and vulnerabilities

Continuous monitoring for quantum threats is driven primarily by the ‘harvest-now, decrypt-later’ threat: adversaries collect encrypted data today and decrypt it later, once quantum systems are mature enough to do so. Monitoring therefore needs to be threat-informed, watching for signals of quantum capability, and cryptography-informed, tracking wherever breakable or weak cryptography is still in use.

Quantum threat intelligence and horizon tracking

A revamped threat intelligence programme regularly collects indicators of quantum capability and ecosystem readiness: vendor roadmaps, error-correction milestones, logical-qubit demonstrations, and standardisation updates. This stream feeds a Quantum Risk Register, which is reviewed in the same way as vulnerability exposure.

Cryptographic asset discovery and ‘Y2Q’ telemetry

Organisations need continuous visibility of where RSA/ECC, weak parameter sets and non-agile cryptography are deployed. This is achieved through a Cryptography Bill of Materials (CBOM) and automated crypto scanners for code, containers, firmware, PKI, and SaaS configurations.
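As a rough sketch of what such a scanner reports, the example below walks a small inventory and flags the three conditions named above. The record layout, the field names and the 2048-bit RSA threshold are assumptions for illustration and do not follow any particular CBOM schema.

```python
from dataclasses import dataclass

# Public-key families that Shor's algorithm would break.
QUANTUM_VULNERABLE = {"RSA", "ECDSA", "ECDH", "DH", "DSA"}
MIN_RSA_BITS = 2048  # illustrative policy threshold, not taken from the article


@dataclass(frozen=True)
class CryptoAsset:
    location: str  # where the algorithm is deployed
    family: str    # algorithm family, e.g. "RSA" or "ML-KEM"
    size: int      # key size in bits, or parameter-set number for PQC
    agile: bool    # can the algorithm be swapped by configuration alone?


def findings(asset: CryptoAsset) -> list:
    """Return the list of issues detected for one inventory entry."""
    issues = []
    if asset.family in QUANTUM_VULNERABLE:
        issues.append("quantum-vulnerable")
    if asset.family == "RSA" and asset.size < MIN_RSA_BITS:
        issues.append("weak-parameters")
    if not asset.agile:
        issues.append("non-agile")
    return issues


def scan(inventory) -> dict:
    """Map each problematic location to its issues; clean assets are omitted."""
    report = {}
    for asset in inventory:
        issues = findings(asset)
        if issues:
            report[asset.location] = issues
    return report


# Hypothetical inventory entries.
CBOM = [
    CryptoAsset("vpn-gateway/tls", "RSA", 1024, False),
    CryptoAsset("billing-api/tls", "ML-KEM", 768, True),
    CryptoAsset("firmware/updater", "ECDSA", 256, False),
]
```

Running `scan(CBOM)` leaves out the ML-KEM service and lists the other two, giving a simple feed for the telemetry described here.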

Hybrid deployment and monitoring that is safe to roll back

Hybrid key exchange (classical + PQC KEM) is the closest thing to a standard approach for de-risking adoption during migration. Monitoring must validate:

- Proper negotiation (no silent downgrade), matching parameter sets, and library versions.

- Behaviour and failure scenarios (handshake latency, fragmentation, retransmissions), to identify operational regressions that attackers could also exploit.
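The negotiation check can be sketched as a rule over handshake telemetry: if both sides advertised a hybrid group but a classical one was negotiated, the session is flagged as a possible silent downgrade. The record fields are hypothetical, and the group name `X25519MLKEM768` is used only as an example of a hybrid identifier.

```python
# Key-exchange groups treated as hybrid (classical + PQC KEM) in this sketch.
HYBRID_GROUPS = {"X25519MLKEM768"}


def classify_handshake(record: dict) -> str:
    """Classify one handshake as 'hybrid', 'downgrade' or 'classical-only'.

    record uses hypothetical fields:
      client_offered   - groups the client advertised
      server_supported - groups the server is configured to accept
      negotiated       - group actually used for the session
    """
    if record["negotiated"] in HYBRID_GROUPS:
        return "hybrid"
    common_hybrid = (set(record["client_offered"])
                     & set(record["server_supported"])
                     & HYBRID_GROUPS)
    if common_hybrid:
        # Both peers could have used a hybrid group but did not.
        return "downgrade"
    return "classical-only"


def downgrade_alerts(records) -> list:
    """Session identifiers that warrant investigation."""
    return [r["session"] for r in records if classify_handshake(r) == "downgrade"]
```

A `classical-only` result is not an alert by itself, since one peer may simply not support PQC yet; it belongs in the coverage figures rather than the incident queue.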

Handling vulnerabilities in PQC implementations

Continuous monitoring should include:

- Side-channel exposure analysis (timing/cache), RNG monitoring, constant-time testing, and dependency CVE analysis in PQC-enabled TLS/VPN stacks.

- Key encapsulation/decapsulation error handling, logging hygiene, and key lifecycle security controls.

Automation of response and governance

Organisations must tie detections to playbooks, auto-ticket crypto debt, quarantine non-compliant services, enforce crypto-agile baselines, and produce audit-ready evidence that migration is measurable, continuous and resistant against quantum-enabled adversaries.

Explainable security metrics in PQC

Explainable security metrics are essential in post-quantum cryptography (PQC) because the migration is both technical and organisational. Leaders must understand why an algorithm choice, parameter set, or hybrid mode meaningfully reduces quantum risk. In practice, explainability means metrics that are traceable to standards, grounded in threat models, and actionable for engineers.

Standard-aligned strength metrics (what level of protection?)

PQC deployments should report security using NIST security categories and the specific primitive role:

- Key establishment (e.g., lattice-based KEMs such as CRYSTALS-Kyber, standardised by NIST as ML-KEM).

- Digital signatures (e.g., CRYSTALS-Dilithium, standardised as ML-DSA).

Metrics should clearly state target category, parameter set, and whether the configuration is hybrid (classical + PQC) or PQC-only.
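One possible shape for such a metric is sketched below. The mapping of ML-KEM and ML-DSA parameter sets to NIST security categories follows the published standards; the record layout and function name are assumptions for illustration.

```python
# NIST security category claimed for each standardised parameter set.
NIST_CATEGORY = {
    "ML-KEM-512": 1, "ML-KEM-768": 3, "ML-KEM-1024": 5,
    "ML-DSA-44": 2, "ML-DSA-65": 3, "ML-DSA-87": 5,
}
ROLE = {"ML-KEM": "key establishment", "ML-DSA": "digital signature"}


def strength_metric(service: str, parameter_set: str, classical_partner=None) -> dict:
    """Build a reportable record stating role, category, parameter set and mode.

    classical_partner names the classical algorithm in a hybrid configuration
    (for example "X25519"); leave it as None for a PQC-only deployment.
    """
    if parameter_set not in NIST_CATEGORY:
        raise ValueError(f"unknown parameter set: {parameter_set}")
    family = parameter_set.rsplit("-", 1)[0]  # "ML-KEM-768" -> "ML-KEM"
    return {
        "service": service,
        "role": ROLE[family],
        "parameter_set": parameter_set,
        "nist_category": NIST_CATEGORY[parameter_set],
        "mode": "hybrid" if classical_partner else "pqc-only",
        "classical_partner": classical_partner,
    }
```

Rejecting unknown parameter sets outright keeps vague labels out of the report: every entry must trace back to a named standard.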

Attack-surface explainability (why is this secure?)

Replace vague ‘quantum-safe’ labels with evidence-oriented indicators:

- Assumption mapping (e.g., LWE/Module-LWE hardness), known reduction notes, and what is not assumed (no reliance on factoring/discrete log).

- Implementation posture: constant-time status, side-channel hardening level, and dependency integrity (library provenance, reproducible builds, SBOM/CBOM coverage).

Migration readiness metrics (how exposed are we today?)

To counter harvest-now, decrypt-later exposure, organisations should quantify:

- Quantum exposure window: Proportion of sensitive traffic/data encrypted with vulnerable algorithms and the retention duration.

- Crypto agility score: Ability to rotate algorithms/parameters without outages (coverage across TLS, VPN, PKI, IAM, HSMs).

- PQC coverage: Percentage of endpoints/services successfully negotiating PQC or hybrid handshakes, with downgrade-resistance verification.
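These three figures can be computed from fairly simple inputs. The sketch below is one way to do it; the data structures, field names and the equal weighting of layers in the agility score are assumptions, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFlow:
    name: str
    sensitive_gb: float   # volume of sensitive data carried or stored
    vulnerable: bool      # protected only by quantum-vulnerable algorithms?
    retention_years: int  # how long the data must stay confidential


def quantum_exposure(flows):
    """Return (share of sensitive data that is exposed, longest retention of it)."""
    total = sum(flow.sensitive_gb for flow in flows)
    exposed = [flow for flow in flows if flow.vulnerable]
    share = sum(flow.sensitive_gb for flow in exposed) / total if total else 0.0
    longest = max((flow.retention_years for flow in exposed), default=0)
    return share, longest


def crypto_agility_score(layers: dict) -> float:
    """Fraction of layers (TLS, VPN, PKI, ...) able to rotate algorithms without outage."""
    return sum(layers.values()) / len(layers) if layers else 0.0


def pqc_coverage(results: dict) -> float:
    """Percentage of endpoints that negotiated PQC or hybrid and passed the downgrade check."""
    return 100.0 * sum(results.values()) / len(results) if results else 0.0
```

For example, an archive of 300 GB kept for seven years under RSA alongside 100 GB of already migrated data yields an exposure share of 0.75 with a seven-year window, which is the number a harvest-now, decrypt-later adversary cares about.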

Operational metrics (will it work at scale?)

Explainable metrics must also address performance and reliability trade-offs:

- Handshake latency deltas, CPU/memory overhead, failure rates, and interoperability exceptions, each linked to the affected service (for example, a Kubernetes service) and its remediation steps.
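A handshake latency delta and failure rate can be derived from paired measurements, as in the short sketch below. The use of the median and the 5 ms regression budget are illustrative choices rather than recommended values.

```python
from statistics import median

LATENCY_BUDGET_MS = 5.0  # hypothetical acceptable increase per handshake


def latency_delta_ms(classical_ms, hybrid_ms) -> float:
    """Median hybrid handshake time minus median classical handshake time."""
    return median(hybrid_ms) - median(classical_ms)


def failure_rate(attempts: int, failures: int) -> float:
    """Share of handshakes that failed; 0.0 when nothing was attempted."""
    return failures / attempts if attempts else 0.0


def is_regression(classical_ms, hybrid_ms) -> bool:
    """True when the hybrid rollout exceeds the latency budget."""
    return latency_delta_ms(classical_ms, hybrid_ms) > LATENCY_BUDGET_MS
```

Reporting the delta against a stated budget, rather than a raw latency figure, makes it clear to non-specialists whether a hybrid rollout is within tolerance.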

Humanised reporting: dashboards that justify decisions

The output should read like an engineering narrative: ‘Asset X stores data for 7 years → RSA exposure is unacceptable → move to hybrid ML-KEM + classical today, ML-DSA for signing next → residual risks are implementation CVEs and downgrade attempts, monitored continuously’. This closes the loop between cryptographic theory, operational reality, and accountable governance.

Quantum security stands at the forefront of the next wave of technological advancement, demanding a proactive, informed approach from security professionals and leaders. The deployment of quantum-resistant algorithms, the adoption of post-quantum cryptography, and the rigorous assessment of organisational readiness are foundational steps in safeguarding data and infrastructure. Advanced threat detection methodologies, continuous monitoring, and transparent security metrics further reinforce defences, enabling organisations to navigate the uncertainties of the quantum era. As quantum computing continues to evolve, the commitment to quantum security will determine the resilience and trustworthiness of digital ecosystems for years to come.