Deduplication helps to save space on Linux-based storage systems. Choose the right platform and check whether it meets your goals.

Stop storing the same data a thousand times! If you snapshot the same virtual machine every night for a month, you’re not really storing 30 versions but the same blocks repeatedly. That invisible redundancy quietly eats disks, backup windows, and your budget. Data deduplication is simply a way of saying: “Store each unique piece of data once and reuse it everywhere it appears.”

On Linux, you don’t need proprietary software to get there. Between modern filesystems like Btrfs and ZFS, block-layer solutions like dm-vdo, and user-space tools built around clever chunking algorithms, you can retrofit serious deduplication into an otherwise boring stack.

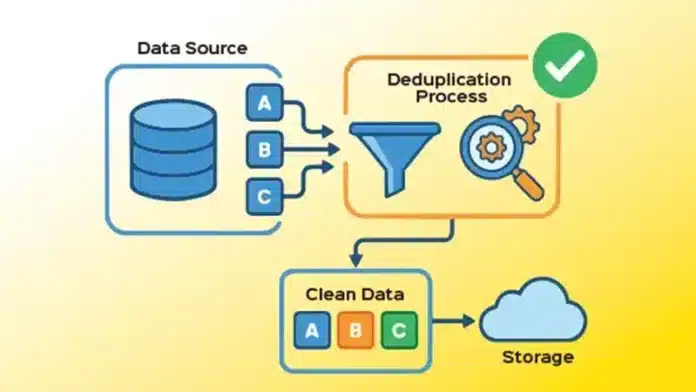

What exactly is deduplication doing?

Deduplication comes down to two decisions: how to slice your data, and how to tell what is already stored. In practice, that breaks down into four steps:

- Chunking: Data is split into fixed-size or content-defined chunks. Fixed-size chunks are simple, but content-defined chunking (CDC) uses patterns in the data stream to choose boundaries and is more robust when bytes are inserted or deleted in the middle of a file.

- Fingerprinting: Each chunk gets a fingerprint, typically a cryptographic hash, that acts like its identity card.

- Indexing: Fingerprints are stored in an index. When a new chunk arrives, the system checks if its fingerprint already exists.

- Reuse: If it’s a duplicate, the system creates or updates references instead of writing the chunk again.
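The four steps above fit in a few dozen lines. What follows is a minimal Python sketch, not how any of the tools discussed in this article are implemented: the chunk sizes, the boundary mask, the gear-style rolling hash, and the `ChunkStore` class are all assumptions made for illustration. It stores a blob, then stores the same blob with one byte prepended, and counts how many new chunks each chunking strategy has to write.

```python
import hashlib
import random

# All constants below are illustrative assumptions, not values from a real tool.
MIN_CHUNK, MAX_CHUNK = 64, 1024
BOUNDARY_MASK = 0xFF  # cut when the low 8 hash bits are zero (roughly every 256 bytes)
_rng = random.Random(0)
GEAR = [_rng.getrandbits(32) for _ in range(256)]  # one random value per byte value


def fixed_chunks(data, size=256):
    """Chunking, variant 1: cut every `size` bytes."""
    return [data[i:i + size] for i in range(0, len(data), size)]


def cdc_chunks(data):
    """Chunking, variant 2: cut where a rolling hash of recent bytes hits a pattern."""
    chunks, start, h = [], 0, 0
    for i, byte in enumerate(data):
        h = ((h << 1) + GEAR[byte]) & 0xFFFFFFFF
        length = i - start + 1
        if (length >= MIN_CHUNK and (h & BOUNDARY_MASK) == 0) or length >= MAX_CHUNK:
            chunks.append(data[start:i + 1])
            start, h = i + 1, 0
    if start < len(data):
        chunks.append(data[start:])
    return chunks


class ChunkStore:
    """Toy store: one dict doubles as fingerprint index and chunk storage."""

    def __init__(self):
        self.chunks = {}  # fingerprint -> chunk bytes
        self.refs = {}    # fingerprint -> reference count

    def write(self, data, chunker):
        """Store data and return its recipe (the ordered list of fingerprints)."""
        recipe = []
        for chunk in chunker(data):
            fp = hashlib.sha256(chunk).hexdigest()    # fingerprinting
            if fp not in self.chunks:                 # index lookup
                self.chunks[fp] = chunk               # unique: write it once
            self.refs[fp] = self.refs.get(fp, 0) + 1  # reuse: just add a reference
            recipe.append(fp)
        return recipe

    def read(self, recipe):
        return b"".join(self.chunks[fp] for fp in recipe)


def new_chunks_after_insert(chunker):
    """Store a blob, then the same blob with one byte prepended; count new chunks."""
    rng = random.Random(1)
    original = bytes(rng.getrandbits(8) for _ in range(64 * 1024))
    store = ChunkStore()
    store.write(original, chunker)
    before = len(store.chunks)
    recipe = store.write(b"X" + original, chunker)
    assert store.read(recipe) == b"X" + original  # data must survive the round trip
    return len(store.chunks) - before


if __name__ == "__main__":
    print("new chunks, fixed-size:", new_chunks_after_insert(fixed_chunks))
    print("new chunks, content-defined:", new_chunks_after_insert(cdc_chunks))
```

With fixed-size chunks, the single prepended byte shifts every boundary, so nearly every chunk looks new. The content-defined chunker should resynchronise shortly after the insertion and reuse most of what is already stored.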

Inline deduplication runs this logic on the write path, inspecting data before it hits disk. Post-process schemes run a scanner later, when the system is quiet. Inline gives instant savings but increases CPU, memory, and I/O overhead. Post-process keeps writes fast and shifts the cost into scheduled jobs.

Linux has more dedup options than most people think

Linux doesn’t have one dedup switch. Instead, you pick the layer that fits your problem.

Btrfs: dedup-friendly copy-on-write

Btrfs (B-tree filesystem) is a modern copy-on-write (CoW) filesystem for Linux, designed for scalability, reliability, and advanced storage features. It offers built-in snapshots, data compression, and integrated volume management (RAID 0, 1, 10). Because it is copy-on-write, it already avoids duplicating blocks when you clone or snapshot data. Features like reflinks (cp --reflink) let you create instant logical copies that share blocks until they diverge.

But Btrfs does not do automatic inline deduplication. Instead, you run user-space tools that:

- Scan the filesystem, compute hashes of extents or blocks

- Look for duplicates in a database

- Ask Btrfs to ‘merge’ them by turning separate extents into shared ones

Two popular options are:

- duperemove: Batch scanner plus hash database; good for periodic dedup on backup volumes.

- bees: A long-running daemon that incrementally walks the filesystem tree and deduplicates in the background.

Admins report high savings on collections of VMs, container layers, and ISO trees, but also warn that heavy dedup plus fragmentation can slow down random reads if you are aggressive on a busy volume.
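To get a feel for the scan, hash, and look-up workflow before pointing a real tool at a volume, a read-only sketch is enough. The Python below is an assumption-laden illustration, not how duperemove or bees work internally: the 4 KiB block size, the function names, and the in-memory dictionary standing in for the hash database are all hypothetical. It only reports duplicate blocks; it never modifies the filesystem or merges extents.

```python
import hashlib
import os
from collections import defaultdict

BLOCK_SIZE = 4096  # assumed scan granularity for this sketch


def find_duplicate_blocks(root, block_size=BLOCK_SIZE):
    """Return {digest: [(path, offset, length), ...]} for blocks seen more than once."""
    seen = defaultdict(list)  # stands in for the tool's hash database
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in sorted(filenames):
            path = os.path.join(dirpath, name)
            with open(path, "rb") as fh:
                offset = 0
                while True:
                    block = fh.read(block_size)
                    if not block:
                        break
                    digest = hashlib.sha256(block).digest()
                    seen[digest].append((path, offset, len(block)))
                    offset += len(block)
    return {digest: locs for digest, locs in seen.items() if len(locs) > 1}


def reclaimable_bytes(duplicates):
    """Upper bound on savings: every copy after the first could become a shared reference."""
    return sum(length for locs in duplicates.values() for _path, _off, length in locs[1:])
```

Running `reclaimable_bytes(find_duplicate_blocks("/some/test/dir"))` on a copy of your data (the path is a placeholder) gives an optimistic estimate, since real tools work on extents and skip candidates that are not worth merging.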

ZFS on Linux: Inline dedup with a big brain

OpenZFS is an open source storage platform that combines a filesystem and a volume manager. It offers data integrity checking, snapshots, compression, deduplication, and built-in RAID (RAID-Z), and it runs on Linux, FreeBSD, macOS, and other systems. Its focus is reliable, self-healing data management, and it includes native, inline block-level deduplication.

With dedup enabled, every write goes through these steps:

- Hash the block.

- Look it up in the dedup table (DDT).

- If present, bump a reference count instead of writing new data.

The good part is that you don’t have to run external scanners. The risky part is that the dedup table lives largely in RAM, and its size scales with the number of unique blocks, not with logical size. People running ZFS for VM boot disks often see 3:1 to 5:1 dedup ratios, but they also plan memory carefully: think gigabytes of RAM per few terabytes of unique data, depending on block size and workload.
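That memory planning is simple arithmetic once you pick a per-entry cost. The sketch below assumes roughly 320 bytes of RAM per dedup-table entry, a commonly quoted rule of thumb that does not come from this article and that varies with OpenZFS version and pool layout. The function name and its parameters are hypothetical.

```python
KIB, GIB, TIB = 1024, 1024**3, 1024**4


def ddt_ram_bytes(unique_bytes, record_size, bytes_per_entry=320):
    """Rough dedup-table size: one entry per unique block (assumed entry cost)."""
    unique_blocks = -(-unique_bytes // record_size)  # ceiling division
    return unique_blocks * bytes_per_entry


if __name__ == "__main__":
    for record_size in (8 * KIB, 128 * KIB):
        ram = ddt_ram_bytes(1 * TIB, record_size)
        print(f"{record_size // KIB:>4} KiB records: {ram / GIB:.1f} GiB per TiB of unique data")
```

Under that assumption, 1 TiB of unique data works out to about 2.5 GiB of table at 128 KiB records and about 40 GiB at 8 KiB records, which is why block size dominates the estimate.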

Recent work in the ZFS community has made dedup smarter and less of a trap:

- Age-based pruning of old unique entries

- ‘Opportunistic’ dedup modes that try to use spare resources without committing to huge DDT footprints

ZFS dedup shines on carefully scoped pools, for example a pool dedicated to VM images or template-based workloads, rather than as a blanket setting on every dataset.

dm-vdo: dedup below the filesystem

The dm-vdo (Virtual Data Optimizer) device-mapper target sits below your filesystem and offers inline block-level deduplication, compression, zero-block elimination, and thin provisioning.

Its design splits responsibilities:

- A dedup index (UDS) for quickly recognising duplicate blocks

- A reference-counted data store mapping logical to physical blocks

Because VDO lives at the block layer, it is filesystem-agnostic: you can run XFS, ext4, or Btrfs on top and still benefit. That’s useful in environments where changing filesystems would be too disruptive but storage savings are urgent.
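The split between a duplicate-finding index and a reference-counted block map is easier to picture with a toy model. The Python below is only a sketch of that idea and makes no claim about VDO's actual data structures: the class, its dictionaries, the 4 KiB block size, and the use of SHA-256 are all assumptions for illustration.

```python
import hashlib

BLOCK_SIZE = 4096
ZERO_BLOCK = bytes(BLOCK_SIZE)


class ToyDedupDevice:
    """Toy block device: logical blocks map to shared, reference-counted physical blocks."""

    def __init__(self):
        self.index = {}     # fingerprint -> physical block number (dedup index role)
        self.physical = {}  # physical block number -> data
        self.refcount = {}  # physical block number -> number of logical references
        self.l2p = {}       # logical block number -> physical block number
        self.next_pbn = 0

    def write(self, lbn, data):
        if len(data) != BLOCK_SIZE:
            raise ValueError("writes must be exactly one block")
        self._unmap(lbn)                  # an overwrite drops the old reference first
        if data == ZERO_BLOCK:
            return                        # zero-block elimination: store nothing
        fp = hashlib.sha256(data).digest()
        pbn = self.index.get(fp)
        if pbn is None:                   # unique block: allocate and store it
            pbn = self.next_pbn
            self.next_pbn += 1
            self.physical[pbn] = data
            self.index[fp] = pbn
            self.refcount[pbn] = 0
        self.refcount[pbn] += 1           # duplicate or new: add one reference
        self.l2p[lbn] = pbn

    def read(self, lbn):
        pbn = self.l2p.get(lbn)
        return ZERO_BLOCK if pbn is None else self.physical[pbn]

    def _unmap(self, lbn):
        pbn = self.l2p.pop(lbn, None)
        if pbn is None:
            return
        self.refcount[pbn] -= 1
        if self.refcount[pbn] == 0:       # last reference gone: free the block
            fp = hashlib.sha256(self.physical[pbn]).digest()
            del self.index[fp], self.physical[pbn], self.refcount[pbn]
```

Because the filesystem above only ever sees logical block numbers, it needs no knowledge of the sharing underneath, which is the property that makes a block-layer approach filesystem-agnostic.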

Research systems and page-cache aware designs

Academic and experimental work pushes Linux dedup further:

- Dmdedup: A kernel device-mapper target focused on primary storage dedup, exposing a flexible platform to test algorithms at block level.

- Dual-dedup and related designs: Schemes that share duplication knowledge with the page cache so the kernel avoids caching multiple copies of the same on-disk data, improving read performance.

- Hybrid inline/out-of-line systems (e.g., RevDedup and successors): Use coarse inline dedup for new backups, then offline ‘reverse deduplication’ to shift fragmentation onto old data and keep recent backups sequential and fast to read.

These ideas increasingly influence production stacks even when the exact prototypes are not deployed as-is.

Why dedup can be both a hero and a villain

On paper, deduplication looks like free capacity. In practice, it’s a trade between space, speed, and complexity.

The good news

- Backups: Studies and vendor reports routinely show 60–80% logical space reduction on backup workloads because daily or weekly backups share large regions with previous ones.

- VDI and VMs: Clusters of similar virtual machines share kernels, libraries, and base images, often yielding 3:1 or better dedup ratios.

- Network and cloud: Client-side dedup in backup tools avoids sending duplicate data at all, shrinking WAN usage and enabling longer retention on the same hardware.

The less glamorous side

- Fragmentation: When many logical blocks map to a patchwork of shared physical blocks, sequential reads can degrade to random I/O. That hits HDD-based arrays and cold storage particularly hard.

- Metadata and RAM: Dedup indices grow with the number of unique chunks. If they outgrow RAM, lookups spill to disk and performance plummets.

- Encryption: Application-level encryption (encrypt before writing to disk) effectively destroys dedup because identical plaintexts turn into different ciphertexts. Encrypted dedup requires special schemes where ciphertexts remain comparable.
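The encryption point can be demonstrated without a real cipher. The sketch below uses a toy XOR stream cipher built from SHA-256, which is an assumption for illustration only and must not be used to protect data. It contrasts a random nonce per write with convergent encryption, where the key is derived from the content itself; convergent encryption is named here as one example of a scheme that keeps ciphertexts comparable, not as something the article prescribes, and it has its own security trade-offs.

```python
import hashlib
import os


def _keystream(key, nonce, length):
    """Toy keystream: SHA-256 in counter mode (illustration only, not secure)."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(out[:length])


def toy_encrypt(key, nonce, plaintext):
    stream = _keystream(key, nonce, len(plaintext))
    return bytes(p ^ k for p, k in zip(plaintext, stream))


def encrypt_random_nonce(key, plaintext):
    """Typical application-level pattern: a fresh random nonce for every write."""
    nonce = os.urandom(16)
    return nonce + toy_encrypt(key, nonce, plaintext)


def encrypt_convergent(plaintext):
    """Key derived from the content, so equal plaintexts give equal ciphertexts."""
    key = hashlib.sha256(plaintext).digest()
    return toy_encrypt(key, bytes(16), plaintext)


def fingerprint(blob):
    return hashlib.sha256(blob).hexdigest()
```

Two writes of the same block through `encrypt_random_nonce` produce different fingerprints, so a dedup engine sees two unique chunks. Two writes through `encrypt_convergent` produce the same fingerprint and can be stored once.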

A good rule of thumb: dedup is fantastic for backup and image-like data, risky for latency-sensitive primary databases, and mostly irrelevant for already-compressed or heavily encrypted payloads.

A practical playbook for Linux admins

Instead of treating deduplication as a magic tick-box, think of it as a workload-specific tool. Here is a pragmatic approach you can adapt.

Identify your ‘low-hanging fruit’:

Look for:

- Large backup repositories with many similar full backups

- VM image libraries, golden images, and lab snapshots

- ISO mirrors, container base layers, code repositories with many branches

These workloads naturally contain lots of duplicate blocks and are not usually as latency sensitive as transactional databases.

Choose the right layer

If you are already on Btrfs for backup disks, run duperemove weekly or bees continuously on that volume. Start with a test directory to measure space savings and runtime.

If you want filesystem-agnostic dedup on a SAN or local RAID, evaluate dm-vdo on a new test LUN, layer XFS or ext4 on top, then replay a typical backup workload and compare logical vs physical use.

If you are running ZFS for VM pools, enable dedup only on datasets that contain highly similar data. Monitor DDT size, RAM use, and read latency over a few weeks before scaling out.

Combine dedup with smart backup design

Hybrid designs from systems like RevDedup show that you don’t have to choose between performance and savings.

A simple adaptation for Linux is:

- Take regular snapshots on a CoW filesystem (Btrfs/ZFS).

- Deduplicate older snapshots more aggressively, leaving the newest generation relatively unfragmented.

- When you prune snapshots, prefer to delete heavily fragmented, older generations.

This pushes fragmentation cost towards data that is rarely read and keeps recent backups fast.

Watch the numbers that actually matter

Track:

- Logical vs physical usage (your effective dedup + compression ratio)

- Average and tail latencies for backup and restore jobs

- Dedup index size and memory footprint (DDT size for ZFS, index size for VDO, database size for duperemove)

If your dedup ratio is below ~1.2:1 and you are paying a heavy performance or complexity tax, it may not be worth it for that workload.
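Percentages and ratios describe the same measurement, and converting between them keeps reports comparable. Here is a small sketch of that arithmetic; the function names are hypothetical, and the 1.2 default simply mirrors the rule of thumb above.

```python
def dedup_ratio(logical_bytes, physical_bytes):
    """Effective ratio: what applications think they stored vs what is on disk."""
    return logical_bytes / physical_bytes


def savings_to_ratio(savings_fraction):
    """Convert a space reduction (0.8 means 80% saved) into an N:1 ratio."""
    return 1.0 / (1.0 - savings_fraction)


def ratio_to_savings(ratio):
    """Convert an N:1 ratio back into the fraction of space saved."""
    return 1.0 - 1.0 / ratio


def clears_threshold(logical_bytes, physical_bytes, threshold=1.2):
    """True if the measured ratio reaches the rule-of-thumb threshold."""
    return dedup_ratio(logical_bytes, physical_bytes) >= threshold
```

A 60% reduction corresponds to 2.5:1 and an 80% reduction to 5:1, while a 1.2:1 ratio means only about 17% of the logical space is actually being saved.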

Where Linux dedup is heading

Recent research and kernel work hint at where Linux storage is going next:

- Faster content-defined chunking (e.g., RapidCDC and newer CDC variants) that keeps high dedup ratios while slashing CPU cost.

- Inline dedup optimised for non-volatile memory and SSDs, where random I/O penalties are smaller and metadata can live in persistent memory.

- Container-aware dedup engines that understand Docker or OCI layer semantics and avoid redundant copies across thousands of images without killing pull performance.

For practitioners, this means dedup will increasingly feel less like an expensive experiment and more like a predictable building block, especially in backup, VM, and container-image platforms.