Explore the essence of quantum algorithms, their groundbreaking applications, recent innovations, and the challenges that remain.

Quantum computing stands at the forefront of technological progress, promising to revolutionise how we solve complex problems. Unlike classical computing, which relies on bits as units of information, quantum computing harnesses the peculiar properties of quantum mechanics to process information in fundamentally new ways. Quantum algorithms, the driving force behind this paradigm, offer dramatic speedups for certain tasks, with Shor’s and Grover’s algorithms serving as prominent examples.

What makes an algorithm ‘quantum’?

At the heart of quantum algorithms lie quantum bits, or qubits, which differ from classical bits by existing in a superposition of states. A qubit’s state is a weighted combination of 0 and 1, and only on measurement does it yield one definite value. Further, entanglement correlates the states of several qubits in ways that have no classical counterpart, enabling powerful computational techniques. However, superposition and entanglement alone are not enough. The true engine of speedup is ‘quantum interference’. Algorithms are carefully choreographed so that computational paths leading to wrong answers destructively interfere (cancel out), while paths to the correct answer constructively interfere (amplify), making the correct result highly likely to be observed upon measurement. Quantum gates act on qubits, manipulating their states through reversible unitary transformations, unlike many classical logic gates, such as AND and OR, which are irreversible. Together, these principles let quantum algorithms solve certain problems that are believed to be infeasible for classical machines.

Here’s an example. A qubit can be represented mathematically as |ψ⟩ = α|0⟩ + β|1⟩, where α and β are complex numbers and |α|² + |β|² = 1. Quantum gates, such as the Hadamard gate, can create superpositions as follows:

    # Quantum circuit in Qiskit (Python)
    from qiskit import QuantumCircuit

    qc = QuantumCircuit(1)
    qc.h(0)  # Apply Hadamard gate
    qc.measure_all()

This code snippet creates a single qubit in superposition, a foundational step in many quantum algorithms.
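To see what the Hadamard gate does to the amplitudes α and β, and how interference shows up, the same step can be traced by hand. The following is a minimal sketch in plain Python rather than Qiskit; the helper names `apply_gate` and `probabilities` are illustrative and not part of any library.

```python
import math

# Hadamard gate as a 2x2 matrix (real-valued, so plain floats suffice).
H = [[1 / math.sqrt(2), 1 / math.sqrt(2)],
     [1 / math.sqrt(2), -1 / math.sqrt(2)]]

def apply_gate(gate, state):
    """Multiply a 2x2 gate matrix by a 2-entry amplitude vector."""
    return [gate[0][0] * state[0] + gate[0][1] * state[1],
            gate[1][0] * state[0] + gate[1][1] * state[1]]

def probabilities(state):
    """Born rule: measurement probabilities are squared amplitude magnitudes."""
    return [abs(amplitude) ** 2 for amplitude in state]

zero = [1.0, 0.0]             # |0>, i.e. alpha = 1 and beta = 0
plus = apply_gate(H, zero)    # equal superposition of |0> and |1>
back = apply_gate(H, plus)    # second Hadamard: the two paths to |1> cancel

print(probabilities(plus))    # both outcomes equally likely
print(probabilities(back))    # all probability returns to |0>
```

After one Hadamard both outcomes are equally likely. After a second one, the contributions to |1⟩ carry opposite signs and cancel, which is the destructive interference described earlier.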

Shor’s algorithm: Cracking codes with quantum

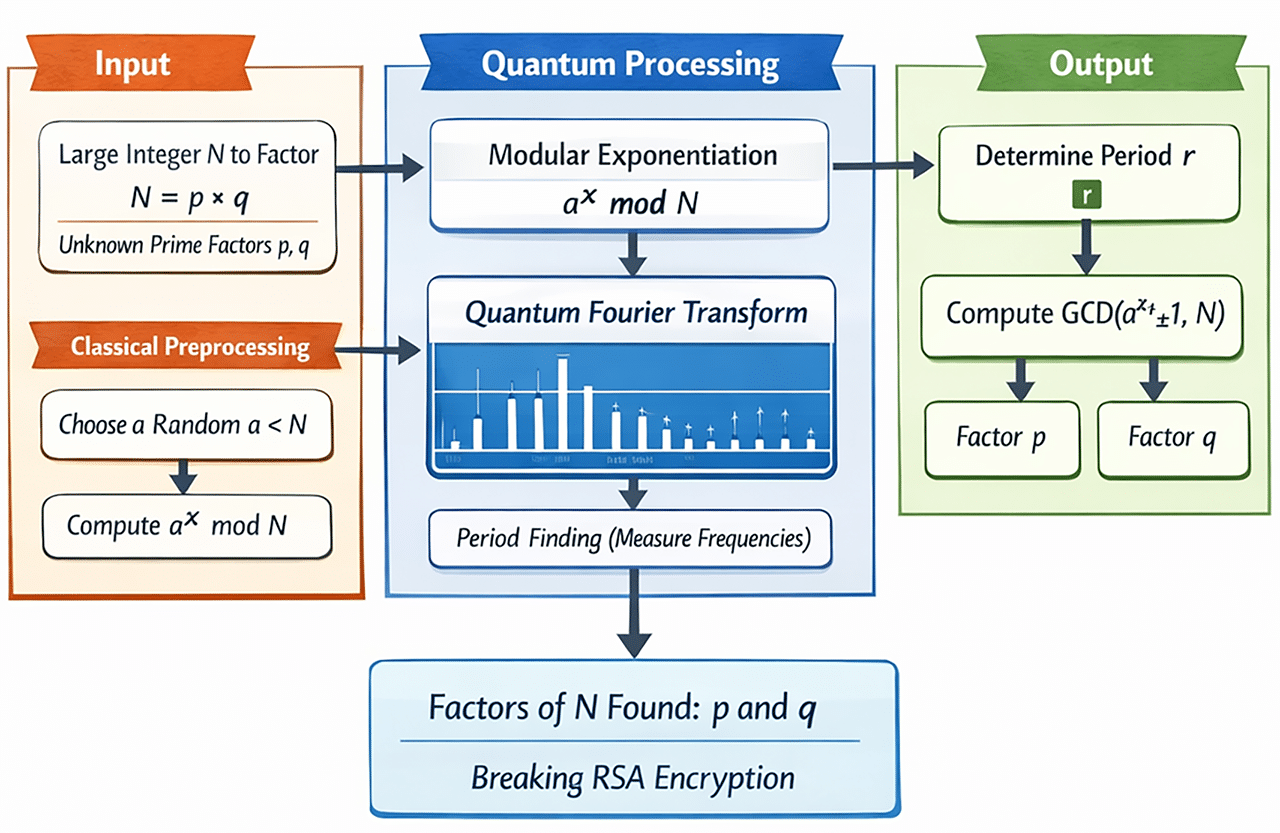

Shor’s algorithm, introduced by Peter Shor in 1994, is celebrated for its ability to factor large integers exponentially faster than the best known classical methods. This breakthrough has profound implications for cryptography, especially for systems like RSA that rely on the difficulty of factoring large numbers. Shor’s algorithm uses modular exponentiation and the quantum Fourier transform to uncover the period of a modular sequence, a task for which no efficient classical method is known. It is crucial to note that the quantum component does not output the factors directly. Instead, each run yields a measurement that approximates a multiple of the inverse period. A classical ‘continued fraction expansion’ recovers the period from this value, and the factors then follow from greatest common divisor calculations. This highlights that Shor’s algorithm is inherently hybrid, relying on classical post-processing to finalise the result.

Example: Factoring 15

Let us consider factoring the number 15. Classically, factoring is straightforward for small numbers, but the process quickly becomes infeasible as the numbers grow. Shor’s algorithm can factor 15 by finding the period of the function f(x) = a^x mod N, where N = 15 and ‘a’ is a randomly chosen integer coprime to N.
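For a number as small as 15, the period can be found by brute force, which makes the link between period and factors easy to check. The sketch below is purely classical and assumes a = 7, an illustrative choice; the function names are hypothetical. On a quantum computer only the period-finding step would be replaced.

```python
from math import gcd

def find_period(a, N):
    """Smallest r > 0 with a**r % N == 1 (requires gcd(a, N) == 1)."""
    r, value = 1, a % N
    while value != 1:
        value = (value * a) % N
        r += 1
    return r

def factors_from_period(a, r, N):
    """Turn an even period into factors via gcd(a**(r/2) +/- 1, N)."""
    if r % 2 != 0:
        return None                       # odd period: pick another a
    half_power = pow(a, r // 2, N)
    if half_power == N - 1:
        return None                       # trivial case: pick another a
    return gcd(half_power - 1, N), gcd(half_power + 1, N)

N, a = 15, 7                              # a = 7 is an illustrative choice
sequence = [pow(a, x, N) for x in range(8)]
r = find_period(a, N)
print(sequence)                           # the values repeat with period r
print(r, factors_from_period(a, r, N))
```

The sequence repeats every four steps, and the two greatest common divisors recover 3 and 5. The brute-force loop takes time that grows exponentially in the number of digits of N, which is the part the quantum routine is meant to speed up.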

Design approach

The algorithm consists of quantum modular exponentiation, quantum Fourier transform, and classical post-processing. In practice, quantum circuits for Shor’s algorithm look like what’s shown in Figure 1.

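    # Skeleton of Shor's algorithm (legacy Qiskit only: the Shor class has since been removed)
    from qiskit import Aer
    from qiskit.algorithms import Shor

    backend = Aer.get_backend("aer_simulator")  # simulator backend to run the circuit
    result = Shor(quantum_instance=backend).factor(N=15)
    print("Factors of 15:", result.factors)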

While this example is simplified, actual implementations require intricate circuit design and error correction, especially for larger numbers.
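The classical post-processing step can be sketched independently of any quantum hardware. The example below assumes an 8-qubit counting register and a = 7, both illustrative choices, and uses made-up readout values. It relies on Python’s `Fraction.limit_denominator` to find the closest fraction with a small denominator, which is the job the continued fraction expansion performs.

```python
from fractions import Fraction

def period_from_measurement(y, n_count, a, N):
    """Try to recover the period r from one measured integer y.

    y / 2**n_count approximates s / r for some unknown integer s, so the
    closest fraction with denominator at most N gives a candidate for r.
    """
    phase = Fraction(y, 2 ** n_count)
    candidate = phase.limit_denominator(N).denominator
    return candidate if pow(a, candidate, N) == 1 else None

# Hypothetical readouts from an 8-qubit counting register for a = 7, N = 15.
for y in (64, 128, 192):
    print(y, period_from_measurement(y, 8, 7, 15))
```

Readouts of 64 and 192 reveal the period 4, while 128 reduces to 1/2 and yields only a divisor of the period, so that run is rejected. This is why the algorithm is probabilistic and may need to be repeated.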

Grover’s algorithm: Speeding up search problems

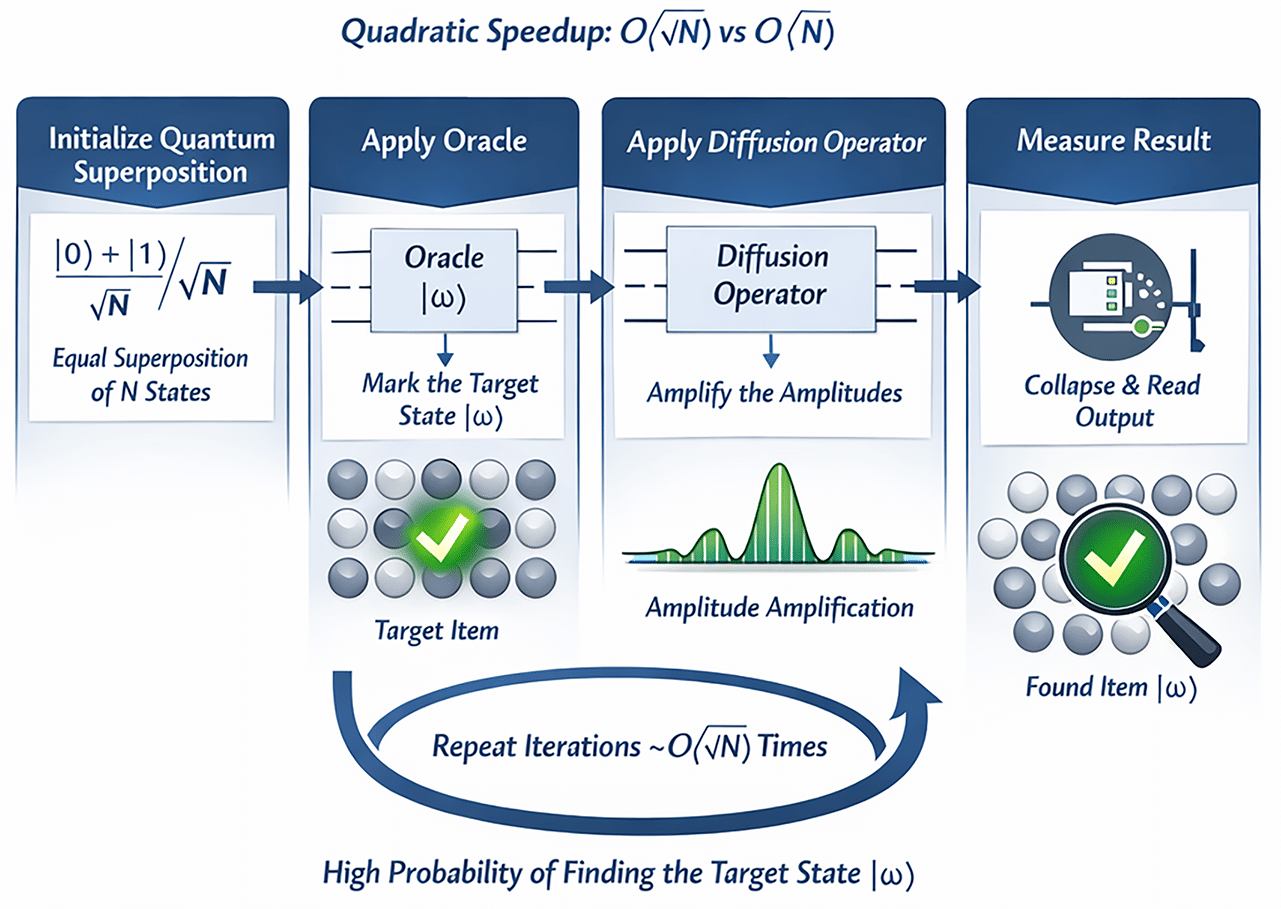

Grover’s algorithm, devised by Lov Grover in 1996, addresses the challenge of searching unsorted databases. Classically, searching N items requires O(N) time, but Grover’s algorithm achieves this in O(√N) time, providing a quadratic speedup. The algorithm leverages quantum amplitude amplification, repeatedly applying an oracle and a diffusion operator to increase the probability of finding the desired item. Figure 2 gives a reference architecture of Grover’s algorithm to visually understand the design approach.
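Amplitude amplification is easy to follow on a small example. The sketch below simulates the oracle and diffusion steps on a plain list of amplitudes for 16 items; the marked index 5 is arbitrary and the function name is illustrative. It is a classical simulation for intuition, not a quantum implementation.

```python
import math

def grover_success_probability(num_items, marked, iterations):
    """Simulate Grover iterations on a real-valued amplitude vector."""
    amplitudes = [1 / math.sqrt(num_items)] * num_items      # uniform superposition
    for _ in range(iterations):
        amplitudes[marked] = -amplitudes[marked]             # oracle: flip the marked sign
        mean = sum(amplitudes) / num_items
        amplitudes = [2 * mean - amp for amp in amplitudes]  # diffusion: invert about the mean
    return amplitudes[marked] ** 2

for k in range(5):
    print(k, round(grover_success_probability(16, marked=5, iterations=k), 3))
```

The probability of measuring the marked item climbs over the first three iterations and then starts to fall again, which is why the number of iterations has to be chosen in advance rather than simply made as large as possible.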

Example: Database search

Suppose we need to locate a specific entry in an unsorted database of 100,000 items. Classically, this would require, on average, 50,000 checks. Grover’s algorithm needs on the order of √N ≈ 316 oracle queries; the optimal count is roughly (π/4)√N, or about 250 iterations.

Here’s the code snippet:

    # Grover's algorithm in Qiskit
    from qiskit import QuantumCircuit
    from qiskit.circuit.library import GroverOperator

    oracle = QuantumCircuit(2)
    oracle.cz(0, 1)  # Example oracle: flips the phase of |11>
    grover_op = GroverOperator(oracle)
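The query counts for the 100,000-item example can be checked with a few lines of arithmetic. This sketch assumes exactly one marked item and uses the standard result that the success probability after k iterations is sin²((2k + 1)θ), with sin θ = 1/√N; the function names are illustrative.

```python
import math

def grover_iterations(num_items):
    """Near-optimal number of Grover iterations for one marked item."""
    theta = math.asin(1 / math.sqrt(num_items))
    return round(math.pi / (4 * theta) - 0.5)

def success_probability(num_items, iterations):
    """Probability of measuring the marked item after the given iterations."""
    theta = math.asin(1 / math.sqrt(num_items))
    return math.sin((2 * iterations + 1) * theta) ** 2

N = 100_000
k = grover_iterations(N)
print("classical average checks:", N // 2)
print("Grover iterations:", k)
print("success probability:", success_probability(N, k))
```

The iteration count comes out a little below √N because of the π/4 factor, and the success probability at that point is very close to 1.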

Grover’s algorithm is versatile, applicable to various search and optimisation problems, and forms the basis for quantum-enhanced combinatorial optimisation.

Recent breakthroughs: New algorithms on the horizon

The quantum computing landscape is rapidly evolving, with new algorithms emerging to tackle optimisation, simulation, and machine learning problems. Hybrid quantum-classical algorithms, such as the Quantum Approximate Optimisation Algorithm (QAOA) and the Variational Quantum Eigensolver (VQE), are designed to work with noisy intermediate-scale quantum (NISQ) devices. These approaches combine quantum circuits with classical optimisation routines, enabling practical applications despite hardware limitations.

Recent work includes quantum algorithms for simulating chemical reactions, solving linear systems (Harrow-Hassidim-Lloyd algorithm), and quantum walks for graph analysis. These advances are expanding the scope of quantum computing beyond traditional factoring and search tasks.

Design approach

Hybrid algorithms typically involve parameterised quantum circuits, where classical computers optimise the parameters to minimise or maximise a cost function.

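    # Example: Variational Quantum Eigensolver (VQE), legacy qiskit.algorithms interface
    # (newer releases move VQE to the separate qiskit-algorithms package and require an estimator)
    from qiskit.algorithms import VQE
    from qiskit.circuit.library import TwoLocal

    # Hardware-efficient ansatz: RY rotations with CZ entanglers
    ansatz = TwoLocal(rotation_blocks="ry", entanglement_blocks="cz")
    vqe = VQE(ansatz)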
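The feedback loop between the quantum circuit and the classical optimiser can be illustrated without any quantum SDK. The sketch below assumes the simplest possible case: a one-parameter circuit Ry(θ)|0⟩ whose cost is the expectation value of Z, evaluated exactly rather than sampled from hardware. The gradient uses the parameter-shift rule, and all function names are illustrative.

```python
import math

def expectation_z(theta):
    """<Z> for the state Ry(theta)|0> = cos(theta/2)|0> + sin(theta/2)|1>."""
    amp0, amp1 = math.cos(theta / 2), math.sin(theta / 2)
    return amp0 ** 2 - amp1 ** 2

def parameter_shift_gradient(theta):
    """Gradient from two extra circuit evaluations at theta +/- pi/2."""
    return 0.5 * (expectation_z(theta + math.pi / 2)
                  - expectation_z(theta - math.pi / 2))

def minimise(theta=0.5, learning_rate=0.4, steps=100):
    """Classical gradient descent over the circuit parameter."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, expectation_z(theta)

theta_opt, energy = minimise()
print(theta_opt, energy)
```

The optimiser drives θ towards π, where the qubit is in |1⟩ and the cost reaches its minimum of −1. On real hardware each call to `expectation_z` would be an estimate built from many circuit runs, so the optimiser would also have to cope with statistical noise.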

Quantum machine learning: Algorithms for AI

Quantum machine learning (QML) is an emerging field that explores how quantum algorithms can enhance artificial intelligence. Algorithms such as Quantum Support Vector Machines, Quantum Principal Component Analysis, and Quantum Neural Networks promise to speed up data processing and pattern recognition. Variational quantum algorithms, in particular, are well-suited for NISQ devices and leverage quantum circuits to model complex data distributions.

Example use case

Quantum classifiers can analyse large datasets more efficiently, potentially transforming areas like fraud detection and image recognition.

The code snippet is:

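    # Quantum support vector classifier skeleton
    # (the older QSVM class lived in Qiskit Aqua; qiskit-machine-learning provides QSVC)
    from qiskit_machine_learning.algorithms import QSVC

    qsvc = QSVC()  # uses the library's default quantum kernel
    qsvc.fit(train_features, train_labels)  # placeholder arrays supplied by the user
    accuracy = qsvc.score(test_features, test_labels)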
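Quantum support vector machines typically replace the classical kernel with a similarity measure computed from the overlap of quantum states. The sketch below assumes a toy one-qubit encoding in which a real feature x becomes the state Ry(x)|0⟩. The encoding, the sample data and the function names are illustrative assumptions, not the method of any particular library.

```python
import math

def feature_state(x):
    """Encode a real feature as Ry(x)|0> = cos(x/2)|0> + sin(x/2)|1>."""
    return (math.cos(x / 2), math.sin(x / 2))

def fidelity_kernel(x, y):
    """Squared overlap |<phi(x)|phi(y)>|^2 between two encoded states."""
    a, b = feature_state(x), feature_state(y)
    overlap = a[0] * b[0] + a[1] * b[1]
    return overlap ** 2

samples = [0.0, 0.3, 3.0]                 # made-up one-dimensional data
gram = [[fidelity_kernel(x, y) for y in samples] for x in samples]
for row in gram:
    print([round(value, 3) for value in row])
```

Nearby features produce kernel values close to 1 and distant ones values close to 0. The resulting matrix can be passed to a classical support vector machine as a precomputed kernel. On hardware each entry would be estimated from repeated measurements rather than computed exactly.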

While practical quantum advantage for machine learning remains an open question, ongoing research is bridging the gap between theory and real-world application.

Real-world use cases: Where algorithms shine

While the theoretical underpinnings of quantum algorithms are mathematically elegant, their true value lies in translating abstract Hilbert spaces into tangible industrial advantages. The field is moving from pure theory towards a ‘quantum utility’ era, in which algorithms are expected to outperform classical approximations in specific, high-value domains.

Financial modelling and risk analysis

In the financial sector, the calculation of risk and the pricing of complex derivatives often rely on Monte Carlo simulations, which are computationally expensive and converge slowly. Quantum algorithms, specifically those leveraging Quantum Amplitude Estimation (QAE), offer a quadratic speedup over classical Monte Carlo methods in the number of samples required. In principle, this would let financial institutions achieve higher precision in Value at Risk (VaR) calculations with significantly fewer samples, supporting faster portfolio optimisation and more robust fraud detection systems.
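The size of the quadratic speedup is easiest to appreciate with numbers. The sketch below ignores constant factors and hardware overheads and compares only how the cost scales with the target precision: classical Monte Carlo error falls as 1/√M for M samples, while amplitude estimation error falls as 1/M for M oracle calls. The function name is illustrative.

```python
def samples_needed(inverse_precision):
    """Order-of-magnitude cost to reach an error of 1/inverse_precision.

    Constant factors are ignored: classical Monte Carlo error falls as
    1/sqrt(M), amplitude estimation error falls as 1/M.
    """
    classical = inverse_precision ** 2
    quantum = inverse_precision
    return classical, quantum

for k in (100, 1_000, 10_000):
    classical, quantum = samples_needed(k)
    print(f"error 1/{k}: ~{classical:,} samples vs ~{quantum:,} oracle calls")
```

Each extra decimal digit of precision multiplies the classical sample count by 100 but the quantum query count by only 10. Whether this translates into a wall-clock advantage depends on how expensive each quantum query is.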

Pharmaceuticals and materials science

Perhaps the most natural application of quantum computing is simulating quantum systems themselves. Classical computers struggle with the exponential scaling required to simulate molecular interactions (the ‘many-body problem’). Algorithms like the Variational Quantum Eigensolver (VQE) allow researchers to calculate the ground state energies of complex molecules. This capability is poised to revolutionise drug discovery by accurately modelling protein-ligand binding interactions and catalysing the development of new materials, such as high-efficiency battery electrolytes or room-temperature superconductors, without the need for costly trial-and-error lab synthesis.

Logistics and supply chain optimisation

Combinatorial optimisation problems, such as the Travelling Salesperson Problem (TSP) or vehicle routing, have search spaces that grow factorially with problem size, which quickly overwhelms exhaustive classical search. Quantum annealing and gate-based algorithms like the Quantum Approximate Optimisation Algorithm (QAOA) work on these problems once they are mapped onto Ising models or QUBO (Quadratic Unconstrained Binary Optimisation) formulations. By searching for low-energy states in complex energy landscapes, these algorithms aim to optimise global shipping routes, fleet management, and traffic flow; if they can do so more efficiently than classical heuristics, the result would be substantial reductions in operational costs and carbon footprints.
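A QUBO formulation is simply a quadratic cost function over binary variables. As a small illustration, the sketch below writes max-cut on a four-node ring as a QUBO and minimises it by exhaustive search; the graph and the function names are made up for the example. A quantum annealer or QAOA would be searching the same energy landscape.

```python
from itertools import product

# Max-cut on a 4-node ring, written as a QUBO: each edge (i, j) contributes
# 2*x_i*x_j - x_i - x_j, which is -1 when the edge is cut and 0 otherwise.
EDGES = [(0, 1), (1, 2), (2, 3), (3, 0)]

def qubo_energy(bits):
    """Total QUBO cost of one assignment of 0/1 values to the nodes."""
    return sum(2 * bits[i] * bits[j] - bits[i] - bits[j] for i, j in EDGES)

def brute_force_minimum(num_vars):
    """Exhaustive search over all 2**num_vars assignments (tiny problems only)."""
    return min(product((0, 1), repeat=num_vars), key=qubo_energy)

best = brute_force_minimum(4)
print(best, qubo_energy(best))
```

The minimum-energy assignment alternates 0 and 1 around the ring and cuts all four edges. The exhaustive search doubles in cost with every added variable, which is what motivates heuristic and quantum approaches for realistic problem sizes.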

Aerospace and computational fluid dynamics (CFD)

Designing next-generation aircraft and turbines requires solving complex partial differential equations, specifically the Navier-Stokes equations, to model air flow and turbulence. Classical supercomputers often rely on simplified models or coarse meshes to keep simulation times manageable. Quantum algorithms, particularly those adapted for linear algebra and differential equations (like the HHL algorithm or Variational Quantum Linear Solvers), promise to simulate fluid dynamics with much higher fidelity. This could drastically reduce the reliance on expensive physical wind tunnel testing and lead to aerodynamic designs that significantly cut fuel consumption and emissions.

Energy grid optimisation

As the world shifts towards renewable energy, power grids are becoming increasingly decentralised and complex. Managing the fluctuations of solar and wind energy while balancing supply and demand across millions of nodes is a massive combinatorial optimisation challenge. Quantum annealing and QAOA-based approaches could be applied to smart-grid data to optimise power flow and storage distribution. If they solve these optimisation problems faster than classical solvers, utility companies could prevent blackouts, reduce transmission losses, and integrate a higher percentage of renewables into the grid without compromising stability.

Challenges and limitations of quantum algorithms

Despite the theoretical elegance of quantum algorithms, their practical implementation faces a ‘tyranny of numbers’ where physical constraints currently throttle algorithmic utility. The transition from mathematical proof to industrial advantage is hindered by several formidable barriers.

The decoherence and noise bottleneck (NISQ era constraints)

The primary adversary is decoherence, where interaction with the environment causes qubits to lose their quantum state (T1 relaxation and T2 dephasing). Current algorithms must run on Noisy Intermediate-Scale Quantum (NISQ) devices with limited gate fidelity. This restricts ‘circuit depth’—if an algorithm requires too many sequential gates, the signal is drowned out by noise before the calculation concludes.

The massive overhead of Quantum Error Correction (QEC)

To run deep algorithms like Shor’s, we need logical qubits that are fault tolerant. However, implementing schemes like the Surface Code requires a massive overhead, potentially needing 1,000 physical qubits to encode just one logical qubit. This means an algorithm requiring 100 logical qubits may demand a processor with 100,000 physical qubits, far beyond today’s hardware capabilities.
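The overhead figure can be related to the code distance with a short calculation. The sketch below assumes the rotated surface-code layout, in which a patch of distance d uses d² data qubits and d² − 1 measurement qubits, and it ignores routing space and magic-state factories. The function names are illustrative.

```python
def physical_per_logical(distance):
    """Qubits in one rotated surface-code patch: d*d data + d*d - 1 ancilla."""
    return 2 * distance ** 2 - 1

def total_physical(logical_qubits, distance):
    """Physical qubits for the given number of logical qubits, patches only."""
    return logical_qubits * physical_per_logical(distance)

for d in (3, 11, 23):
    print(d, physical_per_logical(d), total_physical(100, d))
```

Under these assumptions a distance of 23 gives roughly 1,000 physical qubits per logical qubit, so 100 logical qubits land at about 100,000 physical qubits. The distance actually required depends on the hardware error rate and the length of the computation.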

The data loading problem (the QRAM challenge)

Many algorithms, particularly in quantum machine learning and Grover’s search algorithm, assume we can instantly load classical data into a quantum state. In reality, converting a classical database of size $N$ into a quantum superposition takes $O(N)$ time without efficient quantum RAM (QRAM). This initial loading cost often negates the $O(\sqrt{N})$ speedup gained during the actual search or processing phase.

Limited qubit connectivity and SWAP costs

In many hardware architectures (like superconducting circuits), qubits can only interact with their immediate neighbours. If an algorithm requires entangling Qubit 1 with Qubit 10, the system must perform a chain of ‘SWAP’ gates to move the information. These SWAP operations add significant depth and noise to the circuit, making complex, highly entangled algorithms difficult to execute faithfully.
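The routing cost in the Qubit 1 and Qubit 10 example can be estimated directly. The sketch below assumes the simplest topology, a one-dimensional chain with nearest-neighbour coupling, and does not count swapping the qubits back afterwards. The function names are illustrative.

```python
def swaps_on_line(qubit_a, qubit_b):
    """SWAPs needed to bring two qubits next to each other on a 1-D chain."""
    return max(abs(qubit_a - qubit_b) - 1, 0)

def extra_cnots(qubit_a, qubit_b):
    """Each SWAP decomposes into three CNOT gates."""
    return 3 * swaps_on_line(qubit_a, qubit_b)

print(swaps_on_line(1, 10), extra_cnots(1, 10))
```

Entangling Qubit 1 with Qubit 10 on such a chain costs eight SWAPs, or 24 additional CNOT gates, before the intended two-qubit gate can run. Two-dimensional layouts and smarter compilers reduce the count, but the overhead does not disappear.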

The measurement collapse and readout latency

Quantum algorithms manipulate a vast state space, but we cannot ‘read’ the full state vector; measurement collapses the wave function into a single binary string. To approximate the result distribution, the algorithm must be run thousands of times (‘shots’). This statistical sampling creates a readout bottleneck: the error of an estimate shrinks only with the square root of the number of shots, which can make quantum computers slower than classical ones for tasks requiring high-precision scalar outputs.
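The cost of sampling can be quantified with the usual binomial standard error. The sketch below assumes independent shots and an outcome probability of 0.5, the worst case; the function name is illustrative.

```python
import math

def standard_error(probability, shots):
    """Statistical error of a probability estimated from repeated shots."""
    return math.sqrt(probability * (1 - probability) / shots)

for shots in (1_000, 4_000, 1_000_000):
    print(shots, standard_error(0.5, shots))
```

Quadrupling the number of shots only halves the error, so each additional decimal digit of precision costs a hundredfold increase in circuit executions.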

Verification and debuggability

As we approach ‘quantum supremacy’, we face a paradox: when a quantum computer solves a problem too complex for any classical supercomputer to simulate, how do we verify the answer? Debugging quantum algorithms is notoriously difficult because we cannot inspect the program’s variables (qubit states) in the middle of the execution without destroying the computation, forcing researchers to rely on indirect verification methods.

Quantum algorithms represent a leap forward in computational capability, offering solutions to problems once thought insurmountable. Shor’s and Grover’s algorithms have paved the way for a new era, inspiring advances in optimisation, simulation, and machine learning. While challenges remain, continued innovation in quantum algorithm design and hardware development promises to unlock new possibilities for science and industry.