Memori Labs has commercialised its widely adopted open source memory engine into a managed cloud service, giving teams persistent AI memory with zero database setup while slashing inference costs.

Memori Labs has launched Memori Cloud, a fully hosted version of its SQL-native memory infrastructure. The company says teams can give production AI agents persistent, LLM-agnostic memory in minutes, with zero database setup, full observability, and up to 98% lower inference costs.

The service builds directly on the company’s widely adopted open-source SQL-native memory layer, whose GitHub repository ranks among the top memory systems and has seen rapid developer adoption alongside growing enterprise use across customer support agents, commerce automation and internal copilots. Memori Cloud operationalises that open-source foundation into a managed, production-ready platform.

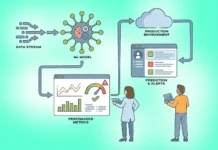

The platform addresses a core limitation in today’s AI stacks: LLM calls are stateless, so applications must re-inject context on every request, which inflates token counts, adds latency and drives up costs while degrading user experience. Memori instead stores structured, durable and auditable memory with transactional integrity, using a SQL-native design that fits existing enterprise databases and avoids vendor lock-in.
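As a rough illustration of what SQL-native memory with transactional integrity can look like, the sketch below uses Python's built-in `sqlite3` module. The table `memory_facts` and the helpers `remember`, `recall` and `build_prompt` are hypothetical names chosen for this example, and keyword matching stands in for real retrieval; none of it is Memori's actual schema or API.

```python
import sqlite3

# Hypothetical schema for illustration only; not Memori's actual tables.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE memory_facts ("
    " id INTEGER PRIMARY KEY,"
    " user_id TEXT NOT NULL,"
    " fact TEXT NOT NULL,"
    " created_at TEXT DEFAULT CURRENT_TIMESTAMP)"
)


def remember(user_id, facts):
    """Write a batch of facts atomically: all rows commit or none do."""
    with conn:  # commits on success, rolls back if an exception is raised
        conn.executemany(
            "INSERT INTO memory_facts (user_id, fact) VALUES (?, ?)",
            [(user_id, fact) for fact in facts],
        )


def recall(user_id, keyword, limit=3):
    """Fetch only the stored facts that match the current request."""
    rows = conn.execute(
        "SELECT fact FROM memory_facts"
        " WHERE user_id = ? AND fact LIKE ?"
        " ORDER BY id DESC LIMIT ?",
        (user_id, f"%{keyword}%", limit),
    ).fetchall()
    return [row[0] for row in rows]


def build_prompt(user_id, question, keyword):
    """Prepend a few recalled facts instead of the full chat history."""
    context = "\n".join(f"- {fact}" for fact in recall(user_id, keyword))
    return f"Known about the user:\n{context}\n\nQuestion: {question}"


remember("u1", ["Prefers email over phone", "Shipping address is in Leeds"])
prompt = build_prompt("u1", "Where should the order ship?", "address")
print(prompt)
```

Because the facts live in ordinary SQL rows, they can be audited with standard queries, and the prompt carries only the matching rows rather than the whole conversation.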

Deployment options include fully hosted cloud, bring-your-own-database (BYODB), virtual private cloud (VPC) and on-premises environments.

At launch, Memori Cloud supports synchronous capture of conversations, asynchronous augmentation to extract facts and preferences, semantic recall, knowledge graph construction, and dashboard-based inspection and analytics.
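The split between synchronous capture and asynchronous augmentation can be sketched with Python's standard `queue` and `threading` modules. Everything here is an assumption made for illustration: the names `capture`, `extract_facts` and `augment_worker` are hypothetical, in-memory lists stand in for a database, and a simple string rule stands in for LLM-based fact extraction. It does not cover semantic recall or knowledge graph construction.

```python
import queue
import threading

messages = []            # raw conversation log, written on the request path
facts = []               # extracted facts, written in the background
pending = queue.Queue()  # hand-off between the two steps


def capture(user_id, text):
    """Synchronous step: record the raw message, then return immediately."""
    messages.append((user_id, text))
    pending.put((user_id, text))


def extract_facts(text):
    """Toy stand-in for LLM extraction: keep sentences starting 'I prefer'."""
    sentences = [s.strip() for s in text.split(".")]
    return [s for s in sentences if s.lower().startswith("i prefer")]


def augment_worker():
    """Asynchronous step: enrich memory off the request path."""
    while True:
        item = pending.get()
        if item is None:  # sentinel value: stop the worker
            pending.task_done()
            break
        user_id, text = item
        for fact in extract_facts(text):
            facts.append((user_id, fact))
        pending.task_done()


worker = threading.Thread(target=augment_worker, daemon=True)
worker.start()

capture("u1", "Hi there. I prefer dark roast. Can you reorder my usual?")

pending.join()     # demo only: wait for background augmentation to finish
pending.put(None)  # ask the worker to exit
worker.join()
print(facts)
```

The request path pays only for the append and the enqueue; extraction cost is deferred to the worker, which is the trade-off the capture and augmentation split is meant to achieve.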

Adam B. Struck, CEO and Co-Founder, Memori Labs, said: “AI agents without memory are inherently stateless and inefficient. Memori Cloud transforms interactions into durable, structured knowledge and retrieves the right context in real time – dramatically reducing inference spend while eliminating the operational burden of managing memory infrastructure.”

Michael Montero, CTO, added: “Developers want memory that feels native to their application: fast on the request path, richer in the background, and observable when something goes wrong. Memori Cloud pairs synchronous capture with asynchronous augmentation, then makes the entire memory lifecycle visible through a dashboard so teams can inspect, validate, and optimize as they scale.”

Memori Cloud is available immediately.