Prompts are no longer simple text. As generative AI evolves, they are turning into engineering assets, and more specifically, code that needs to be designed, tested, versioned, and maintained.

We tend to think of prompts as clever instructions that can be written quickly and changed without any record being kept. However, as generative AI moves from experimental environments into production settings, its prompts have begun to function more like software components than ordinary sentences. A single change in wording can lead to major differences in output, affecting customer experience, business decisions and regulatory compliance. Software teams have discovered that prompts contain the hidden dependencies, implicit logic and failure modes that engineers commonly associate with code.

This shift changes how prompts must be handled. Prompts are no longer loose text; they have become structured components with specific purposes, operational limits and potential risks. A prompt that directs a model to summarise legal documents or create financial summaries requires the same control as any other critical system element.

Today, prompts function as behavioural instructions: they determine how a model reasons, what it treats as important, and what it overlooks. People use prompts to express their needs, and models apply their capabilities to meet them. Prompts are no longer ordinary text; they have started turning into code.

The question now is not how to write better prompts, but how to design, test, version, and maintain them—just like any other piece of software that operates at scale.

Viewing prompt engineering through a software lens

Once prompts were recognised as engineering assets, software teams started building them with software development methods that require designs to be structured, readable and reusable. Prompt creation shifted from intuition to deliberate, planned design.

This software-oriented approach requires a prompt to state its intended function and its operating guidelines explicitly. Casual prompts tolerated ambiguity; engineered prompts do not. A major advantage is that such prompts exist as written artefacts that teams can review and improve collaboratively.

Today, engineers design prompts with deliberate wording and layered constraints that direct how the model reasons. Systematic procedures give software teams control over this work and help them produce output that matches expectations.

A simple example illustrates this shift:

```python
# Shared instructions that can be reused across tasks.
BASE_INSTRUCTIONS = """
You are an AI analyst.
Follow instructions precisely and use neutral language.
"""

# Task-specific instructions layered on top of the base.
TASK_PROMPT = """
Analyze the input document and produce:
1. A concise summary (max 5 bullet points)
2. Key risks, categorized as legal, financial, or operational
3. Any missing or unclear information
"""

final_prompt = BASE_INSTRUCTIONS + TASK_PROMPT
```

Table 1: Version control for thinking machines

| Aspect | Without prompt versioning | With prompt versioning (code-like approach) |
| --- | --- | --- |
| Change tracking | Changes are informal and undocumented | Every modification is recorded with history |
| Debugging | Hard to identify why outputs changed | Easy to trace issues to a specific prompt version |
| Reliability | Model behaviour shifts unpredictably | Outputs remain consistent and reproducible |
| Collaboration | Prompts live in chats or personal notes | Teams collaborate using shared repositories |
| Experimentation | Risky trial-and-error in production | Safe branching and controlled testing |
| Rollback | Previous working prompts are lost | Instant rollback to stable versions |
| Audit and compliance | No traceability for decisions | Full accountability with prompt lineage |
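
The change tracking and rollback rows above can be illustrated with a small sketch. It assumes a hypothetical in-memory `PromptRegistry` class invented for this example; in practice a team would more likely keep prompts in Git or a similar repository, and the names and version scheme here are illustrative only.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class PromptRegistry:
    """Hypothetical in-memory store, used only to illustrate the idea."""
    history: list = field(default_factory=list)  # (version_id, text, note) tuples

    def commit(self, text: str, note: str) -> str:
        # A content hash is the version id, so identical text gets the same id.
        version_id = hashlib.sha256(text.encode("utf-8")).hexdigest()[:8]
        self.history.append((version_id, text, note))
        return version_id

    def current(self) -> str:
        return self.history[-1][1]

    def rollback(self, version_id: str) -> str:
        # Re-commit the old text instead of deleting entries,
        # so the audit trail stays complete.
        for vid, text, _ in self.history:
            if vid == version_id:
                self.commit(text, f"rollback to {version_id}")
                return text
        raise KeyError(version_id)


registry = PromptRegistry()
v1 = registry.commit("Summarise the document in 5 bullet points.", "initial version")
v2 = registry.commit("Summarise the document in 3 bullet points.", "shorter summary")
registry.rollback(v1)  # the 5-bullet prompt is live again; history has 3 entries
```

Recording the rollback as a new entry, rather than erasing the later version, is one possible design choice: it keeps the lineage that audit and compliance reviews depend on.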

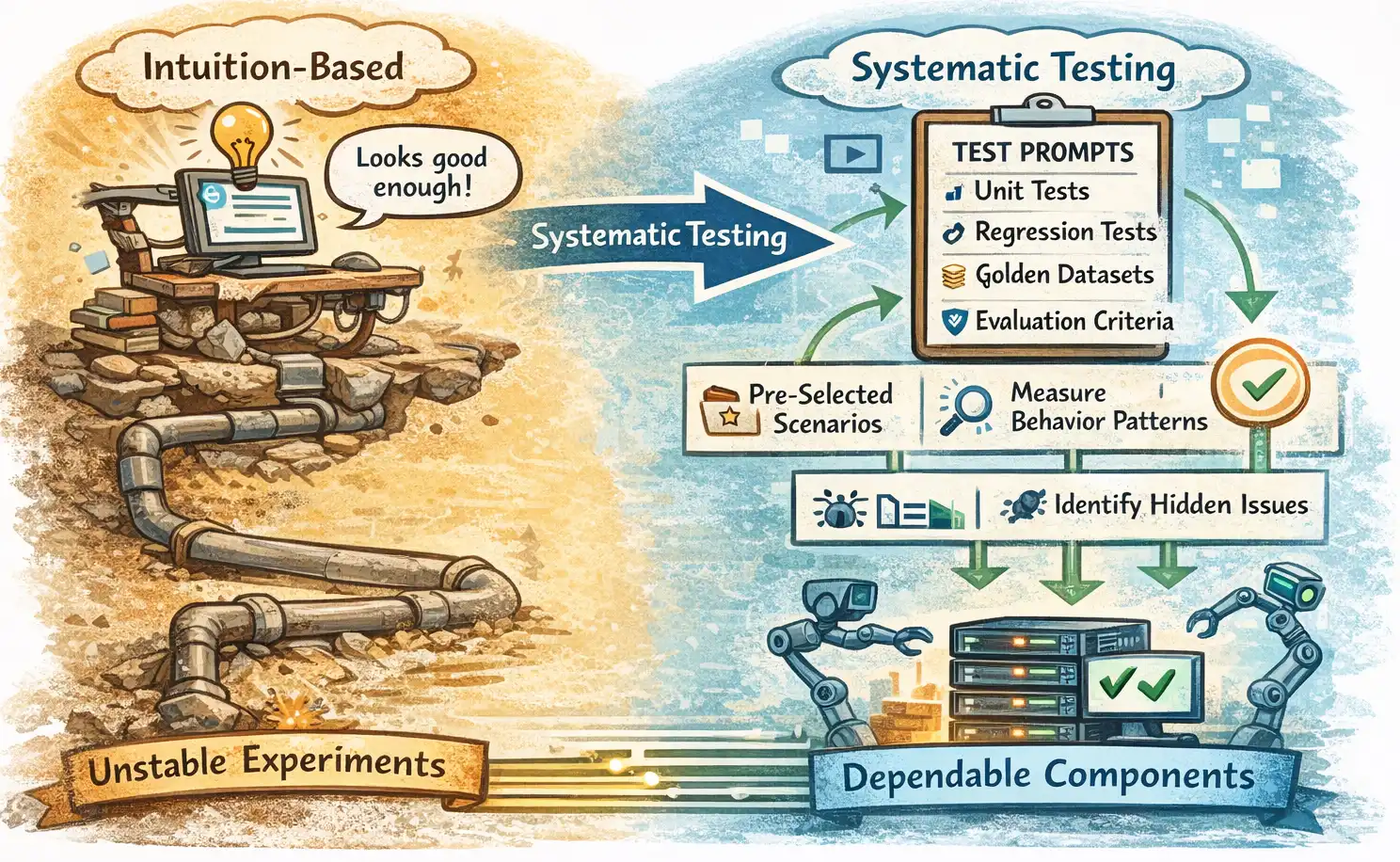

Testing prompts before they fail at scale

Early prompt quality assessment relied on people’s intuition about whether an output met acceptable standards. This approach fails when prompts are used in production environments. Production prompts have to be shown to work through testing: running them against the full range of user inputs, probing their limits, and tracking changes in how users interact with them. Systematic testing is now a necessity.

Testing prompts serves two purposes: verifying a prompt’s responses and observing how users interact with it. Testing can reveal hidden problems that originate from small modifications to the instructions or from the addition of a single example.

Today’s teams apply established testing methods from software engineering to their prompts. Unit tests assess how well a prompt handles individual input types. Regression tests ensure that an improvement to one part of the system does not degrade performance elsewhere. Golden datasets, which pair pre-selected inputs with their expected results, provide the evaluation baseline.

In prompt testing, what is assessed matters as much as how it is assessed. Tests examine whether a prompt produces correct results, and also evaluate the output’s structural integrity, completeness, potential biases, and safety. In high-risk situations, human assessment works alongside automated systems to give better evaluation results. Repeated test runs turn prompt development into an evidence-based practice.

Organisations that test their prompts before putting them into operation strengthen public confidence in their AI systems. Testing turns prompts from unstable experiments into dependable operational components.
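
A golden-dataset regression check might look something like the sketch below. The dataset, the `fake_model` stand-in and the `run_golden_suite` helper are all hypothetical names made up for illustration; a real suite would call an actual model and would usually use richer comparisons than substring matching.

```python
from typing import Callable

# Hypothetical golden dataset: inputs paired with phrases the output must contain.
GOLDEN_CASES = [
    {"input": "Contract lacks a termination clause.", "must_contain": ["legal"]},
    {"input": "Revenue forecast omits currency risk.", "must_contain": ["financial"]},
]


def fake_model(prompt: str, document: str) -> str:
    """Stand-in for a real model call so the sketch runs offline."""
    if "contract" in document.lower():
        return "Risk category: legal"
    return "Risk category: financial"


def run_golden_suite(prompt: str, model: Callable[[str, str], str]) -> list:
    """Return the inputs whose output misses an expected phrase."""
    failures = []
    for case in GOLDEN_CASES:
        output = model(prompt, case["input"]).lower()
        if not all(phrase in output for phrase in case["must_contain"]):
            failures.append(case["input"])
    return failures


failures = run_golden_suite(
    "Categorise key risks as legal, financial, or operational.", fake_model
)
```

Running the same suite against every new prompt version is what turns it into a regression test: a non-empty `failures` list means a change has broken a case that used to pass.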
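
Structural checks of this kind are often the easiest to automate. The sketch below assumes an output convention that is not specified in the article: summary lines start with `- ` and risk lines start with a bracketed category such as `[legal]`. The `check_structure` function and that convention are illustrative assumptions, not an established format.

```python
import re

ALLOWED_CATEGORIES = {"legal", "financial", "operational"}


def check_structure(output: str, max_bullets: int = 5) -> list:
    """Return a list of structural problems; an empty list means the output passed."""
    problems = []
    bullets = [line for line in output.splitlines() if line.strip().startswith("- ")]
    if not bullets:
        problems.append("no bullet points found")
    elif len(bullets) > max_bullets:
        problems.append(f"too many bullets: {len(bullets)} > {max_bullets}")
    # Assumed convention: each risk is written as "[category] description".
    for category in re.findall(r"\[(\w+)\]", output):
        if category.lower() not in ALLOWED_CATEGORIES:
            problems.append(f"unknown risk category: {category}")
    return problems


sample = """- Supplier contract renewed for two years
- Payment terms unchanged
[legal] Termination clause is missing
[marketing] Brand guidelines not referenced"""

problems = check_structure(sample)
```

Checks like this cover structure and completeness only; bias and safety assessment generally needs dedicated evaluators or human review.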

Table 2: The prompt engineering toolchain

| Stage in prompt lifecycle | Purpose | Typical tools / practices | Engineering benefit |
| --- | --- | --- | --- |
| Prompt authoring | Design clear, structured prompts | Templates, YAML/JSON formats, prompt libraries | Consistency and readability |
| Version control | Track prompt changes over time | Git, DVC, prompt repositories | Traceability and rollback |
| Experimentation | Test alternative prompt designs | Prompt branching, A/B testing setups | Safe innovation without risk |
| Evaluation and testing | Measure prompt behaviour | Golden datasets, automated evaluators, human review | Reliability and quality assurance |
| Optimisation | Improve cost, latency, and accuracy | Token analysis, prompt compression techniques | Efficient and scalable AI usage |
| Deployment | Integrate prompts into applications | CI/CD pipelines, configuration files | Controlled and repeatable releases |
| Monitoring | Detect performance drift | Logging, feedback loops, analytics dashboards | Early detection of failures |
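
As an illustration of the authoring stage, a prompt can be stored as a structured definition and rendered from a template. The JSON layout, the field names and the `render_prompt` helper below are assumptions made for this sketch; it uses only Python's standard `json` and `string.Template` modules.

```python
import json
from string import Template

# Hypothetical prompt definition, as it might be stored in a versioned JSON file.
PROMPT_FILE = """
{
  "name": "document_risk_review",
  "version": "1.2.0",
  "template": "You are an AI analyst. Summarise the $doc_type below in at most $max_bullets bullet points.",
  "defaults": {"max_bullets": 5}
}
"""


def render_prompt(raw_definition: str, **values) -> str:
    """Fill the stored template with defaults, overridden by caller-supplied values."""
    definition = json.loads(raw_definition)
    params = {**definition.get("defaults", {}), **values}
    # substitute() raises KeyError for a missing placeholder instead of failing silently.
    return Template(definition["template"]).substitute(params)


prompt = render_prompt(PROMPT_FILE, doc_type="contract")
```

Keeping the template and its defaults in a file rather than in application code means a prompt change shows up as a reviewable diff in version control.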

Optimisation as a discipline

Once a prompt passes testing, optimisation work can begin. Early prompt tuning relies on intuition: instructions, examples and restrictions are added until the output reaches an acceptable standard. This ad hoc approach works for a while, but it does not scale to larger operations.

Prompt optimisation extends beyond achieving correct results. In production systems, prompts are also judged on how long they take to respond, how many tokens they consume, what they cost, and what results they produce. A longer prompt may improve output quality, while a shorter prompt reduces cost. Optimisation seeks the balance that best fits the model and the context in which it is used.

Optimisation has to be guided by metrics. Teams compare output quality scores, failure rates, token consumption, and response variability across prompt versions. Small structural changes, such as reordering instructions, tightening constraints, and removing unnecessary examples, can improve performance.

Optimisation leads to better results but does not produce one universally optimal prompt. A prompt designed for internal analysis needs different design elements from one that serves customer-facing interactions. Treating optimisation as an ongoing practice lets prompts keep pace with model improvements, workload changes, and business objectives.

Organisations build a consistent prompt engineering process by making measurement and iterative testing the foundation of their optimisation work. The result is AI systems that run efficiently and produce dependable outcomes.
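
A comparison of this kind can be sketched in a few lines. The run records below are made-up illustrative numbers, not measurements, and the `summarise` helper and its field names are assumptions for this example.

```python
from statistics import mean, pstdev

# Hypothetical evaluation logs: one record per test run of each prompt version.
RUNS = {
    "v1": [
        {"quality": 0.80, "tokens": 900, "failed": False},
        {"quality": 0.70, "tokens": 950, "failed": True},
        {"quality": 0.90, "tokens": 850, "failed": False},
        {"quality": 0.80, "tokens": 900, "failed": False},
    ],
    "v2": [
        {"quality": 0.80, "tokens": 600, "failed": False},
        {"quality": 0.80, "tokens": 620, "failed": False},
        {"quality": 0.80, "tokens": 580, "failed": False},
        {"quality": 0.80, "tokens": 600, "failed": False},
    ],
}


def summarise(runs: list) -> dict:
    """Aggregate the four metrics named in the text for one prompt version."""
    scores = [r["quality"] for r in runs]
    return {
        "mean_quality": round(mean(scores), 3),
        "variability": round(pstdev(scores), 3),  # spread of quality scores
        "failure_rate": sum(r["failed"] for r in runs) / len(runs),
        "mean_tokens": mean(r["tokens"] for r in runs),
    }


report = {version: summarise(runs) for version, runs in RUNS.items()}
```

In this invented data the two versions have the same average quality, but the second uses fewer tokens and varies less, which is the kind of difference that intuition alone tends to miss.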

PromptOps: The evolution of prompt engineering

PromptOps does for prompts what DevOps does for software: it lets teams operate prompts as long-lived components that are built, deployed and maintained continuously. It links prompt testing to operational deployment and maintains prompt performance throughout the prompt’s lifecycle.

PromptOps unites all the elements of prompt engineering (versioning, testing, optimisation, deployment, and monitoring) into one complete workflow. Teams handle prompts as dynamic assets that they can modify to fit changing requirements. Organisations build systematic processes for testing and rolling out changes, which helps maintain system performance in production.

PromptOps also changes how teams work together. Data scientists, engineers, product teams, and domain experts all participate in designing and assessing prompts, making decisions based on shared metrics and tools.

PromptOps recognises that the intelligence of modern AI systems extends beyond their underlying models. Organisations that adopt it early and excel at it stand to gain a market advantage.
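
One way to picture the link between testing and deployment is a release gate that a CI/CD pipeline could run before promoting a prompt. The thresholds, the `EvalReport` type and the `release_decision` function below are hypothetical and would need to be set from a team's own baselines.

```python
from dataclasses import dataclass


@dataclass
class EvalReport:
    version: str
    mean_quality: float
    failure_rate: float
    mean_tokens: float


# Assumed thresholds; real values would come from the team's own baselines.
MIN_QUALITY = 0.75
MAX_FAILURE_RATE = 0.05
MAX_TOKEN_GROWTH = 1.10  # candidate may use at most 10% more tokens than production


def release_decision(candidate: EvalReport, production: EvalReport) -> tuple:
    """Return (approved, reasons) for promoting a candidate prompt."""
    reasons = []
    if candidate.mean_quality < MIN_QUALITY:
        reasons.append("quality below minimum")
    if candidate.mean_quality < production.mean_quality:
        reasons.append("quality regressed against production")
    if candidate.failure_rate > MAX_FAILURE_RATE:
        reasons.append("failure rate too high")
    if candidate.mean_tokens > production.mean_tokens * MAX_TOKEN_GROWTH:
        reasons.append("token usage grew too much")
    return (not reasons, reasons)


production = EvalReport("v1", 0.80, 0.02, 900)
candidate = EvalReport("v2", 0.84, 0.01, 1200)
approved, reasons = release_decision(candidate, production)
```

Here the candidate improves quality and failure rate but is blocked on token growth, so the gate returns the reason alongside the decision and the team can see what to fix.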