Johns Hopkins APL has turned open-source datasets and Hugging Face tooling into a reusable LLM training stack for secure government AI, enabling mission-specific models for defence, CBRN (chemical, biological, radiological and nuclear) response, and multimodal intelligence workflows.

Johns Hopkins Applied Physics Laboratory (APL) has built a training stack for mission-specific large language models (LLMs), powered by open-source datasets and tooling from the Hugging Face ecosystem. The result is reusable infrastructure that can help government agencies develop secure, domain-adapted AI systems.

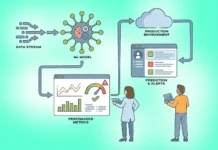

At the centre of the work is a two-billion-parameter model trained on a specially curated mix of open-source datasets spanning general knowledge, mathematics, engineering, and foreign languages. To support this, the APL team developed lightweight in-house infrastructure that blends multiple Hugging Face datasets and streams them in manageable chunks, while preserving full control, replicability, and transparency across the training workflow.

The new stack was built after existing open-source solutions failed to meet all mission requirements, particularly around sensitive data handling, specialised workflows, and deployment in restricted environments. The infrastructure is now available for other researchers to use, extending its value beyond the original programme.

Samuel Barham, artificial intelligence researcher at APL’s Intelligent Systems Center, said the capability gives government partners a trusted pathway to build and adapt LLMs for mission-relevant domains while avoiding major technical pitfalls.

The team also derived empirical scaling laws for Nvidia DGX H100 clusters, successfully trained both one-billion- and two-billion-parameter models, and built custom safeguards against loss spikes, catastrophic forgetting, and restart failures.
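The source does not say which functional form APL fitted or what coefficients it obtained. A common starting point in scaling-law work is a power law, `L(C) = a * C**(-b)`, linking final training loss `L` to training compute `C`. Taking logarithms turns it into a straight line, `log L = log a - b log C`, so ordinary least squares on log-log data recovers `a` and `b`. The sketch below shows that generic technique; `fit_power_law` and `predict_loss` are hypothetical names, and any numbers fed to them in testing are synthetic, not APL results.

```python
import math
from typing import Sequence


def fit_power_law(compute: Sequence[float], loss: Sequence[float]) -> tuple[float, float]:
    """Fit loss ~= a * compute**(-b) by least squares in log-log space.

    Returns (a, b). The fitted line's slope is -b and its intercept is log(a).
    """
    if len(compute) != len(loss) or len(compute) < 2:
        raise ValueError("need at least two paired (compute, loss) points")
    xs = [math.log(c) for c in compute]
    ys = [math.log(y) for y in loss]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("compute values must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope


def predict_loss(a: float, b: float, compute: float) -> float:
    """Extrapolate the fitted power law to a new compute budget."""
    return a * compute ** (-b)
```

Fitting on small runs and extrapolating with `predict_loss` is the usual way such curves inform how large a model or dataset is worth training on a given cluster budget.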

The platform is already being used for CBRN threat-response LLMs, global health mission systems, and multimodal warfighting models, with the next phase focused on larger models, richer data types, and broader operational deployment across national security missions.