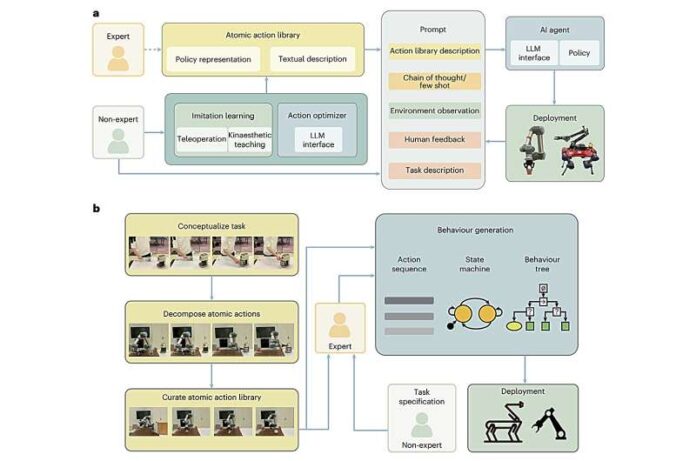

A new integration framework lets robots interpret complex natural-language commands and convert them into precise real-world actions, simplifying human-robot interaction and reducing the need for specialized programming expertise across applications.

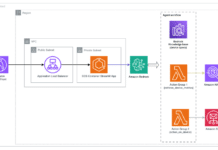

Researchers have developed a new framework that integrates large language models (LLMs) with the Robot Operating System (ROS), enabling robots to understand and execute natural language commands more effectively, according to a recent report by TechXplore.

The system bridges a longstanding gap in robotics: translating human instructions into machine-executable actions. By combining ROS—widely used for robot control—with LLMs capable of interpreting conversational language, the framework allows users to issue high-level commands without needing technical programming knowledge.

Instead of relying on rigid, pre-coded instructions, the approach enables robots to process flexible, real-world language inputs. The LLM component interprets user intent, breaks it into actionable steps, and passes structured commands to the ROS-based control system. This improves adaptability in dynamic environments where tasks may vary or evolve.
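The report does not describe the framework's internals, so the following is only a minimal Python sketch of the hand-off described above, under stated assumptions: the LLM is assumed to reply with a JSON plan, and the names `Step`, `ALLOWED_ACTIONS`, `parse_plan`, and `dispatch` are hypothetical. The `send` callback stands in for whatever ROS publisher or action client a real system would use; no actual ROS API is called here.

```python
import json
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical action vocabulary; a real system would derive this
# from the interfaces the robot actually exposes through ROS.
ALLOWED_ACTIONS = {"move_to", "pick", "place"}


@dataclass(frozen=True)
class Step:
    action: str
    params: dict


def parse_plan(llm_output: str) -> List[Step]:
    """Turn the LLM's JSON reply into typed steps, rejecting unknown actions."""
    raw = json.loads(llm_output)
    steps = []
    for item in raw["steps"]:
        action = item["action"]
        if action not in ALLOWED_ACTIONS:
            raise ValueError(f"unsupported action: {action}")
        steps.append(Step(action, item.get("params", {})))
    return steps


def dispatch(steps: List[Step], send: Callable[[Step], None]) -> None:
    """Hand each step, in order, to the control layer via `send`."""
    for step in steps:
        send(step)


# Canned reply standing in for an LLM's answer to
# "bring the cup to the table" (assumed output format).
reply = json.dumps({
    "steps": [
        {"action": "move_to", "params": {"target": "cup"}},
        {"action": "pick", "params": {"object": "cup"}},
        {"action": "move_to", "params": {"target": "table"}},
        {"action": "place", "params": {"object": "cup"}},
    ]
})

sent: List[Step] = []
dispatch(parse_plan(reply), sent.append)
for step in sent:
    print(step.action, step.params)
```

Restricting the plan to a fixed action vocabulary is one possible design choice: anything the language model proposes outside that set is rejected before it reaches the control layer.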

The development reflects a broader trend in robotics toward combining cognitive AI models with physical systems. LLMs provide reasoning and language understanding, while robotic platforms handle perception and motion—together enabling more intuitive human-robot interaction.

Researchers highlight that such integration could simplify deployment across applications ranging from service robots to industrial automation. By lowering the barrier to interaction, it could allow non-expert users to operate robots using everyday language rather than specialized interfaces.

However, challenges remain around reliability, safety, and the alignment of interpreted commands with real-world constraints. Translating ambiguous human language into precise robotic actions continues to require robust validation mechanisms.
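As an illustration of what such a validation mechanism might look like, here is a minimal Python sketch that checks an interpreted motion command against workspace and speed limits before it would be executed. It is not taken from the reported framework: the `Limits` fields, the command keys (`x`, `y`, `speed`), and the numeric bounds are all assumptions chosen for the example.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Limits:
    """Hypothetical physical constraints for one robot (metres, metres/second)."""
    x_range: Tuple[float, float]
    y_range: Tuple[float, float]
    max_speed: float


def _is_number(value) -> bool:
    # bool is a subclass of int in Python, so exclude it explicitly.
    return (
        isinstance(value, (int, float))
        and not isinstance(value, bool)
        and math.isfinite(value)
    )


def validate_move(cmd: dict, limits: Limits) -> List[str]:
    """Return a list of problems; an empty list means the command passed."""
    problems = [
        f"missing or non-numeric field: {key}"
        for key in ("x", "y", "speed")
        if not _is_number(cmd.get(key))
    ]
    if problems:
        return problems  # range checks are meaningless without valid numbers

    if not limits.x_range[0] <= cmd["x"] <= limits.x_range[1]:
        problems.append(f"x={cmd['x']} outside workspace {limits.x_range}")
    if not limits.y_range[0] <= cmd["y"] <= limits.y_range[1]:
        problems.append(f"y={cmd['y']} outside workspace {limits.y_range}")
    if not 0 < cmd["speed"] <= limits.max_speed:
        problems.append(f"speed={cmd['speed']} exceeds limit {limits.max_speed}")
    return problems


# Assumed limits for the example only.
limits = Limits(x_range=(0.0, 2.0), y_range=(-1.0, 1.0), max_speed=0.5)

ok = validate_move({"x": 1.0, "y": 0.5, "speed": 0.3}, limits)
rejected = validate_move({"x": 9.0, "y": 0.5, "speed": 2.0}, limits)

print("accepted" if not ok else ok)
for problem in rejected:
    print("rejected:", problem)
```

Returning the full list of problems, rather than stopping at the first one, would let a system report every violated constraint back to the user or to the language model for a revised plan.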

The work signals a shift toward “language-first” robotics, where natural communication becomes a primary interface—bringing robots closer to seamless integration into human environments.