A-Evolve’s open-source release gives developers a Git-native framework for building AI agents that rewrite their own prompts, skills, and logic autonomously, signalling a major shift from manual tuning to reproducible evolutionary optimisation.

The open-source release of A-Evolve marks a significant shift in agentic AI development, introducing a universal infrastructure layer for self-improving AI agents that can autonomously rewrite their own prompts, logic, skills, and configuration files over time.

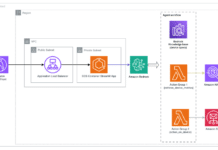

At the core of the framework is a five-stage evolutionary loop (Solve, Observe, Evolve, Gate, and Reload) designed to continuously benchmark agent performance, mutate weak components, validate gains, and instantly redeploy improved versions. Unlike traditional prompt-layer optimisation, A-Evolve directly edits real workspace files, including manifest.yaml, prompts, tools, skills, and memory, which together form a standardised open-source Agent Workspace architecture.
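To make the loop concrete, here is a minimal Python sketch of one Solve, Observe, Evolve, Gate, Reload cycle. It is an illustration only and does not use A-Evolve's actual API: `Workspace`, `run_cycle`, the `prompt.md` file name, and the toy scoring functions are all hypothetical names chosen for this example, and the gate is assumed to be a simple "score must improve" check.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple


@dataclass(frozen=True)
class Workspace:
    """Hypothetical stand-in for an agent workspace: file name -> contents."""
    files: Dict[str, str] = field(default_factory=dict)


def run_cycle(
    ws: Workspace,
    solve: Callable[[Workspace], str],
    observe: Callable[[str], float],
    evolve: Callable[[Workspace], Workspace],
) -> Tuple[Workspace, float]:
    """Run one cycle and return the workspace to keep, plus its score."""
    baseline = observe(solve(ws))                 # Solve, then Observe
    candidate = evolve(ws)                        # Evolve: propose mutated files
    candidate_score = observe(solve(candidate))   # Gate: re-benchmark candidate
    if candidate_score > baseline:
        return candidate, candidate_score         # Reload: adopt the mutation
    return ws, baseline                           # Gate failed: keep old files


# Toy task (an assumption for this sketch): a prompt scores higher the more
# of these keywords it mentions.
REQUIRED = ("plan", "test", "verify")


def toy_solve(ws: Workspace) -> str:
    return ws.files.get("prompt.md", "")


def toy_observe(output: str) -> float:
    return sum(word in output for word in REQUIRED) / len(REQUIRED)


def toy_evolve(ws: Workspace) -> Workspace:
    prompt = ws.files.get("prompt.md", "")
    missing = [word for word in REQUIRED if word not in prompt]
    if not missing:
        return ws  # nothing left to improve
    new_files = dict(ws.files)
    new_files["prompt.md"] = (prompt + " " + missing[0]).strip()
    return Workspace(new_files)


ws = Workspace({"prompt.md": "plan"})
scores = []
for _ in range(3):
    ws, score = run_cycle(ws, toy_solve, toy_observe, toy_evolve)
    scores.append(score)
```

In this toy run the first two cycles pass the gate and the third is rejected because the candidate no longer improves on the baseline, which is the behaviour the Gate stage is meant to enforce.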

A major developer advantage lies in its Git-native reproducibility: every mutation is versioned as a tagged checkpoint, such as evo-1 or evo-2, enabling rollback, auditability, and safe experimentation. Combined with hot reload support, teams can apply evolved changes instantly without rebuilding the entire environment.
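The checkpointing idea can be approximated with ordinary Git commands. The sketch below is an assumption about how such a workflow might look, not A-Evolve's implementation: the helper names (`checkpoint_tag`, `checkpoint_commands`, `rollback_command`, `run_all`) are hypothetical, and only the `evo-N` tag pattern comes from the release. It builds the command lines separately from running them so they can be inspected first.

```python
import subprocess
from pathlib import Path
from typing import List


def checkpoint_tag(generation: int) -> str:
    """Tag name for a given generation, following the evo-N pattern."""
    return f"evo-{generation}"


def checkpoint_commands(generation: int, note: str) -> List[List[str]]:
    """Git commands that snapshot the workspace and tag the snapshot."""
    tag = checkpoint_tag(generation)
    return [
        ["git", "add", "--all"],
        # --allow-empty keeps the sequence from failing when nothing changed.
        ["git", "commit", "--allow-empty", "-m", f"{tag}: {note}"],
        ["git", "tag", tag],
    ]


def rollback_command(generation: int) -> List[str]:
    """Git command that resets the current branch to an earlier checkpoint.

    Note: `git reset --hard` discards uncommitted changes in the work tree.
    """
    return ["git", "reset", "--hard", checkpoint_tag(generation)]


def run_all(commands: List[List[str]], repo: Path) -> None:
    """Execute the commands inside `repo`, stopping at the first failure."""
    for argv in commands:
        subprocess.run(argv, cwd=repo, check=True)
```

For example, `run_all(checkpoint_commands(2, "tighten system prompt"), Path("workspace"))` would record a second checkpoint in a repository at the hypothetical path `workspace`, and `run_all([rollback_command(1)], Path("workspace"))` would return that branch to the first one.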

The framework also introduces strong modularity through BYOA, BYOE, and BYO-Algo (bring your own agent, environment, and algorithm), allowing engineering teams to plug in any agent architecture, environment, or evolutionary strategy. This developer-first design runs in standard Python workflows, requires only a three-line setup, and supports leading OpenAI and Claude-class models.
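One common way to get this kind of pluggability in Python is structural typing with `typing.Protocol`. The sketch below shows the general pattern only; the `Agent`, `Environment`, and `Algorithm` interfaces, their method names, and the toy classes are hypothetical and are not taken from A-Evolve's codebase.

```python
from typing import Dict, List, Protocol


class Agent(Protocol):
    """Anything that can answer a task given its current configuration."""
    def act(self, task: str, config: Dict[str, str]) -> str: ...


class Environment(Protocol):
    """Anything that supplies tasks and scores answers to them."""
    def tasks(self) -> List[str]: ...
    def score(self, task: str, answer: str) -> float: ...


class Algorithm(Protocol):
    """Anything that proposes a new configuration from the current one."""
    def propose(self, config: Dict[str, str], mean_score: float) -> Dict[str, str]: ...


def evaluate(agent: Agent, env: Environment, config: Dict[str, str]) -> float:
    """Mean score of the agent over every task in the environment."""
    tasks = env.tasks()
    return sum(env.score(t, agent.act(t, config)) for t in tasks) / len(tasks)


# Toy plug-ins (assumptions for this sketch). None of them inherits from the
# protocols above; matching method signatures is enough.
class EchoAgent:
    def act(self, task: str, config: Dict[str, str]) -> str:
        return task.upper() if config.get("case") == "upper" else task


class UppercaseEnv:
    def tasks(self) -> List[str]:
        return ["alpha", "beta"]

    def score(self, task: str, answer: str) -> float:
        return 1.0 if answer == task.upper() else 0.0


class SwitchToUpper:
    def propose(self, config: Dict[str, str], mean_score: float) -> Dict[str, str]:
        # Only mutate when the current configuration is not already perfect.
        return config if mean_score == 1.0 else {**config, "case": "upper"}


agent, env, algo = EchoAgent(), UppercaseEnv(), SwitchToUpper()
config = {"case": "lower"}
before = evaluate(agent, env, config)
config = algo.propose(config, before)
after = evaluate(agent, env, config)
```

Because `evaluate` depends only on the three interfaces, any one of the toy classes could be swapped for a different agent, environment, or search strategy without touching the other two.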

Early benchmark results strengthen the open-source case, with 79.4% on MCP-Atlas (#1), 76.8% on SWE-bench Verified, 76.5% on Terminal-Bench 2.0, and 34.9% on SkillsBench (#2).

Beyond self-improving agents, the larger story is the emergence of an open infrastructure standard for reproducible agent evolution, opening the path for community-led optimisation stacks and domain-specific AI workflows.